Think of search as the application platform, not just a feature

As a developer, the decisions you make today in how you implement search will

either set you up to prosper, or block your future use cases and ability to

capture this fast-evolving world of vector representation and multi-modal

information retrieval. One severely blocking mindset is relying on SQL LIKE

queries. This old relational database approach is a dead end for delivering

search in your application platform. LIKE queries simply don’t match the

capabilities or features built into Lucene or other modern search engines.

They’re also detrimental to the performance of your operational workload,

leading to the over-use of resources through greedy quantifiers. These are

fossils—artifacts of SQL from 60 or 70 years ago, which is like a few dozen

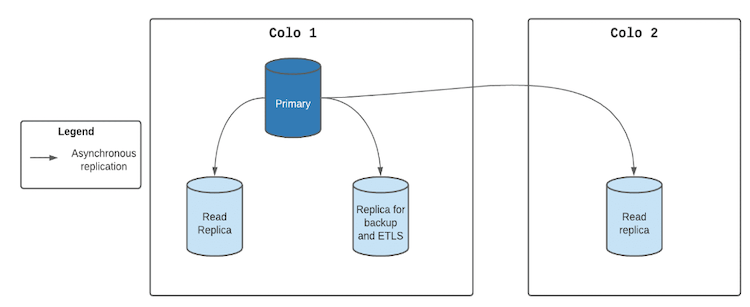

millennia in application development. Another common architectural pitfall is

proprietary search engines that force you to replicate all of your application

data to the search engine when you really only need the searchable fields.
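
To make the contrast with LIKE queries concrete, here is a minimal sketch in plain Python. It is not how Lucene or any particular engine is implemented; the function names (`like_scan`, `build_index`, `search`) and the sample documents are hypothetical, chosen only for illustration. The first function imitates a `LIKE '%term%'` filter by inspecting every document, while the second approach builds a small inverted index once and answers queries by intersecting posting sets.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it on non-alphanumeric characters."""
    return [t for t in re.split(r"[^a-z0-9]+", text.lower()) if t]


def like_scan(docs, term):
    """Rough analogue of WHERE body LIKE '%term%': inspects every document."""
    needle = term.lower()
    return sorted(doc_id for doc_id, body in docs.items() if needle in body.lower())


def build_index(docs):
    """Build a token -> set-of-document-ids mapping (a tiny inverted index)."""
    index = defaultdict(set)
    for doc_id, body in docs.items():
        for token in tokenize(body):
            index[token].add(doc_id)
    return index


def search(index, query):
    """Return ids of documents that contain every token of the query."""
    tokens = tokenize(query)
    if not tokens:
        return []
    postings = [index.get(token, set()) for token in tokens]
    return sorted(set.intersection(*postings))


# Hypothetical sample data, for illustration only.
DOCS = {
    1: "Vector search with Lucene",
    2: "Relational databases and SQL LIKE queries",
    3: "Lucene powers many search engines",
}

if __name__ == "__main__":
    index = build_index(DOCS)
    print(like_scan(DOCS, "lucene"))       # [1, 3]
    print(search(index, "lucene search"))  # [1, 3]
    print(search(index, "sql like"))       # [2]
```

The scan does work proportional to the total text size on every query, whereas the index pays that cost once and then touches only the posting sets for the query tokens. A real search engine layers analysis, ranking, and (increasingly) vector retrieval on top of this basic structure.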

What Is a Data Reliability Engineer, and Do You Really Need One?

It’s still early days for this developing field, but companies like DoorDash,

Disney Streaming Services, and Equifax are already starting to hire data

reliability engineers. The most important job for a data reliability engineer is

to ensure high-quality data is readily available across the organization and

trustworthy. When broken data pipelines strike (because they will at one point

or another), data reliability engineers should be the first to discover data

quality issues. However, that’s not always the case. Poor-quality data is often first

discovered downstream in dashboards and reports instead of in the pipeline, or

even before. Since data is rarely ever in its ideal, perfectly reliable state,

the data reliability engineer is more often tasked with putting the tooling

(like data observability platforms and testing) and processes (like CI/CD) in

place to ensure that when issues happen, they’re quickly resolved and the impact is

conveyed to those who need to know. Much like site reliability engineers are a

natural extension of the software engineering team, data reliability engineers

are an extension of the data and analytics team.

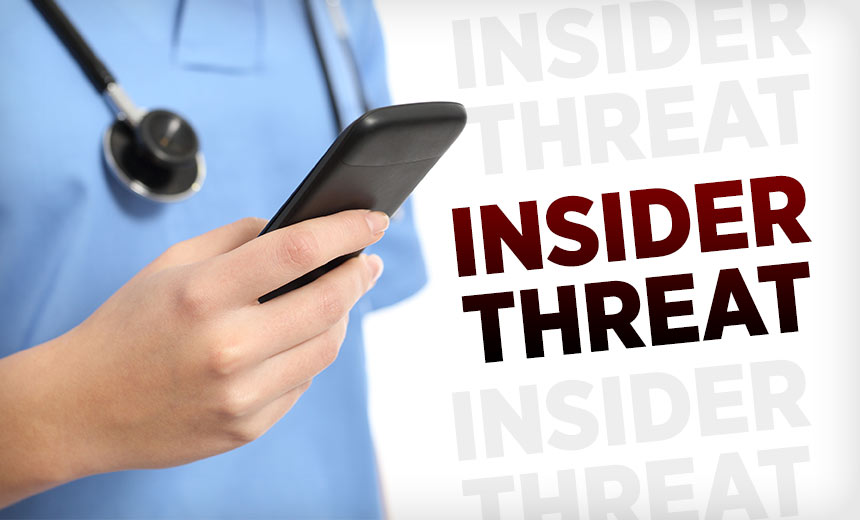

Mitigating Insider Security Threats in Healthcare

Some security experts say that risks involving insiders and cloud-based data are

often misjudged by entities. "One of the biggest mistakes entities make when

shifting to the cloud is to think that the cloud is a panacea for their security

challenges and that security is now totally in the hands of the cloud service,"

says privacy and cybersecurity attorney Erik Weinick of the law firm Otterbourg

PC. "Even entities that are fully cloud-based must be responsible for their own

privacy and cybersecurity, and threat actors can just as readily lock users out

of the cloud as they can from an office-based server if they are able to

capitalize on vulnerabilities such as weak user passwords or system architecture

that allows all users to have access to all of an entity's data, as opposed to

just what that user needs to perform their specific job function," he says. Dave

Bailey, vice president of security services at privacy and security consultancy

CynergisTek, says that when entities assess threats to data within the cloud, it

is incredibly important to develop and maintain solid security practices,

including continuous monitoring.

Is cybersecurity talent shortage a myth?

It is a combination of things but yes, in part technology is to blame. Vendors

have made the operation of the technologies they designed an afterthought. These

technologies were never made to be operated efficiently. There is also a certain

fixation on technologies that just don’t offer any value, yet we keep putting a

lot of work towards them, like SIEMs. Unfortunately, many technologies are built

upon legacy systems. This means that they carry those systems’ weaknesses and

suboptimal features that were adapted from other intended purposes. For example,

many people still manage alerts using cumbersome SIEMs that were originally

intended to be log accumulators. The alternative is ‘first principles’ design,

where the technology is developed with a particular purpose in mind. Some

vendors assume that their operators are the elites of the IT world, with the

highest qualifications, extensive experience, and deep knowledge of every

piece of adjoining or integrating technology. Placing high barriers to entry on

new technologies—time-consuming qualifications or poorly-delivered, expensive

courses—contributes to the self-imposed talent shortage.

How Manufacturers Can Avoid Data Silos

The first and most important step you can take to break down silos is to develop

policies for governing the data. Data governance helps to ensure that everyone

in a factory understands how the data should be used, accessed, and shared.

Having these policies in place will help prevent silos from forming in the first

place. According to Gartner data, 87 percent of manufacturers have minimal

business intelligence and analytics expertise. The research found these firms

less likely to have a robust data governance strategy and more prone to data

silos. Data governance efforts that improve synergy and maximize data

effectiveness can help manufacturing companies reduce data silos. ... Another

way to break down data silos is to cultivate a culture of collaboration.

Encourage employees to share information and knowledge across departments. When

everyone is working together, it will be easier to avoid duplication of effort

and wasted time. To break down data silos, manufacturers should move to a

culture that encourages collaboration and communication from the top down.

Top 7 metaverse tech strategy do's and don'ts

Like any other technology project, a metaverse project should support overall

business strategy. Although the metaverse is generating a lot of buzz right now,

it is only a tool, said Valentin Cogels, expert partner and head of EMEA product

and experience innovation at Bain & Company. "I don't think that anyone

should think in terms of metaverse strategy; they should think about a customer

strategy and then think about what tools they should use," Cogels said. "If the

metaverse is one tool they should consider, that's fine." Starting from

business goals also helps to refine the available choices, which

leaders can then use to build out use cases. Serving the business goals and

customers you already have is critical, said Edward Wagoner, CIO of digital at

JLL Technologies, the property technology division of commercial real estate

services company JLL Inc., headquartered in Chicago. "When you take that

approach, it makes it a lot easier to think how [the products and services you

deliver] would change if [you] could make it an immersive experience," he

said.

Digital begins in the boardroom

Boards need to guard against the default of having a “technology expert” that

everyone turns to whenever a digital-related issue comes onto the agenda. Rather

than being a collection of individual experts, everyone on a board should have a

good strategic understanding of all important areas of business – finance, sales

and marketing, customer, supply chain, digital. The best boards are a group of

generalists – each with certain specialisms – who can discuss issues widely and

interactively, not a series of experts who take the floor in turn while everyone

else listens passively. There is much that can be done to raise levels of

digital awareness among executives and non-executives. Training courses,

webinars, self-learning online – all these should be on the agenda. But one of

the most effective ways is having experts, whether internal or external, come to

board meetings to run insight sessions on key topics. For some specialist

committees, such as the audit and/or risk committees, bringing in outside

consultants – on cyber security, for example – is another important feature.

4 reasons diverse engineering teams drive innovation

Diverse teams can also help prevent embarrassing and troubling situations and

outcomes. Many companies these days are keen to infuse their products and

platforms with artificial intelligence. But as we’ve seen, AI can go terribly

wrong if a diverse group of people doesn’t curate and label the training

datasets. A diverse team of data scientists can recognize biased datasets and

take steps to correct them before people are harmed. Bias is a challenge that

applies to all technology. If a specific class of people – whether it’s white

men, Asian women, LGBTQ+ people, or other – is solely responsible for developing

a technology or a solution, they will likely build to their own experiences. But

what if that technology is meant for a broader population? Certainly, people who

have not been historically under-represented in technology are also important,

but the intersection of perspectives is critical. A diverse group of developers

will ensure you don’t miss critical elements. My team once developed a website

for a client, for example, and we were pleased and proud of our work. But when a

colleague with low vision tested it, we realized it was problematic.

Bringing Shadow IT Into the Light

IT teams are understaffed and overwhelmed after the sharp increase in support

demands caused by the pandemic, says Rich Waldron, CEO and co-founder of

Tray.io, a low-code automation company. “Research suggests the average IT team

has a project backlog of 3-12 months, a significant challenge as IT also faces

renewed demands for strategic projects such as digital transformation and

improved information security,” Waldron says. There’s also the matter of

employee retention during the Great Resignation hinging in part on the quality

of the tech on the job. “Data shows that 42% of millennials are more likely to

quit their jobs if the technology is sub-par,” says Uri Haramati, co-founder and

CEO at Torii, a SaaS management provider. “Shadow IT also removes some burden

from the IT department. Since employees often know what tools are best for their

particular jobs, IT doesn’t have to devote as much time searching for and

evaluating apps, or even purchasing them,” Haramati adds. In an age when speed,

innovation and agility are essential, locking everything down instead just isn’t

going to cut it. For better or worse, shadow IT is here to stay.

Log4j Attack Surface Remains Massive

"There are probably a lot of servers running these applications on internal

networks and hence not visible publicly through Shodan," Perkal says. "We must

assume that there are also proprietary applications as well as commercial

products still running vulnerable versions of Log4j." Notably, all the

exposed open source components contained a significant number of additional

vulnerabilities that were unrelated to Log4j. On average, half of the

vulnerabilities were disclosed prior to 2020 but were still present in the

"latest" version of the open source components, he says. Rezilion's analysis

showed that in many cases when open source components were patched, it took more

than 100 days for the patched version to become available via platforms like

Docker Hub. Nicolai Thorndahl, head of professional services at Logpoint, says

flaw detection continues to be a challenge for many organizations because while

Log4j is used for logging in many applications, the providers of software don't

always disclose its presence in software notes.
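
Because Log4j's presence is not always disclosed, one way a team might start looking for it is to scan Java archives for a class that ships in the `log4j-core` artifact. The sketch below is an illustration under stated assumptions, not a method described by Rezilion or Logpoint: the function names and the nesting limit are hypothetical, and a hit only shows that `JndiLookup.class` is bundled, not that the bundled version is vulnerable (patched releases still contain the class).

```python
import io
import zipfile
from pathlib import Path

# Class file that ships inside the log4j-core artifact.
JNDI_LOOKUP = "org/apache/logging/log4j/core/lookup/JndiLookup.class"
ARCHIVE_SUFFIXES = (".jar", ".war", ".ear")


def archive_contains_log4j_core(data, depth=0, max_depth=3):
    """Return True if these archive bytes, or an archive nested inside, hold JndiLookup.class."""
    try:
        with zipfile.ZipFile(io.BytesIO(data)) as archive:
            for name in archive.namelist():
                # Match the class at the archive root or under a prefix such as WEB-INF/classes/.
                if name == JNDI_LOOKUP or name.endswith("/" + JNDI_LOOKUP):
                    return True
                # Recurse into bundled archives (for example, fat JARs), up to max_depth levels.
                if depth < max_depth and name.lower().endswith(ARCHIVE_SUFFIXES):
                    if archive_contains_log4j_core(archive.read(name), depth + 1, max_depth):
                        return True
    except zipfile.BadZipFile:
        # Not a readable ZIP archive; treat it as "no match" rather than failing the scan.
        return False
    return False


def find_log4j_archives(root):
    """Walk a directory tree and list archives that bundle log4j-core's JndiLookup class."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix.lower() in ARCHIVE_SUFFIXES:
            if archive_contains_log4j_core(path.read_bytes()):
                hits.append(path)
    return hits


if __name__ == "__main__":
    # "." is a placeholder; point this at an application directory to scan.
    for hit in find_log4j_archives("."):
        print(hit)
```

A scan like this reads whole archives into memory and says nothing about versions, so it is best treated as a first inventory pass before checking the actual Log4j release in each hit.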

Quote for the day:

"Go as far as you can see; when you get

there, you'll be able to see farther." -- J. P. Morgan
