True Success in Process Automation Requires Microservices

To future-proof early investments in RPA, organizations need to implement an

orchestration layer separate from their bot layer. Many RPA implementations lack

a “driving” capability that can connect one process to another. In the insurance

example above, one bot that inputs a claim can connect to another that inputs

data into the modern CRM system (and so on until the claims process is

completed). To take that modernization a step further, development teams can

focus on replacing RPA bots one by one with applications built on a

microservices architecture. The idea of ripping and replacing legacy systems is

expensive and daunting for most organizations. In reality, gradual digital

transformation makes more sense. RPA bots can help enable this transformation by

keeping legacy systems functional while developers re-architect and modernize

business applications in order of priority. Think of it as switching a house

over to LED bulbs one by one — you can still keep the lights on in the rest of

the house as each bulb gets updated.
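
As a rough illustration of the separation described above, the sketch below keeps the orchestration logic apart from the workers it drives. All names here (`Orchestrator`, `claim_intake_bot`, `crm_update_bot`, `crm_update_service`) are hypothetical and not taken from any RPA product; the only point is that one step can be swapped from a bot to a microservice without touching the rest of the flow.

```python
from typing import Callable, Dict, List

# A step takes the claim record so far and returns an updated copy.
Step = Callable[[Dict[str, str]], Dict[str, str]]


def claim_intake_bot(claim: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical RPA bot: keys the claim into the legacy system.
    return {**claim, "intake": "legacy-bot"}


def crm_update_bot(claim: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical RPA bot: copies the claim into the CRM through its UI.
    return {**claim, "crm": "bot"}


def crm_update_service(claim: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical microservice replacement for the CRM bot.
    return {**claim, "crm": "microservice"}


class Orchestrator:
    """Runs named steps in order; knows nothing about how each step works."""

    def __init__(self) -> None:
        self._order: List[str] = []
        self._steps: Dict[str, Step] = {}

    def register(self, name: str, step: Step) -> None:
        # Registering an existing name replaces the implementation in place.
        if name not in self._steps:
            self._order.append(name)
        self._steps[name] = step

    def run(self, claim: Dict[str, str]) -> Dict[str, str]:
        for name in self._order:
            claim = self._steps[name](claim)
        return claim


flow = Orchestrator()
flow.register("intake", claim_intake_bot)
flow.register("crm", crm_update_bot)
before = flow.run({"id": "C-1"})

# Replace one bot with a microservice; the flow definition is unchanged.
flow.register("crm", crm_update_service)
after = flow.run({"id": "C-1"})
```

Because the orchestrator only knows step names, replacing bots in order of priority becomes a registration change instead of a rewrite of the whole process.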

Ransomware recovery: 8 steps to successfully restore from backup

"In many cases, enterprises don't have the storage space or capabilities to keep

backups for a lengthy period of time," says Palatt. "In one case, our client had

three days of backups. Two were overwritten, but the third day was still

viable." If the ransomware had hit over, say, a long holiday weekend, then all

three days of backups could have been destroyed. "All of a sudden you come in

and all your iterations have been overwritten because we only have three, or

four, or five days." ... In addition to keeping the backup files themselves

safe from attackers, companies should also ensure that their data catalogs are

safe. "Most of the sophisticated ransomware attacks target the backup catalog

and not the actual backup media, the backup tapes or disks, as most people

think," says Amr Ahmed, EY America's infrastructure and service resiliency

leader. This catalog contains all the metadata for the backups, the index, the

bar codes of the tapes, the full paths to data content on disks, and so on.

"Your backup media will be unusable without the catalog," Ahmed says.

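The retention arithmetic in Palatt's example can be sketched as follows. This is a simplified model, assuming one backup per day, a fixed number of retained copies, and that every backup taken after the infection is unusable; the function name `clean_backups_left` is illustrative only.

```python
def clean_backups_left(retained_copies: int, days_undetected: int) -> int:
    """Number of pre-infection backups still on hand.

    Assumes one backup per day with the oldest copy overwritten each day,
    and that every backup taken after infection is unusable.
    """
    if retained_copies < 0 or days_undetected < 0:
        raise ValueError("inputs must be non-negative")
    return max(0, retained_copies - days_undetected)


# Three retained copies, two already overwritten: one viable backup remains.
two_days = clean_backups_left(retained_copies=3, days_undetected=2)

# The same rotation after a three-day holiday weekend leaves nothing clean.
long_weekend = clean_backups_left(retained_copies=3, days_undetected=3)
```
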
LockBit 2.0 Ransomware Proliferates Globally

“Once in the domain controller, the ransomware creates new group policies and

sends them to every device on the network,” Trend Micro researchers explained.

“These policies disable Windows Defender, and distribute and execute the

ransomware binary to each Windows machine.” This main ransomware module goes on

to append the “.lockbit” suffix to every encrypted file. Then, it drops a ransom

note into every encrypted directory threatening double extortion; i.e., the note

warns victims that files are encrypted and may be publicly published if they

don’t pay up. ... Trend Micro has been tracking LockBit over time, and noted

that its operators initially worked with the Maze ransomware group, which shut

down last October. Maze was a pioneer in the double-extortion tactic, first

emerging in November 2019. It went on to make waves with big strikes such as the

one against Cognizant. In summer 2020, it formed a cybercrime “cartel” – joining

forces with various ransomware strains (including Egregor) and sharing code,

ideas and resources.

Mandiant Discloses Critical Vulnerability Affecting Millions of IoT Devices

Over the course of several months, the researchers developed a fully functional

implementation of ThroughTek’s Kalay protocol, which enabled the team to perform

key actions on the network, including device discovery, device registration,

remote client connections, authentication, and, most importantly, processing audio

and video (“AV”) data. Just as important as processing AV data, the Kalay

protocol also implements remote procedure call (“RPC”) functionality. This

varies from device to device but typically is used for device telemetry,

firmware updates, and device control. Having written a flexible interface for

creating and manipulating Kalay requests and responses, Mandiant researchers

focused on identifying logic and flow vulnerabilities in the Kalay protocol. The

vulnerability discussed in this post affects how Kalay-enabled devices access

and join the Kalay network. The researchers determined that the device

registration process requires only the device’s 20-byte uniquely assigned

identifier (called a “UID” here) to access the network.
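
To see why a registration step keyed only on a UID is fragile, consider the toy registry below. It is not the Kalay protocol or ThroughTek's API: `ToyRegistry`, `register`, and `connect` are invented for illustration, the addresses are placeholders, and the overwrite-on-reregistration behavior is a modeling assumption of this sketch.

```python
import os
from typing import Dict


class ToyRegistry:
    """Toy stand-in for a device registry; NOT the real Kalay protocol."""

    UID_LENGTH = 20  # the source describes a 20-byte identifier

    def __init__(self) -> None:
        self._routes: Dict[bytes, str] = {}

    def register(self, uid: bytes, address: str) -> None:
        # The only check is the UID's length: no secret, no proof of identity.
        if len(uid) != self.UID_LENGTH:
            raise ValueError("UID must be 20 bytes")
        # Assumption: a later registration silently replaces an earlier one.
        self._routes[uid] = address

    def connect(self, uid: bytes) -> str:
        # Clients are routed to whoever registered the UID most recently.
        return self._routes[uid]


registry = ToyRegistry()
camera_uid = os.urandom(20)  # stand-in for a factory-assigned UID

registry.register(camera_uid, "camera.example:1000")
route_before = registry.connect(camera_uid)

# Anyone who learns the UID can register under it and take over the route.
registry.register(camera_uid, "attacker.example:1000")
route_after = registry.connect(camera_uid)
```

In this model the UID acts as both the name and the credential, so anyone who learns it can register as the device.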

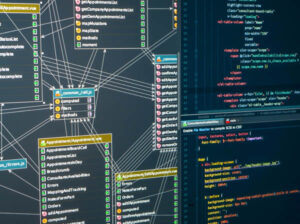

CQRS in Java Microservices

Command Query Responsibility Segregation (CQRS) is a pattern in service

architecture. It is a separation of concerns, that is, separation of services

that write from services that read. Why would you want to separate read and

write services? One of the advantages of microservices is the ability to scale

services independently. We can often say with some level of certainty that one

set of services will be busier than others. If they are separate, they can be

scaled to best fit the normal use case and conserve cloud cycles. I will be

looking into CQRS provided by the Axon library. Axon implements CQRS with

event sourcing. The idea behind event sourcing is that your commands are

executed by sending events to all subscribers. Instead of storing state in

your persistence store, you store the immutable events, so you always have a

record of the events that led up to a particular state. Inside your program,

you will have an aggregate, which represents a stateful object but is

ephemeral in that the system can bring it in and out of existence as

needed.
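
A minimal sketch of these ideas in plain Python follows. It does not use Axon or mirror its API; `EventStore`, `Account`, `Deposited`, `Withdrawn`, `handle_withdraw`, and `query_balance` are names invented here. It shows an append-only event log, an aggregate rebuilt from that log on demand, and separate command and query paths.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Deposited:
    amount: int


@dataclass(frozen=True)
class Withdrawn:
    amount: int


class EventStore:
    """Append-only log; events are stored, never the current state."""

    def __init__(self) -> None:
        self._events: List[object] = []

    def append(self, event: object) -> None:
        self._events.append(event)

    def all(self) -> List[object]:
        return list(self._events)


class Account:
    """Ephemeral aggregate: rebuilt from events whenever it is needed."""

    def __init__(self) -> None:
        self.balance = 0

    def apply(self, event: object) -> None:
        if isinstance(event, Deposited):
            self.balance += event.amount
        elif isinstance(event, Withdrawn):
            self.balance -= event.amount

    @classmethod
    def from_events(cls, events: List[object]) -> "Account":
        account = cls()
        for event in events:
            account.apply(event)
        return account


def handle_withdraw(store: EventStore, amount: int) -> None:
    # Command side: validate against rebuilt state, then record an event.
    account = Account.from_events(store.all())
    if amount > account.balance:
        raise ValueError("insufficient funds")
    store.append(Withdrawn(amount))


def query_balance(store: EventStore) -> int:
    # Query side: read-only projection over the same event log.
    return Account.from_events(store.all()).balance


store = EventStore()
store.append(Deposited(100))
handle_withdraw(store, 30)
balance = query_balance(store)
```

In a real deployment the command and query sides would typically run as separate services so that each can be scaled independently; here they are two functions over the same log only to keep the sketch short.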

AIOps Strategies for Augmenting Your IT Operations

Enrichment is the unsung hero of the entire event correlation process. Raw

alarm data is a start, but it’s not sufficient to be able to pinpoint the root

cause and enable an effective fix. When you have alerts coming in from a

variety of domains, it can be difficult to correlate them to produce a

fine-tuned set of tickets. You can use timestamps or point of origin, but that

will provide limited insight, and you'll miss connections between related

alerts coming from other sources or from other time windows. Easy-to-deploy

alert enrichments add value to every single alert, providing the extra layer

of understanding needed to determine which alerts are interrelated, and in

what way, enabling you to focus on high-level correlated incidents instead of

following every low-level alert that comes into the AIOps platform. Done right,

this process of enrichment reduces the ‘noise’, and helps you bring in

topology information from your CMDB, APM, and orchestration tools, change

information from your change management and CI/CD pipelines, and business

context from your team’s knowledge and procedures.
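
The enrichment step can be sketched as below, assuming a hypothetical CMDB extract that maps hosts to business services. The `CMDB` table, the alert fields, and the `enrich` and `correlate` helpers are invented for illustration and do not come from any particular AIOps product.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical CMDB extract: host -> business service it supports.
CMDB: Dict[str, str] = {
    "db-01": "checkout",
    "web-07": "checkout",
    "cache-02": "search",
}


def enrich(alert: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of the alert with a 'service' field from the CMDB."""
    return {**alert, "service": CMDB.get(alert["host"], "unknown")}


def correlate(alerts: List[Dict[str, str]]) -> Dict[str, List[str]]:
    """Group enriched alerts into one incident per business service."""
    incidents: Dict[str, List[str]] = defaultdict(list)
    for alert in map(enrich, alerts):
        incidents[alert["service"]].append(alert["message"])
    return dict(incidents)


raw_alerts = [
    {"host": "db-01", "source": "database monitor", "message": "slow queries"},
    {"host": "web-07", "source": "APM", "message": "HTTP 500 spike"},
    {"host": "cache-02", "source": "infra monitor", "message": "high evictions"},
]

incidents = correlate(raw_alerts)
```

The first two alerts come from different tools and hosts, so neither origin nor timestamp would link them; the added `service` field does.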

Addressing the demand for global software developer talent

Clear upskilling career paths should be provided for new and experienced

software developers. Younger developers will expect rapid career advances —

show them faster, more attractive ways forward, such as more opportunities to

work on innovation projects and technologies or earn a new job title or salary

due to learning a new skill. Experienced developers may want more time to

explore new technologies, some freedom to decide what to work on next, or just

shore up what they have been working on for years. A mentoring programme

connecting graduates with more experienced developers is a good idea. However,

it may add an onerous workload. Supplement that ‘human’ support with tools

that, for instance, help monitor code quality, encouraging a consistent level

of coding practice and reducing the number of errors that escape into

production. Be flexible with everyone’s working hours, location, and choice of

tools. Give them superior quality hardware and other workplace products to

make their jobs easier. Online training and permission to spend work time

on it are essential.

IT Leadership: 11 Future of Work Traits

“Three- to five-year plans got smashed into a single year plan,” says Sarah

Pope, VP of Future of Technology, global consulting company Capgemini. “Two

priorities that became obvious as a result of COVID are customer experience

and employee experience. Customer experience didn't have to be just 'good,' it

needed to reflect customers' new behaviors and patterns. Similarly, employee

experience wasn't just about technology enablement and corporate culture, but

about how work fits into digital lives.” Enterprises have been pushing to

reopen their offices and business leaders are well aware that not everyone

will want to return. While there's a general acknowledgement that hybrid

workplaces will be the norm going forward, few organizations know what that

will really look like. However, it's obvious that if some people refuse to

return to the office at all, and others only want to work in the office a

couple of days per week, businesses need to make smart use of space, people,

and time. ... “Secondarily, [they'll want to bring people together in a

physical environment] from a maintaining culture and community perspective,

[such as hosting] those dinners or workshops that can tack on to a team

event.”

5 things to know about pay-per-use hardware

When enterprise IT teams get a quote for consumption-based infrastructure,

many will find themselves in unfamiliar territory, having never evaluated this

kind of pricing scheme. “It’s easy for HP or Dell to come in and say how much

they’re going to charge you per core, but then you realize you have no idea

whether that price is fair. That’s not how you calculate things in your own

facilities, and it’s apples to oranges versus public cloud costs,” Bowers

said. “As soon as enterprises are given a quote, they tend to go into

spreadsheet hell for three months, trying to figure out whether that quote is

fair. So it can take three, four, five months to negotiate a first deal.”

Enterprises struggle to evaluate consumption-based proposals, and they lack

confidence in their usage forecasts, Bowers said. “It takes a lot of financial

acumen to adopt one of these programs.” Experience can help. “The companies

that make the most confident decisions are those that did a lot of leasing in

the past. Not because this is a lease, but because those companies have the

mental muscles to be able to evaluate the financial aspects of time, value,

variable payments, and risks of payment spreads,” Bowers said.

An upbeat outlook for UK IoT sector despite barriers

The concern within the UK IoT sector reflects the fact that permanent roaming

– as the typical solution to delivering multi-region IoT projects – remains

fraught with problems. These range from the inability of roaming agreements to

support device Power Saving Modes, to the frequently arising commercial

disputes, the performance issues caused by having to backhaul data, and the

fact that several countries have placed a complete ban on permanent roaming. In

contrast to the US environment, where two dominant operators (AT&T and

Verizon) deliver the majority of coverage, the European environment is far

more fragmented with multiple operators delivering regional coverage. This

adds a considerable layer of complexity and commercial disputes can threaten

the viability of multi-region rollouts, creating a concerning degree of risk

to IoT projects. UK IoT professionals are very aware that this issue of

cellular connectivity must be resolved to ensure the viability of future

large-scale projects – eight out of ten agree or strongly agree that the

evolution of intelligent connectivity is going to be critical to continue to

fuel adoption of IoT.

Quote for the day:

"Good leaders must first become good

servants." -- Robert Greenleaf
