Reinforcement learning competition pushes the boundaries of embodied AI

Creating reinforcement learning models presents several challenges. One of them

is designing the right set of states, rewards, and actions, which can be very

difficult in applications like robotics, where agents face a continuous

environment that is affected by complicated factors such as gravity, wind, and

physical interactions with other objects. This is in contrast to environments

like chess and Go, which have discrete states and actions. Another challenge

is gathering training data. Reinforcement learning agents need to train using

data from millions of episodes of interactions with their environments. This

constraint can slow robotics applications because they must gather their data

from the physical world, as opposed to video and board games, which can be

played in rapid succession on several computers. To overcome this barrier, AI

researchers have tried to create simulated environments for reinforcement

learning applications. Today, self-driving cars and robotics often use simulated

environments as a major part of their training regime. “Training models using

real robots can be expensive and sometimes involve safety considerations,”

Chuang Gan, principal research staff member at the MIT-IBM Watson AI Lab, told

TechTalks.
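
To make the idea of states, actions, rewards, and cheap simulated episodes concrete, here is a minimal sketch in Python. The `LineWorld` environment, its reward values, and the `run_episode` helper are illustrative assumptions, not anything taken from the competition or from a real robotics simulator.

```python
class LineWorld:
    """Toy simulated environment (hypothetical): an agent on a 1-D track
    must reach the last cell. State = position, action = -1 or +1."""

    def __init__(self, size=5):
        self.size = size
        self.position = 0

    def reset(self):
        # Start every episode at the left end of the track.
        self.position = 0
        return self.position

    def step(self, action):
        # Move left or right, clamped to the track boundaries.
        self.position = max(0, min(self.size - 1, self.position + action))
        done = self.position == self.size - 1
        # Assumed reward design: small penalty per step, bonus at the goal.
        reward = 1.0 if done else -0.1
        return self.position, reward, done


def run_episode(env, policy, max_steps=100):
    """Run one episode and return the total reward collected."""
    state = env.reset()
    total = 0.0
    for _ in range(max_steps):
        state, reward, done = env.step(policy(state))
        total += reward
        if done:
            break
    return total
```

Because the environment is pure software, calling `run_episode(LineWorld(), policy)` in a loop can produce thousands of episodes in moments, which is the appeal of simulation over gathering data from physical robots.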

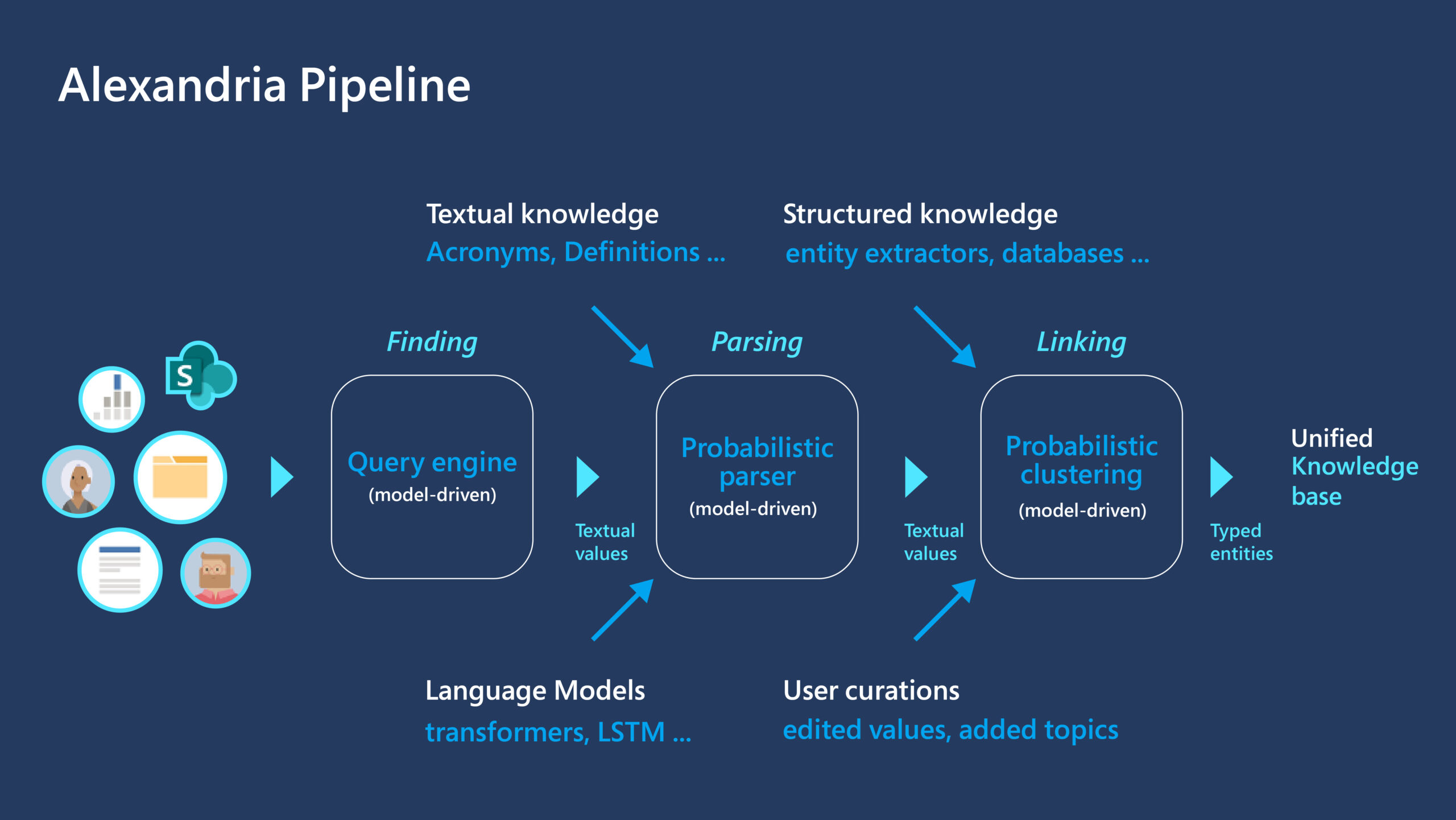

ONNX Standard And Its Significance For Data Scientists

The ONNX standard aims to bridge the gap and enable AI developers to switch between

frameworks based on the project’s current stage. Currently, the frameworks supported

by ONNX are Caffe, Caffe2, Microsoft Cognitive Toolkit, MXNet, and PyTorch. ONNX

also offers connectors for other standard libraries and frameworks. “ONNX is the

first step toward an open ecosystem where AI developers can easily move between

state-of-the-art tools and choose the combination that is best for them,”

Facebook said in an earlier blog post. ONNX was specifically designed for the

development of machine learning and deep learning models. It includes a

definition for an extensible computation graph model along with built-in

operators and standard data types. ONNX is a standard format for both DNN and

traditional ML models. The ONNX interoperability format gives data

scientists the flexibility to choose their framework and tools to accelerate

the process, from the research stage to the production stage. It also allows

hardware developers to optimise deep learning-focused hardware based on a

standard specification compatible with different frameworks.

Microsoft warns of damaging vulnerabilities in dozens of IoT operating systems

According to an overview compiled by the Cybersecurity and Infrastructure

Security Agency, 17 of the affected products already have patches available,

while the rest either have updates planned or are no longer supported by the

vendor and won’t be patched. See here for a list of impacted products and patch

availability. Where patching isn’t available, Microsoft advises organizations to

implement network segmentation, eliminate unnecessary connections to operational technology

control systems, use (properly configured and patched) VPNs with multifactor

authentication and leverage existing automated network detection tools to

monitor for signs of malicious activity. While the scope of the vulnerabilities

across such a broad range of different products is noteworthy, such security

holes are common with connected devices, particularly in the commercial realm.

Despite billions of IoT devices flooding offices and homes over the past decade,

there remains virtually no universally agreed-upon set of security standards –

voluntary or otherwise – to bind manufacturers. As a result, the design and

production of many IoT products end up being dictated by other pressures, such

as cost and schedule.

Automate the hell out of your code

Continuous integration is a software development principle that suggests that

developers should write small chunks of code and, when they push this code to

their repository, the code should be automatically tested by a script that runs

on a remote machine, automating the process of adding new code to the code

base. This automates software testing, increasing developers'

productivity and keeping their focus on writing code that passes the tests.

... If continuous integration is about adding new chunks of code to the code base,

then CD is about automating the building and deploying of our code to the

production environment. This ensures that the production environment is kept

in sync with the latest features in the code base. You can read this article

for more on CI/CD. I use Firebase Hosting, so we can define a workflow that

builds and deploys our code to Firebase Hosting rather than having to do that

ourselves. But we have one or two issues to deal with. Normally we can

deploy code to Firebase from our computer because we are logged in from the

terminal, but how do we authorize a remote CI server to do this? Open up a

terminal and run the command `firebase login:ci`. It will print a

FIREBASE_TOKEN that we can use to authenticate CI servers.
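
As a rough sketch of how a CI job might use that token, the Python helper below reads `FIREBASE_TOKEN` from the environment and assembles the deploy command. The function name `build_deploy_command` is made up for illustration, and the choice to deploy only hosting via `--only hosting` is an assumption based on the Firebase Hosting setup described above. The token should be stored as a secret in the CI service, never committed to the repository.

```python
import os


def build_deploy_command(env=None):
    """Return the Firebase CLI invocation a CI job could run (hypothetical helper).

    The token comes from the FIREBASE_TOKEN environment variable, which the
    CI service is assumed to inject from its secret store.
    """
    env = os.environ if env is None else env
    token = env.get("FIREBASE_TOKEN")
    if not token:
        # Fail early with a clear message instead of letting the CLI prompt for login.
        raise RuntimeError("FIREBASE_TOKEN is not set; run `firebase login:ci` to create one")
    # Returned as a list so it can be passed to subprocess without shell quoting issues.
    return ["firebase", "deploy", "--only", "hosting", "--token", token]
```

In a CI script, the returned list could be handed to `subprocess.run(cmd, check=True)` after the build step, so a failed deploy fails the whole job.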

15 open source GitHub projects for security pros

For dynamic analysis of a Linux binary, malicious or benign, PageBuster makes

it super easy to retrieve dumps of executable pages within packed Linux

processes. This is especially useful when pulling apart malware packed with

specialized run-time packers that introduce obfuscation and hamper static

analysis. “Packers can be of growing complexity, and, in many cases, a precise

moment in time when the entire original code is completely unpacked in memory

doesn't even exist,” explains security engineer Matteo Giordano in a blog

post. PageBuster also takes care to conduct its page-dumping activities

without triggering any anti-virtual machine or anti-sandboxing defences

present in the analyzed binary. ... The free AuditJS tool can help JavaScript

and NodeJS developers ensure that their project is free from vulnerable

dependencies, and that the dependencies of dependencies included in their

project are free from known vulnerabilities. It works by peeking into what’s

inside your project’s manifest file, package.json. “The great thing about

AuditJS is that not only will it scan the packages in your package.json, but

it will scan all the dependencies of your dependencies, all the way down. ...”

said developer Dan Miller in a blog post.
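
The "all the way down" idea is a transitive walk over the dependency graph. The sketch below shows the general technique in Python and is not AuditJS's actual implementation: `manifest` stands in for a parsed package.json, while `registry` (package name to its own dependencies) and `advisories` (names with known vulnerabilities) are hypothetical inputs invented for the example.

```python
def transitive_dependencies(manifest, registry):
    """Collect every package reachable from the manifest's direct dependencies."""
    seen = set()
    stack = list(manifest.get("dependencies", {}))
    while stack:
        name = stack.pop()
        if name in seen:
            continue  # already visited; also protects against dependency cycles
        seen.add(name)
        # Queue the dependencies of this dependency.
        stack.extend(registry.get(name, []))
    return seen


def vulnerable_packages(manifest, registry, advisories):
    """Return the sorted names of direct or indirect dependencies with known advisories."""
    return sorted(transitive_dependencies(manifest, registry) & set(advisories))
```

A package that never appears in package.json can still be flagged this way, as long as something the project depends on pulls it in.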

Securing the Future of Remote Working With Augmented Intelligence

Emerging technology can potentially reshape the dimensions of organizations.

Augmented reality, as well as virtual reality, will play a crucial role in

office design trends that have already come into being. Architecture

organizations are already dedicating a space for virtual reality. This is

basically an area that is equipped with all the essential requirements of

virtual reality. A large number of businesses are likely to take this step

as more meetings are held virtually to accommodate the spread-out workforce.

Organizations are currently spending a hefty amount on virtual solutions and

will continue to invest in the future. Paul Richards, director of business

development at HuddleCamHD, affirmed that “numerous meeting rooms will become

more similar to TV production studios instead of collaborative spaces.” Erik

Narhi, an architect and computational design lead at the Los Angeles

office of global design company Buro Happold, also agreed that in the current

era, it is impossible to neglect augmented reality and virtual reality.

Hybrid work from home is not going away anytime soon.

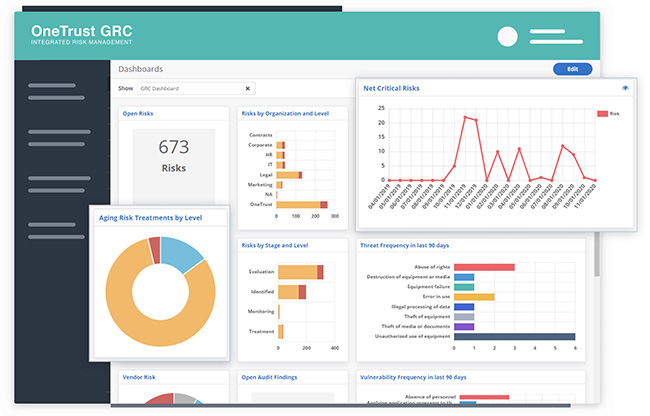

Risk-based vulnerability management has produced demonstrable results

Risk-based vulnerability management doesn’t ask “How do we fix everything?” It

merely asks, “What do we actually need to fix?” A series of research reports

from the Cyentia Institute has answered that question in a number of ways,

finding, for example, that attackers are more likely to develop exploits for

some vulnerabilities than others. Research has shown that, on average, about 5

percent of vulnerabilities actually pose a serious security risk. Common

triage strategies, like patching every vulnerability with a CVSS score above 7,

were, in fact, no better than chance at reducing risk. But now we can say that

companies using RBVM programs are patching a higher percentage of their

high-risk vulnerabilities. That means they are doing more, and there’s less

wasted effort. The time it took companies to patch half of their high-risk

vulnerabilities was 158 days in 2019. This year, it was 27 days. And then

there is another measure of success. Companies start vulnerability management

programs with massive backlogs of vulnerabilities, and the number of

vulnerabilities only grows each year. Last year, about two-thirds of companies

using a risk-based system reduced their vulnerability debt or were at least

treading water. This year, that number rose to 71 percent.
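
One way to reason about "doing more with less wasted effort" is to compare what share of high-risk vulnerabilities a triage rule fixes with what share of its fixes were actually high-risk. The Python sketch below is only an illustration: the `high_risk` flag, the field names, and the `coverage` and `efficiency` labels are assumptions for the example, not data or definitions taken from the research reports.

```python
def cvss_triage(vulns, threshold=7.0):
    """Select every vulnerability whose CVSS score is above the threshold."""
    return [v["id"] for v in vulns if v["cvss"] > threshold]


def coverage_and_efficiency(vulns, selected_ids):
    """Score a triage decision against the (assumed known) high-risk set.

    coverage   = share of high-risk vulnerabilities that were selected
    efficiency = share of selected vulnerabilities that were high-risk
    """
    high_risk = {v["id"] for v in vulns if v["high_risk"]}
    selected = set(selected_ids)
    fixed_high_risk = high_risk & selected
    coverage = len(fixed_high_risk) / len(high_risk) if high_risk else 0.0
    efficiency = len(fixed_high_risk) / len(selected) if selected else 0.0
    return coverage, efficiency
```

Running both a CVSS-threshold selection and a risk-based selection through `coverage_and_efficiency` makes the trade-off visible: a rule can patch a lot and still miss the vulnerabilities that matter.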

A definitive primer on robotic process automation

This isn’t to suggest that RPA is without challenges. The credentials

enterprises grant to RPA technology are a potential access point for hackers.

When dealing with hundreds to thousands of RPA robots with IDs connected to a

network, each could become an attack vector if companies fail to apply

identity-centric security practices. Part of the problem is that many RPA

platforms don’t focus on solving security flaws. That’s because they’re

optimized to increase productivity and because some security solutions are too

costly to deploy and integrate with RPA. Of course, the first step to solving

the RPA security dilemma is recognizing that there is one. Realizing RPA

workers have identities gives IT and security teams a head start when it comes

to securing RPA technology prior to its implementation. Organizations can

extend their identity governance and administration (IGA) to focus on the

“why” behind a task, rather than the “how.” Through a strong IGA process,

companies adopting RPA can implement a zero trust model to manage all

identities — from human to machine and application.

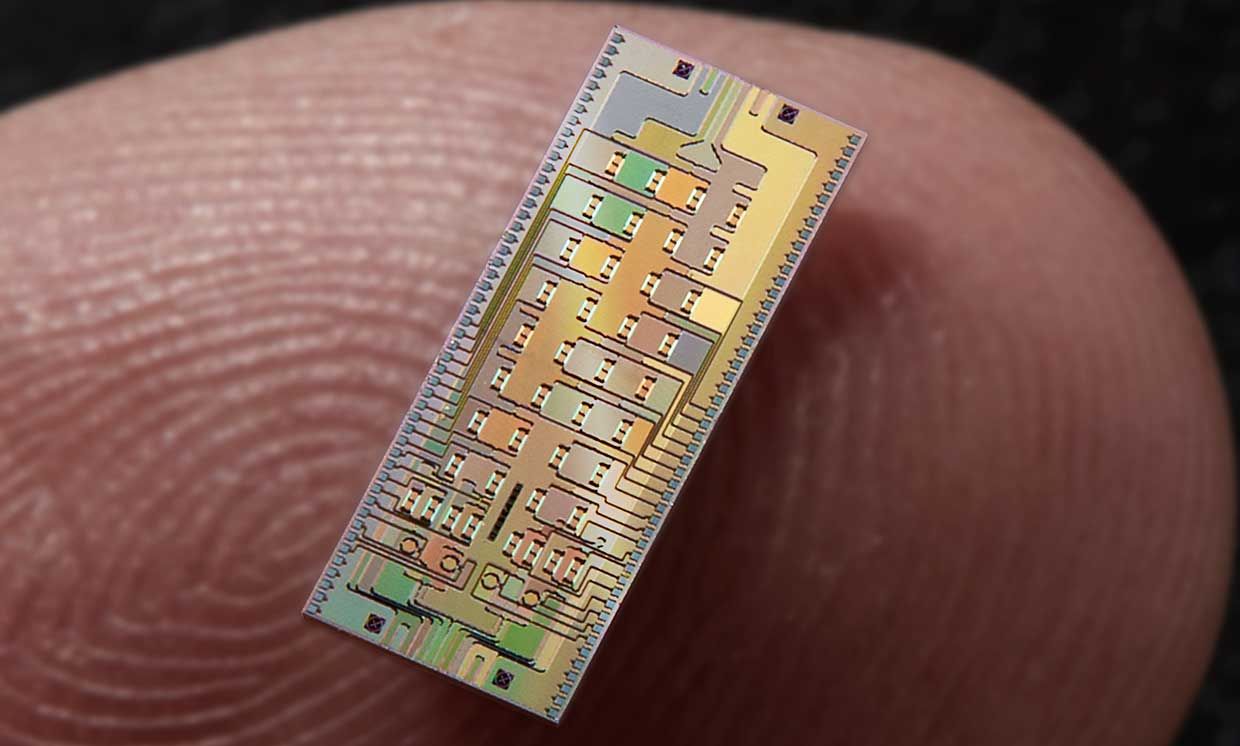

Demystifying Quantum Computing: Road Ahead for Commercialization

CIOs determining the next steps for quantum computing must

first consider the immediate use cases for their organization and how

investments in quantum technology can pay dividends. For example, for an

organization prioritizing accelerated or complex simulations, whether it’s for

chemical or critical life sciences research like drug discovery, the increase

in computing performance that quantum offers can make all the difference. For

some organizations, immediate needs may not be as defined, but there could be

an appetite to simply experiment with the technology. As many companies

already put a lot behind R&D for other emerging technologies, this can be

a great way to play around with the idea of quantum computing and what it

could mean for your organization. However, like all technology, investing in

something simply for the sake of investing in it will not yield results.

Quantum computing efforts must map back to a critical business or technology

need, not just for the short term, but also the long term as quantum computing

matures. CIOs must also consider how the deployment of the technology changes

existing priorities, particularly around efforts such as

cybersecurity.

Leaders Talk About the Keys to Making Change Successful and Sustainable

Many organizations that have been around for a while have established

processes that are hard to change. Mitch Ashley, CEO and managing analyst at

Accelerated Strategies Group, who’s helped create several DevOps

organizations, shared his perspective about why changing a culture can be so

difficult. “Culture is a set of behaviors and norms, and also what’s rewarded

in an organization. It’s both spoken and unspoken. When you’re in an

organization for a period of time, you get the vibe pretty quickly. It’s a

measurement culture, or a blame culture, or a performance culture, or whatever

it is. Culture has mass and momentum, and it can be very hard to move. But,

you can make cultural changes with work and effort.” What Mitch is referring

to, this entrenched culture that can be hard to change, is sometimes called

legacy cultural debt. I loved Mitch’s story about his first foray into DevOps

because it’s a great place to start if you’re dealing with a really entrenched

legacy culture. He and his team started a book club, and they read The Phoenix

Project. He said, “The book sparked great conversations and helped us create a

shared vision and understanding about our path to DevOps. ...”

Quote for the day:

"The secret of leadership is simple:

Do what you believe in. Paint a picture of the future. Go there. People will

follow." -- Seth Godin