Riding out the wave of disruption

Disruption is not necessarily the crisis it’s frequently considered to be for

incumbents, the researchers stress. Two technologies can often coexist in the

marketplace for a significant period. Thus, it’s important for incumbent

companies not to overreact. They should target dual users and reexamine the

factors that have led to the old technology sticking around for so long. Of

course, the profit implications of cannibalization of the old technology and

leapfrogging depend on which type of firm is trumpeting the new technology. New

entrants will always stand to gain when they introduce a technology that takes

off. But incumbents rolling out a successive technology will also gain if their

competitors would have introduced it anyway or if the 2.0 version has a higher

profit margin than the original. The authors write, “Leapfroggers are an

opportunity loss for incumbents, but switchers are a real loss.” Regardless of

the predictive model they use, marketers should strive to understand how the

various consumer segments identified in this study will grow or shrink over time

and use that information in their forecasts of early sales or market penetration

of successive technologies.

AI and APIs: The A+ Answers to Keeping Data Secure and Private

Adding to the complexity is ensuring that AI and data are used ethically,

Marques points out. Two key categories comprise secure AI, he says: responsible

AI and confidential AI. Responsible AI focuses on regulations, privacy, trust,

and ethics related to decision-making using AI and ML models. Confidential AI

involves how companies share data with others to address a common business

problem. For example, airlines might want to pool data to better understand

maintenance, repair, and parts failure issues but avoid exposing proprietary

data to the other companies. Without protections in place, others might see

confidential data. The same types of issues are common among healthcare

companies and financial services firms. Despite the desire to add more data to a

pool, there are also deep concerns about how, where, and when the data is used.

In fact, complying with regulations is merely a starting point for a more robust

and digital-centric data management framework, Jahil explains. Security and

privacy must extend into a data ecosystem and out to customers and their PII.

For example, CCPA has expanded the concept of PII to include any information

that may help identify the individual, like hair color or personal preferences.

What is a data center REIT?

The rationale for converting to REIT status will vary from company to company,

but broadly it offers beneficial tax status and greater access to capital for

growth. “The biggest benefit is that REITs don’t pay any corporate tax,” says

Millionacre’s Frankel. “Think of a data center company that isn't a REIT. Its

income can effectively be taxed twice; once at the corporate level when the

company earns a profit, and again on the individual level when the company

pays a dividend to investors.” The rules on whether an organization can apply

for REIT classification vary from country to country, but broadly the minimum

requirement is to hold a portfolio of properties from which real-estate

activities such as rent generate the majority of revenue, and to have a number

of investors to whom the majority of that revenue is distributed. “REITs

are able to raise capital more easily via share issuances and/or joint venture

partnerships as investors have a better idea of the company’s financial

situation once public,” says Cushman & Wakefield’s Imboden. “The degree of

difficulty [on becoming a REIT] depends largely on if the company was

structured and managed with the intention of becoming a REIT, or if the

decision was made after years of operating.”
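
The double taxation Frankel describes can be made concrete with a little arithmetic. The sketch below is illustrative only: the 21% corporate rate, the 20% investor rate, and the `after_tax_dividend` helper are assumptions chosen for the example, not figures from the article, and it assumes all after-tax profit is paid out and that the investor pays the same rate in both cases, which may not hold in practice.

```python
def after_tax_dividend(profit, corporate_rate, dividend_rate):
    """Cash an investor keeps if all after-tax profit is paid out as a dividend."""
    after_corporate = profit * (1 - corporate_rate)  # first layer: company level
    return after_corporate * (1 - dividend_rate)     # second layer: investor level


# Hypothetical inputs, not taken from the article.
profit = 100.0
regular = after_tax_dividend(profit, corporate_rate=0.21, dividend_rate=0.20)
reit = after_tax_dividend(profit, corporate_rate=0.0, dividend_rate=0.20)

print(f"Non-REIT investor keeps {regular:.2f} of {profit:.2f}")
print(f"REIT investor keeps {reit:.2f} of {profit:.2f}")
```

Under these assumed rates, removing the corporate layer is what produces the gap between the two results.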

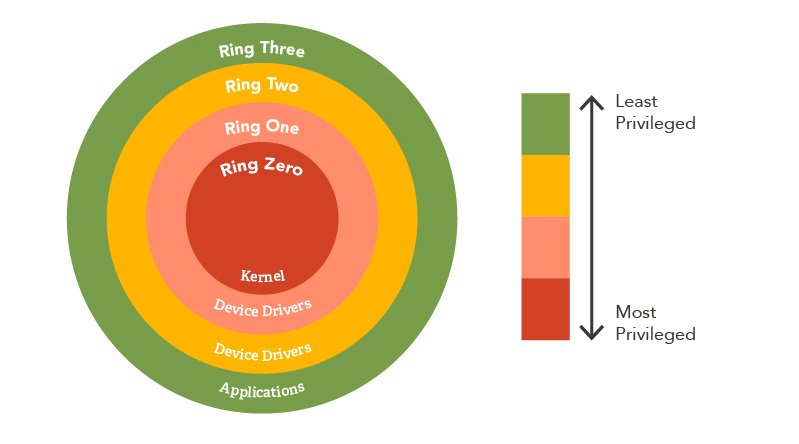

12 security career-killers (and how to avoid them)

“The biggest problem I’ve seen is security people who think security is the

be-all and end-all. They go in with that attitude, and they don’t see how they

have to enable the business,” says James Carder, CSO of the security tech

company LogRhythm. He says they instead need to collaborate with their

business-unit colleagues to understand their objectives and then be an

enabler, not a hindrance. Others agree. “Security is a profession that has

plenty of standards and regulations and frameworks, but too many times we try

to implement them in a blind way, from the perspective of the standards

instead of trying to implement them in the context of the business,” adds Russ

Kirby, CISO of software company ForgeRock. Similarly, Kirby has seen security

pros become so focused on their own objectives that they alienate other

departments that may otherwise want to work together to find a solution. He

points to one scenario, where security staffers wanted to change an

application’s minimum password length from 8 characters to upwards of 20. The

IT application team pushed back, explaining that they could go to 12

characters but anything more would take significant time and money to

change.
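
To put the 8, 12, and 20 character options in perspective, here is a rough sketch of how the brute-force search space grows with length. It assumes passwords are drawn uniformly at random from the 94 printable ASCII characters, which user-chosen passwords generally are not, and `search_space_bits` is a hypothetical helper written for this illustration.

```python
import math


def search_space_bits(length, alphabet_size=94):
    """Bits needed to enumerate every password of exactly `length` characters."""
    return length * math.log2(alphabet_size)


# Lengths mentioned in the scenario above; the alphabet size is an assumption.
for length in (8, 12, 20):
    print(f"{length} characters: about {search_space_bits(length):.0f} bits")
```

Because the search space grows exponentially with length, each added character multiplies an attacker's work by the alphabet size, so a compromise at 12 characters still gives a large gain over 8.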

Six industries impacted by the combination of 5G and edge computing

"Weather and humidity can impact the performance of 5G," Roberts added; he

also noted that, as 5G continues to proliferate, there will be many more cell

towers. That's consistent with recently released research by PwC, which

reported that "the performance of 5G networks remains uneven." Widespread

usage is not here yet "because it's a big challenge to upgrade

infrastructure," agreed Mark Sami, a director at West Monroe. Right now, for

example, to get Verizon's Ultra Wideband network, "you need a line of sight to

a tower so you have to be in close proximity," Sami said. ... "It's all about

driving applications and how do you make these 5G and edge solutions [work] in

a manner where you create more opportunities for the developer community to

write applications to that infrastructure architecture," said Sid Nag, a

vice-president at Gartner. Some 90% of industrial enterprises will use edge

computing by 2020, according to Frost & Sullivan. "The applications are

endless," observed Chris Steffen, a research director at Enterprise

Management Associates. "Every vertical is going to be impacted in some way,"

he added, depending on specific use cases and relevance.

Why Disconnected Data Grinds Customer Journeys to a Halt

Business architecture matters because it defines and explains the

relationships between customer business processes. And information and

application architecture matter because they define the major types of

information and the applications that process customer data. Clearly, this

kind of systems thinking is essential to defining holistic customer journeys —

or, in the language of marketing, the friction points between customer-facing

systems and the data that flows between them. Thinking this way raises questions

like why customers need to interface with applications separately and why they

have to enter data multiple times when interacting with these separate

applications — two big sources of customer journey friction. Data limits the

quality of the customer journey at three major points: a company’s sales,

marketing and service processes. According to economist Theodore Levitt, any

sales and marketing processes should focus on the following: “the role of

marketing is creating and keeping the customer.” To create or obtain new

customers, organizations must simplify the processes to become a customer,

regardless of the customer channel chosen. In practice this means integrating

customer-facing systems, so customers enter information only once.

Rust Could Be the Secret to Next-Gen Computing

The team think there are good prospects for using ‘rust’ to create

super-efficient computers. This is because although very simple in architecture,

the Fe2O3-based device where merons and bimerons were found already contains all

the ingredients to manipulate these tiny bits quickly and efficiently – by

flowing a tiny electrical current in an extremely thin metallic ‘overcoat’. In

fact, the team state that controlling and observing the movement of merons and

bimerons in real time is the goal of a future X-ray microscopy experiment,

currently in the planning phase. Moving from basic to applied research means

cost and compatibility considerations are of paramount importance. While iron

oxide is extremely abundant and cheap, the fabrication techniques employed by

researchers at Singapore and Madison are complex and require atomic-scale

control. However, the team are optimistic as they recently demonstrated that it is

possible to ‘peel off’ a thin layer of oxide from its growth medium and stick it

almost anywhere, with its properties being largely unaffected. They say their

next steps will be the design and fabrication of proof-of-principle devices

based on ‘cosmic strings’.

New Opportunities from Tech-Driven Industry Convergence

When we study the evolution of information technology, we find that companies

traditionally leveraged technology solutions to serve specific business

functions within an industry. For example, in life sciences or pharmaceutical

companies, technology solutions were usually grouped by function such as

commercial, R&D, and supply chain. Most answers were explicitly designed for

the specific process and had little scope for portability across sectors.

However, as technologies evolved, solutions have become increasingly broad-based

and sector-agnostic. While cloud and high-tech companies still provide

industry-specific solutions, there is a convergence in the types of problems

they solve for customers across industries. ... As the lines are getting

blurred, we need to rethink our traditional approach to grouping various sectors

when building technology solutions. For instance, all consumer-facing industries

such as CPG, pharma, insurance, and manufacturing are likely to have significant

overlap in the challenges they face. Similarly, healthcare, finance, medical

devices, retail, and telecommunications are likely to find common ground.

Networking software can ease the complexity of multicloud management

Cloud providers offer essential tools in three key areas: security, networking,

and management and orchestration (MANO). Their security capabilities and

controls often must be manually implemented, and their networking requires that

their on-ramps and off-ramps, which providers optimize, be specifically routed.

Each cloud has its own MANO tools for management, visibility, and

automation, which must be configured in order to see and tune

application performance. That means a learning curve and fragmented MANO for

enterprise IT teams that support multicloud environments. These factors combine

to make many IT operations involving IaaS multiclouds difficult to scale and the

task of troubleshooting performance slowdowns tedious and time-consuming. The

leading IaaS providers are building new access capabilities at the edge of their

networks. Key to user experience is network performance, which relies on network

routing to and from the nearest cloud on-ramp. Leveraging WAN network

intelligence is essential to delivering a reliable, high-quality experience

between applications in the public cloud and end-users. Enterprise IT will

require the network intelligence to connect to the best IaaS point of presence

to accelerate application delivery.

The transportation sector needs a standards-driven, industry-wide approach to cybersecurity

We have already witnessed attacks on electronic charging stations via the

Near-Field Communication (NFC) card, which handles billing for EV charging. The

ID cards have inherent vulnerabilities due to third-party providers not securing

customer data. Research has shown malicious individuals can copy these cards and

use them to charge other vehicles. Another concern is related to traditional

lithium-ion batteries, which are used in EVs and have the potential to explode.

While this issue is being addressed by battery suppliers with investment in

R&D, this safety effort must also consider the risk of cyber attacks. If

it’s known that a battery in an EV can explode, this may increase the likelihood

that a bad actor may target this type of car with the intent to cause harm. As

EV battery technology advances, it’s imperative that comprehensive cybersecurity

measures evolve and improve in parallel so automakers and technology providers

can prevent this type of hacking from occurring. As the AV industry advances, so

will the incentives for hackers. There is an increased potential for financial

crimes committed via ransomware attacks. Further, these attacks could cause

vehicles to behave abnormally, potentially endangering human lives.

Quote for the day:

"To accomplish great things, we must not only act, but also dream, not only plan but also believe." -- Anatole France
