5 Trends for Industry 4.0: The Factory of the Future

The growing complexity of machine software as well as the ongoing modularization

of modern production equipment has led to more simulation upfront. The fact that

international travel for commissioning or service has been significantly reduced or

in some cases halted these days reinforces this trend. Functional tests of

production equipment of the future will be performed using comprehensive models

for simulation and virtual commissioning. The factory of the future will be

built twice—first virtually, then physically. Digital representations of

production machines continuously fed with live data from the field will be used

for health monitoring throughout the entire lifetime of the equipment and will

eventually make onsite missions the exception. ... Flexible production in the

factory of the future will require robots and autonomous handling systems to

adapt faster to changing requirements. While classic programming and teaching of

robots aren’t suitable for preparing the system to handle the huge and

fast-growing number of different goods, future handling equipment will

automatically learn through reinforcement learning and other AI techniques. The

prerequisites—massive calculation power and huge amounts of data—have been

established over the past years.

Runtime data no longer has to be vulnerable data

With all of these security advantages, you might think that CISOs would have

quickly moved to protect their applications and data by implementing secure

enclaves. But market adoption has been limited by a number of factors. First,

using the secure enclave protection hardware requires a different instruction

set, and applications must be re-written and recompiled to work. Each of the

different proprietary implementations of enclave-enabling technologies requires

its own re-write. In most cases, enterprise IT organizations can’t afford to

stop and port their applications, and they certainly can’t afford to port them

to four different platforms. In the case of legacy or commercial off-the-shelf

software, rewriting applications is not even an option. While secure enclave

technologies do a great job protecting memory, they don’t cover storage and

network communications – resources upon which most applications depend. Another

limiting factor has been the lack of market awareness. Server vendors and cloud

providers have quickly embraced the new technology, but most IT organizations

may still not know about it.

Liquid Neural Network: What’s A Worm Got To Do With It?

Liquid networks make the model more robust by improving its resilience to

unexpected and noisy data. For instance, they can help algorithms adjust to

heavy rain that obscures a self-driving car’s vision. Liquid networks also make the

algorithm more interpretable. The network can help overcome the machine

learning algorithms’ black-box nature because of the neurons’ expressive

nature. The liquid network has outperformed other state-of-the-art

time series algorithms by a few percentage points in predicting future values in datasets

used in atmospheric chemistry and traffic patterns. Apart from the high

reliability, it also helped reduce computational costs. The researchers were

aiming for fewer but richer nodes in the algorithm. In other words, the study

focused on scaling down the network rather than scaling up. “This is a way

forward for the future of robot control, natural language processing, video

processing — any form of time series data processing,” said Ramin Hasani, the

paper’s lead author. ... Tremendous progress has been made in developing smart

bots that can perform multiple intelligent tasks like work alongside humans or

give mental health advice. However, their adoption presents a significant

concern in terms of safety and ethics.

Virtual Panel: The MicroProfile Influence on Microservices Frameworks

The term cloud-native is still a large gray area and its concept is still

under discussion. If you, for example, read ten articles and books on the

subject, all these materials will describe a different concept. However, what

these concepts have in common is the same objective - get the most out of

technologies within the cloud computing model. MicroProfile popularized this

discussion and created a place for companies and communities to bring

successful and unsuccessful cases. In addition, it promotes good practices

with APIs, such as MicroProfile Config and the third factor of The

Twelve-Factor App. ... The use of reflection by the frameworks has its

trade-offs, for example in application start-up time and memory consumption.

The framework usually invokes the inner class ReflectionData within

Class.java. It is instantiated as type SoftReference, which demands a certain

time to leave the memory. So, I feel that in the future, some frameworks will

generate metadata with reflection and other frameworks will generate this type

of information at compile time, using the Annotation Processing API or similar.

We can see this kind of evolution already happening in CDI Lite, for

example.
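
The third factor of The Twelve-Factor App, mentioned above alongside MicroProfile Config, is to keep configuration in the environment rather than in code. MicroProfile Config itself is a Java API; the Python sketch below only illustrates the general idea and does not reproduce that API. The function name `get_config` and the setting names `SERVICE_URL` and `TIMEOUT_SECONDS` are hypothetical.

```python
import os
from typing import Mapping, Optional


def get_config(name: str, default: Optional[str] = None,
               env: Mapping[str, str] = os.environ) -> str:
    """Look up a setting in the environment, falling back to a default.

    Raises KeyError when the setting is absent and no default is given,
    so a missing required value fails fast instead of being silently ignored.
    """
    value = env.get(name, default)
    if value is None:
        raise KeyError(f"missing required setting: {name}")
    return value


# Hypothetical settings, passed in as a plain mapping so the example
# does not depend on the real process environment.
fake_env = {"SERVICE_URL": "https://example.invalid/api"}

service_url = get_config("SERVICE_URL", env=fake_env)
timeout_seconds = int(get_config("TIMEOUT_SECONDS", default="30", env=fake_env))
```

Because the code reads every setting through one lookup function, the same build can run unchanged in different environments; only the environment variables differ.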

General Availability of the new PnP Framework library for automating SharePoint Online operations

Over time the classic PnP Sites Core has grown into a hard-to-maintain code

base, which made us decide to start a major upgrade effort for all PnP .NET

components. As a result of that, PnP Framework is a slimmed down version of

PnP Sites Core dropping legacy pieces and dropping support for on-premises

SharePoint in favor of improved quality and maintainability. If you’re still

using PnP Sites Core with your on-premises SharePoint then that’s perfectly

fine: we’re not going to pull these components, but you won’t see any updated

versions going forward. PnP Framework is a first milestone in the upgrade of

the PnP .NET components, in parallel we’re building a brand new PnP Core SDK

using modern .NET development techniques focused on performance and quality

(check our test coverage and documentation). Over time we’ll implement more and

more of the PnP Framework functionality in PnP Core SDK and then replace the

internal implementation in PnP Framework. The modern pages API is a good

example: when you use that API in PnP Framework you’re actually using the

implementation done in PnP Core SDK. The picture below gives an overview of our

journey and the road ahead:

Endpoint Detection and Response: How Hackers Have Evolved

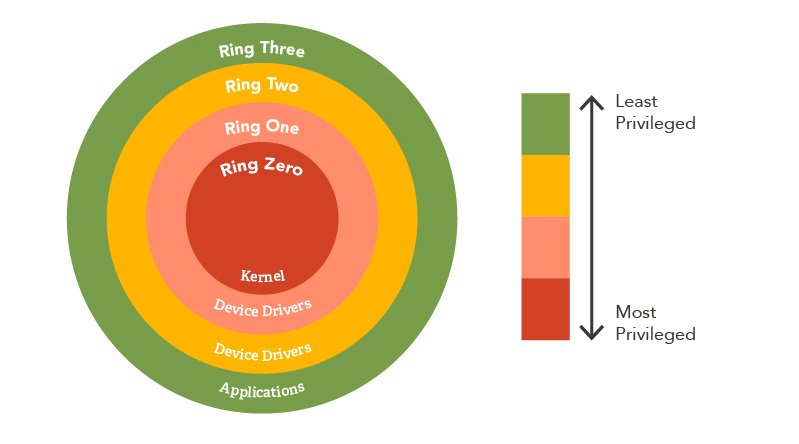

While kernel mode is the most elevated type of access, it does come with

several drawbacks that complicate EDR effectiveness. In kernel mode,

visibility can be quite limited as there are several data points only

available in user mode. Also, third-party kernel-based drivers are often

difficult to develop and if not properly vetted can lead to higher chances of

system instability. The kernel is often regarded as the most fragile part of a

system and any panics or errors in kernel mode code can cause huge problems,

even crashing the system entirely. User mode is often more appealing to

attackers as it has no way of directly accessing the underlying hardware. Code

that runs in user mode must use API functions that interact with the hardware

on behalf of the application, allowing for more stability and fewer

system-wide crashes (as application crashes will not affect the system). As a

result, applications that run in user mode need minimal privileges and are

more stable. Suffice to say, a lot of EDR products rely heavily on user mode

hooks over kernel mode, making things interesting for attackers. Since the

hooks exist in user mode and hook into our processes, we have control over

them. Since applications run within the user’s context, this means everything

that's loaded into our process can be manipulated by the user in some form or

another.

Continuous Delivery: Why You Need It and How to Get Started

For decades, enterprise software providers have focused on delivering large

quarterly releases. "This system is slow because if there are any bugs in such

a large release, developers have to sift through the deployed update in its

entirety to find the problem to patch," said Eric Johnson, executive vice

president of engineering for open-source code collaboration platform provider

GitLab. Enterprises committed to CD rapidly deliver a string of highly

granular releases. "This way, if there are any bugs in a new individual

release they’re easily and swiftly addressed by developers' teams." Most

developers appreciate CD because it helps them deliver higher quality work

while limiting the risk of introducing unwanted change into production

environments. CD ensures that the entire software delivery lifecycle from

source control, to building and testing, to artifact release, and ultimately

deployment into real environments, is automated and consistent, explained

Brent Austin, director of engineering at Liberty Mutual Insurance. High levels

of test automation are critical in CD, allowing developers to

introduce changes quickly, with high confidence and higher quality. "CD also

helps developers think in small batch sizes, which allows for easier and more

effective rollback scenarios when issues are found and makes introducing

change safer," Austin said.

Interview With a Russian Cybercriminal

Interacting with a ransomware operator is "unusual, but not that unusual,"

says Craig Williams, director of outreach for Cisco Talos. Of course, a key

challenge in chatting with a criminal is knowing when to trust them.

Researchers asked many questions they were able to verify, but there were

scenarios in which they felt Aleks wasn't telling the whole story. Williams

says the strongest example of this related to targeting the healthcare

industry. "He pointed out how he didn't target healthcare customers … but then

knew an awful lot about when healthcare paid, and in what situations they

paid, and what type of data they have, and exactly how valuable it would be,

and if they had insurance, they were more likely to pay," he explains. For

example, Aleks reportedly told researchers hospitals pay 80% to 90% of the

time. Aleks seems to choose victims based on their ability to pay quickly,

Williams says, though the report notes the attacker's views may not represent

those of the LockBit group. For example, Aleks says the EU's General Data

Protection Regulation (GDPR) may work in adversaries' favor. Victim companies

are more likely to pay "quickly and quietly" so as to avoid penalties under

GDPR.

The most important skills for successful AI deployments

As AI has bolstered the operations of more and more sectors, it’s become

apparent that knowledge of the technology alone isn’t enough for deployments

to succeed. Whether the AI solution is serving companies or individuals, the

engineers behind the roll-out need to understand the business at hand. “The

company needs people who know the principles of how these algorithms work, and

how to train the machine, but can also understand the business domain and

sector,” said Sanz-Saiz. “Without this understanding, training an algorithm can

be more complex. Any successful data scientist not only needs to bring

technical expertise, but also needs to have domain and sector expertise as

well.” Without sufficient industry knowledge, decision-making can become

inaccurate, and in some cases, such as healthcare, it can also be dangerous.

Companies such as Kheiron Medical have been using an AI solution to transform

cancer screening, accelerating the process and minimising human error. For

this to be effective, careful assessments and evaluations at every stage of

the screening procedure need to be in place. “I think a commitment to clinical

rigour needs to underpin everything that we do,” explained Sarah Kerruish,

chief strategy officer at Kheiron.

Google’s New Approach To AutoML And Why It’s Gaining Traction

AutoML is an automated process of searching for a child program from a search

space to maximise a reward. The researchers broke down the process into a

sequence of symbolic operations: a child program is turned into a

symbolic child program. The symbolic program is further hyperified into a

search space by replacing some of the fixed parts with to-be-determined

specifications. During the search, the search space materialises into

different child programs based on search algorithm decisions. It can also be

rewritten into a super-program to apply complex search algorithms such as

efficient NAS (ENAS). PyGlove is a general symbolic programming library for

Python. Using this library, Python classes, as well as functions, can be made

mutable through brief Python annotations, making it easier to write AutoML

programs. The library also allows AutoML techniques to be quickly dropped into

preexisting machine learning pipelines while benefiting open-ended research

which requires extreme flexibility. PyGlove implements various popular search

algorithms, such as PPO, Regularised Evolution and Random Search.
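
The idea described above, a search space with to-be-determined slots that materialises into concrete child programs according to a search algorithm's decisions, can be sketched in plain Python. This is a minimal illustration under stated assumptions and does not use or reproduce the PyGlove API: the names `OneOf`, `materialise`, `random_search` and `toy_reward` are hypothetical, and the reward is a stand-in for training and evaluating a real model.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class OneOf:
    """A to-be-determined slot: the search picks one of `candidates`."""
    candidates: tuple


def materialise(space, decide):
    """Return a copy of `space` with every OneOf slot replaced by a choice."""
    if isinstance(space, OneOf):
        return decide(space)
    if isinstance(space, dict):
        return {key: materialise(value, decide) for key, value in space.items()}
    if isinstance(space, list):
        return [materialise(value, decide) for value in space]
    return space  # fixed part of the program, left untouched


def random_search(space, reward, trials, seed=0):
    """Sample `trials` child programs at random and keep the best one."""
    rng = random.Random(seed)
    best_child, best_reward = None, float("-inf")
    for _ in range(trials):
        child = materialise(space, lambda slot: rng.choice(slot.candidates))
        score = reward(child)
        if score > best_reward:
            best_child, best_reward = child, score
    return best_child, best_reward


# Hypothetical search space: two open slots and one fixed part.
search_space = {
    "layers": OneOf((1, 2, 3)),
    "units": OneOf((16, 32, 64)),
    "activation": "relu",
}


def toy_reward(child):
    # Stand-in for a real evaluation; prefers layers * units close to 64.
    return -abs(child["layers"] * child["units"] - 64)


best, score = random_search(search_space, toy_reward, trials=50)
```

Keeping the search space, the search algorithm and the reward as three separate pieces is what lets a different algorithm be swapped in without touching the child program definition.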

Quote for the day:

"If you can't handle others' disapproval, then leadership isn't for you." -- Miles Anthony Smith
