Ethical AI in healthcare

In many ways these technologies are going to be shaping us even before we've

answered this question. We'll wake up one morning and realize that we have

been shaped. But maybe there is an opportunity for each of us, in our own

settings and in conversations with our colleagues and at the dinner table, and

with society, more broadly, to ask the question, What are we really working

toward? What would we be willing to give up in order to realize the benefits?

And can we build some consensus around that? How can we, on the one

hand, take advantage of the benefits of AI-enabled technologies and on the

other, ensure that we're continuing to care? What would that world look like?

How can we maintain the reason we came into medicine in the first place,

which is that we care about people? How can we ensure that we don't inadvertently

lose that? The optimistic view is that, by virtue of freeing up time by

moving some tasks off of clinicians’ desks, and moving the clinician away from

the screen, maybe we can create space, and sustain space for caring. The hope

that is often articulated is that AI will free up time, potentially, for what

really matters most. That's the aspiration. But the question we need to ask

ourselves is, What would be the enabling conditions for that to be realized?

Apache Cassandra’s road to the cloud

What makes the goal of open sourcing cloud-native extensions to Cassandra feasible is the

emergence of Kubernetes and related technologies. The fact that all of these

technologies are open source and that Kubernetes has become the de facto

standard for container orchestration has made it thinkable for herds of cats

to converge, at least around a common API. And enterprises' embrace of the cloud

has created demand for something to happen, now. A cloud-native special

interest group has formed within the Apache Cassandra community and is still

at the early stages of scoping out the task; this is not part of the official

Apache project, at least not yet. Of course, the Apache Cassandra community had to

get its own house in order first. As Steven J. Vaughan-Nichols recounted in

his exhaustive post, Apache Cassandra 4.0 is quite definitive, not only in its

feature-completeness, but also in the thoroughness with which it has flushed

out the bugs to make it production-ready. Unlike previous dot zero versions,

when Cassandra 4.0 goes GA, it will be production-ready. The 4.0 release

hardens the platform with faster data streaming, not only to boost replication

performance between clusters, but also to make failover more robust. But 4.0 stopped

short of anything to do with Kubernetes.

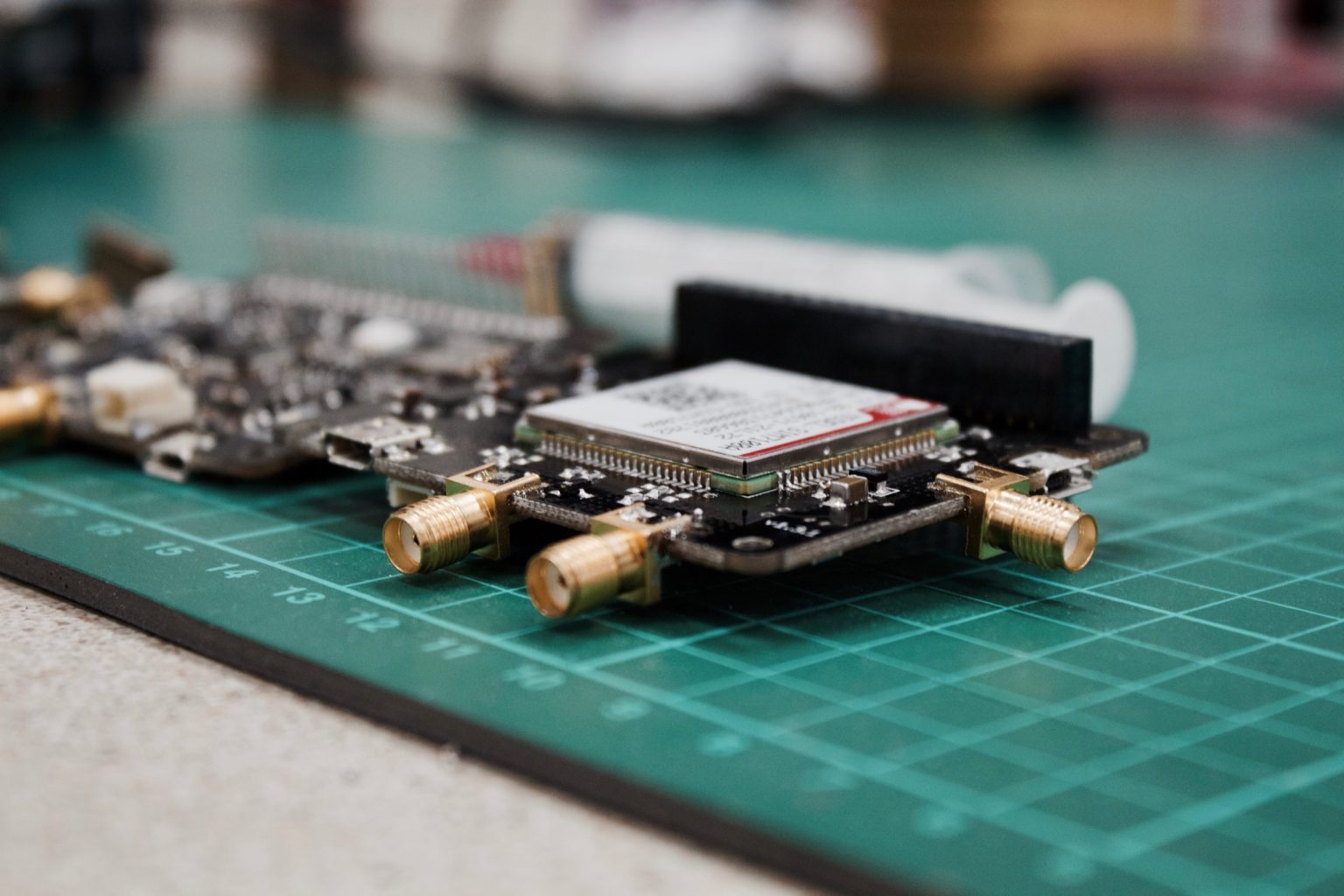

From doorbells to nuclear reactors: why focus on IoT security

An important step in network security for IoT is identifying the company’s

most essential activities and putting protections around them. For

manufacturing companies, the production line is the key process. Essential

machinery must be segmented from other parts of the company’s internet network

such as marketing, sales and accounting. For most companies, just 5 to 10%

of operations are critical. Segmenting these assets is vital for protecting

strategic operations from attacks. One of the greatest risks of the connected

world is that something quite trivial, such as a cheap IoT sensor embedded in

a doorbell or a fish tank, could end up having a huge impact on a business if

it gets into the wrong communication flow and becomes an entry point for a

cyber attack. To address these risks, segmentation should be at the heart of

every company’s connected strategy. That means defining the purpose of every

device and object linked to a network and setting boundaries, so it only

connects to parts of the network that help it serve that purpose. With 5G, a

system known as Network Slicing helps create segmentation. Network Slicing

separates mobile data into different streams. Each stream is isolated from the

next, so watching video could occur on a separate stream to a voice

connection.
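
The segmentation rule described above (define each device's purpose, then let it reach only the parts of the network that serve that purpose) can be sketched as a default-deny allow-list. This is a minimal illustration, not a real firewall or 5G slicing configuration; the device purposes, segment names and the `may_connect` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical mapping from a device's declared purpose to the network
# segments it is allowed to reach. The names are illustrative only.
ALLOWED_SEGMENTS = {
    "production_sensor": {"production_line"},
    "doorbell_camera": {"building_services"},
    "office_laptop": {"marketing", "sales", "accounting"},
}


@dataclass(frozen=True)
class Device:
    name: str
    purpose: str


def may_connect(device: Device, segment: str) -> bool:
    """Default-deny check: unknown purposes and unlisted segments are refused."""
    return segment in ALLOWED_SEGMENTS.get(device.purpose, set())


doorbell = Device("front-door", "doorbell_camera")
unknown = Device("fish-tank-sensor", "unregistered")

print(may_connect(doorbell, "building_services"))  # allowed by its purpose
print(may_connect(doorbell, "production_line"))    # outside its purpose
print(may_connect(unknown, "production_line"))     # no declared purpose
```

The design choice worth noting is the default: a device with no declared purpose, such as the fish-tank sensor here, gets an empty allow-list rather than open access.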

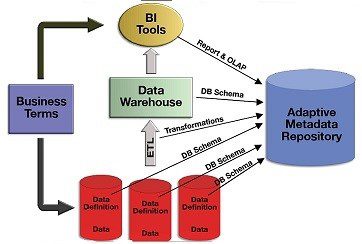

The ABCs of Data Science Algorithms

An organization’s raw data is the cornerstone of any data science strategy.

Companies that have previously invested in big data often benefit from a more

flexible cloud or hybrid IT infrastructure that is ready to deliver on the

promise of predictive models for better decision making. Big data is the

invaluable foundation of a truly data-driven enterprise. In order to deploy AI

solutions, companies should consider building a data lake -- a centralized

repository that allows a business to store structured and unstructured data on

a large scale -- before embarking on a digital transformation roadmap. To

understand the fundamental importance of a solid infrastructure, let’s compare

data to oil. In this scenario, data science serves as the refinery that turns

raw data into valuable information for business. Other technologies --

business intelligence dashboards and reporting tools -- benefit from big data,

but data science is the key to unleashing its true value. AI and machine

learning algorithms reveal correlations and dependencies in business processes

that would otherwise remain hidden in the organization’s collection of raw

data. Ultimately, this actionable insight is like refined oil: It is the fuel

that drives innovation, optimizing resources to make the business more

efficient and profitable.

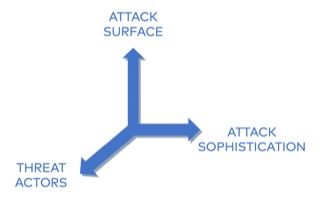

Soon, your brain will be connected to a computer. Can we stop hackers breaking in?

Some of the potential threats to BCIs will be carry-overs from other tech

systems. Malware could cause problems with acquiring data from the brain, as

well as sending signals from the device back to the cortex, either by altering

or exfiltrating the data. Man-in-the-middle attacks could also be recast

for BCIs: attackers could either intercept the data being gathered from the

headset and replace it with their own, or intercept the data being used to

stimulate the user's brain and replace it with an alternative. Hackers could

use methods like these to get BCI users to inadvertently give up sensitive

information, or gather enough data to mimic the neural activity needed to log

into work or personal accounts. Other threats to BCI security will be

unique to brain-computer interfaces. Researchers have identified malicious

external stimuli as one of the most potentially damaging attacks that could be

used on BCIs: feeding in specially crafted stimuli to affect either the users

or the BCI itself in order to extract certain information, for example by showing users

images to gather their reactions to them. Other similar attacks could be

carried out to hijack users' BCI systems, by feeding in fake versions of the

neural inputs causing them to take unintended actions – potentially turning

BCIs into bots, for example.

SaaS: The Dirty Secret No Tech Company Talks About

Digital Strategy In A Time Of Crisis

The priority is to protect employees and ensure business continuity. To

achieve this, it is essential to continue adapting the IT infrastructure

needed for massive remote working and to continue the deployment of the

collaborative digital systems. Beyond these new challenges, the increased

risks related to cybersecurity and the maintenance of IT assets, particularly

the application base, require vigilance. After responding to the emergency,

the project portfolio and the technological agenda must be rethought. This may

involve postponing or freezing projects that do not create short-term value in

the new context. Conversely, it is necessary to strengthen transformation

efforts capable of increasing agility and resilience, in terms of

cybersecurity, advanced data analysis tools, planning, or even optimisation of

the supply chain. The third major line of action in this crucial period

of transition is to tighten human resources management, focusing on the

large-scale deployment of agile methods and the development of sensitive

expertise such as data science, artificial intelligence or cybersecurity. The

war for talent will re-emerge in force when the recovery comes, and it is

therefore important to strengthen the attractiveness of the company.

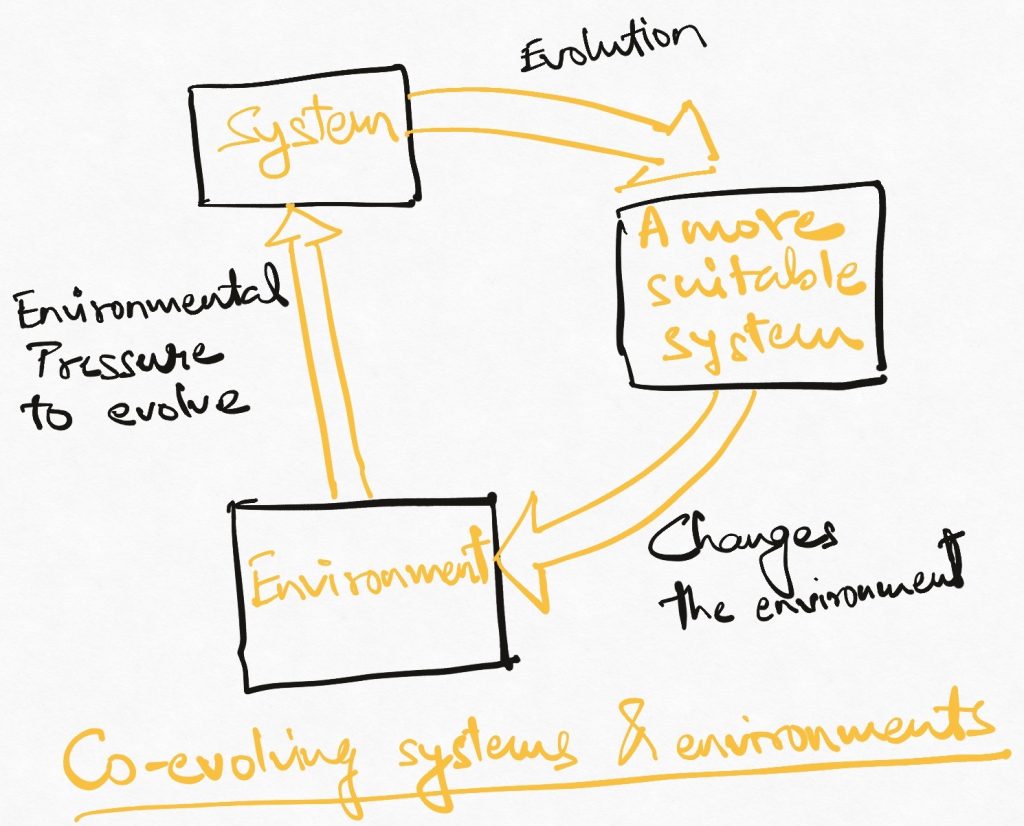

Why ISO 56000 Innovation Management matters to CIOs

The ISO 56000 series presents a new framework for innovation, laying out the

fundamentals, structures and support that ISO leaders say are needed within an

enterprise to create and sustain innovation. More specifically, the series

provides guidance for organizations to understand and respond to changing

conditions, to pursue new opportunities and to apply the knowledge and

creativity of people within the organization and in collaboration with

external interested parties, said Alice de Casanove, chairwoman of the ISO

56000 standard series and innovation director at Airbus. ISO, which started

work on these standards in 2013, started publishing its guidelines last year.

The ISO 56002 guide for Innovation management system and ISO 56003 Tools and

methods for innovation partnership were published in 2019. ISO released its

Innovation management -- Fundamentals and vocabulary in February 2020. Four

additional parts of the series are forthcoming. The committee developed the

innovation standards so that they'd be applicable to organizations of all

types and sizes, de Casanove said. "All leaders want to move from serendipity

to a structured approach to innovation management," she explained.

How plans to automate coding could mean big changes ahead

Known as a "code similarity system", the principle that underpins MISIM is not

new: technologies that try to determine whether a piece of code is similar to

another one already exist, and are widely used by developers to gain insights

from other existing programs. Facebook, for instance, uses a code

recommendation system called Aroma, which, much like auto-text, recommends

extensions for a snippet of code already written by engineers – based on the

assumption that programmers often write code that is similar to that which has

already been written. But most existing systems focus on how code is written

in order to establish similarities with other programs. MISIM, on the other

hand, looks at what a snippet of code intends to do, regardless of the way it

is designed. This means that even if different languages, data structures and

algorithms are used to perform the same computation, MISIM can still establish

similarity. The tool uses a new technology called context-aware semantic

structure (CASS), which lets MISIM interpret code at a higher level – not just

a program's structure, but also its intent. When it is presented with code,

the algorithm translates it into a form that represents what the software does,

rather than how it is written; MISIM then compares the outcome it has found

for the code to that of millions of other programs taken from online

repositories.
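
To make the distinction between how code is written and what it does concrete, the sketch below compares two hypothetical functions that compute the same sum of squares in different styles. It does not implement MISIM or CASS. It only contrasts a text-based similarity score (Python's `difflib`) with a crude behavioural check on a few sample inputs, which here stands in for the idea of comparing intent.

```python
import difflib

# Two hypothetical snippets that perform the same computation in different styles.
SRC_LOOP = '''
def total_loop(values):
    result = 0
    for v in values:
        result += v * v
    return result
'''

SRC_FUNCTIONAL = '''
def total_functional(values):
    return sum(map(lambda v: v ** 2, values))
'''

# Load both snippets so they can be executed as ordinary functions.
namespace = {}
exec(SRC_LOOP, namespace)
exec(SRC_FUNCTIONAL, namespace)
total_loop = namespace["total_loop"]
total_functional = namespace["total_functional"]

# How similar the two snippets look as text (0.0 = nothing shared, 1.0 = identical).
textual_similarity = difflib.SequenceMatcher(None, SRC_LOOP, SRC_FUNCTIONAL).ratio()

# Whether the two snippets agree on a handful of sample inputs.
samples = [[], [3], [1, 2, 3], [-4, 5]]
same_behaviour = all(total_loop(s) == total_functional(s) for s in samples)

print(f"textual similarity: {textual_similarity:.2f}")
print(f"same results on samples: {same_behaviour}")
```

Agreement on sample inputs is only a rough proxy for equivalent intent, but it shows why a system that looks only at surface text can miss that two programs do the same job.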

RPA bots: Messy tech that might upend the software business

Where it gets interesting is that these RPA bots are basically building the

infrastructure for all the other pieces to fit together such as AI, CRM, ERP

and even documents. They believe in the long-heralded walled-garden approach

in which enterprises choose one best-of-breed infrastructure platform like

Salesforce, SAP or Oracle and build everything on top of that. History has

shown that messy sometimes makes more sense. The internet did not develop from

something clean and organized -- it flourished on top of TCP: a messy,

inefficient and bloated protocol. Indeed, back in the early days of the

internet, telecom engineers were working on an organized protocol stack called

Open Systems Interconnection (OSI) that was engineered to be highly efficient. But

then TCP came along as the inelegant alternative that happened to work and,

more important, made it possible to add new devices that no one had planned on

in the beginning. Automation Anywhere's CTO Prince Kohli said other kinds of

messy technologies have followed the same path. After TCP, HTTP came along to

provide a lingua franca for building web pages. Then, web developers started

using it to connect applications using JavaScript Object Notation (JSON).

Quote for the day:
