The Challenges of Building a Reliable Real-Time Event-Driven Ecosystem

Building a dependable event-driven architecture is by no means an easy feat.

There is an entire array of engineering challenges you will have to face and

decisions you will have to make. Among them, protocol fragmentation and

choosing the right subscription model (client-initiated or server-initiated)

for your specific use case are some of the most pressing things you need to

consider. While traditional REST APIs all use HTTP as the transport and

protocol layer, the situation is much more complex when it comes to

event-driven APIs. You can choose between multiple protocols.

Options include the simple webhook, the newer WebSub, popular open protocols

such as WebSockets, MQTT or SSE, or even streaming protocols, such as Kafka.

This diversity can be a double-edged sword—on one hand, you aren’t restricted

to only one protocol; on the other hand, you need to select the best one for

your use case, which adds a layer of engineering complexity.

Besides choosing a protocol, you also have to think about subscription models:

server-initiated (push-based) or client-initiated (pull-based). Note that some

protocols can be used with both models, while some protocols only support one

of the two subscription approaches. Of course, this brings even more

engineering complexity to the table.
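The difference between the two subscription models can be illustrated with a minimal in-memory sketch. It is not tied to any of the protocols named above; the InMemoryBroker class, its method names and the "orders" topic are hypothetical and exist only for illustration. In the push model the broker calls the subscriber as soon as an event is published; in the pull model the client asks for events and keeps track of its own offset.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Event = Dict[str, int]
Handler = Callable[[Event], None]


class InMemoryBroker:
    """Toy broker (hypothetical) that supports both subscription models."""

    def __init__(self) -> None:
        self._log: DefaultDict[str, List[Event]] = defaultdict(list)
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        # Server-initiated (push): register a callback the broker will invoke.
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # Store the event, then push it to every registered subscriber.
        self._log[topic].append(event)
        for handler in self._handlers[topic]:
            handler(event)

    def poll(self, topic: str, offset: int = 0) -> List[Event]:
        # Client-initiated (pull): return events from the given offset onward.
        return self._log[topic][offset:]


broker = InMemoryBroker()

# Push-based consumer: receives every event without asking for it.
pushed: List[Event] = []
broker.subscribe("orders", pushed.append)

broker.publish("orders", {"id": 1})
broker.publish("orders", {"id": 2})

# Pull-based consumer: asks for events and advances its own offset.
offset = 0
pulled = broker.poll("orders", offset)
offset += len(pulled)

broker.publish("orders", {"id": 3})
pulled_later = broker.poll("orders", offset)
```

In this sketch the push consumer ends up with all three events without issuing a request, while the pull consumer only sees the third event once it polls again. That trade-off between delivery latency and client control is what the choice of subscription model comes down to.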

Successful Digital Transformation Requires a Dual-track Approach

This first part of the dual-track approach focuses on the identification and

implementation of new digital tech throughout an organization, while also

working to change cultures and business workflows impacted by the

transformation, according to the report. While this step is critical, it

is also complex and time-consuming. The benefits may take time to come to

fruition, which is why many executives are dissatisfied with current

transformation results. Not only are executives impatient, but they don't have

the second part of the dual-track to get them by, the report found. The

second portion is a parallel track that homes in on areas overlooked in

large-scale transformation tactics. These areas include the organization's

ability to quickly connect and modernize hundreds of crucial processes that

cross both business workflows and work groups, according to the report. This

goal can be achieved through rapid-cycle innovation, which encourages business

professionals outside of IT to propose and create new apps for updating

existing workflow processes, with the goal of achieving quick wins for the

company and supporting long-term transformation, the report found.

How deploying new-age technologies has changed the role of leadership amid COVID-19

Circumstances created by a pandemic such as COVID-19 have been hugely

disruptive and could even render organizations paralyzed, if they are far

removed from any understanding of how technology is an imperative and not an

optional add-on. This is why it is critical to have a proactive mindset to

technology, instead of a reactive approach. Proactive investment in technology

is helping organizations reap maximum benefits as this approach allows leaders

to prepare their people to embrace and become comfortable in using technology,

so that it becomes spontaneously embedded in an organization at a fundamental

level. The investments we proactively made many years ago, whether in secure

virtual platforms or AI-driven due diligence processes that help automate how

we finalize our contracts, have helped us seamlessly adapt to working with

minimum disruption. The biggest asset has been the spontaneous comfort level

of our people in adapting to this transformed scenario of working from home,

due to their prior high degree of familiarity with using technology platforms

and processes at work over the past few years, ensuring our ability to

optimize productivity.

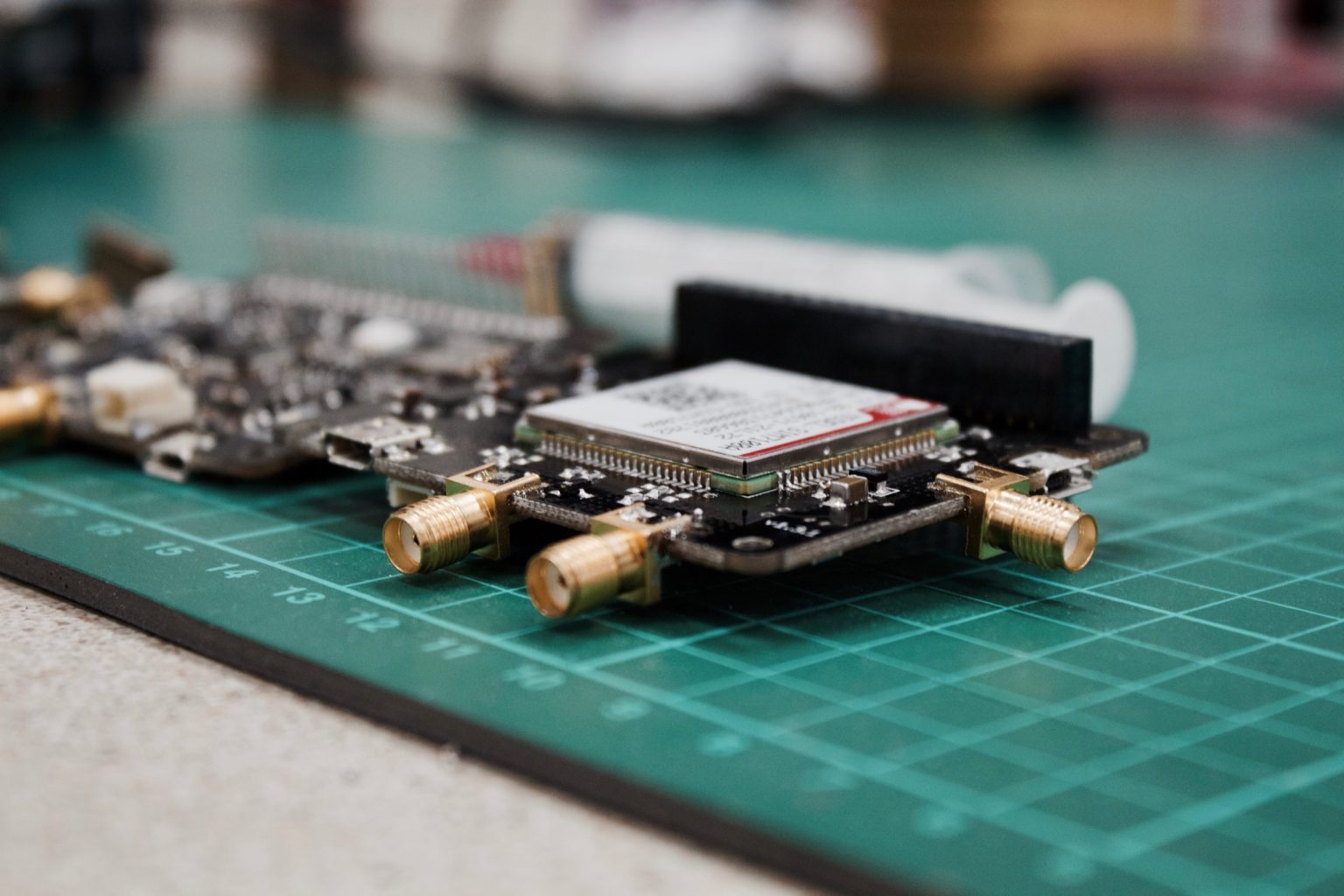

Anatomy of a Breach: Criminal Data Brokers Hit Dave

At the moment, however, some evidence points to ShinyHunters having phished Dave employees. The group has previously advertised - and has been suspected of being behind - the sale of millions of stolen records obtained from Indonesian e-commerce firm Tokopedia, Indian online learning platform Unacademy, Chicago-based meal delivery outfit HomeChef, online printing and photo store ChatBooks, university news site Chronicle.com, as well as Microsoft's private GitHub repositories, according to Baltimore-based security firm ZeroFox. How does ShinyHunters steal so much data? Cyble says that in a post to a hacking forum, a user called "Sheep" says of the Dave breach: "This database was dumped through sending GitHub phishing emails to Dave.com employees. The employees were found by searching for developers in the organization on LinkedIn/Crunchbase/Angel. All of the databases sold by ShinyHunters were obtained through this method. In some cases, [the] same method was used but for GitLab, Slack and Bitbucket."

IoT Security: How to Search for Vulnerable Connected Devices

Researchers offer many tools and ways to search for hacker-friendly IoT

devices. The most effective methods have already been tested by botnet

creators. In general, the use of certain vulnerabilities by botnets is the

most reliable criterion for assessing the level of security of IoT devices and

the possibilities of their mass exploitation. Searching for vulnerabilities,

some attackers rely on the firmware (in particular, those errors that were

discovered during firmware analysis using reverse engineering methods). Other

attackers start looking for vulnerabilities by searching for the manufacturer’s

name. In any case, for a successful search, some kind of a distinctive

feature of a vulnerable device is needed, and it would be nice to find several

such features. ... There are many vulnerabilities in IoT devices, but

not all of them are easy to exploit. Some vulnerabilities require a physical

connection, being near or on the same local network. The use of others is

complicated by quick security patches. On the other hand, manufacturers are in

no hurry to patch firmware and often admit it. Getting an accurate list

of vulnerable IoT devices will require significant effort; it is not just a

one-time query.

Security: This nasty surprise could be waiting for retailers when they open up again

"A lot of retailers, when they come back online, they're going to be focused

on business processes and getting employees back to work. They're not

necessarily thinking, 'maybe I need to update Windows on my computer

terminal', or update POS terminal firmware." In retail, where surges in online

transactions during the pandemic have forced retailers to quickly transform

their ecommerce capabilities, hackers have shifted their focus to make the

most of this opportunity. This includes changing up well-known types of

attacks by using them in different ways, such as exploiting credit cards

within a different type of merchant platform, and targeting parts of

retailers' systems that might otherwise slip through the cracks. We've already

seen new forms of attacks on retailers take place during the pandemic. In late

June, researchers at security software firm Malwarebytes identified a new

web-skimming attack, whereby cybercriminals concealed malware on ecommerce

sites that would steal information typed into the payment input fields,

including customers' names, addresses and card details.

Finland government funds work on potential quantum leap

The Finnish government has allocated €20.7m to the venture, which will be run

as an innovation partnership open to international bidding. Closer to home,

VTT-TRCF plans to cooperate with Finnish companies across the IT and

industrial sphere during the various phases of the project’s implementation

and application. The rapid advances in quantum technology and computing have

the potential to provide societies with the tools to overcome major future

problems and challenges, such as the Covid-19 pandemic, that remain out of the

reach of contemporary supercomputers. Quantum technologies have the potential

to complete complex calculations, which currently take days to do, orders of

magnitude quicker. By making calculations that traditional computers are

fundamentally unable to do, they would, if practical, mark a leap forward in

computing capability far greater than that from the abacus to a modern

computer. Antti Vasara, the CEO of VTT-TRCF, said: “The quantum computers

of the future will be able to accurately model viruses and pharmaceuticals, or

design new materials in a way that is impossible with traditional methods.”

What the CCPA means for content security

Simply installing an ECM system will not yield a secure content ecosystem. If

there is one thing that all ECM experts agree on, it's that installing an ECM

system will accomplish nothing aside from consuming resources. People need to

use the system to manage content -- and want to use it -- even after setting

up the necessary security controls to meet the requirements of the CCPA.

Deploying an ECM system that is so secure that people do not want to use it is

a waste of resources. The ECM system does not need to be complicated. Setting

up a secure desktop sync of content is an important first step in ease of use

and adoption. Instead of just rolling it out, companies need to work with each

group using the software first. The business must help users organize their

content and set up a basic structure for storing content so that the system

doesn't become disorganized. Depending on the system that a business is using,

setting up a basic structure may include a basic taxonomy, content types,

standard metadata or a combination of any of these. If a business implements

its ECM system correctly, its largest challenge will be securing mobile

devices and laptops.

How blockchain could play a relevant role in putting Covid-19 behind us

Covid-19 has revealed the weaknesses of global supply chains with countless

reports of PPE issues, a lack of food in impoverished areas, and a breakdown

of business-as-normal, even in places where demand has remained constant.

Trust has always been the keystone of trade. But how can you trust supply

chain partners to deliver in times of widespread failure? Owing to its

decentralised nature, blockchain-based applications create a transparent

ecosystem where you trust — and see — that the mechanisms in place are fair to

all. It can provide instant overviews of entire supply chains to highlight

issues as soon as they arise. What’s more, it is possible to implement live

failsafes with smart contracts that can ensure the smooth continuation of the

supply chain and remove the very need for trust in the first place. To this

end, the World Economic Forum developed the Blockchain Deployment Toolkit, a

set of high-level guidelines to help companies implement best practices across

blockchain projects – especially those helping solve supply chain issues. They

worked with more than 100 organisations for more than a year, delving into 40

different blockchain use cases, including traceability and automation, to help

guide organisations in their efforts to solve real-world problems with

blockchain.

The growing trend of digitization in commercial banking

“Technology has absolutely been at the forefront of all the changes we have

seen and will see in upcoming years,” explained Rao. Even so, the business of

banking has not changed on a fundamental level. Rather, products have become

more commoditized; similar business products are being offered, but customers

are using them in different ways. In Rao’s words, “the ‘what’ component has

not changed, but the ‘how’ has.” This is where digitization has had the

biggest impact. For example, commercial banking capabilities like making a

payment or collecting a receivable have long been available for corporate

entities. But today, the same capability can be offered in a way that

emphasizes a great user experience—something that hasn’t always been a focal

area in the commercial banking space. ... Large traditional banks are

frequently riddled with outdated legacy systems on the back end of operations,

which dilutes their offerings even with modern digital technology at the front

end. These legacy systems make it costly to create the ideal customer

experience, leading many banks to focus on implementing strategies that pave

the path towards modernization. In certain cases, this means opening up and

modernizing selective pieces of back-end systems to improve operations

overall.

Quote for the day: