Stay Ahead of the 5G and DevOps Race with Continuous Network Monitoring

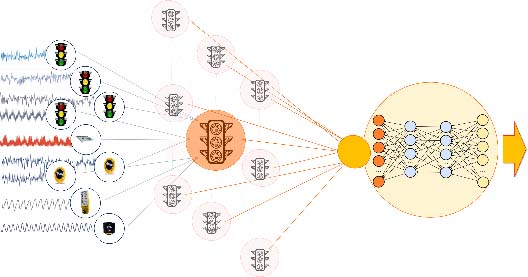

Automobiles aside, another industry that benefits from being proactive rather than reactive is telecommunications. Not only does the telecoms world require routine checks and maintenance, but it also needs to identify problems before they cause larger issues or disruptions. Networks are evolving rapidly, and this will continue as 5G deployments expand, as will the need for regularly scheduled maintenance and examinations. DevOps, a set of procedures that automates the processes between software development (Dev) and IT operations (Ops), along with continuous delivery (CD), allows for a level of agility that enables new features and services to be deployed within weeks or days. There are four stages of establishing these services (design, deploy, test and operate), all of which demand a constant pace and network monitoring. To maximize DevOps and CD, including the speed benefits that come with both, predictive network monitoring (PNM) is vital.

Deploying Edge Cloud Solutions Without Sacrificing Security

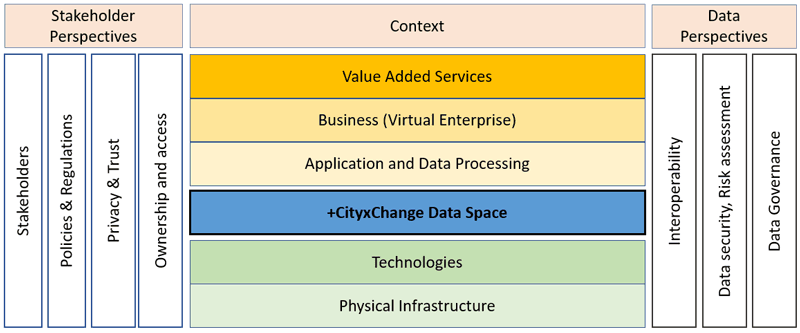

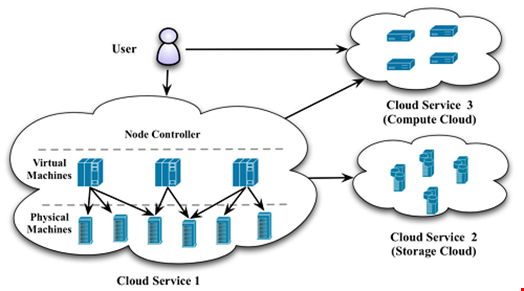

First, let's think about the structure of edge cloud systems. In most implementations, edges are within organizations' computing boundaries, and so they will be protected by a wide variety of tools that focus on perimeter scanning and intrusion detection. However, that's not quite the whole story: in most systems, there will also be a tunnel from the edge straight to cloud storage. Sending data from the edge to the cloud in a secure way is fairly straightforward, because organizations will control the infrastructure that is used to encrypt and verify it. The problem arises when the cloud needs to send data back to the edge for processing. The challenge here is to ensure that this data is authenticated and verified, and is therefore safe to enter an organization's internal systems. First, and most obviously, edge cloud systems fragment data. Having each device connected directly to cloud services might incur a performance loss, but at least this data is centralized, and can be covered by a single cloud security policy. Because edge cloud servers – almost by definition – need to be connected to many different devices, they represent a nightmare when it comes to securing these same connections.
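As a rough illustration of what "authenticated and verified" can mean for data travelling from the cloud back to the edge, here is a minimal Python sketch using an HMAC tag. It is an assumption for illustration, not something the article prescribes: the shared key, the `sign` and `verify` helpers, and the choice of HMAC-SHA256 are all hypothetical, and a real deployment might rely on asymmetric signatures or mutual TLS instead.

```python
import hashlib
import hmac

# Hypothetical shared secret; in practice it would come from a key store,
# not from source code.
SHARED_KEY = b"example-shared-secret"


def sign(payload: bytes, key: bytes = SHARED_KEY) -> str:
    """Return the hex HMAC-SHA256 tag the cloud side attaches to a payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, tag: str, key: bytes = SHARED_KEY) -> bool:
    """Check the tag at the edge before the payload enters internal systems."""
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)
```

An edge node would call `verify(payload, tag)` on each inbound message and drop anything that fails, so tampered or unsigned data never reaches internal processing.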

DDoS in the Time of COVID-19: Attacks and Raids

Unfortunately, or fortunately, cyber security is an essential business. As a result, those working in the field are not getting to experience any downtime during a quarantine. Many of us have been working around the clock, fighting off waves of attacks and helping other essential businesses adjust to a remote work force as the global environments change. Along the way we have learned a few things about how a modern society deals with a pandemic. Obviously, a global Shelter-in-Place resulted in an unanticipated surge in traffic. As lockdowns began in China and worked their way west, we began to see massive spikes in streaming and gaming services. These unanticipated surges in traffic required digital content providers to throttle or downgrade streaming services across Europe, to prevent networks from overloading. The COVID-19 pandemic also highlights the importance of service availability during a global crisis. Due to the forced digitalization of the work force and a global Shelter-in-Place, the world became heavily dependent on a number of digital services during isolation. Degradation or an outage impacting these services during the pandemic could quickly spark speculation and/or panic.

Governing by data: Limits and opportunities

Healthcare is perhaps the most obvious area of public service for the adoption of data analysis, given that medical science is largely built on this. The UK government has been led by data and science in reacting to the coronavirus epidemic over recent weeks, making a celebrity out of the UK’s chief medical officer Chris Whitty. But politics can trump data analysis. David Nutt, professor of neuropsychopharmacology at Imperial College London, was sacked as the government’s chief advisor on drugs in 2009 after saying policy in this area was not based on evidence. Nutt’s research found that legal alcohol was more harmful to society than illegal drugs, although heroin was rated as causing the greatest harm to individuals. “The logical conclusion is, if government drugs policy is about harms, alcohol should be the primary focus,” Nutt writes in his new book Drink? The new science of alcohol and your health. “But for political reasons, this evidence has been ignored.”

IT directors plan to protect cloud budgets and consolidate vendors during downturn

According to the survey, agile delivery and cloud cost optimization are the most important priorities for tech leaders at the moment. IT managers will be using these tools to respond more quickly to customer demands and increase fiscal discipline. Agile and DevOps practices will drive faster software releases with lower failure rates and quicker recovery from incidents. IT leaders also need to pay attention to internal customers. The report recommends that teams move from reactive infrastructure management to proactive support of digital transformation efforts by working closely with business owners, developers, product managers, and tech partners. The financial crunch due to the coronavirus will motivate financial teams to track down redundant, unused, and underused cloud services and turn them off. IT managers also reported that they will analyze workloads and identify the right pricing models (on-demand, spot, or reserved) to maximize savings. The survey also found that the gap between public cloud platform providers is closing, with Google Cloud, Amazon Web Services, and Microsoft Azure each getting an equal share of votes as a preferred cloud provider. Tech leaders are looking for providers that can deliver on business needs

The Bootstrap 4 Grid Deconstructed

While upgrading my skillset and implementing an Angular-based website, I again looked at the Bootstrap Grid system and decided to deep-dive into it and see what makes it work. I'll be using my original article as a kind of template for the structure of this article and will sometimes reference it for things explained there. I will also assume a basic knowledge of HTML and CSS: that you know what a <div>, <span>, etc. are, that you know about CSS inheritance rules, ... I also assume you have read the article about the Bootstrap 3 grid system so you are familiar with responsive breakpoints and the like. ... The Grid: It's Still All About Rows and Columns. Nothing has changed here: we still need to define a container with rows, which in turn contain columns. However, where in the Bootstrap 3 grid you always had to specify the width of your columns and make them add up to a total of 12, this is no longer true for the Bootstrap 4 grid. The Bootstrap 4 grid defines a simple col class which allows you to evenly spread your columns over the width of your page while taking up as much space as necessary for the content to match the column.
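To make the difference between sized columns and the plain col class concrete, here is a small Python model of how a row's width could be split. It is a simplified sketch under stated assumptions, not Bootstrap's actual implementation: the `column_widths` function is hypothetical, and it ignores gutters, wrapping, breakpoints and content-driven sizing.

```python
from typing import List, Optional

GRID_UNITS = 12  # the grid divides a row into 12 units


def column_widths(columns: List[Optional[int]]) -> List[float]:
    """Return each column's width as a percentage of the row.

    An int n models a sized class such as col-4 (n/12 of the row);
    None models the plain col class, which shares the leftover space evenly.
    """
    fixed = sum(n for n in columns if n is not None)
    auto_count = sum(1 for n in columns if n is None)
    remaining = max(GRID_UNITS - fixed, 0)
    auto_share = remaining / auto_count if auto_count else 0.0
    return [
        100.0 * (n if n is not None else auto_share) / GRID_UNITS
        for n in columns
    ]
```

For example, `column_widths([6, None, None])` models one col-6 column followed by two plain col columns: the first takes half the row and the other two split the remainder equally.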

USB-C power for laptops is still complicated - and here's why

The problem is that while USB-C can support any and all of those, what actually works is down to the capabilities of the port and of the cable itself (more specifically, the control chips at either end of the cable). Some laptops have one USB-C port that supports the PD (Power Delivery) standard and one that doesn't, because that way you can use a cheaper controller chip and only have to route the power down one path on the motherboard. Different protocols have different licensing requirements, so not every cable supports Thunderbolt. And you need specific controller chips in the cable to support PD. That's why the UNO interchangeable cable we looked at recently didn't support PD, making it an almost, but not quite, universal cable. The £46/$55 Infinity Cable (also from Chargeasap) has some nice tweaks: a cord wrap; a smaller, less bright LED on the cable so you know when power is flowing but you don't get dazzled by your phone cable at night; and the 15-year warranty that presumably inspired the name. But the big change is that it supports PD up to 100W. The Infinity cable has USB-C on one end, with an optional ($5) USB-A adapter for when you need to use an older port; the other end is a magnet with interchangeable connectors for USB-C, Micro-USB and Lightning. The magnets are strong -- get the tip close to the cable and it snaps on securely, but if you yank on the cable the tip will come off before you pull your device off the table.

The Internet Only Works During A Pandemic Because We Killed Net Neutrality

In fact, networks in China and Italy, like here in the States, have (with a few exceptions) held up reasonably well under the massive load of telecommuting and home learning. Not because of net neutrality policy, but because network engineers are generally good at their jobs. While there have been some network problems, they're usually of the "last mile" variety in both the EU and US. As in, your ISP never upgraded that "last mile" to your house, so you're still stuck on a DSL line from around 2007 that struggles to handle Zoom teleconferencing. But most core networks around the world have held up rather admirably. The claim that the EU was suffering some kind of exceptional congestion problem appears to have originated among some EU regulators who simply urged Netflix to reduce bandwidth consumption by 25% to pre-emptively help lighten the load. There was no supporting public evidence provided of actual harm. The move was precautionary.

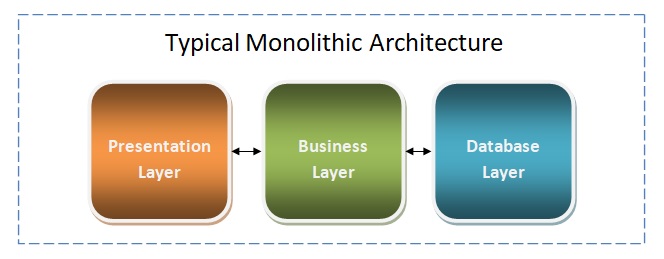

How to overcome application modernisation barriers

“We’re talking about IT estates that have grown up over the past 30 to 40 years, and you find that many of these organisations have not invested in technology over time,” he says, adding that a lack of integrations between these applications is a major barrier to building agile, modern application portfolios. Like Mendix’s Ford, Fairclough recommends modernisation projects are divided into “prioritised chunks”, which he says enables IT teams to tackle the most important things first. “Maybe there are some things that you don't even need to tackle, so actually you segment and decide that we can run those IT systems over there for another few years and then just retire them,” he says. Describing a challenging modernisation project he worked on, Fairclough says the amount of work required to complete the project had been “totally underestimated”. He says the project involved an IT estate of more than 500 applications, which meant the customer did not understand how everything was connected. As a consequence, project costs were pushed up “exponentially”.

Failover Conf Q&A on Building Reliable Systems: People, Process, and Practice

The biggest challenge associated with the topic of reliability is knowing where to invest your time and energies. We’re never ‘done’ making a system reliable, so how do we know what components are most critical? Where will we get the highest ROI? Furthermore, how do we decide that a system is reliable enough? To answer that last question, set recovery time and recovery point objectives (RTOs and RPOs) and let yourself be guided by them. Based on those metrics, decide where you should be investing your time. To decide where to start improving the overall reliability of your system, you need to know how all of the components interact, and identify the most critical components and bottlenecks. You can spend all of your time making a database reliable, but that won’t matter if it sits behind a heavily used but unreliable caching layer. Dependency graphs are great for visualising how the components of your service fit together and will allow you to identify the places where you will reap the biggest reliability rewards. The challenge here is that dependency graphs get stale ridiculously quickly unless they are automated.
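One way to keep a dependency graph from going stale is to hold it as data and compute the critical components from it. The Python sketch below is a minimal illustration under assumptions: the service names in `DEPENDS_ON` and both helper functions are hypothetical, and "most critical" is approximated as "has the most direct or indirect dependents", which is only one possible measure.

```python
from typing import Dict, List, Set

# Hypothetical service map: each key depends on the services listed.
DEPENDS_ON: Dict[str, List[str]] = {
    "web": ["api"],
    "api": ["cache", "auth"],
    "cache": ["database"],
    "auth": ["database"],
    "database": [],
}


def transitive_dependents(graph: Dict[str, List[str]]) -> Dict[str, Set[str]]:
    """Map each component to everything that directly or indirectly needs it."""
    dependents: Dict[str, Set[str]] = {name: set() for name in graph}

    def visit(origin: str, node: str, seen: Set[str]) -> None:
        for dep in graph.get(node, []):
            if dep not in seen:  # guards against cycles and repeat visits
                seen.add(dep)
                dependents.setdefault(dep, set()).add(origin)
                visit(origin, dep, seen)

    for name in graph:
        visit(name, name, {name})
    return dependents


def rank_by_blast_radius(graph: Dict[str, List[str]]) -> List[str]:
    """Order components by how many others fail if they fail (ties by name)."""
    dependents = transitive_dependents(graph)
    return sorted(dependents, key=lambda n: (-len(dependents[n]), n))
```

With the sample map, `rank_by_blast_radius(DEPENDS_ON)` puts `database` first because every other service ultimately depends on it. In practice the map would be generated from service discovery or tracing data rather than written by hand.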

Quote for the day:

"When you can't make them see the light, make them feel the heat." - Ronald Reagan