India’s new Software Products Policy marks a Watershed Moment in its Economic History

It is in this light that the recently rolled out National Software Products Policy (#NSPS) by the Ministry of Electronics & IT (MeitY), Government of India marks a watershed moment. For the very first time, India has officially recognised the fact that software products (as a category) are distinct from software services and need separate treatment. So dominated was the Indian tech sector by outsourcing & IT services that “products” never got the attention they deserve – as a result, that industry never blossomed and was relegated to a tertiary role. Remember that quote – “What can’t be measured, can’t be improved; And what can’t be defined, can’t be measured”. The software policy is in many ways a recognition of this gaping chasm and marks the state’s stated intent to correct it by defining, measuring and improving the product ecosystem. Its rollout is the culmination of a long period of public discussions and deliberations in which the government engaged with industry stakeholders, Indian companies, multinationals, startups, trade bodies, etc. to forge it.

How Lessons from Production Adoption Resulted in a Rewrite of the Service Mesh

Linkerd is an open-source service mesh and Cloud Native Computing Foundation member project. First launched in 2016, it currently powers the production architecture of companies around the globe, from startups like Strava and Planet Labs to large enterprises like Comcast, Expedia, Ask, and Chase Bank. Linkerd provides observability, reliability, and security features for microservice applications. Crucially, it provides this functionality at the platform layer. This means that Linkerd’s features are uniformly available across all services, regardless of implementation or deployment, and are provided to platform owners in a way that’s largely independent of the roadmaps or technical choices of the developer teams. For example, Linkerd can add TLS to connections between services and allow the platform owner to configure the way that certificates are generated, shared, and validated without needing to insert TLS-related work into the roadmaps of the developer teams for each service.

How to get your company’s people invested in transformation

Transformation, driven by new industrial platforms, geopolitical shifts, global competition, and changing consumer demand, is front-page news because it moves share prices, tests leadership ability and mettle, and creates new business models that change how whole sectors operate. But we rarely talk about the people who live through and help drive these often-wrenching changes. Can a global company successfully transform without bringing along its 30,000 employees? I doubt it. The human dimension is profoundly important. But too often, it’s forgotten or under-recognized in the rush to restructure or launch initiatives. Leaders who can engage emotionally with employees and humanize change initiatives by creating inspiration and innovation are most likely to succeed. This may sound obvious, but it is a challenge for Type A leaders who overly emphasize process, effort, and control. Transformational change often requires leaders to adopt an “antihero” style, characterized by empathy, humility, self-awareness, flexibility, and an ability to acknowledge uncertainty.

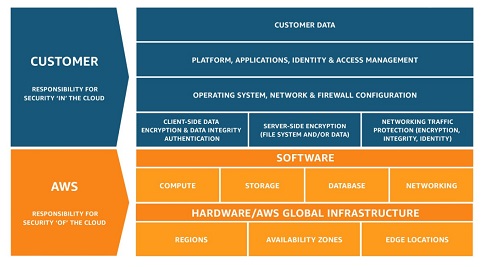

Secure Your Migration to the Cloud

Having a clearly defined and enforceable data lifecycle strategy, ensuring data is protected in transit and at rest, is one of the most important aspects of any cloud migration. You need to understand what sensitive data you are migrating and leverage the tools and processes to keep it protected, including cloud access security brokers (CASB). A cloud access security broker, according to Gartner, “is an on-premises or cloud-based security policy enforcement point that is placed between cloud service consumers and cloud service providers to combine and interject enterprise security policies as cloud-based resources are accessed.” CASBs are powerful tools because they give you a centralized view of all your cloud resources. Many IT teams that deploy a CASB for the first time realize that there are many cloud resources in use that they were previously unaware of, some of which may be placing sensitive data at risk. By using CASBs and other tools, you can regain visibility into where data resides and apply the proper safeguards to keep it protected.

The Matrix at 20: A Metaphor for Today's Cybersecurity Challenges

Shape-shifting is core to the movie's plot. "Agents," Neo's sinister enemies, take over the bodies of innocent bystanders in their relentless pursuit of Neo and his crew. The cybersecurity analogy here is an advanced persistent threat (APT) group utilizing stolen credentials to gain a foothold into an organization — one of the most pernicious elements facing today's enterprise. Modern breaches often involve malicious APT-like agents gaining access to an employee's credentials in order to achieve their goal. This usually happens as a result of spearphishing attempts, enabling attackers to steal customer data, intellectual property, or financial and banking data. Just as Neo stays vigilant in looking for constant threats, CISOs fight the epidemic of stolen credentials with proactive risk-based authentication techniques that stop attackers from even obtaining a foothold in the first place. The key in both situations is having visibility into attacker behavior. As Neo begins his journey to the "real world," a jaded crew member, Cypher, asks, "Why, oh why, didn't I take the blue pill?"

Bolster enterprise application support from dev to deployment

Declarative models help teams discover how code has deviated from the declared goal state. Use the diff feature in these tools -- some examples include kubectl diff for Kubernetes and the --diff option in Ansible playbooks -- to compare current and goal-state conditions and alert you to differences. Jenkins is a common automation server to integrate CI/CD with a repository, with competitors such as Integrity, GoCD or GitLab CI/CD. When choosing a tool, ensure that it will integrate with your organization's repository to maintain that single source of truth. There are broad management toolkits available for IT organizations that don't create tool integrations internally. For example, Weaveworks offers an integrated tool and a GitOps-centric distribution of Kubernetes, and it recommends and supports tool integrations for the latter. Diamanti and Rancher Labs have similar container platform capabilities. Start a GitOps approach to enterprise application support with a review of the components and capabilities of a managed toolkit, then weigh the benefits of a single-source option or put together a collection of tools to meet your specific requirements.
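The idea of comparing current and goal-state conditions can be illustrated with a minimal Python sketch. This is not how `kubectl diff` or Ansible's `--diff` work internally; the function `diff_state` and the sample `goal_state` and `current_state` dictionaries are hypothetical, chosen only to show what a drift report might look like.

```python
from typing import Any


def diff_state(goal: dict[str, Any], current: dict[str, Any], prefix: str = "") -> list[str]:
    """Return human-readable differences between a goal state and the current state."""
    changes: list[str] = []
    # Walk every key that appears in either state, in a stable order.
    for key in sorted(goal.keys() | current.keys()):
        path = f"{prefix}.{key}" if prefix else key
        if key not in current:
            changes.append(f"+ {path}: {goal[key]!r} (missing from current state)")
        elif key not in goal:
            changes.append(f"- {path}: {current[key]!r} (not declared in goal state)")
        elif isinstance(goal[key], dict) and isinstance(current[key], dict):
            # Recurse into nested settings so the report names the exact field.
            changes.extend(diff_state(goal[key], current[key], path))
        elif goal[key] != current[key]:
            changes.append(f"~ {path}: {current[key]!r} -> {goal[key]!r}")
    return changes


# Hypothetical declared (goal) state, as it might be stored in a repository.
goal_state = {"replicas": 3, "image": "web:1.4", "env": {"LOG_LEVEL": "info"}}
# Hypothetical observed state of the running system.
current_state = {
    "replicas": 2,
    "image": "web:1.4",
    "env": {"LOG_LEVEL": "debug"},
    "debug_port": 9229,
}

drift = diff_state(goal_state, current_state)
for line in drift:
    print(line)
```

An empty result means the running system matches the declared state; any non-empty result could be surfaced as an alert or used to trigger reconciliation.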

AI pioneer: ‘The dangers of abuse are very real’

Killer drones are a big concern. There is a moral question, and a security question. Another example is surveillance — which you could argue has potential positive benefits. But the dangers of abuse, especially by authoritarian governments, are very real. Essentially, AI is a tool that can be used by those in power to keep that power, and to increase it. Another issue is that AI can amplify discrimination and biases, such as gender or racial discrimination, because those are present in the data the technology is trained on, reflecting people’s behaviour. ... Deep learning, as it is now, has made huge progress in perception, but it hasn’t delivered yet on systems that can discover high-level representations — the kind of concepts we use in language. Humans are able to use those high-level concepts to generalize in powerful ways. That’s something that even babies can do, but machine learning is very bad at.

Reliance Jio’s latest acquisition is a $100M bet on the future of internet users in India

Jio’s aggressive data plan strategy, which started with free voice calls and free 4G data, disrupted India’s telecom market and forced the incumbents to move quicker and reduce prices — mobile data is reportedly now cheaper in India than anywhere else on the planet. It was, of course, a huge hit with consumers. The operator has consistently led on 4G subscriber numbers and it is ranked third overall with over 280 million customers, or around 23 percent market share. Clearly, keeping up with what’s next is a critical part of its plan to grow bigger still. Vaish said Haptik wasn’t under pressure to sell but the team found an “ideal match in terms of philosophy” with Jio, which is also exploring alternative ways to enable consumers to interact with its devices and service. The company has a ‘Hello Jio’ assistant on its devices, and Haptik may help it further its strategy in the future although Vaish said that hasn’t been nailed down at this point. Jio is allowing Haptik to continue to work with customers because, at this point, enterprise services are the “only proven business” for conversational platforms, Vaish said.

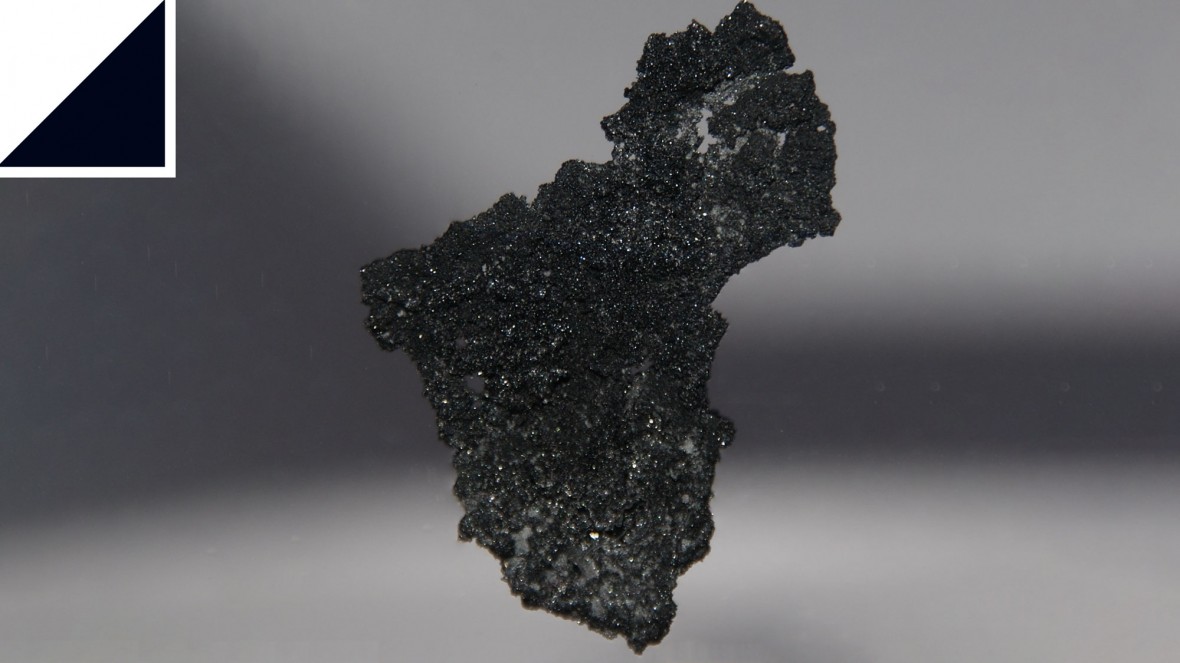

Sorry, graphene—borophene is the new wonder material that’s got everyone excited

This exotic substance wasn’t synthesized until 2015, using chemical vapor deposition. This is a process in which a hot gas of boron atoms condenses onto a cool surface of pure silver. The regular arrangement of silver atoms forces boron atoms into a similar pattern, each binding to as many as six other atoms to create a flat hexagonal structure. However, a significant proportion of boron atoms bind only with four or five other atoms, and this creates vacancies in the structure. The pattern of vacancies is what gives borophene crystals their unique properties. Since borophene’s synthesis, chemists have been eagerly characterizing its properties. Borophene turns out to be stronger than graphene, and more flexible. It is a good conductor of both electricity and heat, and it also superconducts. These properties vary depending on the material’s orientation and the arrangement of vacancies. This makes it “tunable,” at least in principle. That’s one reason chemists are so excited. Borophene is also light and fairly reactive. That makes it a good candidate for storing metal ions in batteries.

Discovering Culture through Artifacts

First of all, managers have power over their team. This power often takes the form of rewards (pay raises, promotions, etc.) and punishments (bad performance reviews, terminations, etc.). In both cases, a manager is rewarding and punishing members of their team based on their behavior. It is through these incentives and disincentives that the culture of a team, organization and/or company is defined. Members of the team learn by observing which behavior is rewarded or punished, and tailor their own behavior accordingly. Interestingly enough, it doesn’t matter what a manager says, but rather which behaviors they reward or punish. A second means by which a manager can influence the culture of a team is by modeling the behavior they want the team to exhibit. One can learn a lot by observing the behavior of others, especially when that person is in a position of power or influence. I see this a lot when it comes to modeling behavior around giving and receiving feedback. Great managers know how to listen and thank people for feedback, and they model this behavior for their team.

Quote for the day:

Confident and courageous leaders have no problems pointing out their own weaknesses and ignorance. - Thom S. Rainer