There’s good reason for Gartner’s confidence. AI has been moving fast and is already a key area of research and development for many organizations. The fruits of these efforts can already be seen in algorithms that influence things such as social media feeds, autonomous vehicles, apps and even some call centers. And that rapid progress means that we’ll soon start seeing AI technology appear “virtually everywhere” over the next 10 years as it becomes available to the masses. Gartner says movements and trends such as cloud computing, open-source technology projects and the “maker” community are fueling AI’s rise. It adds that AI is most prevalent in technologies such as AI platform as a service, artificial general intelligence, autonomous driving, autonomous mobile robots, flying autonomous vehicles, smart robots, conversational AI platforms, deep neural networks and virtual assistants.

What It Takes To Disrupt A Massive Industry

The strategy involves finding a gap in an incumbent’s product line and creating something that will augment it. This essentially makes you a friend, not a foe. To this end, Nir initially built an analytics layer on top of existing platforms to help with cyber fraud. “And it worked out,” he said. “We became partners with the major players. We talked to their partners, sold to their customers.” But of course, this strategy must go to the next level if a company is to get to scale. “We poured the profits from the helper app back into engineering, building our next-gen SIEM platform,” said Nir. “Not only did the sales fund development, but we had access to customers who were using all the major SIEM platforms. We had a front-row seat and saw all the problems these customers were having with the legacy products. Needless to say, the big SIEM vendors weren’t pleased, but we had enough sales and momentum to go it alone. Also, we knew from experience how much better our next-gen platform was. When we launched our SIEM, we already had customers committed.”

Is it time to automate politicians?

A robot could take over every politician’s favourite task of cutting ribbons to inaugurate new buildings. We already cede decision-making responsibility on health and finances to algorithms, so why not voting? An automated democracy could replace both politicians and ballot boxes. That may be extreme. Yet comical though it sounds, parts of our politics have already been technified. Consider reach. Both Narendra Modi, India’s prime minister, and the French presidential candidate Jean-Luc Mélenchon beamed holograms of themselves to speak to several groups of thousands of people simultaneously. Next, there’s the message. In America’s 2016 election, candidates used social-media advertising to target different voters with different messages. The growing automation of our government is no longer sci-fi. Instead, it’s a reality we are only beginning to grasp. So to the question, can we replace politicians with robots? The answer is a soft yes.

Cyber Resilience: Where do you rank?

It is imperative for businesses to be proactive rather than reactive when it comes to cyber security. Businesses need to ensure every part of their enterprise is protected, including from employees, contractors and supply chain partners. A small vulnerability can be hugely detrimental to a business’s cyber security. An effective method of preventing cyber attacks is to develop a culture of resilience within a business. Cyber security should not be the exclusive domain of the IT department; it has companywide consequences, and the C-suite should be responsible for driving a cyber-safe culture within their organisations. As cyber crime presents itself in a variety of forms, businesses can combat the risk of a cyber attack by training staff to spot potential cyber attacks such as phishing emails, establishing password strength and change requirements, and mandating software updates and data back-ups to secure their data, all while restricting what data employees can access and share.

How artificial intelligence is transforming the financial ecosystem

Artificial intelligence is fundamentally changing the physics of financial services. It is weakening the bonds that have held together the component parts of incumbent financial institutions, opening the door to entirely new operating models and ushering in a new set of competitive dynamics that will reward institutions focused on the scale and sophistication of data much more than the scale or complexity of capital. A clear vision of the future financial landscape will be critical to good strategic and governance decisions as financial institutions around the world face growing competitive pressure to make major strategic investments in AI and policy makers seek to navigate the challenging regulatory and social uncertainties emerging globally. Building on the World Economic Forum’s past work on disruptive innovation in financial services, this report provides a comprehensive exploration of the impact of AI on financial services.

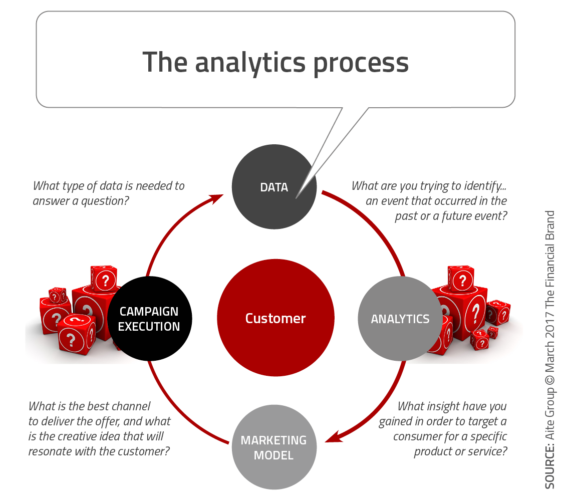

Predictive Analytics: The Future of Financial Marketing

As we move from traditional analytics to predictive analytics, we can leverage new technology to deliver marketing messages to customers. Beyond direct mail, email, and even digital marketing, new touchpoints such as chatbots and voice-first interactive assistants will provide new ways to engage with a consumer. “Artificial intelligence (AI) that is fueled by predictive analytics, machine learning, and natural language processing will be the brains behind the face,” states Aite Group. Predictive analytics is the future of financial institution marketing, predicting when a consumer will experience a life event or need a financial service solution. This advanced form of needs analysis, once only available to the largest organizations, is now financially and operationally available to organizations of all sizes. The combination of predictive analytic tools and advanced digital delivery options can guide the customer to the best financial solution at the most opportune time, sometimes before the consumer even realizes they have a need.

New DevOps Study Offers A Reality Check for Financial Services

Despite reason for optimism, there are still potential hurdles to making the most of DevOps practices. Among those whose businesses have already migrated to DevOps, 71 percent of respondents claimed to have experienced challenges. In 26 percent of cases, IT leaders found that the operating teams were limiting the transition to DevOps. Another 26 percent reported that a management structure lacking clear business objectives made defining a DevOps strategy difficult. The survey’s results paint a clear picture: Organizations need to have a unified approach in order to make the most of their DevOps goals. Speaking about areas where financial services organizations should focus, Hayes-Warren stated that IT leaders should lead the drive to automate processes and applications by leveraging the cloud. Furthermore, companies need to focus on their culture to ensure they’re adopting organization-wide practices that integrate members of their IT departments.

Will Machine Learning AI Make Human Translators An Endangered Species?

Training a neural machine to translate between languages requires nothing more than feeding a large quantity of material, in whichever languages you want to translate between, into the neural net algorithms. To adapt to this rapid transformation, One Hour Translation has developed tools and services designed to distinguish between the different translation services available, and pick the best one for any particular translation task. "For example, for travel and tourism, one service could be great at translating from German to English, but not so good at Japanese. Another could be great at French but poor at German. So we built an index to help the industry and our customers. We can say, in real time, which is the best engine to use, for any type of material, in any language." This work, comparing the quality of NMT-generated translations, gives a clue as to how human translators could see their jobs transforming in coming years.
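The index described in the quote can be pictured as a lookup from subject area and language pair to per-engine quality scores. The following is only a minimal sketch of that idea, not One Hour Translation’s actual system: the engine names, the score values and the `best_engine` helper are all hypothetical and chosen purely for illustration.

```python
from typing import Dict, Optional, Tuple

# Hypothetical quality index: (domain, source language, target language)
# maps to a score per engine. All names and numbers are made up.
QualityIndex = Dict[Tuple[str, str, str], Dict[str, float]]

INDEX: QualityIndex = {
    ("travel", "de", "en"): {"engine_a": 0.91, "engine_b": 0.78},
    ("travel", "ja", "en"): {"engine_a": 0.62, "engine_b": 0.84},
    ("travel", "fr", "en"): {"engine_a": 0.70, "engine_b": 0.88},
}


def best_engine(index: QualityIndex, domain: str,
                source: str, target: str) -> Optional[str]:
    """Return the highest-scoring engine for this task, or None if unrated."""
    scores = index.get((domain, source, target))
    if not scores:
        return None
    # Pick the engine name whose score is largest.
    return max(scores, key=scores.get)
```

In this sketch, a request for German-to-English travel material would be routed to the hypothetical `engine_a`, while Japanese-to-English would go to `engine_b`, mirroring the point that no single engine is best across every language.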

Fintech Without Borders: Regulators Consult on Global Financial Innovation Network

The GFIN Regulators are encouraging responses from “innovative financial services firms, financial services regulators, technology companies, technology providers, trade bodies, accelerators, academia, consumer groups and other stakeholders keen on being part of the development of the GFIN.” Firms should submit responses to GFIN@fca.org.uk. Feedback submitted to this email address will be shared among the GFIN Regulators unless a firm specifically states otherwise. Alternatively, firms may provide feedback or arrange to discuss the Consultation with one or more particular GFIN Regulators. Contact details for these purposes are provided in the Consultation. A regulatory sandbox is a platform for firms to test innovative new products, services or business models on a limited scale before a full launch. A number of national regulators, including the FCA, the HKMA and the MAS, have developed such sandboxes.

How ‘Similar-Solution’ Information Sharing Reduces Risk at the Network Perimeter

Even when information is shared, it’s typically between identical solutions deployed across various sites within a company. While this represents a good first step, there is still plenty of room for improvement. Let us consider the physical security solutions found at a bank as an analogy for cybersecurity solutions. A robber enters a bank. The cameras don’t flag the intruder, who is wearing casual clothes and nothing that identifies him or her as a criminal. The intruder goes to the first teller and asks for money. The teller closes the window. Next, the robber moves to a second window, demanding money, and that teller closes the window too. The robber moves to the third window, and so on until all available windows are closed. Is this the most effective security strategy? Wouldn’t it make more sense if the bank had a unified solution that shared information and shut down all of the windows after the first attempt?
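To make the analogy concrete, here is a small sketch of the “unified” alternative, in which the first teller to detect a threat reports it to a shared board that closes every window at once. The class and method names (`SharedAlertBoard`, `TellerWindow`, `handle_request`) are hypothetical and stand in for whatever real perimeter controls would share the signal.

```python
class SharedAlertBoard:
    """Hypothetical shared channel that every teller window subscribes to."""

    def __init__(self):
        self.windows = []

    def register(self, window):
        self.windows.append(window)

    def report_threat(self):
        # One report is enough: every registered window closes.
        for window in self.windows:
            window.is_open = False


class TellerWindow:
    def __init__(self, name, board):
        self.name = name
        self.is_open = True
        self.board = board
        board.register(self)

    def handle_request(self, is_robbery_demand):
        """Return True if the request is served, False if it is refused."""
        if not self.is_open:
            return False
        if is_robbery_demand:
            # Share the detection instead of only protecting this window.
            self.board.report_threat()
            return False
        return True


board = SharedAlertBoard()
windows = [TellerWindow(f"window-{i}", board) for i in range(1, 4)]
windows[0].handle_request(is_robbery_demand=True)
```

After the single demand at the first window, all three windows in this sketch are closed, so the robber has no second or third attempt to make.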

Quote for the day:

"Added pressure and responsibility should not change one's leadership style, it should merely expose that which already exists." -- Mark W. Boyer

![mobile apps crowdsourcing via social media network [CW cover - October 2015]](https://images.techhive.com/images/article/2015/10/mobile_apps_crowdsourcing_via_social_media_network_thinkstock-100618594-large.jpg)