So what can those companies do? Instead of plugging data into random online tools, tell employees to route all translation through a professional provider. Translation vendor selection is usually based on quality, turnaround and cost. To ensure data security, ask prospective providers how they receive and deliver files for translation. If they say email, watch out. “[Email is] 10 times riskier than any [online] solution because it’s very easy to break into people’s email,” Vashee says. Email is also readily forwarded, and many translation companies depend on exactly that. A human translator gets the job by specializing in that content type and the language direction needed (English into Polish, for example). If either of those factors changes, so does the translator. As a result, even the largest translation companies don’t have in-house resources for everything you need.

What to consider when deploying a next-generation firewall

When consulting with vendors on an NGFW deployment, one of the first conversations will be around the organization’s security posture. No amount of technology can replace the critical work of evaluating an environment and prioritizing the business-critical assets that most need to be protected. This is a conversation that may include multiple departments, from IT to network and security services, to HR and executive leadership. “Basically, organizations need to figure out, if they don’t already know, where the pearls of their data are and make a plan around protecting that,” says Gartner researcher Hils. Organizations typically gather these requirements and approach multiple vendors for a quote. Most firewalls are still deployed at the perimeter of the data center, but depending on whether customers have adopted microsegmentation and network virtualization, there could be firewalls deployed within the data center as well.

Tim O'Reilly: The flawed genie behind algorithmic systems

The algorithms took on the biases of the user, delivering content that reflected their likes -- and dislikes. Algorithmic systems, he argued, are a little like the genies of Arabian mythology. "These algorithms do exactly what we tell them to do. But we don't always understand what we told them to do," he said. Part of the problem is that developers don't know how to talk to algorithms and ask for the right wish, he said. Consider the financial markets, which today are vast algorithmic systems with a master objective function to increase profits. "The idea was that this would allow businesses to share those profits with shareholders who would use [them] in a socially conscious way," he said. "But it didn't work out that way." Instead, financiers are gaming the system, creating income inequality.

Q&A: Secure data centres and fintech companies

We believe there are two core aspects to a data centre that make it attractive to Fintech businesses. The first is security. This does not just include physical and cyber security, which of course are immensely important; it also includes security of service. Fintechs need to know that their product will always be available and that they won’t experience any outages or disruption in service, either of which could prove hugely costly, both financially and to their reputation. Data centres must have a robust infrastructure in place so that they can provide a secure and reliable service to their partners. ... Fintech businesses must be able to prove to the Financial Conduct Authority (FCA) that they are not introducing any degree of risk to the financial services environment, so opting for a data centre provider who has a pedigree in compliance and security is vital.

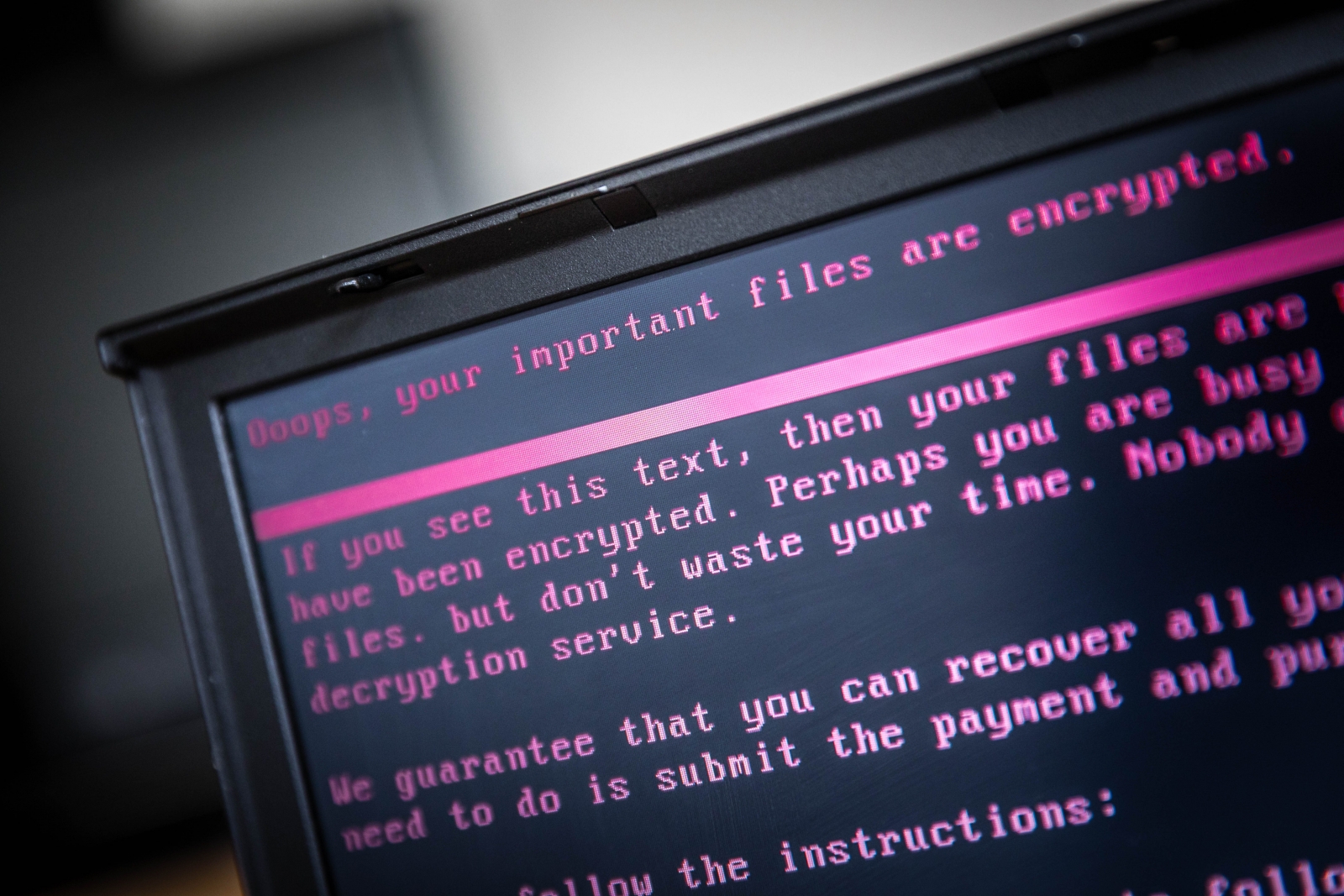

3 Ways to Mitigate Insider Threat Risk Prior to an Employee’s Departure

We are seeing a disturbing insider threat trend impacting operations and causing reputational harm in the days leading up to an employee’s departure from an organization. For example, last week a Twitter employee deleted President Trump’s Twitter account prior to leaving the premises on his last day of employment. In September, a contractor was convicted of cyber sabotage on an Army computer toward the end of his contract, costing U.S. taxpayers millions. These cases highlight the importance of ensuring that the appropriate insider threat risk mitigations are in place to help your organization prevent, detect, and respond to an insider incident. Whether through termination or resignation, an employee’s pending departure from your organization increases the chance of data leaks or sabotage that could impact operations, lead to the loss of competitive advantage, affect shareholder value, or result in embarrassment and devaluation of image and brand.

Three trends to keep top of mind when crafting an AI strategy

New interfaces will dramatically change the way consumers and employees access computing resources, Andrews said. Specifically, the new wave of interfaces relies on natural language processing and generation, visual analytics and gesture interpretation -- technologies powered by AI. ... AI capabilities are being embedded into the internet of things (IoT) devices that operate on the computing edge, but those capabilities will be limited. Model building with AI will happen elsewhere, but runtime analysis and "interaction into action models" that provide, say, visual analysis can live on an edge device, Andrews said. ... AI-powered applications will be able to tell each other what they need to meet a goal without human interaction. But to create this kind of commonplace AI, application diversity is crucial. "In any ecosystem, strength comes from that diversity and from multiple perspectives," he said.

Xerox CISO: How business should prepare for future security threats

As we move to AI, then we also have to move into AI in a security space ‑‑ thinking about the talent shortage, thinking about the fact that we're not going to close this talent gap. How do we close the talent gap? How do we get around it? By allowing AI, allowing robots and smart learning and things like that to play a role in this. We need to challenge our vendors and say, “You've got great platforms that perform analytics for me, but now I need these great platforms to not just perform the analytics, but to actually do something.” That's where it stops. It stops at analytics, and then it expects you've got a team of people that will actually do [something with the data]. It would be great if, as the smart security people that we are, we could say these are the list of security things that I am comfortable with a machine doing for me.

Hacking medical devices is the next big security concern

“Successful exploitation of these vulnerabilities may allow a remote attacker to gain unauthorised access and impact the intended operation of the pump,” says the ICS-CERT report. In other words, with enough skill, a hacker could change the quantities of medication administered to a patient. Smiths Medical said the chances of this happening are “highly unlikely”, but has promised a software security update to resolve the issues by January 2018. Smiths Medical is not the only device manufacturer under fire. There are plenty of others, including St Jude Medical, which is currently battling lawsuits relating to vulnerabilities in its implantable cardiac defibrillators and pacemakers. These triggered a recall of some 465,000 devices in August this year, which will involve patients attending hospitals and clinics in order for the devices to be updated. No invasive surgery will be needed, but the procedure must be carried out by medical staff.

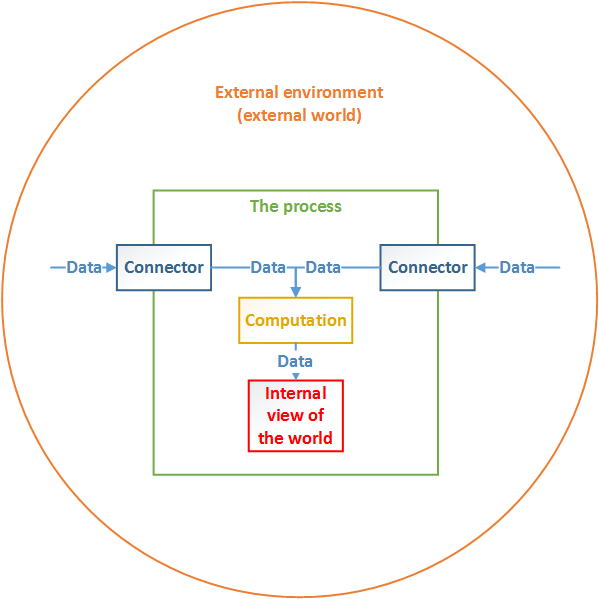

Why speeds and feeds don’t fix your data management problems

Applications and storage have long been oblivious to each other’s capabilities and needs. The majority, if not nearly all, of today’s enterprise applications do not know the attributes of the storage where their data resides. Applications cannot tell if the storage is fast or slow, premium or low cost. They are also unaware of storage’s proximity and of factors like network congestion between storage and the application server, which can significantly impact latency. Conversely, storage does not know what data is the most important to an application. It only knows what was recently accessed, and uses that information to place data in caching tiers, which will increase performance if that same data happens to be accessed again. Some enterprises try to address these issues with caching tiers, but unfortunately caches do not have the intelligence needed to reserve capacity for mission-critical applications.
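
To make the caching point concrete, here is a minimal Python sketch of a recency-only cache tier. It is an illustration under stated assumptions, not any vendor's implementation: the class name `RecencyCache`, the block names and the capacity of two are all hypothetical, and a simple least-recently-used (LRU) policy stands in for whatever placement logic a real storage system uses.

```python
from collections import OrderedDict


class RecencyCache:
    """Toy cache tier that places data purely by recency of access (LRU).

    Hypothetical illustration: it has no notion of which blocks are
    mission-critical, only of which were touched most recently.
    """

    def __init__(self, capacity):
        self.capacity = capacity
        self._blocks = OrderedDict()  # oldest entry first, newest last

    def access(self, block_id):
        """Record an access; return True on a cache hit, False on a miss."""
        if block_id in self._blocks:
            self._blocks.move_to_end(block_id)  # mark as most recently used
            return True
        self._blocks[block_id] = True
        if len(self._blocks) > self.capacity:
            self._blocks.popitem(last=False)  # evict the least recently used
        return False

    def cached(self):
        """Return cached block ids, least recently used first."""
        return list(self._blocks)


cache = RecencyCache(capacity=2)
cache.access("ledger")      # block the application considers critical
cache.access("report-1")    # routine reads ...
cache.access("report-2")    # ... push "ledger" out of the cache
ledger_hit = cache.access("ledger")  # the critical block now misses
```

Because the cache sees only access order, two routine reads are enough to evict the block the application cares most about, which is the gap the paragraph above describes.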

Security Think Tank: Web security down to good risk management

Encrypting data, both when it is in transit and being stored, is key, and its importance will grow with the adoption of GDPR in 2018. Similarly, encrypting the hard drives of corporate laptops minimises breaches if they are lost or stolen. However, it is not just the external threat landscape that is changing. Having robust internal controls, including access management strategies, can also minimise threats. Many security failures come down to providing users with more system access than is needed for the task they are required to do. Outlining the inputs that an application needs to work and then engaging in role management and access control to ensure that no more than that is available prevents this, and is particularly important where external attacks attempt to take control of an existing user in the target application.
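
The role management idea can be sketched in a few lines of Python. This is a hedged illustration rather than a recommended design: the role names, the permission strings and the helpers `is_allowed` and `excess_permissions` are all hypothetical, and a real deployment would rely on its platform's own access control facilities.

```python
# Hypothetical role-to-permission map: each role is granted only what its
# task requires, and anything not listed is denied by default.
ROLE_PERMISSIONS = {
    "invoice_clerk": frozenset({"invoice:read", "invoice:create"}),
    "auditor": frozenset({"invoice:read", "ledger:read"}),
}


def is_allowed(role, permission):
    """Return True only if the role was explicitly granted the permission."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


def excess_permissions(role, required):
    """Return permissions the role holds beyond what the task requires."""
    return ROLE_PERMISSIONS.get(role, frozenset()) - frozenset(required)


clerk_can_create = is_allowed("invoice_clerk", "invoice:create")
clerk_can_read_ledger = is_allowed("invoice_clerk", "ledger:read")
auditor_excess = excess_permissions("auditor", {"invoice:read"})
```

Deny-by-default limits what an attacker gains by hijacking an existing account, and a check like `excess_permissions` is one way to spot roles that have accumulated more access than their task needs.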

Quote for the day:

"Education is when you read the fine print. Experience is what you get if you don't." -- Pete Seeger