AI and investing: The artificial intelligence analytical revolution

In the next five years, investment management will go through an analytical revolution: AI and investing will come together to transform the way investment information is analysed, packaged and presented to investors. This will change the face of investment management. Professional investors will be able to make informed decisions faster, and for the first time private investors will have access to the same advanced stock selection and portfolio construction tools as the professionals. At the heart of this revolution is Augmented Intelligence, which harnesses the power of AI combined with human decision making. As Paul Tudor Jones famously said, “No human is better than a machine, but no machine is better than a human with a machine”. ... By bringing out “interesting” insights, whether confirming or enhancing a suspected salient point or identifying one that might otherwise have been overlooked, AI is the humble ‘idiot-savant’ that can usefully take on the tedious, data-intensive work that humans are not best suited for.

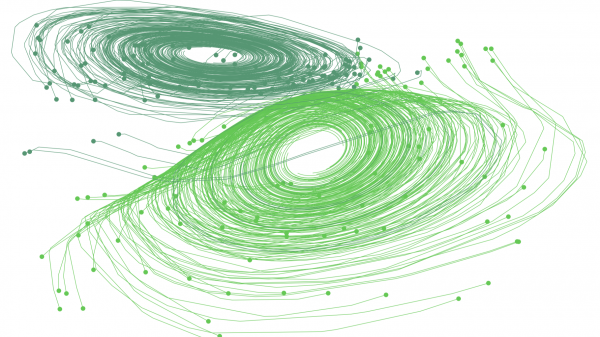

A radical new neural network design could overcome big challenges in AI

The layer approach has served the AI field well, but it also has a drawback. If you want to model anything that transforms continuously over time, you also have to chunk it up into discrete steps. In practice, if we returned to the health example, that would mean grouping your medical records into finite periods like years or months. You can see how this would be inexact. If you went to the doctor on January 11 and again on November 16, the data from both visits would be grouped together under the same year. So the way to model reality as closely as possible is to add more layers and increase the granularity. (Why not break your records up into days or even hours? You could have gone to the doctor twice in one day!) Taken to the extreme, this means the best neural network for this job would have an infinite number of layers to model infinitesimal step-changes. The question is whether this idea is even practical.
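Here is a minimal sketch of the idea. It assumes the usual framing in which each residual layer applies one fixed-size Euler step, h ← h + Δt·f(h), of a continuous transformation. The dynamics function is a hypothetical toy: exponential decay, chosen because its exact solution is known. In a real network it would be learned. Increasing the number of steps, which is the analogue of adding layers, shrinks the gap to the continuous answer.

```python
import math

def dynamics(h: float) -> float:
    # Hypothetical toy dynamics: exponential decay, dh/dt = -h.
    # In a continuous-depth network this function would be a learned model.
    return -h

def euler_solve(h0: float, t_end: float, num_steps: int) -> float:
    """Approximate h(t_end) with num_steps fixed-size Euler steps.

    Each update h <- h + dt * dynamics(h) has the same shape as a residual
    layer, so num_steps plays the role of the network's depth.
    """
    if num_steps < 1:
        raise ValueError("num_steps must be at least 1")
    dt = t_end / num_steps
    h = h0
    for _ in range(num_steps):
        h = h + dt * dynamics(h)
    return h

if __name__ == "__main__":
    exact = math.exp(-1.0)  # closed-form h(1) for dh/dt = -h with h(0) = 1
    for steps in (1, 4, 16, 256):
        approx = euler_solve(1.0, 1.0, steps)
        print(f"{steps:>4} steps: {approx:.6f}  error {abs(approx - exact):.6f}")
```

Letting the step count grow without bound recovers the exact continuous solution. Continuous-depth approaches therefore hand the problem to an ODE solver that picks its own step sizes, rather than fixing the number of layers up front.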

DevOps adoption is creating chaos in the enterprise

With DevOps a nearly universal concept in the modern enterprise, it stands to reason that there are going to be issues. Even so, the numbers in OverOps' report indicate there's more than just a margin of implementation error at work: Something is going wrong in a lot of DevOps organizations. Take automation, for example. DevOps is designed for faster release schedules, which means relying on automated tools to catch an increasing share of software errors. Despite increased use of automation, 76.6% of respondents said they're still using manual processes, and a shocking 52.2% rely on customers to tell them about errors. All that manual troubleshooting takes time. Twenty percent of respondents said they spend one full workday a week fixing bugs, and another 42% spend between half a day and a full day, so 62% lose at least half a workday every week to bug fixing. Think back to the shared responsibility that developers and operations feel under DevOps, and you can start to see where OverOps' report is going: The big problem in DevOps is confusion.

Computers could soon run cold, no heat generated

The new “exotic, ultrathin material” is a topological transistor. That means the material has unique tunable properties, explains the group, which includes scientists from Monash University in Australia. It’s superconductor-like, they say, but unlike superconductors, it doesn’t need to be chilled. Superconductivity, found in some materials, is a state in which electrical resistance disappears entirely, but it normally requires extreme cooling. “Packing more transistors into smaller devices is pushing toward the physical limits. Ultra-low energy topological electronics are a potential answer to the increasing challenge of energy wasted in modern computing,” the Berkeley Lab article says. ... Another group of researchers, from the University of Konstanz in Germany, say supercomputers will be built without waste heat. That group is working on transporting electrons without producing heat and is approaching the problem through a form of superconductivity.
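Joule's first law makes explicit why zero resistance means no waste heat. This is a standard physics relation, not a result from the Berkeley Lab or Konstanz work. A current I flowing through a resistance R dissipates power P as heat:

```latex
P = I^{2} R, \qquad R = 0 \;\Rightarrow\; P = 0
```

For instance, a current of 1 A through 0.1 Ω dissipates 0.1 W as heat. With R = 0, the same current dissipates nothing.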

Managing risk in machine learning

As we deploy ML in many real-world contexts, optimizing statistical or business metrics alone will not suffice. ... Given the growing concern about data privacy among users and regulators, there is strong demand for tools that let you build ML models while protecting data privacy. These tools rely on building blocks, and we are beginning to see working systems that combine many of these building blocks. ... Because there’s no ironclad procedure, you will need a team of humans-in-the-loop. Notions of fairness are not only domain and context sensitive, but as researchers from UC Berkeley recently pointed out, there is a temporal dimension as well (“We advocate for a view toward long-term outcomes in the discussion of ‘fair’ machine learning”). What is needed are data scientists who can interrogate the data and understand the underlying distributions, working alongside domain experts who can evaluate models holistically.
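The article does not name the privacy building blocks it has in mind. Differential privacy is one widely used example, and the sketch below shows its simplest form: the Laplace mechanism applied to a count. It assumes each person contributes at most one record, so the count's sensitivity is 1. The click data is hypothetical, and a production system would use a vetted library rather than hand-rolled noise.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws with rate 1/scale
    # follows a Laplace distribution centred on 0 with the given scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records: list, epsilon: float, rng: random.Random) -> float:
    """Release sum(records) with epsilon-differential privacy (Laplace mechanism).

    Assumes each person contributes at most one record, so adding or removing
    one person changes the count by at most 1 (sensitivity = 1).
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    sensitivity = 1.0
    return sum(records) + laplace_noise(sensitivity / epsilon, rng)

if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical data: whether each of 1,000 users clicked an ad.
    clicks = [rng.random() < 0.3 for _ in range(1000)]
    print("true count:", sum(clicks))
    for eps in (0.1, 1.0, 10.0):
        # Smaller epsilon means a stronger privacy guarantee and noisier answers.
        print(f"epsilon={eps}: {private_count(clicks, eps, rng):.1f}")
```

The single parameter epsilon makes the privacy/accuracy trade-off explicit. That trade-off is a judgment call a team still has to make, not something the mechanism settles on its own.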

When a NoOps implementation is -- and when it isn't -- the right choice

"Basically, NoOps is the same thing as no pilots or no doctors," Davis said. "We need to have pathways to use the systems and software that we create. Those systems and software are created by humans -- who are invaluable -- but they will make mistakes. We need people to be responsible for gauging what's happening." Human fallibility has driven the move to scripting and automation in IT organizations for decades. Companies should strive to minimize human error, but also recognize that humans are still vital for success. Comprehensive integration of AI into IT operations tools is still several years away, and even then, AI will rely on human interaction to operate with the precision expected. Davis likens the situation to the ongoing drive for autonomous cars: They only work if you eliminate all the other drivers on the road.

Microsoft is telling awesome open source stories

Open source isn't just about code. Or needn't be. The spirit of open source is collaboration and sharing, which Microsoft has recently kicked up a notch with a new blog series showing how company culture can change and what that could mean for open source development. Microsoft is already the world's biggest contributor to open source, at least as measured by the number of employees contributing to open source projects. It doesn't need to tell tales, and yet that's exactly what the company is doing, to cool effect, with its new Microsoft Open Source Stories blog. The blog aims to share the behind-the-scenes stories about how certain projects went open source. As Microsoft's Dmitry Lyalin told Microsoft watcher Paul Thurrott, "We hope to tell over 20 stories through this process as we have had a lot of great stuff hidden behind the firewall."

Social engineering at the heart of critical infrastructure attack

Analysis reveals that the malware moves in several steps. The initial attack vector is a document that contains a weaponised macro to download the next stage, which runs in memory and gathers intelligence. The victim’s data is sent to a control server for monitoring by the actors, who then determine the next steps. The researchers said it was still unclear whether the attacks they observed were a first-stage reconnaissance operation with more to come. “We will continue to monitor this campaign and will report further when we or others in the security industry receive more information,” they said. Raj Samani, chief scientist and fellow at McAfee, said Operation Sharpshooter was yet another example of a sophisticated, targeted attack being used to gain intelligence for malicious actors.

Merging Internet Of Things And Blockchain In Preparation For The Future

Companies, users of IoT and blockchain, and prominent figures in these futuristic technologies are all starting to come around to the idea that the Fourth Industrial Revolution will be built not on one of them but on an amalgamation of all of them, in different facets. If IoT has issues with security and corruption, it makes sense for blockchain to come to its aid and help secure the data with its immutable ledger. Likewise, if AI struggles to keep a reliable record of its data and of its own workings, a distributed ledger can help with that too. So as AI and IoT, for example, overcome their earlier issues by integrating blockchain, blockchain in turn becomes more ingrained and useful, making it indispensable in some sectors. Adoption like this has always been the hope for distributed ledger technology. It is probably time for blockchain builders and implementers to stop worrying about disrupting current and past sectors with a single blockchain. Instead, they should look at how they can use blockchain in alliance with IoT, Big Data, AI and others to build that Fourth Industrial Revolution.
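A hash chain can illustrate the “immutable ledger” property. Each block stores the hash of its predecessor, so altering any past record breaks the verification. This is a single-process sketch with hypothetical sensor readings. A real blockchain adds replication across nodes and a consensus protocol, both omitted here, so this shows only tamper evidence.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block

@dataclass(frozen=True)
class Block:
    index: int
    reading: dict
    prev_hash: str
    block_hash: str

def compute_hash(index: int, reading: dict, prev_hash: str) -> str:
    # Serialize with sorted keys so identical content always hashes identically.
    payload = json.dumps(
        {"index": index, "reading": reading, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(chain: list, reading: dict) -> None:
    # Link the new block to the hash of the current last block.
    prev_hash = chain[-1].block_hash if chain else GENESIS_PREV
    index = len(chain)
    chain.append(Block(index, reading, prev_hash, compute_hash(index, reading, prev_hash)))

def verify(chain: list) -> bool:
    # Recompute every hash and check each link back to the previous block.
    prev_hash = GENESIS_PREV
    for i, block in enumerate(chain):
        if block.index != i or block.prev_hash != prev_hash:
            return False
        if block.block_hash != compute_hash(block.index, block.reading, block.prev_hash):
            return False
        prev_hash = block.block_hash
    return True

if __name__ == "__main__":
    ledger = []
    for t, celsius in enumerate([21.5, 21.7, 22.0]):
        # Hypothetical readings from a hypothetical sensor "thermo-1".
        append(ledger, {"sensor": "thermo-1", "t": t, "celsius": celsius})
    print(verify(ledger))  # True
    ledger[1] = replace(ledger[1], reading={"sensor": "thermo-1", "t": 1, "celsius": 30.0})
    print(verify(ledger))  # False: the stored hash no longer matches the data
```

Tamper evidence on one machine is only part of the story. Protecting IoT data also requires that no single party can quietly rewrite the whole chain, which is what distribution and consensus provide.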

Top 10 Tech Predictions for 2019

Some predictions are easy. For example, it’s a good bet that popular buzzwords like digital transformation, cloud computing, artificial intelligence and quantum computing will continue to get a lot of attention in the news. What is less clear is exactly how these areas of technology might evolve. Which innovations will become an integral part of doing business and which will fade in importance? How will enterprises attempt to leverage these technologies for competitive advantage? And what should IT leaders be doing now to prepare for the near future? ... The analyst predictions, on the other hand, could be useful to CIOs and other IT leaders who are writing goals, setting budgets and deciding on training priorities for the coming year. In many cases, the analysts have offered direct advice to enterprise IT on how to capitalize on these trends. Often the various research firms agree with each other on which steps enterprises should take. But in other cases, cybersecurity being one, the analysts had wildly divergent ideas about how trends are likely to affect enterprises and what leaders should do to prepare.

Quote for the day:

"A leader should demonstrate his thoughts and opinions through his actions, not through his words." -- Jack Weatherford
