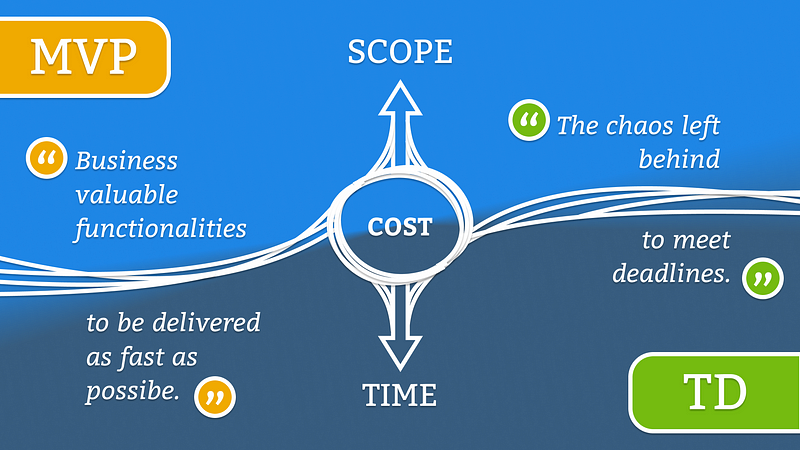

Prioritizing TD over MVP, and vice versa, needs to be someone’s responsibility; otherwise, who would manage the delivery time that results from this balance? Thanks to what I have learned about project management, I now handle it. I’m the one who should forecast when it makes sense to spend more time on better engineering, because I know my stakeholder well enough to predict his or her next move towards a brand-new MVP. Let me quickly change contexts for didactic purposes: when I order pizza at home while savagely hungry, I expect it to arrive hot and within an hour at most. I know a lot could go wrong on the way to my house. Some of it I would tolerate, and some I wouldn’t. If the pizza arrives two hours later, I won’t accept it. If it arrives merely warm, that’s fine. The same applies to projects. Most stakeholders won’t stand for perfect engineering if it doesn’t pay for its cost, which means delivery on time or sooner.

This is not a theoretical risk, either. It is already happening. Recent incidents involving Dunkin Donuts' DD Perks program, CheapAir and even the security firm CyberReason's honeypot test showed just a few of the ways automated attacks are emerging “in the wild” and affecting businesses. In November, three top antivirus companies also sounded similar alarms. Malwarebytes, Symantec and McAfee all predicted that AI-based cyber attacks would emerge in 2019 and become an increasingly significant threat in the next few years. What this means is that we are on the verge of a new age in cybersecurity, where hackers will be able to unleash formidable new attacks using self-directed software tools and processes. These automated attacks on their own will be able to find and breach even well-protected companies, and in vastly shorter time frames than human hackers can. Automated attacks will also reproduce, multiply and spread in order to massively elevate the damage potential of any single breach.

A data inventory is key to maintaining data privacy compliance

Building and maintaining a comprehensive data inventory can enhance overall data quality and help streamline compliance efforts, which in turn reduces risk by supporting an effective controls framework. Identifying processes that can be automated also creates an opportunity for more accurate and efficient regulatory reporting. Improved accuracy supports improved data security. Clear data maps and inventories can support more effective and proactive security measures that address critical issues, such as which specific business processes the data touches and the related risks of that interaction. Data accuracy also enables complete data lineage, allowing for a cohesive approach by audit, security, and compliance groups alike.
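As a rough illustration of what "which business processes the data touches" could look like in practice, here is a minimal Python sketch of an inventory entry and a lookup over it. The `DataAsset` type, its fields, the `personal_data_by_process` helper, and the sample entries are all hypothetical and are not taken from the article; a real inventory would track far more (retention, legal basis, location, and so on).

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class DataAsset:
    """One entry in a hypothetical data inventory."""
    name: str
    owner: str
    contains_personal_data: bool
    processes: Tuple[str, ...] = ()  # business processes that touch this asset


def personal_data_by_process(inventory: Iterable[DataAsset]) -> Dict[str, List[str]]:
    """Map each business process to the personal-data assets it touches."""
    exposure = defaultdict(list)
    for asset in inventory:
        if asset.contains_personal_data:
            for process in asset.processes:
                exposure[process].append(asset.name)
    return dict(exposure)


# Illustrative entries only; not drawn from any real inventory.
INVENTORY = [
    DataAsset("customer_emails", "marketing", True, ("newsletter", "billing")),
    DataAsset("web_logs", "platform", False, ("analytics",)),
    DataAsset("payment_records", "finance", True, ("billing",)),
]

if __name__ == "__main__":
    for process, assets in personal_data_by_process(INVENTORY).items():
        print(process, "->", assets)
```

Even a flat structure like this lets audit, security, and compliance teams ask the same question of the same data, which is the cohesion the excerpt describes.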

Network management must evolve in order to scale container deployments

Highly containerized environments are subject to something called “container sprawl.” Unlike VMs, which can take hours to boot, containers can be spun up almost instantly and then run for a very short period of time. This increases the risk of container sprawl, where containers can be created by almost anyone at any time without the involvement of a centralized administrator. Also, IT organizations typically run about eight to 10 VMs per physical server but about 25 containers per server, so it’s easy to see how fast container sprawl can occur. A new approach to managing the network is required — one that can provide end-to-end, real-time intelligence from the host to the switch. Only then will businesses be able to scale their container environments without the risk associated with container sprawl. Network management tools need to adapt and provide visibility into every trace and hop in the container journey instead of being device centric. Traditional management tools have a good understanding of the state of a switch or a router, but management tools need to see every port, VM, host, and switch to be aligned with the way containers operate.
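To put the density figures above in perspective, the following Python sketch multiplies them out across a fleet. The fleet size of 100 servers is an assumption chosen purely for illustration, and the `workloads` helper is hypothetical; only the per-server figures (eight to 10 VMs, about 25 containers) come from the excerpt.

```python
def workloads(servers: int, per_server: int) -> int:
    """Total workloads the network tools must track across a fleet."""
    return servers * per_server


SERVERS = 100  # hypothetical fleet size, not from the article

# Per-server densities quoted in the excerpt.
vm_low, vm_high = workloads(SERVERS, 8), workloads(SERVERS, 10)
containers = workloads(SERVERS, 25)

if __name__ == "__main__":
    print(f"VMs: {vm_low}-{vm_high}, containers: {containers}")
    print(f"Density ratio: {25 / 10:.1f}x to {25 / 8:.1f}x")
```

This counts only steady-state density; because containers are short-lived, the number created over any period would be higher still.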

Office 365, Outlook Credentials Most Targeted by Phishing Kits

The phishing kit used the most during the second half of the year was a multi-brand kit that mainly targets Office 365 and Outlook credentials, but which also supports spoofed pages for AOL, Bank of America, Chase, Daum, DHL, Dropbox, Facebook, Gmail, Skype, USAA, Webmail, Wells Fargo, and Yahoo. The second most popular phishing kit in the timeframe also targets Office 365, Cyren says. This tool, however, was specifically built for Office 365 phishing and packs built-in techniques to evade detection, including blocking IPs and security bots, as well as user agents to hide from phishing defenses. A PayPal phishing kit has emerged as the third most used, and employs new levels of sophistication, with several evasive techniques, the researchers say. Fourth in line comes a multi-brand phishing kit that can target almost anything from lifestyle brands to data, banking and email credentials, and more. Apple, Netflix, Dropbox, Excel, Gmail, Yahoo, Chase, PayPal and Bank of America are among the targeted brands.

5 Cloud Trends That Will Dominate 2019

Despite the hubbub being raised about the job-stealing nature of automation, you should expect automation services to keep rising in popularity as 2019 unfolds. Automation platforms are more efficient today than ever before, which means that businesses of all shapes and sizes have a sizable economic incentive to digitize their operations to the greatest extent possible. While human capital will always be vital in the cloud marketplace, it’s growing quite obvious that the future of the cloud will at least partly be determined by clever algorithms that do some of our thinking for us. Major corporations like Amazon and Microsoft are already beginning to cash in on this trend; Amazon Web Services has a wide variety of cloud automation services, for instance, including automatic testing to locate weak security points. As digital privacy and network security grow more important to the public, especially as new data breaches continue to occur, automation will be viewed as a way of securing the cloud and making it a more reliable place to store our sensitive information.

Understanding Blockchain Basics and Use Cases

The use cases for blockchains are still being hotly debated. There is the obvious example of censorship-resistant digital currencies. However, the volatility and fragmentation seen in the cryptocurrency market during 2018 seem to suggest that the actual applicability of trustless digital currencies is limited. From the enterprise perspective, it is becoming clear that blockchains can also be used to create systems or networks that are deployed as a shared construct between multiple entities that don't necessarily trust each other yet want to share data and maintain a form of consensus about concerns that all parties care about. These use cases, where a centralized authority is unacceptable to the participants, or too costly to set up, are still emerging. This is despite the time, effort and venture capital that has been deployed into the wide array of blockchain projects created to date. As more projects come to market as we move into 2019, it remains to be seen whether the promise of blockchain will ever amount to the major impact that its advocates have now been promising for quite some time.

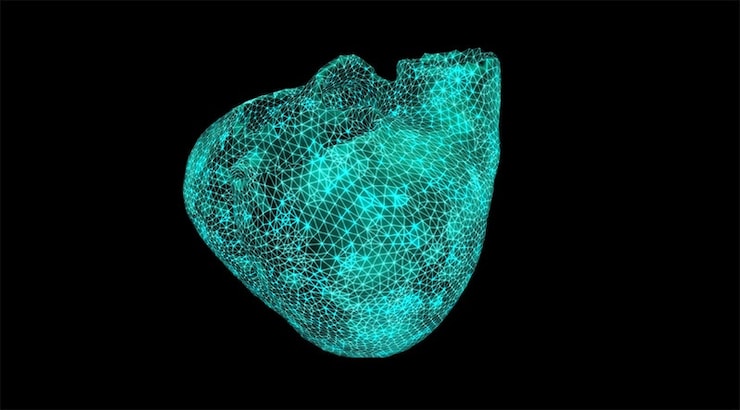

AI Inspires a Healthcare Revolution

Heart surgeons are employing data and analytics alongside scalpels and stents as they carry out intricate operations, using digital replicas of human hearts and AI to predict the likely outcomes of treatments. In the future, we may all have these replicas—known as digital twins—that are continuously fed data about our bodies and can help predict when we may become ill, and suggest preventive therapy and the most effective treatments. Digital twin technology has the potential to make significant improvements in diagnosis and treatment of a range of conditions. Building a digital replica of a heart requires collecting reams of data about the patient’s physiological condition, fitness levels and lifestyle. In one case, cardiologists created a digital version of the heart of a patient suffering from an irregular heartbeat, to test whether the patient was among the 70 percent likely to respond to a particular treatment.

When the Tide Goes Out: Big Questions for Crypto in 2019

Debates have raged around the globe about how cryptocurrencies, and particularly ICOs, fit within existing securities, commodities and derivatives laws. Many contend that so-called ‘utility tokens’ sold for future consumption are not investment contracts – but this is a false distinction. By their very design, ICOs mix economic attributes of both consumption and investment. ICO tokens’ realities – their risks, expectation of profits, reliance on the efforts of others, manner of marketing, exchange trading, limited supply, and capital formation — are attributes of investment offerings. In the U.S., nearly all ICOs would meet the Supreme Court’s ‘Howey Test’ defining an investment contract under securities laws. As poet James Whitcomb Riley wrote over 100 years ago: “When I see a bird that walks like a duck and swims like a duck and quacks like a duck, I call that bird a duck.” In 2019, we’re likely to continue seeing high ICO failure rates while funding totals decline.

Embracing agile development: Don’t let technical debt get in the way of innovation

By prioritising this type of approach first, you can begin to reduce debt and then resolve other portions as part of a long-term strategy. Remember: this isn’t going to resolve itself overnight. Get the whole team on board before committing to across-the-board technical debt reduction, because unlike a Waterfall approach, Agile changes are small and frequent, so everyone will need to commit to the new method. Again, teams can tackle this by adopting an EAD approach, so they can focus on moving slowly and deliberately to avoid including any changes that might introduce new debt or increase existing debt. An EAD approach also helps to ensure teams are committed to testing throughout the DevOps process, which in turn creates a more collaborative environment and promotes transparency. With Agile, each successive version of the software builds directly on the previous version. It also allows for repeat work that improves upon previously completed activities.

Quote for the day:

"The greatest leader is not necessarily the one who does the greatest things. He is the one that gets the people to do the greatest things." -- Ronald Reagan
