Is generative AI mightier than the law?

The FTC hasn’t been shy in going after Big Tech. And in the middle of July, it

took its most important step yet: It opened an investigation into whether

Microsoft-backed OpenAI has violated consumer protection laws and harmed

consumers by illegally collecting data, violating consumer privacy and

publishing false information about people. In a 20-page letter sent by the FTC

to OpenAI, the agency said it’s probing whether the company “engaged in unfair

or deceptive privacy or data security practices or engaged in unfair or

deceptive practices relating to risks of harm to consumers.” The letter made

clear how seriously the FTC takes the investigation. It wants vast amounts of

information, including technical details about how ChatGPT gathers data, how the

data is used and stored, the use of APIs and plugins, and information about how

OpenAI trains, builds, and monitors the Large Language Models (LLMs) that fuel

its chatbot. None of this should be a surprise to Microsoft or ChatGPT. In May,

FTC Chair Lina Khan wrote an opinion piece in The New York Times laying out how

she believed AI must be regulated.

Four Pillars of Digital Transformation

The first principle is understanding your customers and your customer segments.

That's number one. Second is aligning with your customers and your functional

teams. Because you cannot do anything digital transformation in a silo. You can

say, "Oh, Asif and Shane want to digitally transform this company and forget

about what people A and people B are thinking. Shane and I are going to go and

make that happen." We will fail. Not going to happen. That's where the

cross-functional team alignment comes in. The third is influencing and

understanding what the change is, why we want to do it, how we are going to do

it. And what's in it, not for Shane, not for Asif. What's in it for you as a

customer or as an organization? Again, showing the empathy and explaining the

why behind it. And finally, communicating, communicating, communicating,

over-communicating and celebrating success. To me, those are the big four

pillars that we use to sell the idea of what digital transformation is at

any level, from the C-level all the way to people at the store level. Can I

explain to them what's in it for them?

Scientists Seek Government Database to Track Harm from Rising 'AI Incidents'

Faced with mounting evidence of such harmful AI incidents, the FAS noted the

government database could somewhat align with other trackers and efforts. "The

database should be designed to encourage voluntary reporting from AI developers,

operators, and users while ensuring the confidentiality of sensitive

information," the FAS said. "Furthermore, the database should include a

mechanism for sharing anonymized or aggregated data with AI developers,

researchers, and policymakers to help them better understand and mitigate

AI-related risks. The DHS could build on the efforts of other privately

collected databases of AI incidents, including the AI Incident Database created

by the Partnership on AI and the Center for Security and Emerging Technologies.

This database could also take inspiration from other incident databases

maintained by federal agencies, including the National Transportation Safety

Board's database on aviation accidents." The group further recommended that the

DHS should collaborate with the NIST to design and maintain the database,

including setting up protocols for data validation, categorization,

anonymization, and dissemination.

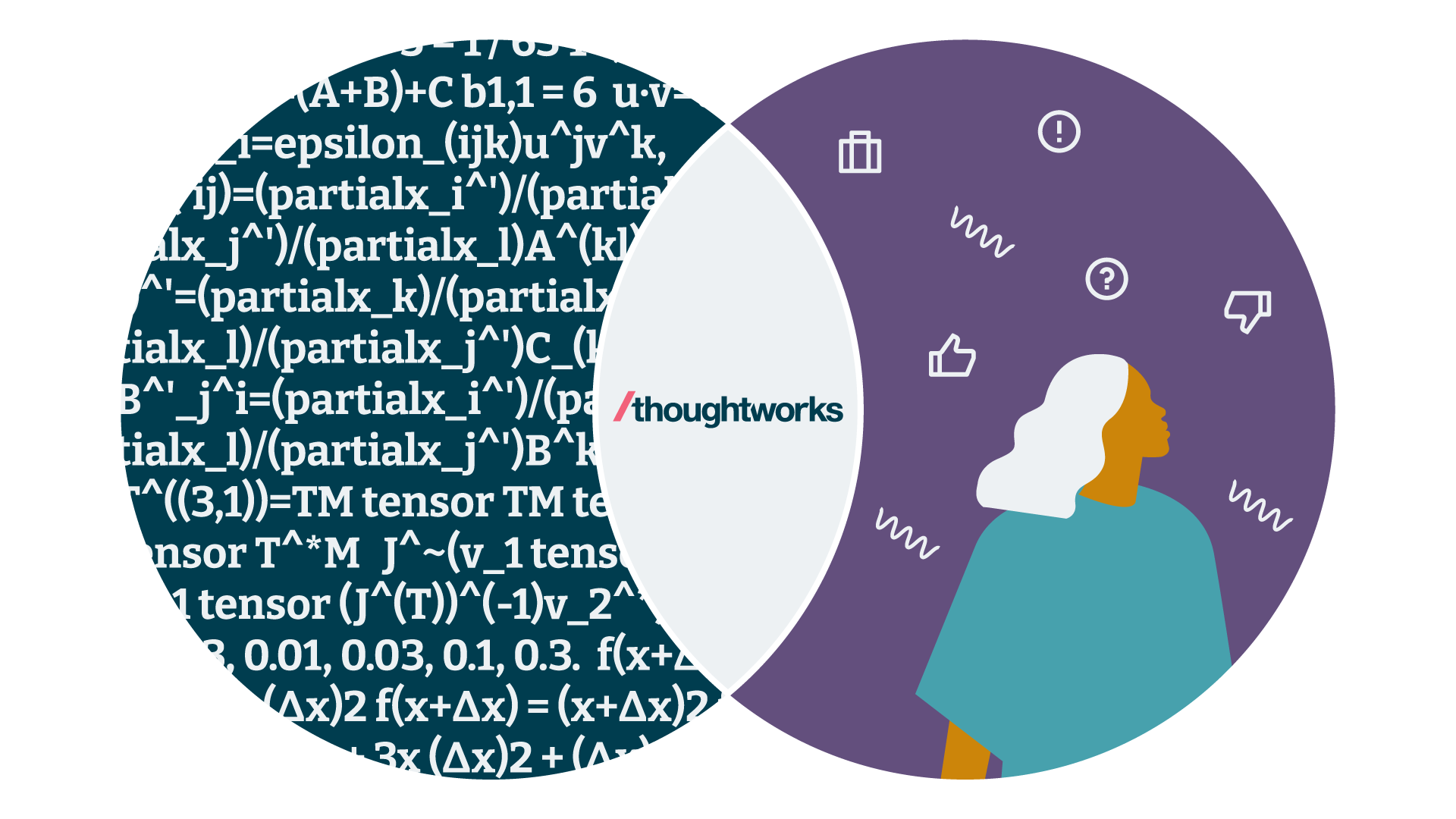

Generative AI: Headwind or tailwind?

It's topping everyone's wish list, with influences converging from various

directions: customers, staff members and corporate boards, all applying pressure

to harness its potential in their respective markets. On the bright side,

there's a unified objective: to make progress. The challenge, however, is that,

like most early-stage technologies, the path forward with generative AI isn't as

straightforward — there's a lot of ambiguity about what to do, how to do it or

even where to start. The potential of generative AI surpasses mere

cost-effectiveness and efficiency. It can fuel the generation of new ideas,

fine-tune designs and facilitate the launch of new products. It could serve as

your catalyst for innovation if you're bold enough to step into this new

frontier. But where do you step first? Our approach is first to identify a

problem or "missing." In the simplest explanation possible, envision a Venn

diagram where one circle represents the new tech wave (generative AI) and the

other represents your customer, their challenges, opportunities, tasks, pains

and gains.

Hackers: We won’t let artificial intelligence get the better of us

Hackers who have adopted or who plan to adopt generative AI are most inclined to

use OpenAI’s ChatGPT ... Those that have taken the plunge are using generative

AI technology in a wide variety of ways, with the most commonly used functions

being text summarisation or generation, code generation, search enhancement,

chatbots, image generation, data design, collection or summarisation, and

machine learning. Within security research workflows specifically, hackers said

they found generative AI most useful to automate tasks, analyse data, and

identify and validate vulnerabilities. Less widely used applications included

conducting reconnaissance, categorising threats, detecting anomalies,

prioritising risk and building training models. Many hackers who are not native

English speakers or not fluent in English are also using services such as

ChatGPT to translate or write reports and bug submissions, and fuel more

collaboration across national borders.

Your CDO Does More Than Just Protect Data

Influential CDOs who can collaborate without being perceived as aloof or

arrogant stand out in the field. Balancing visionary thinking with practical

implementation strategies is vital, and CDOs who instill purpose and

forward-looking excitement within their teams create a culture of innovation

and continuous improvement. These qualities are essential for unlocking the

full potential of data leadership. ... With boards needing more depth of

tech knowledge to oversee strategy, CDOs can be valuable directors. CDOs who

can demonstrate experience in making data core to the company’s strategy or

informing a transformational pivot for the business would bring a high

amount of value to boardroom discussions. The opportunity to understand and

see risks and opportunities through a board member’s eyes is an invaluable

experience for a CDO, which not only helps the CDO to prepare for future

board service but also gives your board members additional education about

the future of data and what it can bring to your organization.

Data Warehouse Telemetry: Measuring the Health of Your Systems

At the heart of the data warehouse system, there is a pulse. This is the set

of measures that indicate the system's performance -- its heartbeat, so to

speak. This includes the measurements of system resources, such as disk

reads and writes, CPU and memory utilization, and disk usage. These metrics

are an indicator of how well the overall system is performing. It is

important to measure to make sure that these metrics do not go too low or

too high. When they go too low, it is an indicator that the system has been

oversized and resources are being wasted. When they go too high, it is an

indicator that the system is undersized and resources are nearing

exhaustion. As the resources hit a critical level, overall performance can

grind to a halt, freezing processes and negatively impacting the user

experience. When a medical practitioner sees that a patient’s heart

rate/pulse is too fast or too slow, they will provide several

recommendations, including ongoing monitoring to see if the situation

improves or changes to diet or exercise.
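
The "too low or too high" check described above can be sketched in a few lines of Python. This is a minimal illustration, not a recommended configuration: the metric names, the `Band` type, and every threshold value below are assumptions made for the example, and real limits depend on the warehouse platform and workload.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    """Acceptable utilization range for one metric, in percent."""
    low: float   # below this, the system looks oversized (resources wasted)
    high: float  # above this, the system looks undersized (nearing exhaustion)


# Hypothetical thresholds, chosen only for illustration.
BANDS = {
    "cpu": Band(low=10.0, high=85.0),
    "memory": Band(low=15.0, high=90.0),
    "disk_usage": Band(low=20.0, high=80.0),
}


def classify(metric: str, percent: float) -> str:
    """Label one utilization sample as oversized, undersized, or healthy."""
    band = BANDS[metric]
    if percent < band.low:
        return "oversized"
    if percent > band.high:
        return "undersized"
    return "healthy"


def health_report(sample: dict[str, float]) -> dict[str, str]:
    """Classify every metric in a single telemetry sample."""
    return {name: classify(name, value) for name, value in sample.items()}


if __name__ == "__main__":
    print(health_report({"cpu": 50.0, "memory": 95.0, "disk_usage": 12.0}))
```

A single sample is only a snapshot. As with the patient's pulse, the classification is more useful when tracked over time than when read once.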

Reducing Generative AI Hallucinations and Trusting Your Data

Data has two dimensions. One is the actual value of the data and the

parameter that it represents; for example, the temperature of an asset in a

factory. Then, there is also the relational aspect of the data that shows

how the source of that temperature sensor is connected to the rest of the

other data generators. This value-oriented aspect of data and the relational

aspect of that data are both important for quality, trustworthiness, and the

history and revision and versioning of the data. There’s obviously the

communication pipeline, and you need to make sure that where the data

sources connect to your data platform has enough sense of reliability and

security. Make sure the data travels with integrity and the data is

protected against malicious intent. ... Generative AI is one of those

foundational technologies like how software changed the world. Marc

[Andreessen, a partner in the Silicon Valley venture capital firm Andreessen

Horowitz] in 2011 said that software is eating the world, and software

already ate the world. It took 40 years for software to do this.
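
One way to picture the two dimensions of data described in this excerpt, the value of a reading and its relationship to other data sources, is a small Python sketch. The class names, fields, and sensor identifiers here are hypothetical and invented for illustration; they do not come from the article or from any particular data platform.

```python
from dataclasses import dataclass, field


@dataclass
class Reading:
    """Value dimension: what was measured, plus a revision counter."""
    parameter: str
    value: float
    unit: str
    version: int = 1


@dataclass
class SensorNode:
    """Relational dimension: where a reading comes from and what it connects to."""
    sensor_id: str
    asset_id: str
    connected_to: set[str] = field(default_factory=set)
    readings: list[Reading] = field(default_factory=list)

    def revise(self, parameter: str, value: float, unit: str) -> Reading:
        """Append a new version instead of overwriting, so history is kept."""
        previous = [r for r in self.readings if r.parameter == parameter]
        reading = Reading(parameter, value, unit, version=len(previous) + 1)
        self.readings.append(reading)
        return reading


if __name__ == "__main__":
    # Hypothetical temperature sensor on a factory asset, linked to a vibration sensor.
    node = SensorNode("temp-01", "press-7", connected_to={"vib-02"})
    node.revise("temperature", 71.5, "C")
    node.revise("temperature", 72.0, "C")
    print(node)
```

Keeping every revision, rather than only the latest value, is what makes the history and versioning mentioned above recoverable later.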

10 Reasons for Optimism in Cybersecurity

The new National Cybersecurity Strategy announced by the Biden

Administration this year emphasizes the importance of public-private

collaboration. Google Cloud’s Venables anticipates that knowledge sharing

between the public and private sectors will help enhance transparency around

cyber threats and improve protection. “As public and private sector

collaboration grows, in the next few years we’ll see deeper coordination

between agencies and big tech organizations in how they implement cyber

protections,” he says. The public and private sectors also have the

opportunity to join forces on cybersecurity regulation. ... As the

cybersecurity product market matures it will not only embrace

secure-by-design and -default principles. XYPRO’s Tcherchian is also

optimistic about the consolidation of cybersecurity solutions.

“Cybersecurity consolidation integrates multiple cybersecurity tools and

solutions into a unified platform, addressing the crowded and complex nature

of the cybersecurity market,” he explains.

Keeping the cloud secure with a mindset shift

Organizations developing software through cloud-based tools and environments

must take additional care to adapt their processes. Adopting a “shift-left”

approach for the continuous integration and continuous deployment (CI/CD)

pipeline is particularly important. Traditionally, security checks were

often performed towards the end of the development cycle. However, this

reactive approach can allow vulnerabilities to slip through the cracks and

reach production stages. The shift-left approach advocates for integrating

security measures earlier in the development cycle. By doing so, potential

security risks can be identified and mitigated early, preventing malware

infiltration and reducing the cost and complexity of addressing security

issues at later stages. This proactive approach aligns with the dynamic

nature of cloud environments, ensuring robust security without hindering

agility and innovation. Businesses should consider how they can mirror the

shift-left ethos across their other cloud operations.

Quote for the day:

"Leadership offers an opportunity to make a difference in someone's life, no

matter what the project." -- Bill Owens
