DDoS Attackers Revive Old Campaigns to Extort Ransom

Radware's researchers say the tactics recently observed with the attacks

launched by this particular group indicate a fundamental change in how it

operates. Previously, the operators would target a company or industry for a

few weeks and then move on. The 2020-2021 global ransom DDoS campaign

represents a strategic shift from these tactics. "DDoS extortion has now become

an integral part of the threat landscape for organizations across nearly every

industry since the middle of 2020," the report states. The other major change

spotted is that this threat group is no longer shy about returning to targets that

initially ignored their attack or threat, with Radware saying companies that

were targeted last year could expect another letter and attack in the coming

months. "We asked for 10 bitcoin to be paid at (bitcoin address) to avoid

getting your whole network DDoSed. It's a long time overdue and we did not

receive payment. Why? What is wrong? Do you think you can mitigate our

attacks? Do you think that it was a prank or that we will just give up? In any

case, you are wrong," the second letter says, according to Radware. "The

perseverance, size and duration of the attack makes us believe that this group

has either been successful in receiving payments or they have extensive

financial resources to continue their attacks," the report states.

Five Reasons You Shouldn't Reproduce Issues in Remote Environments

When attempting to reproduce an issue across multiple environments, one area

that teams must have solid processes around is test data management. Test data

can be critical in the reproduction of bugs in that if you don’t have the

right test data in your environment, the bug may not be reproducible. Due to

the sheer size of production data sets, teams must often work with subsets of

that data across test environments. The holy grail of test data management

processes is to allow teams to easily and quickly subset production data based on

the data needed to reproduce an issue. In practice, things don’t always work

out so easily. It’s hard to know what attributes of your test data may be

influencing a specific bug. In addition, data security when dealing with PII

data can be a major challenge when subsets of data are used across

environments. Teams need to ensure that they are in compliance with corporate

data privacy standards by masking or generating new relevant data sets. Many

times it takes lots of logging and hands-on investigation to uncover how data

discrepancies can cause those hard-to-find bugs. If you cannot easily manage

and set up test data on demand, teams will suffer the consequences when it

comes to trying to reproduce bugs in remote environments.
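
The subsetting and masking steps described above can be sketched in a few lines of Python. This is only an illustration, not a production masking tool: the field names, the `subset_and_mask` helper, and the sample rows are all hypothetical, and a salted hash is used here simply to get stable placeholder values.

```python
import hashlib

# Hypothetical PII field names; a real schema will differ.
PII_FIELDS = ("name", "email")


def mask_value(value, salt="test-env"):
    """Replace a value with a stable placeholder token derived from a salted hash."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return "masked_" + digest[:12]


def subset_and_mask(records, predicate, pii_fields=PII_FIELDS):
    """Keep only the records matching predicate and mask their PII fields."""
    result = []
    for record in records:
        if not predicate(record):
            continue
        masked = dict(record)  # copy, so the source rows stay untouched
        for field in pii_fields:
            if field in masked:
                masked[field] = mask_value(str(masked[field]))
        result.append(masked)
    return result


# Made-up rows standing in for a production data set.
production_rows = [
    {"id": 1, "name": "Ada Example", "email": "ada@example.com", "country": "FI"},
    {"id": 2, "name": "Bob Example", "email": "bob@example.com", "country": "US"},
    {"id": 3, "name": "Cy Example", "email": "cy@example.com", "country": "FI"},
]

# Pull only the rows believed to be relevant to the bug being reproduced.
test_rows = subset_and_mask(production_rows, lambda r: r["country"] == "FI")
```

Because the same input always maps to the same token, relationships between rows survive masking, which matters when a bug depends on two records sharing a value.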

AI ethics: Learn the basics in this free online course

If you are interested, an excellent place to start might be the free online

course The Ethics of AI, offered by the University of Helsinki in partnership

with "public sector authorities" in Finland, the Netherlands, and the

UK. Anna-Mari Rusanen, a university lecturer in cognitive science at the

University of Helsinki and course coordinator, explains why the group

developed the course: "In recent years, algorithms have profoundly impacted

societies, businesses, and us as individuals. This raises ethical and legal

concerns. Although there is a consensus on the importance of ethical

evaluation, it is often the case that people do not know what the ethical

aspects are, or what questions to ask." Rusanen continues, "These

questions include how our data is used, who is responsible for decisions made

by computers, and whether, say, facial recognition systems are used in a way

that acknowledges human rights. In a broader sense, it's also about how we

wish to utilize advancing technical solutions." The course, according to

Rusanen, provides basic concepts and cognitive tools for people interested in

learning more about the societal and ethical aspects of AI. "Given the

interdisciplinary background of the team, we were able to handle many of the

topics in a multidisciplinary way," explains Rusanen.

Zero trust: A solution to many cybersecurity problems

CISOs of organizations that have been hit by the attackers are now mulling

over how to make sure that they’ve eradicated the attackers’ presence from

their networks, and those with very little risk tolerance may decide to “burn

down” their network and rebuild it. Whichever decision they end up making,

Touhill believes that implementing a zero trust security model across their

enterprise is essential to better protect their data, their reputation, and

their mission against all types of attackers. And, though a good start, this

should be followed by the implementation of the best modern security

technologies, such as software-defined perimeter (SDP), single packet

authorization (SPA), microsegmentation, DMARC (for email), identity and access

management (IDAM), and others. SDP, for example, is an effective, efficient,

and secure technology for secure remote access, which became one of the top

challenges organizations have been faced with due to the COVID-19 pandemic and

the massive pivot from the traditional office environment to a

work-from-anywhere environment. Virtual private network (VPN) technology,

which was the initial go-to tech for secure remote access for many

organizations, is over twenty years old and, from a security standpoint, very

brittle, he says.

Comparing Different AI Approaches to Email Security

Supervised machine learning involves harnessing an extremely large data set

with thousands or millions of emails. Once these emails have come through,

an AI is trained to look for common patterns in malicious emails. The system

then updates its models, rule sets, and blacklists based on that data. This

method certainly represents an improvement over traditional rules and

signatures, but it does not escape the fact that it is still reactive and

unable to stop new attack infrastructure or new types of email attacks. It

is simply automating that flawed, traditional approach – only, instead of

having a human update the rules and signatures, a machine is updating them.

Relying on this approach alone has one basic but critical flaw: It

does not enable you to stop new types of attacks it has never seen before.

It accepts there has to be a "patient zero" – or first victim – in order to

succeed. The industry is beginning to acknowledge the challenges with this

approach, and huge amounts of resources – both automated systems and

security researchers – are being thrown into minimizing its limitations.

This includes leveraging a technique called "data augmentation," which

involves taking a malicious email that slipped through and generating many

"training samples" using open source text augmentation libraries to create

"similar" emails.
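
To make the "data augmentation" idea concrete, here is a minimal sketch that generates variants of one email by swapping words for synonyms. It does not use any particular augmentation library; the synonym table, the `augment` function, and the seed text are hypothetical and chosen only for illustration.

```python
import itertools

# Hypothetical synonym table; real augmentation libraries use much larger resources.
SYNONYMS = {
    "urgent": ["immediate", "time-sensitive"],
    "invoice": ["bill", "statement"],
    "pay": ["settle", "remit"],
}


def augment(text, synonyms=SYNONYMS, limit=10):
    """Generate up to `limit` variants of text by substituting listed synonyms."""
    words = text.split()
    # For each word: the original followed by any known alternatives.
    options = [[w] + synonyms.get(w.lower(), []) for w in words]
    variants = []
    for combo in itertools.product(*options):
        candidate = " ".join(combo)
        if candidate != text:  # skip the unmodified original
            variants.append(candidate)
        if len(variants) >= limit:
            break
    return variants


# A made-up email that "slipped through", used as the seed.
seed = "urgent invoice attached please pay today"
training_samples = augment(seed)
```

Every variant is still derived from an email that has already been seen, which is the limitation the passage describes: the approach widens coverage around known attacks rather than anticipating new ones.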

Why Is Agile Methods Literacy Key To AI Competency Enablement?

First, quality AI is a highly iterative experimentation, design, build and

review process. Organizations that are aspiring to build strong AI and data

sciences competency centers will flounder if their core cultures are not

building agile skills into all operating functions, from top to bottom.

Given the incredible speed and uncertainties of everything becoming more

digital and smarter, the need for all talent to continually adapt,

reflect, and make decisions based on new information is a business

imperative. Leaders do not have the luxury of procrastinating before

acting on new insights and making decisions with confidence.

Sometimes, cultures build a capacity for inaction rather than action-oriented

behavior. Agile leadership demands rapid precision and the involvement of diverse

stakeholders, which in turn yields more positive change dynamics (momentum)

and, more importantly, grows innovation capacity as a result of this energy.

In a recent Harvard article, the authors pointed out that, “If people

lack the right mindset to change and the current organizational practices

are flawed, digital transformation will simply magnify those flaws.” Truly

agile organizations are able to capitalize on new information and make the

next move because they have what we call the capacity to act.

10 ways to prep for (and ace) a security job interview

Hiring managers typically look for strong technical skills and specific

cybersecurity experience in the candidates they want to interview,

particularly for candidates filling entry- and mid-level positions within

enterprise security. But managers use interviews to determine how well

candidates can apply those skills and, more specifically, whether candidates

can apply those skills to support the broader objectives of the

organization, says Sounil Yu, CISO-in-residence at YL Ventures. As such, Yu

says he and others look for “T-shaped individuals”—those with deep expertise

in one area but with general knowledge across the broader areas of business.

The candidates who get job offers are those who have, and demonstrate, both.

“Security is a multidisciplinary problem, so that depth is an important

asset,” Yu adds. Candidates love to say they’re passionate about security,

but many can’t figure out how to showcase it. Those who can, however, stand

out. Yu once interviewed a candidate via video and could see a server rack

in the background of this person’s home office. “He clearly liked tinkering

outside of work. You could see that he had tech skills and a passion for

them and a drive to learn about new technologies,” Yu says.

The changing role of IT & security – navigating remote work cybersecurity threats

The move to remote working and the complication of multiple devices and

locations is also raising important questions related to software

licensing. Are you licensed for the apps that people are using at home, or

are you licensed on their computer in the office and on their computer at

home? Several businesses are now having to buy thousands of additional

software licenses so that employees can work on more than one computer, at a

time when cost optimisation is extremely important. One of the related

threats to businesses is running afoul of regulatory data privacy

protections like GDPR and CCPA, among others. Given the current state of

things, it is unlikely that a regulator would currently be hunting for

companies that might be improperly managing employee and customer data. It

appears regulators are largely being more lenient at this stage while

companies are busy just trying to survive. Whilst it is reasonable to

assume that, for a time, this will continue, there will come a time when

we see a return to enforcement and, in the meantime, there is no guarantee

that regulators will not review issues that come up as a result of a data

breach or loss. It’s always important to reinforce the best security

practices to your workforce, but it is especially important when your

employees are out of their normal routines.

Weighing Doubts of Transformation in the Face of the Future

You don’t have to [change], but you will be left behind. Seventy-four

percent of CEOs believe that their talent force and organization need to be

a digitally transformed organization, yet they feel like only 17% of their

talent is capable and ready to do that. That gap is glaring. That’s coming

from the tops of organizations and businesses. The first mover advantage has

kind of passed already. Now we’re getting into the phase of cloud migration

and the concept of everything-as-a-service. Digital transformation is easier

to attain. You don’t have to be the first mover or early adopter. The

companies that help you live, work, and play inside your home were pretty

resilient during the COVID-19 pandemic. Tech, media, and fitness companies

like NordicTrack and Peloton that helped you stay inside your house, they

were the ones that needed to transform digitally immediately to deal with

the significant increase in demand along with significant supply chain

challenges. Now we are seeing other industries that saw a bit of a pause

during COVID -- consumer, travel, entertainment, energy -- those businesses

are seeing or expecting this uptick in the summer travel period, the pent-up

demand of Americans. Interest rates are very low, and they haven’t been able

to spend [as much] money for the last 12 to 18 months by the time the summer

comes around.

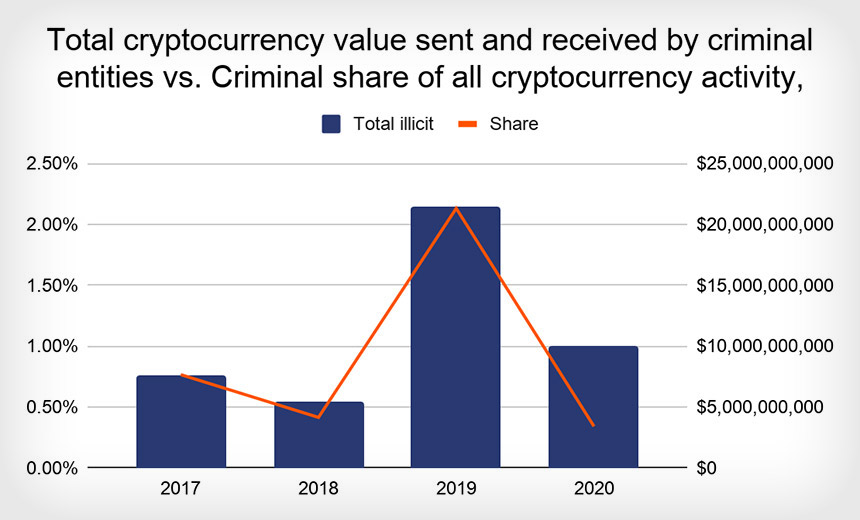

Good News: Cryptocurrency-Enabled Crime Took a Dive in 2020

While the total cryptocurrency funds received by illicit entities declined in

2020, Chainalysis reports, criminals continue to love cryptocurrency - with

bitcoin still dominating - because using pseudonymizing digital currencies

gives them a way to easily receive funds from victims. Cryptocurrency also

supports darknet market transactions, with many markets offering escrow

services to help protect buyers and sellers against fraud. Using

cryptocurrency, criminals can access a variety of products and services, such

as copies of malware or hacking tools, complete sets of credit card details

known as fullz, and tumbling or mixing services, which are provided by a

third-party service or technology that attempts to mix bitcoins by routing

them between numerous addresses, as a way of laundering the bitcoins.

Criminals have also been using a legitimate concept called "coinjoin," which

is sometimes built into cryptocurrency wallets as a feature. It allows users

to mix virtual coins together while paying for separate transactions, which

can complicate attempts to trace any individual transactions. Intelligence and

law enforcement agencies have some closely held ability to correlate the

cashing out of cryptocurrency with deposits that get made into individuals'

bank accounts.
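
As a rough illustration of why a coinjoin-style transaction complicates tracing, the toy model below merges several equal-value payments into one joint transaction and shuffles the outputs. It is a simplification under stated assumptions, not how any specific wallet implements the feature; the `coinjoin` function, the participant names, and the amounts are hypothetical.

```python
import math
import random


def coinjoin(payments, rng=None):
    """Merge equal-value payments into one joint transaction (toy model).

    payments: list of (sender, recipient, amount) tuples with identical amounts.
    """
    rng = rng or random.Random()
    amounts = {amount for _, _, amount in payments}
    if len(amounts) != 1:
        raise ValueError("this toy model assumes equal amounts")
    inputs = [(sender, amount) for sender, _, amount in payments]
    outputs = [(recipient, amount) for _, recipient, amount in payments]
    rng.shuffle(outputs)  # output order no longer mirrors input order
    return {"inputs": inputs, "outputs": outputs}


# Made-up participants, each paying the same amount to a different recipient.
payments = [("alice", "shop_a", 1), ("bob", "shop_b", 1), ("carol", "shop_c", 1)]
tx = coinjoin(payments, random.Random(0))

# With n equal-value inputs and outputs, an observer looking only at this
# transaction sees n! equally plausible one-to-one input/output pairings.
possible_links = math.factorial(len(tx["inputs"]))
```

The joint transaction still balances, but the on-chain record alone no longer says which input funded which output; linking them requires outside information such as the cash-out correlations mentioned above.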

Quote for the day:

"To have long term success as a coach or in any position of leadership, you have to be obsessed in some way." -- Pat Riley