The lessons SaaS businesses must learn from 2020

Put simply, there are two types of churn for SaaS businesses, and two stages

when it happens. Voluntary, or “active” churn is when a customer chooses to

cancel their subscription with a business. Involuntary, or “passive” churn

comes from subscriptions being cancelled due to accidental reasons, like

failed payments. Typically, you would expect 20-40% of churn to be

involuntary, and most of that will come from card payment users whose

charges do not go through. This is positive, because it means you can

put in place measures for better payment acceptance to stop involuntary

churn from happening. However, because of the Covid

crisis, and the higher number of companies competing for the same number of

customers, it’s likely that the percentage of voluntary churn will be higher

in 2021, as customers shop around for a better deal. At Paddle, we talk to

around 200 new software companies a month, as well as our 2,000 existing

customers when advising them on how to sell into over 200 countries across the

world. Therefore, we’ve seen first-hand the impact churn reduction strategies

can have on a software business’s growth.
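
The split between the two churn types can be made concrete with a little arithmetic. The sketch below is illustrative only: the `Cancellation` record, the reason labels, and the sample numbers are assumptions made for the example, not Paddle data or any Paddle API.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical reason labels; a real billing system will use its own codes.
INVOLUNTARY_REASONS = {"payment_failed", "card_expired"}


@dataclass(frozen=True)
class Cancellation:
    customer_id: str
    reason: str  # e.g. "payment_failed" or "customer_request"


def churn_breakdown(cancellations):
    """Return the share of churn that is involuntary vs. voluntary."""
    counts = Counter(
        "involuntary" if c.reason in INVOLUNTARY_REASONS else "voluntary"
        for c in cancellations
    )
    total = sum(counts.values())
    if total == 0:
        return {"involuntary": 0.0, "voluntary": 0.0}
    return {kind: counts[kind] / total for kind in ("involuntary", "voluntary")}


# Made-up sample: 3 of 10 cancellations come from failed payments.
sample = (
    [Cancellation(f"c{i}", "payment_failed") for i in range(3)]
    + [Cancellation(f"c{i}", "customer_request") for i in range(3, 10)]
)
shares = churn_breakdown(sample)
print(f"involuntary: {shares['involuntary']:.0%}, voluntary: {shares['voluntary']:.0%}")
```

Tracking the involuntary share separately shows how much churn could, in principle, be addressed through better payment acceptance rather than through pricing or product changes.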

Mac Attackers Remain Focused Mainly on Adware, Fooling Users

A recent report by The Citizen Lab at the University of Toronto underscored

that the commercial sale of zero-click exploits in iMessages, for example,

continues to allow governments to buy access to target dissidents. Now,

malware families that have previously only targeted Windows, and sometimes

Linux, are also being ported to target Macs, says Ian Davis, a senior threat

researcher at BlackBerry. "Historically MacOS threats mainly centered around

adware and trojanized downloaders of well-known software," he says. "While

these less-than-lethal families are still the majority of encountered samples,

advanced attacks and toolsets are now being developed and deployed along with

their counterparts for Windows and Linux." Overall, the sophistication of

MacOS threats is increasing, the two researchers say. Previously encountered

families on Windows or Linux are also now targeting MacOS systems. In 2020,

the community saw increased cases of ransomware, botnet campaigns, and

information-stealing backdoors in MacOS environments.

Mac User = The Vulnerability

While at least a quarter of the threats encountered by Windows

systems are malware, less than 1% of those encountered by Mac systems are

considered malware, Malwarebytes stated in its February report. Instead,

attackers targeting the Mac look to fool the user into taking the necessary

steps to allow malware to run.

2021 blockchain predictions from Energy Web

Numerous countries—from China and Singapore in Asia to Sweden and France in

Europe to Saudi Arabia and the United Arab Emirates in the Middle East—are all

exploring central bank digital currency (CBDC) equivalents of their

respective fiat currencies. Crypto exchanges like Kraken are taking the

unprecedented step of getting bank licenses. Decentralized exchanges are

overtaking centralized incumbents (in August, for example, Uniswap surpassed

Coinbase Pro in trading volume). And in mid-December Bitcoin reached an

all-time high, cresting US$23,000 for the first time, driven mainly this time

by the interest of large enterprises. Meanwhile, the ‘data for free’ model

that has existed for years is coming to an end, and not just because of

legislation such as the EU’s GDPR and California’s CCPA. Consumers are

fighting back against losing control of their own data as tech giants find

themselves the target of lawsuits. In April, a U.S. federal appeals court

revived litigation that accused Facebook of violating users’ privacy rights by

illegally tracking their Internet activity. In September, a coalition of

Canadian provinces sued Google in a proposed class action lawsuit alleging the

Internet giant was collecting data without consent. That same month the Irish

Data Protection Commission issued a preliminary decision to halt Facebook’s

trans-Atlantic data transfers.

Team-Level Agile Anti-Patterns - Why They Exist and What to Do about Them

At the team level, lack of adequate training, mentoring and coaching is

responsible for a good bit of it, but it is hard to divorce the team from the

organisation. Negative organisational culture will of course affect its teams.

Agile can be counterintuitive, especially when it contradicts traditional

business experience, but a good Scrum Master/Coach should explain not only a

best practice, but also why it is best practice and what goes wrong

if the anti-pattern remains unaddressed. Some

examples in my personal experience: I once worked on a team where a tech lead

met with the rest of the Development team immediately after Sprint Planning to

allocate Stories to each member of the team. I initially didn’t know this was

happening, but my suspicions were soon raised by a couple of

things: Sprint Backlog items were not being picked up in priority order,

and the tech lead only worked on the easier items. I asked

individuals why they were working on lower priority stories when there was a

higher priority story remaining in the To Do column. That’s when it came out

in the wash. The tech lead didn’t mean any harm. When I spoke with him, he

told me that’s what was expected of him by managers in his previous postings.

The Great Data Protection Debate: India’s new Data Protection Bill

The Data Protection Bill suggests that personal data should include data

“…relating to a natural person who is directly or indirectly identifiable,

having regard to any characteristic, trait, attribute or any other feature of

the identity… or any combination of such features, or any combination of such

features with any other information…” [Section 3(28)]. Verbiage apart, the

Bill essentially says that any data that identifies you in connection with any

other information is your personal data. Naturally, this creates a recipe for

competing claims. What if ‘any other information’ were to include somebody

else’s personal data? All these complications have led data experts to argue

that citizens should hold control over their data collectively, rather than

individually. These ‘data-co-operatives’ would act as trade unions within

conventional markets. Among other things, they may negotiate rates for data, ensure

quality digital output, invoice organizations that benefit from the output,

and distribute the profits. Global data trusts may not be far away. In

January, Microsoft’s CEO, Satya Nadella, at the World Economic Forum called

for greater respect for “data dignity” - meaning individuals should have

greater control over their data and a larger share in the value it creates.

The need for zero trust security a certainty for an uncertain 2021

After a few years of relative predictability, data privacy promises to get

more “interesting” in 2021. The GDPR and CCPA regulatory regimes each notched

milestones in 2020. The GDPR (as of this writing) had assessed a record level

of fines totaling €220 million. California’s CCPA enforcement kicked in on

July 1st, and voters in that state passed additional privacy restrictions via

a November ballot initiative (the California Privacy Rights Act or CPRA). The

CPRA extends and modifies the CCPA, with new mandates taking effect at the end

of 2022. Here’s where things are going to get interesting. Optimistically,

effective COVID-19 vaccines will make a return to in-person work possible by

mid-year. But it’s just as likely that delays in distribution, reluctance to

inoculate and lingering stress on the healthcare system will extend

work-from-home practices for many through 2021. Either way, organizations will

face obligations and temptations to collect more data on their employees –

about their immunization status, health situation, work habits, even their

social interaction patterns – than ever before. Today, most practitioners

focus on risks from external threat actors. But with a bracing action in

October, GDPR regulators showed they’re equally concerned with human

resources data when they slapped clothing retailer H&M with a €35 million

fine for illegal employee surveillance.

DevSecOps: The good, the bad, and the ugly

DevSecOps requires patience and tenacity. Any DevSecOps implementation takes a

minimum of a year—anything less than that is incomplete. It will involve a lot

of planning and designing before you start setting up the solution. You must

first identify the gaps in your current process and then determine the tools

required to support the process you intend to implement. You will need to

coordinate with a variety of teams to get buy-in and instruct them to

implement the required changes. None of this happens overnight. Making changes

to your process affects all people involved in the process and all

applications following the process. If all your applications are being scanned

using a common set of libraries, any change in these libraries will impact all

apps unless you put in specific conditions. Adding a new application to this

process may take a long time. Onboarding .NET applications usually takes a lot

more time because they must build correctly. Visual Studio tends to hide a lot

of build errors and provides dependencies at runtime; this is less true for

MSBuild. When the app team builds an application using Visual Studio

and checks it in, an automated process using the MSBuild command line can

break for a variety of reasons.
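
One way to read the "specific conditions" mentioned above is as per-application overrides layered on top of a shared scan configuration. The sketch below is a minimal illustration under that assumption: the setting names (`scanner_version`, `build_tool`, `fail_on`) and the application names are hypothetical and do not reflect any particular scanning product.

```python
from copy import deepcopy

# Shared defaults applied to every onboarded application (hypothetical keys).
SHARED_SCAN_CONFIG = {
    "scanner_version": "2.0",
    "build_tool": "generic",
    "fail_on": "high",
}

# Per-application conditions that shield an app from a shared change.
APP_OVERRIDES = {
    "legacy-dotnet-app": {"scanner_version": "1.9", "build_tool": "msbuild"},
}


def effective_config(app_name, shared=SHARED_SCAN_CONFIG, overrides=APP_OVERRIDES):
    """Merge shared defaults with any overrides registered for one app."""
    config = deepcopy(shared)
    config.update(overrides.get(app_name, {}))
    return config


print(effective_config("new-service"))
print(effective_config("legacy-dotnet-app"))
```

An application without an entry in the overrides picks up every change to the shared defaults, which is the blast radius the text warns about; an application with an entry keeps its pinned values until someone deliberately removes them.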

Reference Architecture For Healthcare – Core Capabilities

Users of the reference architecture are planners, managers, and architects.

They need to be able to deal with various aspects – the delivery of

healthcare, use of technology, commercial viability, adherence to quality,

regulatory compliance. They need to plan, establish, and maintain capabilities

required in their healthcare organization. For these users, we need to provide

a formal and versioned specification that outlines the elements of the

reference architecture, and how these elements relate to each other. In

addition, this specification needs to provide guidance on how to implement and

use the reference architecture. Making the reference architecture actionable

calls for a reference implementation, which is a released model of the

specification. Ideally, the authors of the reference architecture should make

this reference implementation available for download. Let us assume the

reference implementation is developed in a specific modeling tool. For users

of different modeling tools, the reference implementation should also be

available in a neutral industry-standard exchange format, such as XMI or MOF.

... In many countries, healthcare organizations need to establish a Quality

Management System. They want to use a blueprint to achieve compliance with ISO

9001 for healthcare.

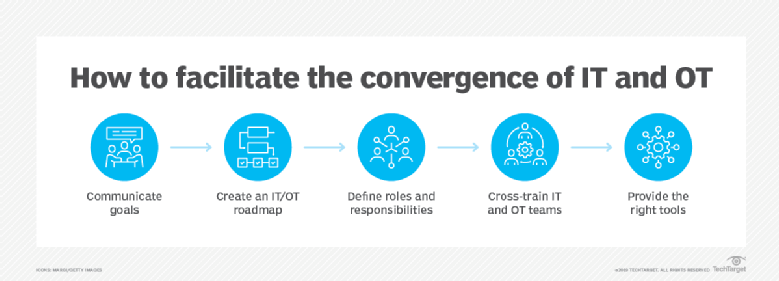

Trends push IT and OT convergence opportunities and challenges

Historically, IT excluded real-time OT localized data and OT lacked IT data

aggregation. Edge AI capabilities require both real-time computing and

aggregation. Organizations have struggled to incorporate IoT and edge data

into current processes because the data must be actionable in real-time,

Devine said. Organizations must feed the data from the physical OT system to

learn from it and make decisions from it. To aggregate data, organizations

must break down data silos in different systems, such as manufacturing supply

chains. Approximately 75% of data loses its value in milliseconds and data is

only valuable to organizations if it is actionable, Devine said. If

organizations must send data from the edge to the cloud, then real-time

actions aren't viable. The challenge is getting an aggregate view across data

silos to take localized action, but when real-time aggregation is achieved,

organizations can derive more insights and look for new revenue opportunities.

"IoT is the great provider of data. CEOs and CIOs [must] continually look to

see how data can fuel digital transformation and drive innovation. IoT data is

the fuel for analytics, machine learning… but it's also the source for CIOs to

help fuel new business models [such as] as-a-service [and] work from

anywhere," Turner said.

Using Microsoft 365 Defender to protect against Solorigate

From the threat analytics report, you can quickly locate devices with alerts

related to the attack. The Devices with alerts chart identifies devices with

malicious components or activities known to be directly related to Solorigate.

Click through to get the list of alerts and investigate. Some Solorigate

activities may not be directly tied to this specific threat but will trigger

alerts due to generally suspicious or malicious behaviors. All alerts in

Microsoft 365 Defender provided by different Microsoft 365 products are

correlated into incidents. Incidents help you see the relationship between

detected activities, better understand the end-to-end picture of the attack, and

investigate, contain, and remediate the threat in a consolidated manner. ... The

threat analytics report also provides advanced hunting queries that can help

analysts locate additional related or similar activities across endpoint,

identity, and cloud. Advanced hunting uses a rich set of data sources, but in

response to Solorigate, Microsoft has enabled streaming of Azure Active

Directory (Azure AD) audit logs into advanced hunting, available for all

customers in public preview. These logs provide traceability for all changes

done by various features within Azure AD.

Quote for the day:

"As a leader, you set the tone for your entire team. If you have a positive attitude, your team will achieve much more." -- Colin Powell