How Emerging Demands Of AI-Powered Solutions Help Gain Momentum Of Businesses

AI helps take the BI game leaps and bounds ahead with machine learning and

deep learning. It empowers BI with the ability to analyze data coming from

multiple sources, learn from this data in real time, and provide accurate,

granular predictive insights for faster business growth. AI stays a step

ahead of humans when analyzing large data sets at scale, with speed and

accuracy. The influence of AI is not limited to analytics; it extends to

data engineering as well. Data coming from multiple structured, unstructured,

and semi-structured sources needs to be transformed from silos into unified

data. AI can accelerate and automate this process, creating a single view,

saving data analysts' time, and giving business users much-needed independence.

AI-powered NLP bots take BI to the next level by enabling users to

extract insights via voice or chat in any language. For example, these BI

bots can easily answer questions like 'What is the sales forecast for the next

two quarters?' With this, business users can skip any complex query and leave

it up to the bots to process the analysis.

How to deal with the escalating phishing threat

“Working from home, where there are more distractions, makes it even less

likely that people really pay attention to these trainings. That’s why it’s

not uncommon to see the same people who tune out training falling for scams

again and again,” he noted. That’s why defenders must preempt attacks, he

says, and reinforce a lesson during a live attack. When something gets through

and someone clicks on a malicious URL, defenders must be able to

simultaneously block the attack and show the victim what the phisher was

attempting to do. Harr, who has over 20 years of experience as a senior

executive and GM at industry-leading security and storage companies and as a

serial entrepreneur and CEO at multiple successful start-ups, is now leading

SlashNext, a cybersecurity startup that uses AI to predict and protect

enterprise users from phishing threats. He says that most CISOs assume

phishing is a corporate email problem and their current line of defense is

adequate, but they are wrong. “We are detecting 21,000 new phishing attacks a

day, many of which have moved beyond corporate email and simple credential

stealing. These attacks can easily evade email phishing defenses that rely on

static, reputation-based detection.”

The cryptocurrency sector is overflowing with dead projects

It’s a good question if the world really needs a blockchain-based information

and trading platform for the pet market. I wouldn’t say there are many

problems with over-centralization there. Pet shops are usually chosen by

customers after analyzing brand reputation and online presence. Some problems

that customers in this market may face include unreliable information about

the acquired animal’s health or previous owners. However, these difficulties

are a legal problem rather than a technical one, and are unlikely to be solved

using blockchain technology. Moreover, since animal welfare laws vary between

different countries, creating a unified international platform in this field

is a legally challenging task, hardly suitable for a small technological

startup. The Petchain project team consisted mainly of no-names who had no

proven experience in any serious projects. It was not even possible to say for

sure whether these were real people — some of the project advisors turned out

to have been presented with fake photos. Despite some marketing efforts, no

serious funding was attracted to the project. At the moment, the official

website of the project is inactive and its social media accounts haven’t been

updated for more than a year.

The Road to MicroProfile 4.0

The primary driver behind creating the MicroProfile Working Group is to close

intellectual property gaps identified by the Eclipse Foundation for

specification projects. So, there are more legal protections in place now that

MicroProfile is a Working Group. A Working Group also places more processes on

MicroProfile. Historically, MicroProfile moved quickly with minimal process

and late-binding decisions. It was quite an agile project that delivered

specifications at quite a quick pace. However, I personally feel like we were

reaching a point where adding *some* process can benefit the project. For

instance, we now have to put more thought and formality up-front into planning

a specification, which requires a Steering Committee vote. Better planning

gives implementors, tool vendors, and the community more up-front visibility

into what is coming and time to prepare. However, we codified "limited processes" in

the MicroProfile Charter to keep processes to a minimum. ... A big challenge

was switching from being a fast-moving agile project to fitting into the

process structure required by a Working Group. We wanted to maintain as much

of our existing culture as possible because the community was consistently

delivering three annual releases.

Blockchain adoption 2021: Going mainstream through enterprise use

According to Bennet, many of the blockchain-based systems that are live today

share a common factor: less time is needed to resolve discrepancies. In some

cases, this could even be instant. Bennet noted this common factor applies to

supply chain use cases as well as in financial services: “It’s not just about

needing fewer people to accomplish certain tasks; it’s also about shortening

elapsed time and freeing up liquidity. A key point is that it’s possible to

make it happen today, in the context of existing processes and operating

models.” While that may be so, Bennet shared that the more long-term strategic

projects in financial services tend to revolve around potential changes in

market structure and operating models. Many of these cases also require

regulatory adjustments. “This takes time, resource and effort. That’s the main

reason why COVID-related volatility and uncertainty has led many banks to pull

back from some of those more long-term DLT-related projects for the time

being,” Bennet said. The report also states that almost all the initiatives

set to go from pilot into production next year will run on enterprise

blockchain platforms that utilize the cloud. These most likely will include

solutions from Alibaba, Huawei, IBM, Microsoft, OneConnect and Oracle.

How open source makes me a better manager

As an open source enthusiast, it was easy for me to transition my management

style to the open management philosophy, which fosters transparency,

inclusivity, adaptability, and collaboration. As an open manager, one of my

primary goals is to engage and empower associates to be their best. It is easy

to adopt this philosophy when you understand the open source values. By being

transparent, I help create the context for the team and the "why." This is a

building block in creating trust. Being consciously inclusive is another value

that I regard highly. Making sure everyone in the team is included and

everyone's voice is heard is extremely important for individual and

organizational growth. In an environment that is constantly evolving and where

innovation is key, being nimble and adaptable is of utmost importance.

Encouraging associates' growth mindset and continuous learning helps foster

these traits. For effective collaboration, I believe we need an environment

where there is trust, open communication, and respect. By paying attention to

these values, an open manager can create an environment that is inclusive,

treats others with respect, and encourages everyone to support each other.

Marriott Hit With $24 Million GDPR Privacy Fine Over Breach

One notable aspect of the fine imposed on Marriott is that it is just

one-fifth of the fine that the ICO originally recommended in July 2019, which

Marriott had contested. But the reduction is not nearly as big as with the

final fine that the ICO recently imposed on British Airways, in connection

with a 2018 data breach that exposed the personal information of about 430,000

customers, with 244,000 possibly having their names, addresses, payment card

numbers and CVVs compromised. In its initial July 2019 penalty notice, the ICO

had proposed fining BA a record £184 million ($238 million). But last month,

the regulator issued a final fine of just £20 million ($26 million). Legal

experts say the final fines being lower than the proposed penalties is not

surprising. Indeed, the ICO earlier this year noted that because of the

ongoing coronavirus outbreak, it planned to adjust its regulatory approach,

not least because of the staffing and financial impact that COVID-19 was

having on organizations. Under GDPR, after proposing a fine, a regulator has

12 months to issue a final fine, unless it proposes delaying the imposition of

the fine and the organization that is being investigated agrees.

What Is The Value Proposition of Enterprise Architecture Today?

The first one of these is Strategy Advancement. This is basically concerned

with how the business can achieve its target outcomes and also identifying the

means to do so. So what are we trying to achieve – do we know, do we have

doubts about that? If we’re sure our goals are solid, then how do we make them

happen, how do we ensure that every investment, or strategic decision, or new

business process we set up is in line with and actively supports achieving those

goals? EA connects all these concepts across the different enterprise domains

beautifully and when done in a leading platform like HoriZZon, the quality of

the business intelligence insights that can be produced and delivered to the

relevant audiences, in order to make sure everyone’s eyes remain on the prize,

is invaluable. So strategy advancement is key in ensuring coordinated change

across the entire business. The second one of these areas is Risk

Identification & Mitigation. Security and the risks to personal data have

never been more relevant than now. This area of enterprise architecture’s

value proposition deals with identifying the risks faced by the organization

in a way that allows architects to engage in a meaningful conversation with

stakeholders on the business side about how we can address these risks.

Three Intelligent Automation Capabilities to Look for When Evaluating RPA Tools

Making decisions based on rules only works when outcomes are predictable. What

happens when outcomes are less certain and conditions more varied — conditions

under which people have to make decisions all the time? For instance, how

would a bot respond to a query like "Is the supplier reliable?" Choosing an

answer like Extremely Reliable, Very Reliable, Sometimes Reliable, or Not

Reliable requires an element of human reasoning. Bots can achieve this by

applying an AI technique called fuzzy logic. Fuzzy logic uses mathematical

models defined by the RPA developer to represent variations and uncertainty.

For example, on a scale from 1 to 10, an Extremely Reliable supplier may be

rated between 7 and 10, whereas a Very Reliable supplier may be rated between

6 and 8. The bot uses these mathematical models to convert precise input data

into fuzzy input values. The bot then applies business rules defined by the

RPA developer to the fuzzy input values. The mathematical model is then

applied to the fuzzy output values to generate the result. ... As the amount

of digital work increases, RPA solutions need scalability to provide greater

performance capacity. Most RPA vendors solve this problem by enabling

customers to add more bots to scale capacity horizontally.
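
The fuzzy logic flow described earlier in this excerpt (convert precise inputs into fuzzy values, apply business rules, then convert the fuzzy output back into a result) can be illustrated with a minimal Python sketch. Everything specific here is an assumption made for illustration and does not come from the article or any RPA product: the two input scores (`on_time_score`, `quality_score`), the triangular membership shapes, the three rules, and the weighted-average step used to produce the final number.

```python
def triangle(x, left, peak, right):
    """Triangular membership: 0 at or beyond left/right, 1 at peak."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


def fuzzify(score):
    """Convert a crisp 1-10 score into degrees of membership (assumed shapes)."""
    return {
        "low": triangle(score, -4.0, 1.0, 6.0),
        "medium": triangle(score, 2.0, 5.5, 9.0),
        "high": triangle(score, 5.0, 10.0, 15.0),
    }


# Representative output value for each reliability level (assumed).
OUTPUT_CENTERS = {"low": 2.0, "medium": 5.5, "high": 9.0}


def supplier_reliability(on_time_score, quality_score):
    """Return (crisp reliability on the 1-10 scale, rule strengths)."""
    t = fuzzify(on_time_score)
    q = fuzzify(quality_score)
    # Hypothetical business rules: AND is min, OR is max.
    strengths = {
        "high": min(t["high"], q["high"]),
        "medium": max(
            min(t["medium"], q["medium"]),
            min(t["high"], q["medium"]),
            min(t["medium"], q["high"]),
        ),
        "low": max(t["low"], q["low"]),
    }
    total = sum(strengths.values())
    if total == 0:
        raise ValueError("no rule fired; scores must lie in the 1-10 range")
    # Weighted average of the output centers turns rule strengths into one number.
    crisp = sum(strengths[k] * OUTPUT_CENTERS[k] for k in strengths) / total
    return crisp, strengths


if __name__ == "__main__":
    score, strengths = supplier_reliability(on_time_score=8, quality_score=6)
    print(round(score, 2), strengths)
```

Because the membership ranges overlap, a supplier can be partly "medium" and partly "high" at the same time, which is what lets the bot grade borderline cases instead of forcing a hard cutoff.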

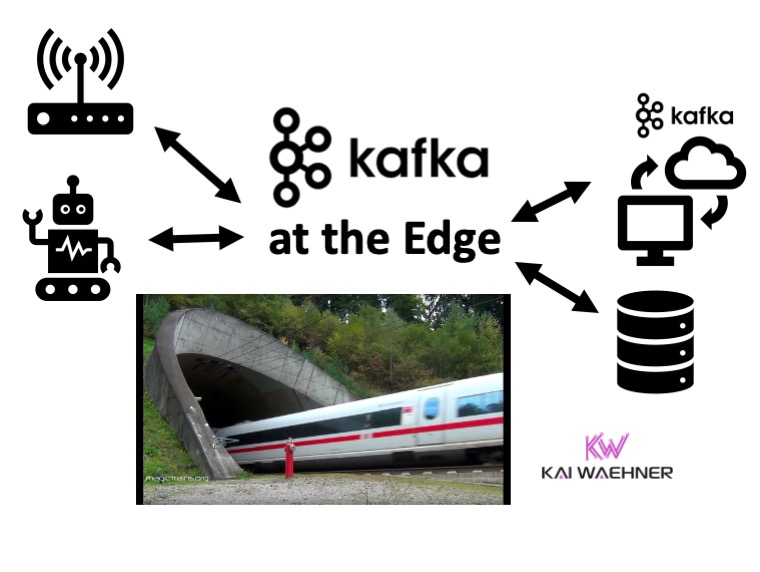

How Data Gravity Is Forcing a Shift to a Data-Centric Enterprise Architecture

The new demands brought on by AI and ML create new opportunities for

data-centric architecture that supports businesses and their need to operate

ubiquitously so they can meet customer expectations and make business

decisions on-demand. It’s informed by real-time intelligence to power

innovation and scale digital business. ... With a modernized infrastructure

strategy, enterprises can support the influx of data from several users,

locations, clouds, and networks and create centers of data exchange. Traffic

can then be aggregated and maintained via public or private clouds, at the

core or the edge, and from every point of business presence, helping lessen

data gravity barriers and their effects. By implementing a secure, hybrid IT and

data-centric architecture globally at key points of business presence,

businesses can harness data to create centers of data exchange for better

digital decision-making. Data gravity impacts businesses of all sizes, and

every industry has unique requirements around addressing data gravity. In

order for the industry to tackle the next era of compute, companies, including

data center, cloud, and HPC solution providers, are coming together to help

mitigate the challenges associated with data gravity by creating an ecosystem

of partners so that enterprises can meet their global coverage, capacity, and

ecosystem connectivity needs.

Quote for the day:

"The greatest thing is, at any moment, to be willing to give up who we are in order to become all that we can be." -- Max de Pree