What Is Dark Data Within An Organisation?

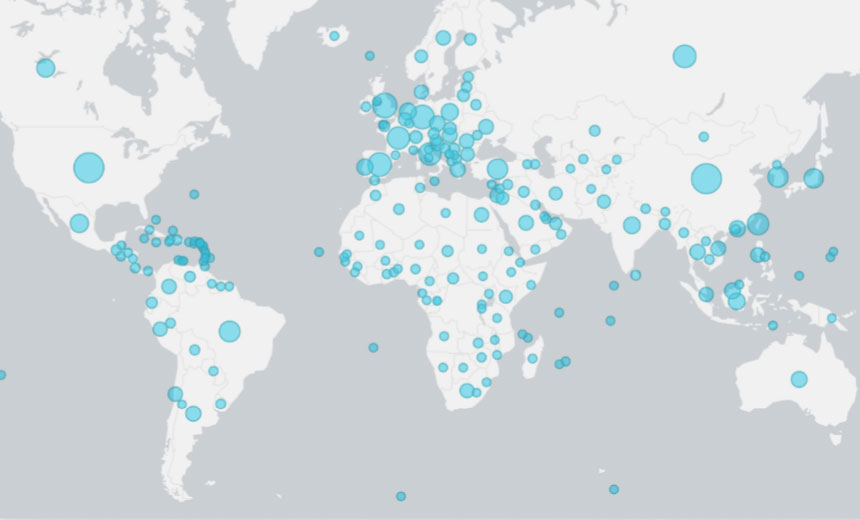

In the universe of information assets, data may be deemed dark for a number of
reasons: because it is unstructured, or because it sits behind a firewall. It
may also be dark because of its speed or volume, or because people simply have
not made the connections between different data sets. The data may not reside
in a relational database, or the techniques required to leverage it
effectively may not have existed until recently. Dark data is often text-based
and stays within company firewalls but remains very much untapped. For
instance, supply chain complexity is a significant challenge for
organisations. The supply chain is a data-driven industry spanning a network
of global suppliers, distribution channels and customers. This industry churns
out data in huge volumes, yet only an estimated 5% of that data is being used.
So while 95% of such data is not being utilised for analytics, it presents an
opportunity for big data technologies to bring this dark data to light. To
date, organisations have explored only a small fraction of the digital
universe for data analytic value. Dark analytics is about turning dark data
into intelligence and insight that a company can use.

Quantum computing meets cloud computing

As part of Leap, developers can also use a feature called the hybrid solver
service (HSS), which combines both quantum and classical resources to solve
computational problems. This "best-of-both-worlds" approach, according to
D-Wave, enables users to submit problems of ever-larger sizes and
complexities. Advantage comes with an improved HSS, which can run
applications with up to one million variables – a jump from the previous
generation of the technology, in which developers could only work with 10,000
variables. "When we launched Leap last February, we thought that we were at
the beginning of being able to support production-scale applications," Alan
Baratz, the CEO of D-Wave, told ZDNet. "For some applications, that was the
case, but it was still at the small end of production-scale applications."
"With the million variables on the new hybrid solver, we really are at the
point where we are able to support a broader array of applications," he
continued. A number of firms, in fact, have already come to D-Wave with a
business problem, and a quantum-enabled solution in mind. According to Baratz,
in many cases customers are already managing the small-scale deployment of
quantum services, and are now on the path to full-scale implementation.

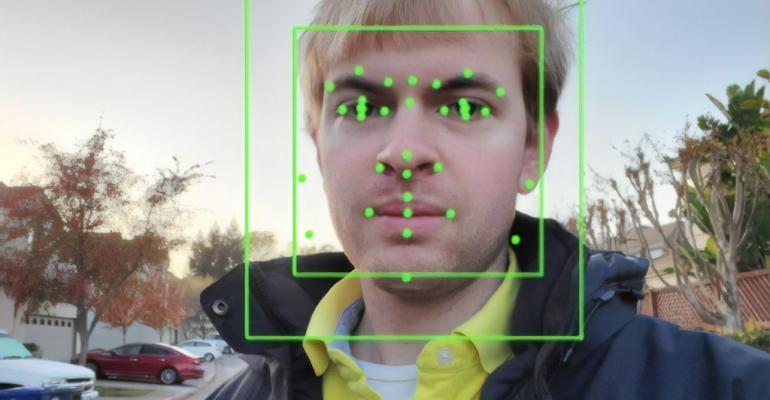

H&M Hit With Record-Breaking GDPR Fine Over Illegal Employee Surveillance

Swedish multinational retail company H&M has been hit with a monumental
€35 million ($41.3 million) GDPR fine for illegally surveilling employees in
Germany. The Data Protection Authority of Hamburg (HmbBfDI) announced the fine
on Thursday after the company was found to have excessively monitored several
hundred employees in a Nuremberg service centre. The watchdog said that since
at least 2014, parts of the workforce had been subject to "extensive recording
of details about their private lives". "After absences such as vacations
and sick leave the supervising team leaders conducted so-called Welcome Back
Talks with their employees. After these talks, in many cases not only the
employees' concrete vacation experiences were recorded, but also symptoms of
illness and diagnoses," HmbBfDI said. "In addition, some supervisors acquired
a broad knowledge of their employees' private lives through personal and floor
talks, ranging from rather harmless details to family issues and religious
beliefs." The extensive data collection was exposed in October 2019 when such
data became accessible company-wide for several hours due to a configuration
error.

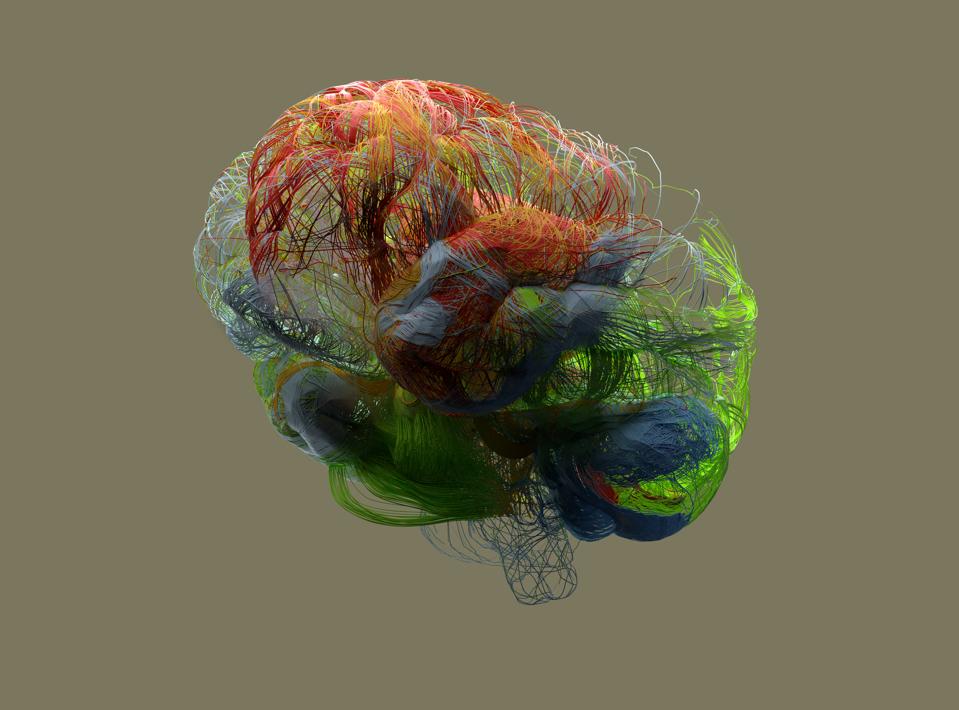

How CIOs can convert Data Lakes into profit centres

Most CIOs today are comfortable with traditional concepts of BI and Data
Warehousing. These mature technologies have worked well to help the
organization gain insights into what happened in the past - but are no longer
sufficient by themselves. ML and AI are required technologies today for
generating the next set of competitive advantages - predicting the future,
gaining deep insights from unstructured data and creating data-driven
products. Relational Databases are often incapable of handling rapidly
evolving data formats and unstructured data, like natural language text and
multimedia, which are the fuel for this ML and AI-driven revolution. ...
Exploding data sizes, increasing Data Democratization and increasingly rich
and complex data processing workloads mean that traditional on-premise
hardware has a hard time keeping up. The processing power of modern processors
for AI and ML (GPUs/TPUs) is doubling every few months - leaving Moore’s law
in the dust. Capital sunk in on-premise hardware becomes obsolete faster than
ever. Rapidly innovating hardware in the cloud enables new classes of
applications or breaks performance barriers for old ones.

Data Governance & Privacy Best Practices to Lower Risk and Drive Value

An enterprise-wide data governance program is your key to accelerating
digital transformation programs such as cloud migration, improving customer
experience with trust assurance, and lowering operating expenses when data
use is optimized, in line with your corporate policies. In today’s world,
with more data available from more sources, it’s no surprise that we look for
an automated and scalable methodology to manage all this information. Data
governance is a discipline that encompasses the rules, policies, roles,
responsibilities, and tools we put in place to ensure our data is accurate,
consistent, complete, available, and secure to enable trust in the outcomes
we plan to achieve. From my experience, these are three best practices around
governing data to maximize the success of business transformation agendas,
reduce uncertainty, and ensure safe and appropriate data use. ... Leading
global organizations are leveraging Informatica’s integrated and intelligent
Data Governance and Privacy solution portfolio to proactively add value to
their bottom line today. It is about getting the right information to the
right people at the right time, enabling the entire organization to be
proactive, in order to identify and act on new opportunities and plan for the
best results, instead of reacting to unanticipated surprises.

Cyber-attack victim CMA CGM struggling to restore bookings, say customers

As CMA CGM’s IT engineers continue, for the fifth day, to try to restore its
systems following a cyber-attack at the weekend, the French carrier has come
under mounting criticism from customers that its back-up booking process is
inadequate. Yesterday, the carrier said its “back-offices [shared services
centres] are gradually being reconnected to the network, thus improving
bookings and documentation processing times”. And it reiterated that
bookings could still be made through the INTTRA portal, as well as manually
via an Excel form attached to an email. However, Australian forwarder and
shipper representatives, the Freight & Trade Alliance (FTA) and
Australian Peak Shippers Association (APSA), described the measures as
“failing to adequately provide contingency services”. John Park, head of
business operations at FTA/APSA, said its members ought to be due
compensation from the carrier and its subsidiary, Australia National Line,
which operates some 14 services to Australia, according to the eeSea liner
database. “FTA/APSA has reached out again to senior CMA CGM management to
seek advice as to when we can expect full service to be re-instated,
implementation of workable contingency arrangements and acceptance that
extra costs incurred ...

Researchers create a graphene circuit that makes limitless power

The breakthrough is an offshoot of research conducted three years ago at the
University of Arkansas, which discovered that freestanding graphene, a single
layer of carbon atoms, ripples and buckles in a way that holds promise for
energy harvesting. The idea was controversial because it refutes a well-known
assertion by physicist Richard Feynman that the thermal motion of atoms, known
as Brownian motion, cannot do work. However, the University researchers found
that, at room temperature, the thermal motion of graphene does induce an
alternating current in a circuit. The achievement was previously thought to be
impossible. Researchers also discovered their design increased the amount of
power delivered. They say the on-off, switch-like behavior of the diodes
amplifies the power delivered rather than reducing it, as previously believed.
Scientists on the project were able to use a relatively new field of physics,
stochastic thermodynamics, to prove that the diodes increase the circuit’s
power. Researchers say that the graphene and the circuit share a symbiotic
relationship.

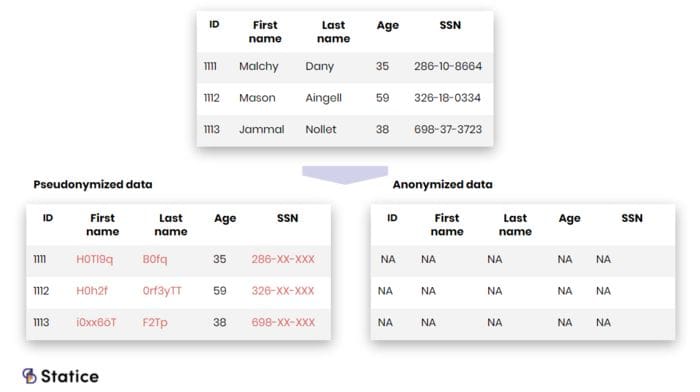

Frameworks for Data Privacy Compliance

As new privacy regulations are introduced, organizations that conduct
business and have employees in different states and countries are subject to
an increasing number of privacy laws, making the task of maintaining
compliance more complex. While these laws require organizations to
administer reasonable security implementations, they do not outline what
specific actions should be taken to satisfy this requirement. As a result,
many risk managers are turning to proven security frameworks that
specifically address privacy. Doing so can help organizations build privacy
and security programs that make compliance more manageable, even when
beholden to multiple regulations. While no two frameworks are the same, each
is designed to help organizations identify and address potential security
gaps that could negatively impact data privacy. Such frameworks include the
Center for Internet Security (CIS) Top 20, Health Information Trust Alliance
Common Security Framework (HITRUST CSF), and the National Institute of
Standards and Technology (NIST) Framework. ... Originally designed for
health care organizations and third-party vendors that serve health care
clients, HITRUST CSF leads organizations beyond baseline security practices
to establish a strong, mature security program.

Selecting Security and Privacy Controls: Choosing the Right Approach

The baseline control selection approach uses control baselines, which are
pre-defined sets of controls assembled to address the protection needs of a
group, organization, or community of interest. Security and privacy control
baselines serve as a starting point for the protection of information,
information systems, and individuals’ privacy. Federal security and privacy
control baselines are defined in draft NIST Special Publication 800-53B. The
three security control baselines contain sets of security controls and control
enhancements that offer protection for information and information systems
that have been categorized as low-impact, moderate-impact, or high-impact. The
impact level reflects the potential adverse consequences for the
organization’s missions or business operations, or a loss of assets, if there
is a breach or compromise of the system. The system security categorization,
risk assessment, and security requirements derived from stakeholder protection
needs, laws, executive orders, regulations, policies, directives, and
standards can help guide and inform the selection of security control
baselines from draft Special Publication 800-53B.
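
The categorize-then-select step described above can be sketched roughly as follows. This is a minimal illustration, not NIST's procedure: the names `Impact`, `system_impact` and `select_baseline` are hypothetical. The "high-water mark" rule (taking the highest of the confidentiality, integrity and availability impact levels) is an assumption drawn from federal categorization guidance (FIPS 199/200) and is not stated in the excerpt. The sketch only picks a baseline by name; it does not list or tailor the controls inside it.

```python
from enum import IntEnum


class Impact(IntEnum):
    """Impact levels named in the excerpt; the ordering lets max() compare them."""
    LOW = 1
    MODERATE = 2
    HIGH = 3


def system_impact(confidentiality: Impact, integrity: Impact,
                  availability: Impact) -> Impact:
    # Assumption (not from the excerpt): the overall system level is the
    # highest of the three security objectives ("high-water mark").
    return max(confidentiality, integrity, availability)


def select_baseline(level: Impact) -> str:
    # Hypothetical helper: returns only the name of the matching baseline.
    return f"{level.name.lower()}-impact baseline"


if __name__ == "__main__":
    level = system_impact(Impact.LOW, Impact.MODERATE, Impact.LOW)
    print(select_baseline(level))
```

In practice the chosen baseline is only a starting point; the risk assessment and stakeholder requirements mentioned above would then guide how it is adjusted.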

Emerging challenges and solutions for the boards of financial-services companies

Actions by boards reflect the increased attention all financial firms are now
devoting to cyberrisk. Ninety-five percent of board committees, for example,
discuss cyberrisks and tech risks four times or more a year (Exhibit 1). One
such firm holds optional deep-dive sessions the week before each quarter’s
board meeting. These sessions cover relevant topics, such as updates on the
current intelligence on threats, case studies of recent breaches that could
affect the company or others in the industry, and the impact of regulatory
changes. ... There has been a remarkable shift in board awareness of
cybersecurity in the past few years: for example, earlier McKinsey research,
from 2017, suggested that only 25 percent of all companies gave their boards
information-technology and security updates more than once a year. More
frequent and consistent communication between board members and senior
management on this topic now enables boards to understand the financial,
operational, and technological implications of emerging cybersecurity threats
for the business and to guide its direction accordingly. Firms increasingly
recruit experts for these committees.

Quote for the day:

"Superlative leaders are fully equipped to deliver in destiny; they locate eternally assigned destines." -- Anyaele Sam Chiyson