The 5 Biggest Technology Trends In 2021 Everyone Must Get Ready For Now

In recent years we have seen the emergence of robots in the care and assisted

living sectors, and these will become increasingly important, particularly

when it comes to interacting with members of society who are most vulnerable

to infection, such as the elderly. Rather than entirely replacing the human

interaction with caregivers that is so important to many, we can expect

robotic devices to be used to provide new channels of communication, such as

access to 24/7 in-home help, as well as to simply provide companionship at

times when it may not be safe to send nursing staff into homes.

Additionally, companies finding themselves with premises that, while empty,

still require maintenance and upkeep, will turn to robotics providers for

services such as cleaning and security. This activity has already led to

soaring stock prices for enterprises involved in supplying robots. Drones will

be used to deliver vital medicine and, equipped with computer vision

algorithms, used to monitor footfall in public areas in order to identify

places where there is an increased risk of viral transmission.

New BlindSide attack uses speculative execution to bypass ASLR

Speculative execution is a performance-boosting feature of modern processors.

During speculative execution, a CPU runs operations in advance and in parallel

with the main computational thread. When the main CPU thread reaches certain

points, speculative execution allows it to pick an already-computed value and

move on to the next task, a process that results in faster computational

operations. All the values computed during speculative execution are

discarded, with no impact on the operating system. Academics say that this

very same process that can greatly speed up CPUs can also "[amplify] the

severity of common software vulnerabilities such as memory corruption errors

by introducing speculative probing." Effectively, BlindSide takes a

vulnerability in a software app and exploits it over and over in the

speculative execution domain, repeatedly probing the memory until the attacker

bypasses ASLR. Since this attack takes place inside the realm of speculative

execution, failed probes and crashes don't affect the CPU or its stability:

they are suppressed as they occur and then discarded.
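
The difference between an ordinary probe and a speculative one can be illustrated with a toy model. This is only a sketch under heavy assumptions: `ToyTarget`, `architectural_probe`, `speculative_probe`, and `find_base` are hypothetical names, the randomized layout is reduced to a single hidden slot number, and the indirect signal a real attack would have to measure is collapsed into a plain boolean. It does not touch real memory or real speculation; it only shows why suppressed faults let an attacker keep guessing.

```python
import random


class Crash(Exception):
    """Stands in for a fault that would normally kill the target process."""


class ToyTarget:
    """Hypothetical target whose secret base is one of `slots` positions."""

    def __init__(self, slots, seed=None):
        rng = random.Random(seed)
        self.slots = slots
        self._base = rng.randrange(slots)  # the randomized "ASLR" secret
        self.crashes = 0

    def architectural_probe(self, guess):
        # An ordinary probe: a wrong guess faults, and the fault is visible.
        if guess != self._base:
            self.crashes += 1
            raise Crash(f"bad access at slot {guess}")
        return True

    def speculative_probe(self, guess):
        # Toy stand-in for a probe in the speculative domain: a wrong guess
        # is discarded silently, and only a hit/miss signal is observed.
        return guess == self._base


def find_base(target):
    """Try every slot speculatively; no guess ever crashes the target."""
    for guess in range(target.slots):
        if target.speculative_probe(guess):
            return guess
    return None
```

In this model `find_base` always recovers the hidden slot while `crashes` stays at zero, whereas the same search through `architectural_probe` would raise `Crash` on the first wrong guess.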

Five things businesses need to think about when implementing AI

The phrase “first time right” rarely applies to implementing AI, and that is

especially true in making predictions and forecasts. Achieving an acceptable

level of accuracy could take a number of iterations and continuous course

corrections. Failure, then, must happen fast in order to learn what to

correct. Since the stakes are high and there is always a risk of failure, it

is also important to start with a smaller problem or a subsection of a large

problem. This helps to reduce the risk associated with the cost of failure.

There is no shame in dropping an idea and rethinking the approach. In fact,

that willingness to rethink is vital. If the viability of a solution is in

doubt, persisting with it – and, by so doing, wasting time and money – is

never the right way to go. It is always advisable to change course or, in some

cases, drop the idea altogether and pick a new one. Once a smaller problem is

resolved and the business can see its value – and associated ROI – the

solution can be scaled up to solve a bigger problem. ... IT and AI projects

are inherently different. IT projects proceed with a clear idea and a set

target for the desired output from day one. AI, by contrast, is mostly used in

the quest to understand the unknown. It is therefore impossible to know what

the output will be ahead of time.

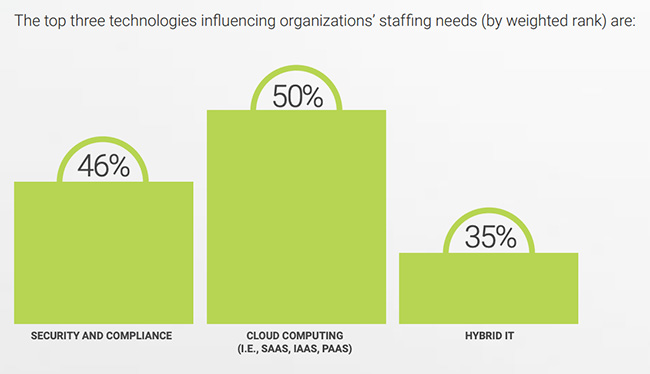

Edge computing: The next generation of innovation

Edge computing may be relatively new on the scene, but it’s already having a

transformational impact. In “4 essential edge-computing use cases,” Network

World’s Ann Bednarz unpacks four examples that highlight the immediate,

practical benefits of edge computing, beginning with an activity about as

old-school as it gets: freight train inspection. Automation via digital

cameras and onsite image processing not only vastly reduces inspection time

and cost, but also helps improve safety by enabling problems to be identified

faster. Bednarz goes on to pinpoint edge computing benefits in the hotel,

retail, and mining industries. CIO contributing editor Stacy Collett trains

her sights on the gulf between IT and those in OT (operational technology) who

concern themselves with core, industry-specific systems – and how best to

bridge that gap. Her article “Edge computing’s epic turf war” illustrates that

improving communication between IT and OT, and in some cases forming hybrid

IT/OT groups, can eliminate redundancies and spark creative new initiatives.

One frequent objection on the OT side of the house is that IoT and edge

computing expose industrial systems to unprecedented risk of malicious

attack.

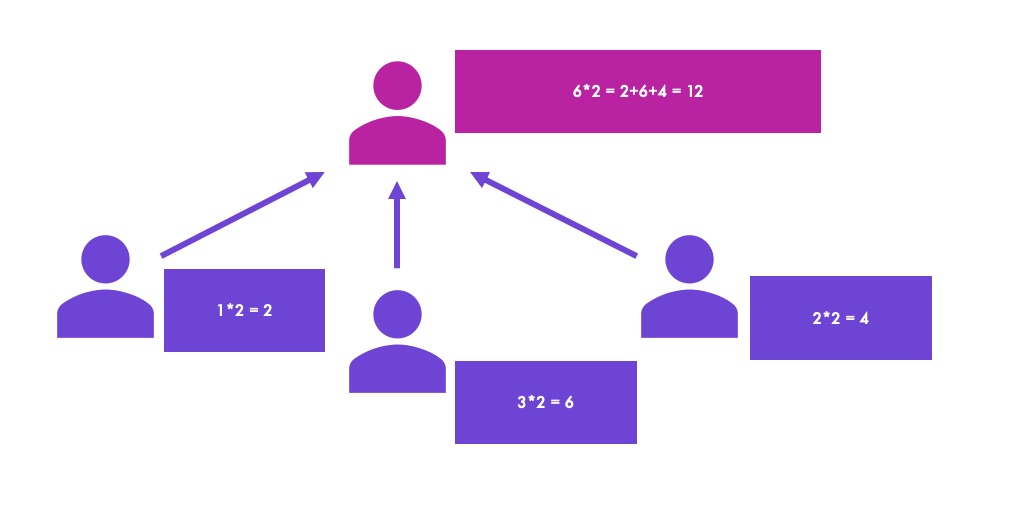

5 SMART goals for a QA analyst

Software testers need a basic knowledge of these programming language staples

for continued career growth. Successful execution of manual tests and

automated scripts is helpful, but testing activities only go so far. It's even

more important to know the conditions under which data enters into one of the

programming structures, and what must happen for that data to exit it. Let's

start with if-then-else logic. In this structure, if checks whether a condition

exists. If it does exist, then execute the then function. Otherwise, execute

the else function (or do nothing). The if-then-else structure works well when

a condition is true or false. A case structure might be appropriate when a

condition falls into one slot in a range of possibilities. A case structure

expands on if-then-else by providing multiple functions to execute if certain

conditions exist. For example, an if-then-else structure might check if a

number falls in the range between 2 and 10 and, if it does, then the number is

multiplied by five. If the number is not in that range, it will fall into the

else condition, and is not multiplied at all. A case structure specifies what

to do when a number falls into one of many ranges. In this example, when a

number is between 2 and 10, it falls into Case A and is multiplied by five.
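
The two structures described above can be sketched in a few lines of Python. The function names are hypothetical, and so are Case B and the default case, since the excerpt only describes Case A. The excerpt also does not say whether the endpoints 2 and 10 are included; this sketch assumes they are, which is exactly the kind of entry condition a tester would want to pin down.

```python
def if_then_else(n):
    """Multiply by five only when n is in the range 2 to 10 (assumed inclusive)."""
    if 2 <= n <= 10:
        return n * 5
    else:
        return n  # else branch: the number is not multiplied at all


def case_structure(n):
    """Choose one action depending on which range n falls into."""
    if 2 <= n <= 10:      # Case A, as described in the text
        return n * 5
    elif 11 <= n <= 20:   # Case B, hypothetical
        return n * 2
    else:                 # default case, hypothetical
        return n


# Boundary values show where data enters and exits each branch.
for value in (1, 2, 10, 11, 20, 21):
    print(value, if_then_else(value), case_structure(value))
```

Testing the values on either side of each boundary (1 and 2, 10 and 11, 20 and 21) exercises every branch at least once.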

Q&A on the Book The Art of Leadership

Given that leadership is a career restart, there are daily mistakes. The one I see

the most with engineering leaders (but I suspect it applies to all leaders) is

the tendency to regress when the stakes are highest. It’s when a new manager

thinks he or she is helping during crunch time by helping finish the feature,

fixing bugs, or otherwise regressing to their prior role because they think

they are helping. Let’s catalog the reasons they aren’t helping: They’ve put

the team in a situation where they appear to be unable to complete the

necessary work. Bad planning. They’re doing the work their team should

do, so they’re sending unintentional signals to the team that they don’t

believe the team can do the work. Bad signal. They’re not giving the team the

chance to rise to the occasion, to figure out a creative means to complete the

work. This might be impossible because of bad planning, but assume it’s not.

What does the team think when the leader keeps saving the day by fixing bugs?

It’s a safety net, sure, but it’s a net that isn’t allowing others to grow.

Leaders often rationalize this behavior as “I want to remain technical.” I

want engineering leaders to be deeply technical, too.

What A Remote Workforce Means For Innovation

Though managers might periodically have doubts about remote workers’

productivity, it’s vital for companies to create a culture of trusting their

employees to complete their work and continue innovating even when they’re

off-site, Strawmyer added. As personnel continue to work from home, companies

might need to reevaluate how they assess productivity. After all, spending a

lot of time in the office isn’t an effective productivity indicator. Employees

in the office environment are just as, if not more, capable of wasting time,

Strawmyer said. In the beginning of the pandemic, workers were focused on

getting used to their remote workflow. But now that they are adjusting to the

current working conditions, Bang anticipates that more innovation will follow.

Depending on their unique situation, removing a lengthy commute or wrapping up

other projects has freed some employees up to focus on long-term ideas, he

added. However, while working from home does free up time, there’s a big

difference between transitioning to remote work under normal circumstances and

shifting to remote work during a pandemic, Strawmyer acknowledged.

5 Best Techniques for Automated Testing

To obtain better results through automated testing, testing must start

early and run as frequently as required. The sooner the QA team gets engaged in

the project life cycle, the better you test and the more glitches and anomalies

you find. Automated unit tests can be executed from the first day, and the tester

can then progressively build up the automated test suite. Moreover,

bugs detected in an early phase cost less to fix than those identified

later in deployment or production. Hence, with the shift-left movement,

proficient testers and software developers are now empowered to create and run

tests. The leading automated testing tools allow users to carry out

functional user interface tests for desktop and web apps easily using

preferred IDEs. ... Automation can’t replace manual testers. Automated

tests simply mean executing some tests more frequently. The expert tester should

start small, attempting the smoke tests first and afterward covering build

acceptance testing. After that, they can move on to frequently performed tests,

and then to the time-consuming tests. Besides this, the QA tester has to ensure

that every test they automate saves time, freeing a manual tester to concentrate

on other vital things.
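
The suggested order of automation (smoke tests, then build acceptance tests, then frequently performed tests, then time-consuming tests) can be expressed as a simple sort. This is a minimal sketch: the `Candidate` class, the category labels, and the example test names are assumptions made for illustration, not part of any testing tool.

```python
from dataclasses import dataclass

# Lower number = automate sooner, following the order given in the text.
PRIORITY = {
    "smoke": 0,
    "build_acceptance": 1,
    "frequent": 2,
    "time_consuming": 3,
}


@dataclass
class Candidate:
    """A manual test being considered for automation (hypothetical model)."""
    name: str
    category: str


def automation_order(candidates):
    """Return candidates sorted by category priority.

    sorted() is stable, so tests in the same category keep their input order.
    """
    return sorted(candidates, key=lambda c: PRIORITY[c.category])


backlog = [
    Candidate("full regression run", "time_consuming"),
    Candidate("login page loads", "smoke"),
    Candidate("nightly build installs", "build_acceptance"),
    Candidate("checkout flow", "frequent"),
    Candidate("home page loads", "smoke"),
]

for candidate in automation_order(backlog):
    print(candidate.category, "-", candidate.name)
```

A team could extend the priority table with its own categories; the point is only that the automation backlog is ordered deliberately rather than automated in whatever order tests were written.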

Is low-code/ no-code the dark horse in enterprise technology for a Covid-19 afflicted world?

The adoption of low-code by enterprises is still in its infancy on account of

a host of issues, which range from technology complexity and vendor lock-in to

even a basic lack of understanding of how low-code functions. “Scaling

automation is a typical problem and many companies could have invested in

licenses not knowing how to leverage its potential. Secondly, enterprise

automation can seem very complex if you’re not using the right tools and

technologies,” Persistent’s Dixit said. “To ensure the success of any project,

execution requires the right mix of domain, tech and skilled consultants,” he

added. LeapLearner’s Ranjan sees a lack of integration using APIs as a

hindrance. “This limits the ability to create applications and systems which

can solve complex problems and are AI-enabled. Hopefully, as the low-code/

no-code ecosystem grows, this will change,” he said. But some low-code

platform startups, such as Bengaluru-based Mate Labs, are working towards

creating a low-code environment that would be able to address these

challenges.

How to approach Agile team organization

Forming a productive Agile team requires a significant commitment of

individual energy, time and concentration from each member. When Agile teams

first come together, they go through what psychologist Bruce Tuckman termed

the stages of forming, storming, norming and performing. At first, everyone

must figure out the team dynamics. After a team forms, it establishes

hierarchies of decision-making and leadership. Everyone finds their place and

function within the team. This process takes time, as team members find their

niche within the group's overall norms. In the norming phase, the team

functions smoothly, with less arguing or trying to rise above the others. The

group becomes a team rather than a collection of co-workers. When a team

stabilizes, it's a sign that members have learned how to optimize their skills

within working relationships. The most successful Agile teams develop bonds

that allow them to be productive and interact on a personal, genuine level.

When project managers switch out team members, team development starts all

over again. When a stable team is disrupted, it must go through the forming,

storming, norming, performing process all over again.

Quote for the day:

"Leadership is an opportunity to serve. It is not a trumpet call to self-importance." -- J. Donald Walters