Facebook's Libra Cryptocurrency Prompts Privacy Backlash

Facebook's cryptocurrency plans have raised bipartisan concerns, with Rep. Patrick McHenry, R-N.C., telling The Verge: "It is incumbent upon us as policymakers to understand Project Libra. We need to go beyond the rumors and speculations and provide a forum to assess this project and its potential unprecedented impact on the global financial system." On Wednesday, the U.S. Senate Banking Committee announced it would hold a hearing about the company's cryptocurrency plans on July 16. So far, the committee has not released a list of witnesses it intends to call, according to Reuters. A Facebook spokesman tells Information Security Media Group: "We look forward to responding to lawmakers' questions as this process moves forward." Besides the new concerns over its cryptocurrency plans, Facebook is already facing scrutiny from the U.S. Federal Trade Commission over its data-sharing practices, and the company is preparing to pay a fine of as much as $3 billion. Facebook has been bound by an agreement with the FTC since 2011 that stems from previous privacy missteps, including sharing data without consent.

A CISO's Insights on Breach Detection

"You have to identify what potentially anomalous behavior is, know what you're logging and reporting on, and make sure you have team members who are available to address these anomalies." Key steps, the CISO says, include using appropriate technologies, such as security information and event management (SIEM) tools, as well as making effective use of security team resources "to conduct root cause analysis to identify what's going on." Parker will be a featured speaker at ISMG's Healthcare Security Summit in New York on June 25. He will join other CISOs and security experts who will address breach detection and an array of other top security challenges. In the interview, Parker also discusses: conquering "alarm fatigue," which often slows the process of identifying breaches; why many insider breaches are more difficult to detect than some incidents involving hackers; and the growing breach risks posed by supply chain vendors and other third parties, including incidents potentially involving compromised application programming interfaces.
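Parker does not prescribe a specific detection method. As a minimal illustration of "identifying what potentially anomalous behavior is" from logged data, the sketch below compares each user's event count against that user's own historical baseline using a z-score. The per-user counts, the function name `flag_anomalies`, the threshold of 3, and the floor on the standard deviation are all illustrative assumptions, not anything taken from the interview or from a particular SIEM product.

```python
from statistics import mean, pstdev


def flag_anomalies(baseline_counts, current_counts, threshold=3.0):
    """Return users whose current event count sits more than `threshold`
    standard deviations above their own historical mean.

    baseline_counts: dict of user -> list of past per-period event counts
    current_counts:  dict of user -> event count for the period under review
    (Both structures are hypothetical; real data would come from your logs.)
    """
    flagged = []
    for user, history in baseline_counts.items():
        mu = mean(history)
        # Floor the spread at 1.0 so a perfectly flat history does not
        # divide by zero or turn every tiny change into an alert.
        sigma = max(pstdev(history), 1.0)
        observed = current_counts.get(user, 0)
        z = (observed - mu) / sigma
        if z > threshold:
            flagged.append(user)
    # Users with no baseline are ignored here; a real pipeline would
    # need a separate rule for brand-new accounts.
    return sorted(flagged)


baseline = {"alice": [10, 12, 11, 9, 13], "bob": [40, 38, 42, 41, 39]}
current = {"alice": 55, "bob": 43}
print(flag_anomalies(baseline, current))
```

The `threshold` parameter is where "alarm fatigue" shows up in practice: lowering it catches more subtle deviations (in this example, a threshold of 2 would also flag `bob`) at the cost of more alerts for analysts to triage.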

Rise in business-led IT spend increases risks and opportunities

Despite commanding larger budgets for technology, CIOs seem to be losing influence, with the percentage of CIOs sitting on the board falling from 71% to 58% in two years, according to the research. However, Bates does not think fewer CIOs on the board will have a negative impact on business-led IT projects, or even on IT projects in general. “CIOs continue to exert a strong degree of influence and are being joined by a new generation of technology-savvy executives like the chief technology officer, chief digital officer and chief data officer,” he said. “As organisations mature into this new paradigm of a coalition of technology leaders, there will be more effective governance at all levels. We are at a moment in time where the CIO is still best positioned to advise the board and senior business leaders on technology and will increasingly have deep subject matter expertise from fellow executives to inform decision-making.” Beyond the disconnect between business and IT, another issue highlighted by the study is the slow progress in diversity and inclusion: 74% of IT leaders polled say related initiatives are, at most, “moderately successful”, and the share of women on tech teams has grown only minimally, to 22% this year from 21% last year.

MongoDB grows its solution portfolio while boosting its flagship platform

Positioning its document database as a platform for AI/machine learning app developers, MongoDB this week announced the beta of MongoDB Atlas Data Lake. This new serverless offering supports rich data analytics via the MongoDB Query Language. It supports polymorphic data in multiple schema-free formats at any scale, compressed or uncompressed, and will offer a consolidated user interface and billing with on-demand, usage-based pricing. For storage, MongoDB Atlas Data Lake lets customers “bring your own bucket,” such as an AWS S3 bucket, with MongoDB charging customers only for querying the stored data through the Data Lake service. Customers can query data quickly on S3 in any format, including JSON, BSON, CSV, TSV, Parquet and Avro, using the MongoDB Query Language. Because developers can use that same language across data on S3, the service makes querying massive data sets easier and more cost-effective.
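To give a sense of what "using the MongoDB Query Language" against such data looks like, here is a minimal sketch of an aggregation pipeline expressed as plain Python dictionaries. The field names (`status`, `customer_id`, `total`) and the helper `build_orders_pipeline` are hypothetical, and the connection to a Data Lake endpoint is deliberately not shown; the sketch only assumes that, once connected with PyMongo, the pipeline would be passed to `Collection.aggregate()` as with any other MongoDB collection.

```python
def build_orders_pipeline(min_total):
    """Build a MongoDB aggregation pipeline as a list of stage documents.

    Field names are hypothetical examples, not part of any real schema.
    """
    return [
        # Keep only completed orders at or above the threshold.
        {"$match": {"status": "complete", "total": {"$gte": min_total}}},
        # Sum order totals and count orders per customer.
        {"$group": {
            "_id": "$customer_id",
            "revenue": {"$sum": "$total"},
            "orders": {"$sum": 1},
        }},
        # Highest-revenue customers first, top ten only.
        {"$sort": {"revenue": -1}},
        {"$limit": 10},
    ]


pipeline = build_orders_pipeline(100)
# With a live PyMongo connection (not shown), this would run as:
#   client["some_db"]["orders"].aggregate(pipeline)
print([next(iter(stage)) for stage in pipeline])
```

Keeping the pipeline as ordinary data makes it easy to inspect or unit-test before it is ever sent to a server.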

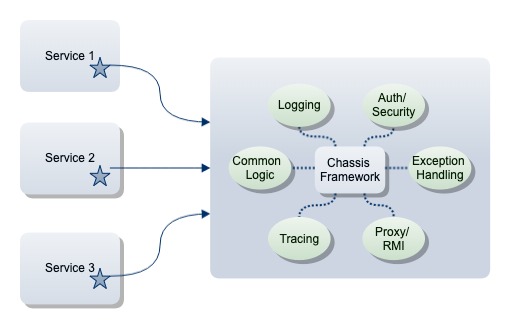

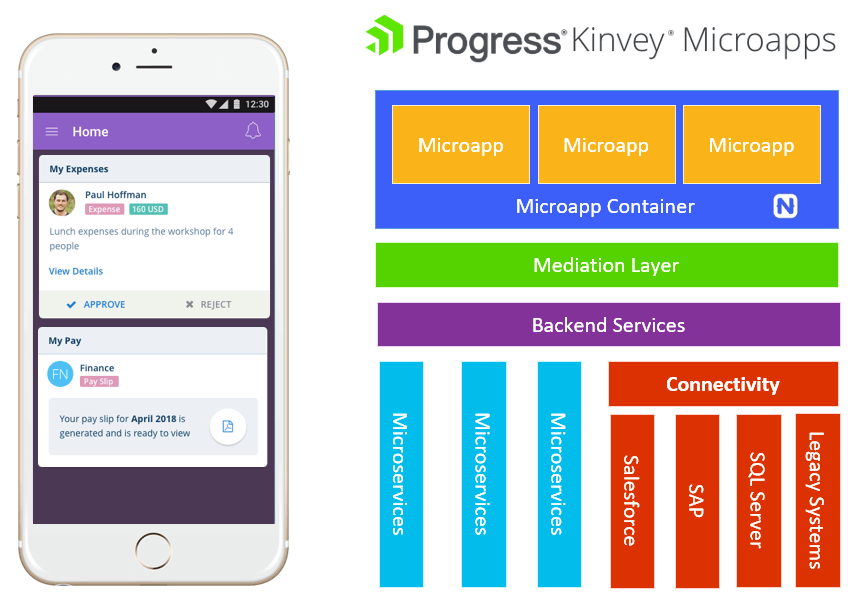

Using a Microservices Architecture to Develop Microapps

Let's look at microapps in terms of mobility, because today we have a problem. Modern enterprises often have 20 or more web and mobile apps that you, as an employee, have to use just to get your job done. And how much of the functionality within these apps do you really use? It can be hard to find what we want, when we want it. We are also suffering from app fatigue, both as app consumers in our personal lives and in our work lives, as we deal with tens to hundreds of apps on our devices. As app developers, we are suffering too, because these 20+ apps have to be maintained, and we keep fielding requests for more apps, which only adds to the maintenance pile. ... However, with a proper microapps platform, instead of marching through these steps for each new mobile experience we create, we can do the hard and boring work once and focus on the practical features and engaging experiences that we ultimately want to deliver! ... From a technical perspective, Kinvey Microapps enables you, as an app developer, to be dramatically more productive in delivering mobile experiences to your users.

Ransomware gang hacks MSPs to deploy ransomware on customer systems

Hanslovan said hackers breached MSPs via exposed RDP (Remote Desktop Protocol) endpoints, elevated privileges inside the compromised systems, and manually uninstalled antivirus products such as ESET and Webroot. In the next stage of the attack, the hackers searched for accounts for the Webroot SecureAnywhere console, remote management software that MSPs use to manage workstations located in their customers' networks. According to Hanslovan, the hackers used the console to execute a PowerShell script on remote workstations; the script downloaded and installed the Sodinokibi ransomware. The Huntress Labs CEO said at least three MSPs had been hacked this way. Some Reddit users also reported that in some cases the hackers might have used the Kaseya VSA remote management console, but this was never formally confirmed. "Two companies mentioned only the hosts running Webroot were infected," Hanslovan said.

Navigating the Path Toward Becoming an Intelligent Enterprise

From an operations standpoint, the Index indicates that 82 percent of surveyed companies are sharing information from their IoT solutions with employees more than once a day, an increase of 12 percent from the previous year. In fact, approximately two-thirds of these companies share operational data about enterprise assets, including status, location, utilization or preferences, in real or near-real time to help drive better, more timely decisions. This shows that brands are making the transition to Industry 4.0: using connected, automated systems to collect and analyze data during every step of their processes and bridging the gap between the digital and the physical to maximize efficiency, productivity, and transparency. ... It is not an easy task to quantify how “intelligent” an enterprise is or how much the manufacturing and transportation and logistics (T&L) space is changing to adopt IoT solutions. This intelligence cannot be determined simply by which technology solutions a company uses or how open-minded it is about new processes.

Why Cybersecurity Takeovers Are Surging As Stocks Reach New Highs

Cybersecurity investor Ron Gula noted that chatter about a forthcoming recession often lets private backers press startups to raise money, which in turn pushes them to cash out sooner. As more companies see rivals go the M&A or public route, “this can create a sense of urgency,” Gula told Fortune. Another factor driving the exit wave is the timing of the cybersecurity venture capital boom, which started about five years ago and has left many companies ripe for an exit around the same time. Meanwhile, there are more potential buyers across industries, because companies not traditionally regarded as cybersecurity firms are looking to add security offerings to their portfolios. “They see the benefit of saying, ‘We have lots of data, we’re gonna look to add security to that data,’” explained Enrique Salem, former CEO of cybersecurity company Symantec and a current investor at Bain Capital Ventures, per Fortune.

“This research highlights the fact that building a strong cyber security culture and subscribing to the right best practices can help organisations of any size maximise their security effectiveness,” said Wesley Simpson, (ISC)2 chief operating officer. “It’s a good reminder that in any partner ecosystem, the responsibility for protecting systems and data needs to be a collaborative effort, and multiple fail safes should be deployed to maintain a vigilant and secure environment. The blame game is a poor deterrent to cyber attacks.” Nearly two-thirds (64%) of large enterprises outsource 26% or more of their daily business tasks, which requires them to give third parties access to their data. These outsourced functions can include anything from research and development to IT services and accounts payable. This data access and sharing is necessary as a large enterprise scales its operations, but the research indicates that access management and vulnerability mitigation are often overlooked.

Top 5 Aspects That Can Strengthen Your Data Governance Framework

There is a reason why the term ‘data dump’ is popular. The only job of a data source is to collect information and ‘dump’ it where you can access it. This is why businesses have to sift through petabytes of data just to find something meaningful to gain business insights from. Only after this data has been categorized into usable, helpful portions does it start to be realized as an asset. Data quality is, therefore, about converting raw data into a usable form and maintaining it as an asset. Data governance helps you uncover new sources of information and draw better business value from your data. It can also identify broken or missing pieces of information and prevent duplicate records from interfering with one another. Through data governance, outdated information can be flagged for attention, and critical data can be surfaced to the right teams within the organization. Broken links, incomplete files, incorrect prioritization and similar issues all greatly affect data quality. Data governance practices help fix such problems and maintain data quality over time.
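The checks described above (missing information, duplicates, outdated records) can be automated. The sketch below is one minimal way to do it over a list of records; the record layout (`id`, `updated` and the required fields), the function name `audit_records`, and the 365-day staleness cutoff are hypothetical choices for illustration, not part of any specific governance framework.

```python
from datetime import date


def audit_records(records, required_fields, today, max_age_days=365):
    """Group data-quality issues by kind: missing fields, duplicates, stale rows.

    Each record is a dict with a hypothetical "id" key and an optional
    "updated" date; required_fields lists keys that must be non-empty.
    """
    issues = {"missing": [], "duplicate": [], "stale": []}
    seen = set()
    for rec in records:
        rid = rec.get("id")
        # Incomplete records: required fields that are absent or empty.
        missing = [f for f in required_fields if rec.get(f) in (None, "")]
        if missing:
            issues["missing"].append((rid, missing))
        # Duplicates by ID: keep the first occurrence, flag later copies.
        if rid in seen:
            issues["duplicate"].append(rid)
        else:
            seen.add(rid)
        # Outdated records: last update older than the cutoff.
        updated = rec.get("updated")
        if updated is not None and (today - updated).days > max_age_days:
            issues["stale"].append(rid)
    return issues


records = [
    {"id": 1, "name": "Acme", "email": "ops@acme.example", "updated": date(2019, 5, 1)},
    {"id": 2, "name": "", "email": "info@globex.example", "updated": date(2019, 6, 1)},
    {"id": 1, "name": "Acme", "email": "ops@acme.example", "updated": date(2019, 5, 1)},
    {"id": 3, "name": "Initech", "email": None, "updated": date(2017, 1, 15)},
]
report = audit_records(records, ["name", "email"], today=date(2019, 6, 20))
print(report)
```

Returning issues grouped by kind, rather than silently fixing them, fits the governance idea of flagging problems "for attention" and routing them to the right team.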

Quote for the day:

"Character matters; leadership descends from character." -- Rush Limbaugh