What are the ingredients of digital transformation success?

Finding the right tech talent is a pressing issue for executives, and a new study

finds that the right talent is hard to come by regardless of how successful

firms are with their enterprise modernization efforts. Successful firms recruit,

invest in and retain knowledgeable staff (71%) and work with trusted partners (76%)

to compensate for whatever skill and culture gaps exist within their

organization, according to the report, "Secrets of Successful Digital

Transformation," by Forrester and global software consultancy ThoughtWorks. ...

Decision-makers at successful organizations reported that a true

cross-functional transformation process includes stakeholders from all parts of

the organization such as IT, business, finance and more having involvement in

the modernization initiatives, according to the report. "An effective

modernization culture and strategy must include strong leadership, including

support and guidance from executives and, perhaps most importantly, a dedicated

budget to execute transformations," the report said. It also requires a

monetary commitment. In fact, 71% of successful organizations fund their

enterprise modernization programs through a dedicated digital transformation

budget.

Staying Safe Online: 6 Threats, 9 Tips, & 1 Infographic

Sharing pictures of major life events or everyday moments on social media may

seem fairly innocent. However, you should probably be more careful. Everyone has

access to that information. Skilled cybercriminals have no trouble tracking down

your relationships and other details about your life. They may use what they

find to trick your friends into giving up sensitive information. It’s not hard

to find out dates of birth, email addresses, interests, and details about family

members, which makes it even easier for hackers to break into your account (see

the first tip to avoid this!). ... Sometimes, website content may seem too

appealing not to visit. You might even go ahead and create a profile, sharing

your personal information. You should be careful, though, because not all

websites are safe places. Who knows what malicious programs and scams are hidden

there? Before doing anything, make sure you check the website address. URLs

beginning with “https” are safer than ones with “http” because the letter “s”

stands for “secure.” Another thing to look out for is a small lock sign near the

URL. Nowadays, web browsers mark websites that use an encrypted connection with

this sign.
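
As a rough illustration of the address check described above, this Python sketch looks at a URL's scheme using the standard library. The function name `uses_https` and the sample addresses are hypothetical, and an "https" address only means the connection is encrypted; it says nothing about whether the site itself is trustworthy.

```python
from urllib.parse import urlparse


def uses_https(url: str) -> bool:
    """Return True when the address uses the encrypted "https" scheme."""
    # urlparse lowercases the scheme, so "HTTPS://..." is handled too.
    return urlparse(url).scheme == "https"


if __name__ == "__main__":
    # Sample addresses are placeholders, not real recommendations.
    for address in ("https://example.com/login", "http://example.com/login"):
        label = "encrypted" if uses_https(address) else "not encrypted"
        print(f"{address}: {label}")
```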

Return to Office Risks Worth Considering

"Organizations should have adjusted their business continuity and disaster

recovery plans to account for the shift to remote work at the onset of the

pandemic," said John Beattie, principal consultant at business continuity

solution provider Sungard Availability Services. "These plans need to be

readjusted again to account for employees being back in the office and any

changes made to the IT environments as a result." Failing to tighten

cybersecurity protocols upon the return to the workplace could leave networks

vulnerable to cyberattacks and breaches. Additionally, failing to update the

business contingency and recovery plans and failing to provide employees notice

of plan changes could lead to outages or the inability to promptly act on

contingency plans when the time comes, Beattie said. ... Ger Doyle, head of

Manpower IT brand Experis and head of digital and innovation at ManpowerGroup,

warns that companies moving toward a new, hybrid way of working must be careful

to avoid a two-speed workplace in which those in the office get access to

opportunities that work-from-home employees miss.

Five ways to use data to make better business decisions

Even though data might be digitized, it still may not be relevant for

decision-making. If the data isn't valuable, it should be considered for

elimination. Deciding which data to keep is a balancing act. There is data

that isn't important today but could become valuable at a later date. However,

there is other data (IoT 'jitter', for example, or memos about a company

holiday party 20 years ago) that most likely won't ever be relevant to

decision-making and should be eliminated. Master data management frequently

focuses on normalizing or consolidating disparate data fields from different

systems that refer to the same piece of information. However, there is also

the need to aggregate unlike types of data, such as aggregating a weather

report with photos or videos of a storm system. Data aggregation is most

successful when business use cases are clearly identified, along with all of

the data and data combinations that are needed for decision making. With the

growth of citizen development and separate IT budgets in business user

departments, it's more difficult for IT to know where packets of

underexploited data might reside, and how to bring these data troves into a

central data repository so that everyone in the business can use

them.
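
A minimal sketch of the field consolidation described above, in Python. The two source systems, their field names and the `FIELD_ALIASES` mapping are all invented for illustration; real master data management involves far more than renaming keys.

```python
# Hypothetical mapping from system-specific field names to one canonical name.
FIELD_ALIASES = {
    "cust_name": "customer_name",
    "CustomerName": "customer_name",
    "zip": "postal_code",
    "PostCode": "postal_code",
}


def normalize_record(record: dict) -> dict:
    """Rename known aliases to canonical field names; keep other fields as-is."""
    return {FIELD_ALIASES.get(key, key): value for key, value in record.items()}


if __name__ == "__main__":
    # Two made-up rows that describe the same customer in different systems.
    crm_row = {"cust_name": "Acme Ltd", "zip": "19104"}
    billing_row = {"CustomerName": "Acme Ltd", "PostCode": "19104"}
    # After normalization both rows share the same keys and can be compared.
    print(normalize_record(crm_row))
    print(normalize_record(billing_row))
    print(normalize_record(crm_row) == normalize_record(billing_row))
```

Once records share canonical field names, deciding which of them are worth keeping or aggregating becomes a simpler question to ask.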

Dubai’s DMCC opens Crypto Centre to tap into blockchain's potential

The Dubai Multi Commodities Centre has set up a new space that will house

companies developing crypto and blockchain technology. The Crypto Centre is

the result of a partnership with Switzerland’s CV Labs, the organisation

behind the Swiss government-backed Crypto Valley. It is part of the free

zone’s own Crypto Valley – an ecosystem for cryptographic, blockchain and

distributed ledger technology entities in the UAE. “This is a fantastic new

development. Crypto and blockchain technology has enormous potential to

transform global trade and supply chains ... and this aligns perfectly with

the DMCC’s vision to drive the future of trade,” said Ahmad Hamza, free zone

executive director at the DMCC. “Over the next few weeks and months, we will

see this centre filled with [companies] ... looking to scale up their crypto

businesses,” he said. He did not disclose the number of entities that DMCC

expects to attract to the centre. The DMCC, which presides over companies

involved in the trade of commodities that range from pulses to diamonds,

registered 2,050 new companies last year, a five-year high for the free

zone.

Nikola Tesla: 5G Network Could Realize His Dream of Wireless Electricity

The experiments used new types of antenna to facilitate wireless charging. In

the laboratory, the researchers were able to beam 5G power over a relatively

short distance of just over 2 meters, but they expect that a future version of

their device will be able to transmit 6μW (6 millionths of a watt) at a

distance of 180 meters. To put that into context, common Internet of Things

(IoT) devices consume around 5μW—but only when in their deepest sleep mode. Of

course, IoT devices will require less and less power to run as clever

algorithms and more efficient electronics are developed, but 6μW is still a

very small amount of power. That means, for the time being at least, that 5G

wireless power is unlikely to be practical for charging your mobile phone as

you go about your day. But it could charge or power IoT devices, like sensors

and alarms, which are expected to become widespread in the future. In

factories, for instance, hundreds of IoT sensors are likely to be used to

monitor conditions in warehouses, to predict failures in machinery, or to

track the movement of parts along a production line.
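
To get a feel for how small 6μW is, here is a back-of-the-envelope calculation in Python. The 6μW figure comes from the article; the 10 Wh phone battery is an assumed round number, not a figure from the researchers, and the calculation ignores all conversion losses.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY
JOULES_PER_WATT_HOUR = 3600.0

RECEIVED_POWER_W = 6e-6        # 6 microwatts, the projected figure in the article
PHONE_BATTERY_WH = 10.0        # assumption: a round number for a phone battery


def energy_per_day_joules(power_w: float) -> float:
    """Energy delivered in one day at a constant power, in joules."""
    return power_w * SECONDS_PER_DAY


def years_to_deliver(energy_j: float, power_w: float) -> float:
    """Years needed to deliver the given energy at a constant power."""
    return energy_j / power_w / SECONDS_PER_YEAR


if __name__ == "__main__":
    battery_j = PHONE_BATTERY_WH * JOULES_PER_WATT_HOUR
    print(f"Energy per day: {energy_per_day_joules(RECEIVED_POWER_W):.4f} J")
    print(f"Years to fill the battery: {years_to_deliver(battery_j, RECEIVED_POWER_W):.0f}")
```

Under these assumptions the beam delivers about half a joule per day, and filling the assumed battery would take on the order of 190 years, which is why the technology is pitched at low-power sensors rather than phones.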

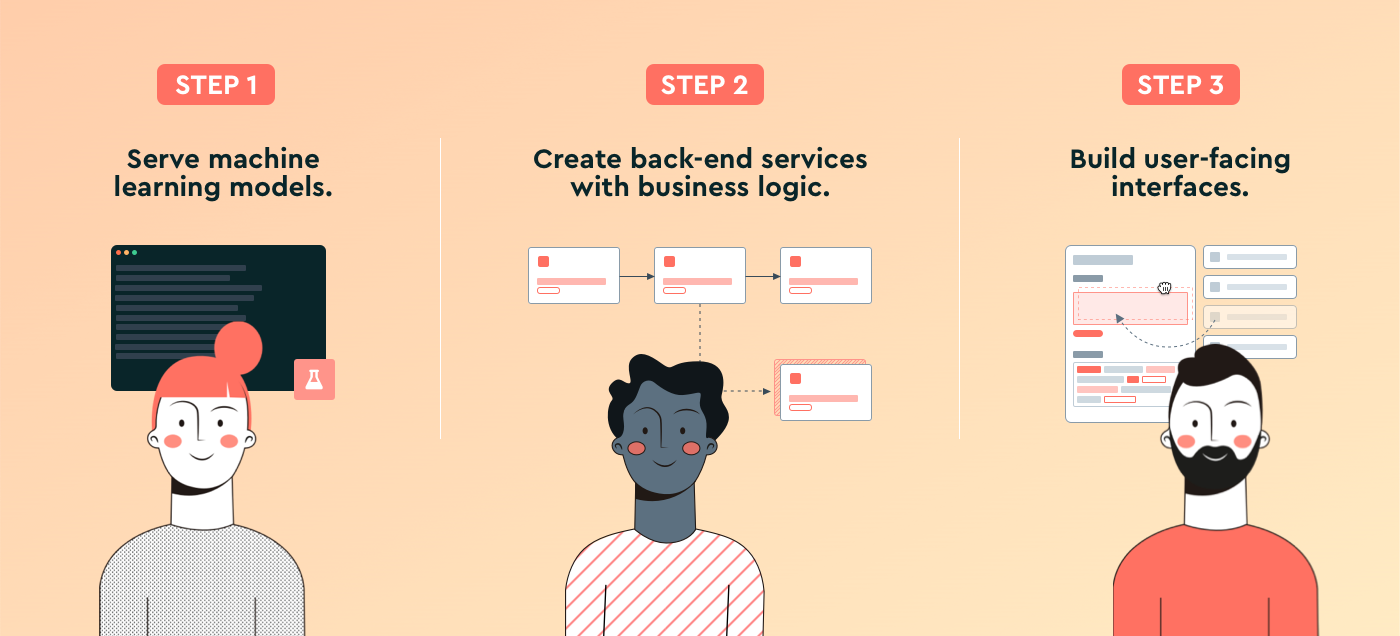

Understanding AI cloud

The most compelling advantages of AI cloud are the challenges it addresses. It

democratises AI, making it more accessible. By lowering adoption costs and

facilitating co-creation and innovation, it drives AI-powered transformation

for enterprises. The cloud is veritably becoming a force multiplier for AI,

making AI-driven insights available for everyone. Besides, though cloud

computing technology now is far more prevalent than the use of AI itself, we

can safely assume that AI will make cloud computing significantly more

effective. AI-driven initiatives, providing strategic inputs for

decision-making, are backed by the cloud’s flexibility, agility, and scale to

power such intelligence massively. The cloud dramatically increases the scope

and sphere of influence of AI, beginning with the user enterprise itself and

then in the larger marketplace. In fact, AI and the cloud will feed off each

other, helping the true potential of AI flower through the cloud. The pace of

this will depend only on the AI expertise that enterprises can bring to bear

in their workplace activities, for the cloud is already here and seeping

everywhere.

Navigating the benefits and challenges of network and security transformation

Obtaining any necessary board-level buy-in for transformation projects is only

half the battle. Any significant change project will require consideration of

the organisational arrangement and availability of specialist skills. The

Netskope/Censuswide research found that 50% of global CIOs believe that a lack

of collaboration between specialist teams is stopping them from realising the

benefits of digital transformation projects. For context, assuming that 50% of

CIOs are responsible for 50% of the $6.8 trillion digital transformation spend

IDC predicts, then we are looking at a situation where a spend equivalent to

the entire annual US tax income is in jeopardy because teams are failing to

work together effectively. ... The researchers discovered that while just

under half of security and networking teams report to the same boss, 37% of

participants stated that ‘the security and networking teams don’t really work

together much’. In fact, nearly half of the networking and security

professionals described the relationship between the two teams as ‘combative’,

‘dysfunctional’, ‘frosty’ or ‘irrelevant’. They all agree that this imperfect

relationship has the potential to derail huge plans.
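
The back-of-the-envelope figure above can be reproduced in a couple of lines of Python. This sketch only restates the article's own assumption that half of the predicted $6.8 trillion spend is affected; the function and variable names are illustrative.

```python
PREDICTED_SPEND_USD = 6.8e12   # IDC prediction cited in the article
SHARE_AT_RISK = 0.5            # the article's assumption, not a measured value


def spend_at_risk(total_usd: float, share: float) -> float:
    """Portion of the total spend that falls under the at-risk share."""
    return total_usd * share


if __name__ == "__main__":
    at_risk = spend_at_risk(PREDICTED_SPEND_USD, SHARE_AT_RISK)
    print(f"Spend at risk: ${at_risk / 1e12:.1f} trillion")
```

That comes to $3.4 trillion, the amount the article compares to annual US tax income.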

Real estate tech takes on the housing boom in this seller's market

"Proptech is most important in cities where there is a large transient

population, places that have a strong presence of universities, hospitals and

a strong job market," said Blum. "This includes major cities like

Philadelphia, Boston, San Francisco, NYC, Houston, Chicago, Miami. These

cities have already begun to rebound quickly. I've spoken to agents from major

cities across the country and they all say the same thing: anything that

can help save them time with their business is greatly welcomed." The new

platform Localize "harnesses the power of AI [artificial intelligence] to

provide a cutting-edge experience for homebuyers and brokers," explained Omer

Granot, Localize president and COO. "[W]e streamline the house-hunting journey

through" property insights and "our concierge texting service, Hunter by

Localize." Hunter curates properties specifically for each homebuyer through

its "Smart Matching technology." It uses more than 100 data insights that are

associated with a listing as well as a homebuyer's specific preferences to

send daily recommendations to prospective buyers "to find them the perfect

home."

Ethical Decisions in a Wicked World: the Role of Technologists, Entrepreneurs, and Organizations

"Wicked problem" is a term introduced by the theorists Rittel and Webber

(1973) to describe problems that cannot be definitively described, with no

"solutions" in the sense of definitive and objective answers. It is also

understood as a super-category of "complexity", problems that overwhelm us in

some sense. There is also a class of "super-wicked" problems: climate change,

poverty, food security, energy supply, education policy and public health.

They all have many interdependent factors making them seem impossible to

solve. The software industry faces wicked problems in different ways: by

developing complex software systems and by managing them as part of a larger

social, economic, and environmental fabric. Wicked problems have always existed in

our industry, but the internet and globalization undoubtedly created

conditions for new forms of interaction, thus expanding the universe of

related wicked problems. Examples of wicked problems closely associated with

software are: social networks, sharing economy platforms, and air traffic

control. In business, a new strategy (e.g. re-branding) or a modification in a

product (e.g. introducing a new version of a video game) are classic

examples.

Quote for the day:

"No great manager or leader ever fell

from heaven, it's learned, not inherited." -- Tom Northup