Universities Are Failing Software Developers

First, universities need to re-examine their curricula -- and do so often, because technology, trends, and best practices move lightning-fast in our industry. You would think that the ever-evolving nature of software development is common knowledge, yet year after year, I meet with candidates who only know Python, Java, or C++. These coding languages are often taught because of existing school material, exercises, tests, and labs, but they aren’t as widespread in professional settings because, frankly, there are better languages with larger communities targeting a larger set of applications or devices. At my company, for instance, we primarily work with TypeScript/JavaScript, C#, and PHP, all of which come with great frameworks and libraries. In theory, software development or computer science is a very practical university major, with many obvious applications available immediately after graduation. But if universities want this to be true in practice, they need to do a much better job of teaching real, marketable skills that employers actually value. In addition to updating the hard skills being taught to students, university leaders need to emphasize the importance of softer skills like critical thinking, problem solving, communication, and project management.

Cybersecurity And The Vaccine Passport: A Dream Ticket Or A Flight Of Fancy?

There is currently no clearly defined standard for the vaccine passport. The

Biden administration has announced that there would be no central, national

policy, leaving the private sector to create its own solutions. Many projects —

including those of IBM, the International Air Transport Association and several

individual airlines — are already underway. Depending on where you intend to

travel, this could mean handing over personal information and login credentials

to multiple airlines and industry bodies. The more places that store this

information, the more vulnerable it is to breach and loss. This lack of any

agreed standard also opens the system up to fraud and manipulation.

Cybercriminals are already working the impact of the pandemic to their

advantage, and a patchwork of vaccine passport systems presents another golden

opportunity. Because of the emphasis on equity for rollout, vaccine passports

will have to be both paper and digital. For those who do not have digital

devices, paper passports will show up on the dark web — the same way that we see

fake vaccine passports already showing up for a few hundred bucks.

Cybersecurity, emerging technology and systemic risk: What it means for the medical device industry?

A personal goal of mine is that within 5 years, I can talk to any medical device

developer about cybersecurity and find that they have comprehensive knowledge of

all aspects of creating a secure device. To achieve that, I partnered with Axel

Wirth to write and publish the world’s first comprehensive, how-to book on

medical device cybersecurity. Also, Velentium has launched a training

certification process to train engineers, developers, and managers at medical

device manufacturers (MDMs) and other embedded and IoT device designers, so

they’ll have qualified, knowledgeable cybersecurity expertise on-staff.

According to a recent (ISC)² report, the global cybersecurity talent gap remains

at more than 3 million. Cybersecurity employment must grow by 41 percent in the

U.S. and by 89 percent worldwide to fill the existing gap. Clearly there is a huge

shortfall of talent in the IT arena, but the situation is far worse for the

embedded device arena. Skilled people simply are not available.

Hacker's guide to deep-learning side-channel attacks: the theory

A side-channel attack is an implementation-specific attack. It exploits the fact that different inputs cause an algorithm's implementation to behave differently, and it uses an indirect measurement, such as the time the algorithm takes to execute, to gain knowledge about a secret value such as a cryptographic key used during the computation. One of the most infamous timing attacks targets the naive square-and-multiply algorithm used in textbook implementations of RSA modular exponentiation, whose running time depends linearly on the number of "1" bits in the key. An attacker can exploit this linear relation by timing the computation for a range of known RSA keys to learn how running time maps to the number of "1" bits. They can then use this knowledge to guess the number of "1" bits in an unknown RSA key stored in a hardware crypto device simply by measuring how long the code takes to run. While most hardware crypto implementations are constant-time nowadays, timing attacks are still actively used, mostly in blind SQL injection.
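To make the leak concrete, here is a minimal Python sketch of the naive square-and-multiply loop. It is an illustration rather than code from the original article: the function name is made up for this example, and it counts the extra multiplications instead of measuring wall-clock time, on the assumption that each multiplication costs roughly the same amount of time.

```python
def square_and_multiply(base, exponent, modulus):
    """Naive left-to-right modular exponentiation.

    Returns (result, multiplies), where `multiplies` counts the extra
    multiplications. The count stands in for running time here
    (an assumption made for this sketch).
    """
    result = 1
    multiplies = 0
    for bit in bin(exponent)[2:]:
        # A squaring happens for every bit of the exponent.
        result = (result * result) % modulus
        if bit == "1":
            # The extra multiplication happens only for "1" bits,
            # which is the data-dependent work a timing attack observes.
            result = (result * base) % modulus
            multiplies += 1
    return result, multiplies


# Two exponents of the same bit length but different numbers of "1" bits.
_, sparse_cost = square_and_multiply(7, 0b10000001, 1000003)
_, dense_cost = square_and_multiply(7, 0b11111111, 1000003)
```

Both exponents are eight bits long, so both runs perform eight squarings, but the dense exponent triggers more multiplications. A constant-time implementation removes that difference by doing the same work for every bit.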

JavaScript API to Recognize Humans vs Bots in Chrome

Privacy Pass, an open-source browser extension, was a step in the right direction, keeping privacy at its core. It helps users avoid repeated CAPTCHA challenges by using a set of tokens/passes. Let’s look at how it works. Users download the Privacy Pass extension for Chrome or Firefox, after which the Privacy Pass icon appears in the browser. They then visit the CAPTCHA website and answer the CAPTCHA challenge, which grants 30 tokens/passes; these tokens are stored in the extension for future use. The concept is simple: when the user visits another page, the Privacy Pass extension presents these tokens. And the great thing here is that each token goes through a cryptographic process known as “blinding” that shields users’ privacy. ... Google has recently started developing a Trust Token API. It is being developed as a substitute for third-party cookies, to fight fraud in online advertising by differentiating bots from humans. More importantly, Google Trust Tokens will distinguish bots from real humans and make third-party cookies obsolete in Google Chrome.
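The idea behind “blinding” can be shown with a toy Python sketch. This is not the extension's real protocol, which uses a more elaborate elliptic-curve construction. The tiny group parameters, the function names, and the server key below are all assumptions chosen for readability, and they offer no real security. The point is only that the server signs a value it cannot link back to the token the user later holds.

```python
import hashlib
import secrets

# Toy parameters (assumption, far too small for real use):
# P is a safe prime, P = 2*Q + 1, and the squares mod P form a group of prime order Q.
P = 1019
Q = 509


def hash_to_group(token: bytes) -> int:
    """Map a token to an element of the order-Q subgroup of squares mod P."""
    digest = int.from_bytes(hashlib.sha256(token).digest(), "big")
    base = 2 + digest % (P - 3)  # a value in 2..P-2
    return pow(base, 2, P)


def blind(element: int):
    """Client side: hide the element behind a random exponent r."""
    r = secrets.randbelow(Q - 1) + 1  # r in 1..Q-1, so it is invertible mod Q
    return pow(element, r, P), r


def server_sign(blinded: int, server_key: int) -> int:
    """Server side: sign the blinded value without seeing the original element."""
    return pow(blinded, server_key, P)


def unblind(signed: int, r: int) -> int:
    """Client side: strip the blinding factor, leaving element**server_key."""
    r_inv = pow(r, -1, Q)  # modular inverse, requires Python 3.8+
    return pow(signed, r_inv, P)


server_key = 123                      # hypothetical server secret
element = hash_to_group(b"pass-1")    # hypothetical token value
blinded, r = blind(element)
signed = server_sign(blinded, server_key)
token_signature = unblind(signed, r)
```

After unblinding, `token_signature` equals what the server would have produced by signing `element` directly, yet the server only ever saw `blinded`, so it cannot connect the signing request to the token that is spent later.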

Three Ways Machine Learning Can Change Incident Management

Without fully addressing the underlying issue, companies virtually guarantee

that the same problem – or a similar one – will reoccur. Not identifying root

cause often prevents a durable fix. In addition, companies lose the opportunity

to proactively improve application code or infrastructure based on real-world

experiences and issues. Postmortems may only result in reviews of monitoring and

observability solutions and the inevitable updates to alert rules. Most DevOps

professionals not only understand but have lived through these frustrations on

an ongoing basis. Management, then, often wonders why their systems are so

unstable. Changing the model for incident management has been limited by a

combination of overriding urgency and short-staffed, overworked

teams. Although AI and machine learning have been positioned as the panacea for

nearly every kind of technical ill, this is a clear case where “machines” could

fundamentally enhance human efforts to improve a situation. The best

troubleshooters exhibit a combination of instinct, experience and patience to

carefully sift through reams of data, spotting unusual events and their

correlation with bad outcomes.

How to get your multi-cloud data architecture strategy on track for long-term success

Technology innovation is constantly moving forward, so don’t think single cloud, even though you might feel that you’re saving time. Internal application teams, and the databases and tools they leverage for data-rich applications, need to support multiple clouds. Take a long-term view of resiliency: you might need to leverage multiple clouds for scale, or in times of duress for critical applications. Your strategy needs to work across multiple clouds, and while you should pick the application that suits your immediate needs, keep flexibility in mind so that you’re able to pick another cloud further down the line. The cloud now has very clear standards as defined by the Cloud Native Computing Foundation (CNCF). You should demand the same of your database. Most proprietary innovations are now becoming open source and standards across multiple cloud vendors. A perfect example of this is Kubernetes, which originated at Google over ten years ago. Stick to the standards, reduce custom development, and set yourself up for multi-cloud success. We have a lot to thank the cloud platform vendors for, including Amazon, Google, Microsoft, and others.

The Dark Side Of Tech: Four Potential Problems To Keep An Eye On In 2021

One of the biggest mistakes companies make when trying to automate their processes is thinking that technology, in and of itself, is the answer. As a result, they go out and buy subscriptions to software tools they never end up using, because they fail to properly integrate the tools into their existing organization and processes. Between 32% and 41% of what a company spends on software internally gets wasted for this reason. As AI continues to promise endless automation potential, this problem of “buying but failing to integrate” will likely accelerate. Companies will buy tools they think will solve all their problems without taking the time to think deeply about how to properly integrate those tools into their existing systems and infrastructure. A decade ago, it was unfathomable for most companies to host their data in the public cloud. Today, not only is that conventional wisdom, but it’s becoming increasingly popular and expected. Netflix has been a prime example of how companies can get through the long and arduous process of migrating to the cloud, a process that started in 2008 when Netflix “experienced a major database corruption.”

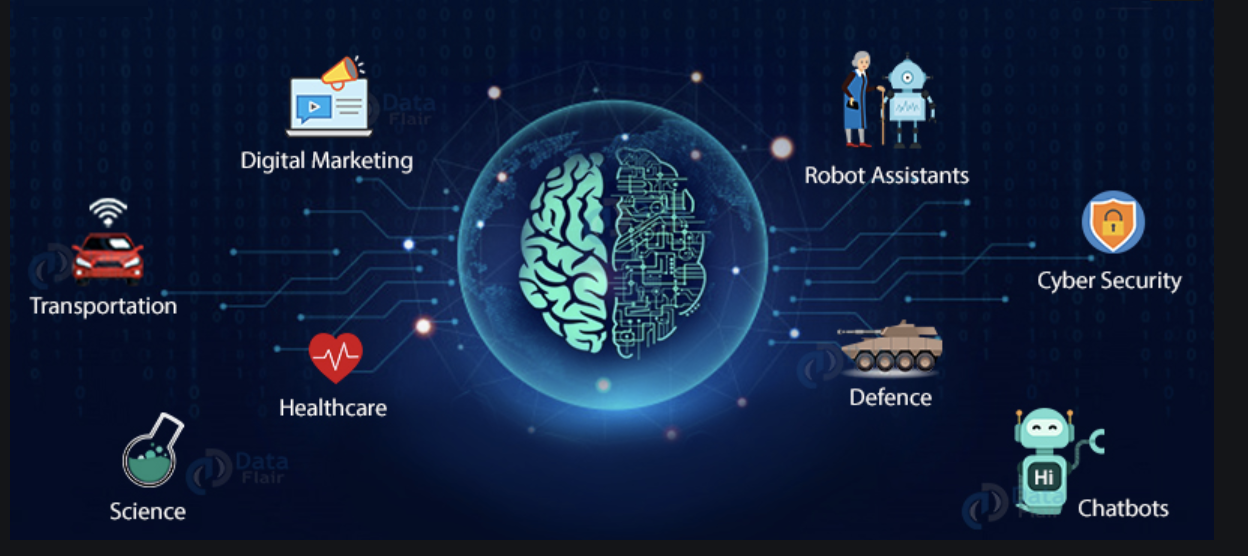

Future of AI

Today, AI is hugely integrated in all aspects of our day-to-day lives. From

chatbots, online shopping, smartphones, social networking to ride sharing - AI

is being applied in everyday apps that we use. The huge amounts of data that all

these apps are gathering about our likes and dislikes, our searches, our

purchases, our movements and almost every aspect of our lives, is contributing

further to advancement in AI. All this data is being used to train and fine-tune

these AI and ML algorithms to learn and predict what we want with even more

accuracy. ... AI has already been applied in healthcare with the use of

chatbots to provide real time assistance to patients and to predict ICU

transfers or patient risks. It has huge potential to transform how we administer

healthcare in the future. AI algorithms will enable healthcare providers to

analyze data and tailor healthcare to each patient. ML algorithms will keep

learning as they interact with training data, to provide precise and accurate

clinical decisions with respect to patient diagnostics, treatment and care, and

predict patient outcomes. In the field of transportation, one area where AI will

continue to make improvements is self-driving vehicles. Google and Tesla have

already launched autonomous cars.

Cloud Security Blind Spots: Where They Are and How to Protect Them

While most security practitioners know accidental data exposure is a common

cloud security issue, many don't know when it's happening to them. This was the

crux of a talk by Jose Hernandez, principal security researcher, and Rod Soto,

principal security research engineer, both with Splunk, who explored the ways

corporate secrets are exposed on public repositories. In today's environments,

credentials are everywhere: SSH key pairs, Slack tokens, IAM secrets, SAML

tokens, API keys for AWS, GCP, and Azure, and many others. A common risk

scenario is when credentials aren't properly protected and left exposed, most

often in a public repository – Bitbucket, GitLab, GitHub, Amazon S3, and Open

DB are the main public repos for software. "If you are an attacker and you're

trying to find somebody that, either by omission or neglect, embedded

credentials that could be reused, these would be your sources of leaked

credentials," Soto said, noting these can help attackers pivot between endpoints

and the cloud. Splunk researchers found there are 276,165 companies with leaked

secrets in GitHub. ... More organizations have a "converged perimeter," a term

he used to define environments with assets both behind an Internet gateway, such

as DevOps and ITOps, and in the cloud.
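As a rough illustration of how embedded credentials can be spotted before they reach a public repository, here is a small Python sketch that scans text for secret-like strings. It is not the Splunk researchers' method. The two patterns are assumptions made for this example: one is the widely used heuristic for AWS access key IDs (an `AKIA` prefix followed by 16 uppercase letters or digits), the other is a loose match for quoted values assigned to names containing `api_key`, `secret`, or `token`. Real scanners use many more rules and will still produce false positives and misses.

```python
import re

# Heuristic patterns (assumptions for this sketch, not an exhaustive rule set).
AWS_ACCESS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")
GENERIC_SECRET = re.compile(
    r"(?i)(api[_-]?key|secret|token)\s*[:=]\s*['\"]([^'\"]{8,})['\"]"
)


def find_leaked_secrets(text):
    """Return (line_number, kind, value) for each secret-like match in text."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in AWS_ACCESS_KEY_ID.finditer(line):
            findings.append((lineno, "aws_access_key_id", match.group(1)))
        for match in GENERIC_SECRET.finditer(line):
            findings.append((lineno, match.group(1).lower(), match.group(2)))
    return findings


# Made-up sample content; the AWS value is the placeholder key from AWS documentation.
sample = (
    'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
    'name = "demo"\n'
    "slack_token = 'xoxb-not-a-real-token'\n"
)
findings = find_leaked_secrets(sample)
```

Running a check like this in a pre-commit hook or CI job is one way to catch credentials "by omission or neglect" before they are pushed, although it does not replace rotating any key that has already been exposed.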

Quote for the day:

"I think leadership's always been about

two main things: imagination and courage." -- Paul Keating
