Quote for the day:

"Life is 10% what happens to me and 90% of how I react to it." -- Charles Swindoll

Hybrid AI: The future of certifiable and trustworthy intelligence

An emerging approach in AI innovation is hybrid AI, which combines the scalability of machine learning (ML) with the constraint-checking and provenance of symbolic models. Hybrid AI forms a foundation for system-level certification and helps CIOs balance the pursuit of performance with the need for accountability. ... Clustering, a core unsupervised learning technique, organizes unlabeled data into groups based on similarity. It’s widely used to segment customers, group documents or analyze sensor data by measuring distances in a numeric feature space. But conventional clustering works on similarity alone and has no grasp of meaning, so it can group items by coincidence rather than concept. ... For enterprise leaders, verifiability isn’t optional; it’s a governance requirement. Systems that support strategic or regulatory decisions must show constraint conformance and leave a traceable decision path. Ontology-driven clustering provides that foundation, creating an auditable chain of logic aligned with frameworks such as the NIST AI Risk Management Framework. In both government and industry, this hybrid approach makes AI more accountable and reliable. Trustworthiness is not a checkbox but an assurance case that connects data science, compliance and oversight. An organization that cannot trace what was allowed into a model or which constraints were applied does not truly control the decision.
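The excerpt does not spell out how ontology-driven clustering works, so the following is only a minimal sketch of the general idea under stated assumptions: a hypothetical flat ontology that maps each concept to one category, and a simple greedy distance-threshold clustering rather than any specific published algorithm. An item may join a cluster only if it is numerically close and also shares that cluster's ontology category, and every join, rejection, and new cluster is written to an audit log, giving the kind of traceable decision path described above.

```python
import math
from dataclasses import dataclass

# Hypothetical toy ontology (an assumption for illustration): each concept
# maps to one parent category. Real ontologies are richer hierarchies.
ONTOLOGY = {
    "laptop": "electronics",
    "phone": "electronics",
    "apple": "food",
    "banana": "food",
}


@dataclass
class Item:
    name: str
    concept: str      # must be a key in ONTOLOGY
    features: tuple   # numeric feature vector


@dataclass
class Cluster:
    members: list
    category: str     # ontology category shared by every member


def ontology_clustering(items, threshold):
    """Greedy distance-threshold clustering with an ontology constraint.

    An item joins the first cluster that has a member within `threshold`
    AND the same ontology category; otherwise it starts a new cluster.
    Every decision is appended to an audit log.
    """
    clusters, log = [], []
    for item in items:
        category = ONTOLOGY[item.concept]
        placed = False
        for idx, cluster in enumerate(clusters):
            nearest = min(math.dist(item.features, m.features) for m in cluster.members)
            if nearest > threshold:
                continue  # not similar enough; nothing to record
            if cluster.category != category:
                # Similar by distance, but the ontology forbids the merge.
                log.append(f"REJECT {item.name} -> cluster {idx}: "
                           f"close ({nearest:.2f}) but {category} != {cluster.category}")
                continue
            cluster.members.append(item)
            log.append(f"JOIN {item.name} -> cluster {idx} (d={nearest:.2f}, {category})")
            placed = True
            break
        if not placed:
            clusters.append(Cluster([item], category))
            log.append(f"NEW cluster {len(clusters) - 1} for {item.name} ({category})")
    return clusters, log


# Usage: apple-1 is numerically close to the electronics items by coincidence.
items = [
    Item("laptop-1", "laptop", (1.0, 1.0)),
    Item("phone-1", "phone", (1.2, 0.9)),
    Item("apple-1", "apple", (1.1, 1.1)),
    Item("banana-1", "banana", (5.0, 5.0)),
]
clusters, log = ontology_clustering(items, threshold=0.5)
for entry in log:
    print(entry)
```

In this toy run, apple-1 ends up in its own cluster even though it sits closer to laptop-1 than phone-1 does; the log records the rejected merge and the reason, which is the sort of constraint-conformance evidence an auditor could inspect. A production system would need a real ontology and a proper clustering algorithm, both beyond what this sketch attempts.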

Upwork study shows AI agents excel with human partners but fail independently

The research challenges both the hype around fully autonomous AI agents and fears that such technology will imminently replace knowledge workers. "AI agents aren't that agentic, meaning they aren't that good," Andrew Rabinovich, Upwork's chief technology officer and head of AI and machine learning, said in an exclusive interview with VentureBeat. "However, when paired with expert human professionals, project completion rates improve dramatically, supporting our firm belief that the future of work will be defined by humans and AI collaborating to get more work done, with human intuition and domain expertise playing a critical role." ... The research reveals stark differences in how AI agents perform with and without human guidance across different types of work. For data science and analytics projects, Claude Sonnet 4 achieved a 64% completion rate working alone but jumped to 93% after receiving feedback from a human expert. In sales and marketing work, Gemini 2.5 Pro's completion rate rose from 17% independently to 31% with human input. OpenAI's GPT-5 showed similarly dramatic improvements in engineering and architecture tasks, climbing from 30% to 50% completion. The pattern held across virtually all categories, with agents responding particularly well to human feedback on qualitative, creative work requiring editorial judgment (areas like writing, translation, and marketing), where completion rates increased by up to 17 percentage points per feedback cycle.
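The excerpt reports completion rates as percentages, while the "up to 17 percentage points per feedback cycle" figure is an absolute difference. A quick calculation on the three quoted pairs keeps absolute (percentage-point) gains apart from relative gains; the rates come straight from the text, and the dictionary labels and the `gains` helper are illustrative shorthand only.

```python
# Completion rates (percent) quoted in the excerpt:
# (agent working alone, agent after human feedback).
RESULTS = {
    "Claude Sonnet 4, data science and analytics": (64, 93),
    "Gemini 2.5 Pro, sales and marketing": (17, 31),
    "GPT-5, engineering and architecture": (30, 50),
}


def gains(alone, with_human):
    """Return (absolute gain in percentage points, relative gain in percent)."""
    pp = with_human - alone
    return pp, 100 * pp / alone


for label, (alone, with_human) in RESULTS.items():
    pp, rel = gains(alone, with_human)
    print(f"{label}: {alone}% -> {with_human}% = +{pp} pp ({rel:.0f}% relative)")
```

By this arithmetic, Gemini 2.5 Pro's absolute gain (14 points) is the smallest of the three, yet its relative gain (about 82%) is the largest; Claude Sonnet 4 gains 29 points (about 45%) and GPT-5 gains 20 points (about 67%).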

Debunking AI Security Myths for State and Local Governments

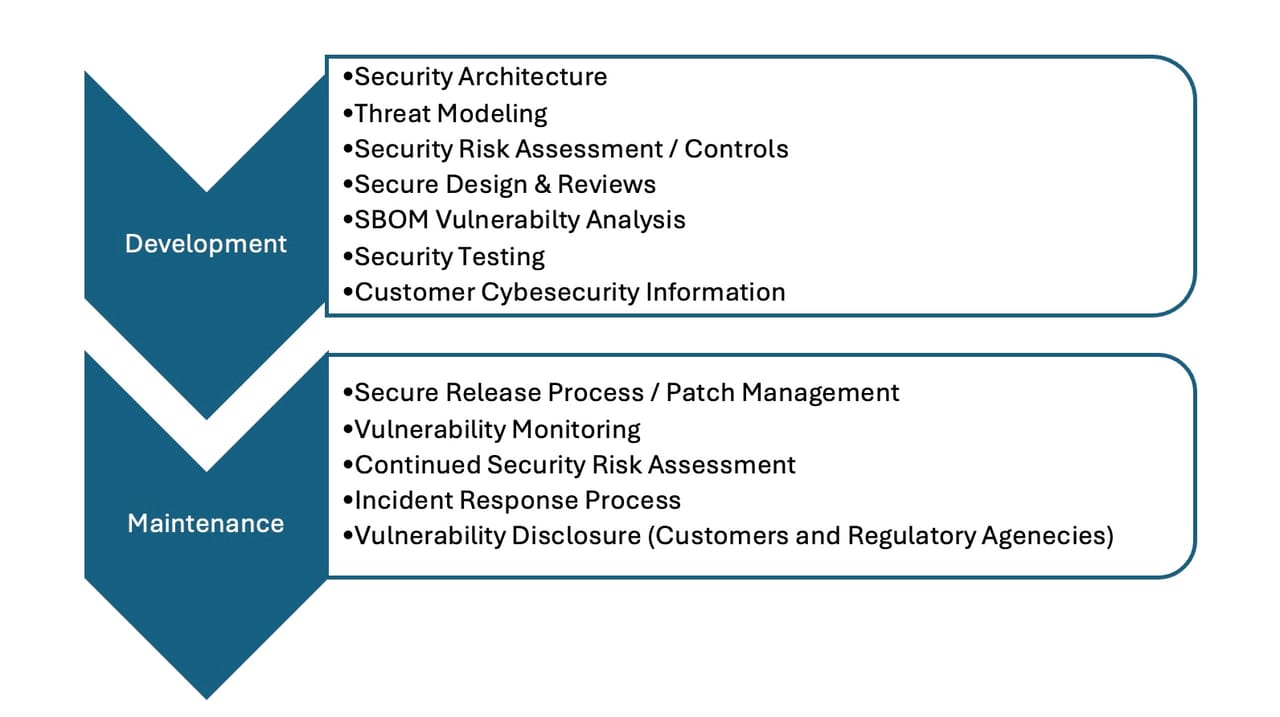

As state and local governments adopt AI, they must return to cybersecurity basics and strengthen core principles to build resilience and earn public trust. For AI workloads, governments should apply zero-trust principles: for example, continuously verifying identities, limiting access by role and segmenting system components. Clear data policies for access, protection and backups help safeguard sensitive information and keep systems resilient. Perhaps most important, security teams need to be involved early in AI design conversations so that security is built in from the start. ... As state and local governments deploy more sophisticated AI systems, it’s crucial to view the technology as a partner to human intelligence, not a replacement for it. There is a misconception that advanced AI (particularly agentic AI, which can make its own decisions) eliminates the need for human oversight. In truth, responsible AI deployment hinges on human oversight and strong governance. The more autonomous an AI system becomes, the more essential human governance is. ... Securing AI is not a one-time milestone. It’s an ongoing process of preparation and adaptation as the threat landscape evolves. For state and local governments advancing their AI initiatives, the path forward centers on building resilience and confidence. And the good news is, they don’t need to start from scratch. The tools and strategies already exist.

When Open Source Meets Enterprise: A Fragile Alliance

The answer is by no means simple; it depends on a number of factors, of which the vendor’s ethos is one of the most important. Some vendors genuinely give back to the open-source communities from which they gain value. Others are more extractive, building closed proprietary layers atop open foundations and pushing little back to the community. The difference matters enormously. Organisations hold true optionality when a vendor actively maintains the open-source core while keeping its proprietary features genuinely additive rather than substitutive. In theory, they could then shift to another provider or take the open-source components in-house should the relationship sour. ... Commercial open-source vendors can provide training, certification, and managed services to fill this gap, for a fee, naturally. Then there is innovation velocity. Open-source communities can move incredibly quickly, with contributions from numerous sources, enabling organisations to adopt cutting-edge features faster than conventional enterprise procurement cycles allow. Conversely, vital security patches can stall if a project lacks maintainers, creating unacceptable exposure for risk-averse organisations. ... Ultimately, the question is not whether open source should exist within the enterprise; that debate has been resolved. The challenge lies in thoughtfully incorporating open-source components into broader technology strategies that balance innovation, resilience, sovereignty, and pragmatic risk management.

The Hidden Cost of Technical Debt in Databases

At its core, technical debt represents the trade-off between speed and quality. When a development team chooses a “quick and dirty” path to meet a deadline, it incurs debt. The database world sees the same phenomenon. ... The first step to eliminating technical debt is recognition. DBAs must adopt the mindset that managing technical debt is part of the job. Although it can be tempting to fix a problem quickly and move on, DBAs should always consider the potential future impact of any change they make. ... Importantly, DBAs also sit at the crossroads between technical staff and business stakeholders. They can explain how technical debt translates into business impact: lost productivity, slower application delivery, higher infrastructure costs, and greater operational risk. This ability to connect database health to business outcomes is essential for winning support to tackle debt. In practice, the DBA’s role involves three things: identification, communication, and advocacy. DBAs must identify where debt exists, communicate its impact clearly, and advocate for resources to remediate it. Sometimes that means lobbying for time to redesign a schema; other times it means convincing leadership that archiving inactive data will save more money than buying new storage. At still other times, it may mean championing a new tool or process that automates required tasks and keeps new debt from accumulating.

Seek Skills, Not Titles

Titles feel good, at first. They make your resume and LinkedIn profile look prettier. But when you confuse your title with your identity, you’re setting yourself up for a rude awakening. Titles can be taken away. Or they just expire, like milk in the back of the fridge. Your skills, on the other hand? No one can take those away from you. ... Some roles taught me how to work hard and build trust. Some taught me to communicate clearly and adapt quickly. Others taught me to see the big picture and act decisively. The titles didn’t teach me those skills; the experience did. ... It’s easy to let your job title become your identity, especially when you’re leading at a high level. Everyone wants something from you: board members, investors, employees. They project their version of who they think you should be. You must have clarity on your core values. Not the company’s core values, but your own. Otherwise, you’ll find yourself playing a dozen different roles without knowing which one is actually you. ... Don’t wait for a title to teach you a skill. Start now. The best way to grow is to pursue skills that will open up opportunities, especially those that align with your personal values. When your values and skills match, your impact multiplies, regardless of the title. When has pursuing a title led you away from the skills you truly needed? What impact have you seen when your skills are aligned with your values? How might you need to detour to get back on the right track?

Strategic Autarky for the AI Age

AI is still emerging. Overspecifying rules, enforcing rigid certification pathways, or creating sector-wise chokepoints too early can stifle the very innovation we aim to promote. Burdensome compliance layers, mandated algorithmic disclosures, prescriptive model testing protocols, and fragmented approval processes can all create friction. Overregulation can discourage experimentation, raise the cost of market entry, and drain our fastest-growing startups. The risk is simple. Innovation flight. Loss of competitive edge. A domestic ecosystem slowed down before it reaches maturity. Balancing sovereignty and innovation, therefore, becomes the central task. India cannot afford to remain dependent, but it also cannot smother its own technological growth. India’s new AI Governance Framework addresses this balance directly. It follows seven guiding principles built around trust, accountability, transparency, privacy, security, human-centricity, and collaboration. Its standout feature is a “light touch” approach. Instead of imposing rigid controls, the framework sets high-level principles that can evolve with technology. It relies on India’s existing legal foundation, including the Digital Personal Data Protection Act and the Information Technology Act, and is supported by institutional structures such as the AI Governance Group and the AI Safety Institute. The framework contains several strong provisions. It encourages voluntary risk assessments rather than mandatory rigid audits for most systems.

Google Brain founder Andrew Ng thinks you should still learn to code - here's why

"Because AI coding has lowered the bar to entry so much, I hope we can encourage everyone to learn to code -- not just software engineers," Ng said during his keynote. How AI will affect jobs and the future of work is still unfolding. Regardless, Ng told ZDNET in an interview that he thinks everyone should know the basics of how to use AI to code, much as everyone should know "a little bit of math" -- still a hard skill, but one that applies across many careers, for whatever you may need. "One of the most important skills of the future is the ability to tell a computer exactly what you want it to do for you," he said, noting that everyone should know enough to speak a computer's language without needing to write code themselves. "Syntax, the arcane incantations we use, that's less important." ... The new challenge for developers, Ng said during the panel, will be coming up with the concept of what they want. Hedin agreed, adding that if AI is doing the coding in the future, developers should focus on their intuition when building a product or tool. "The thing that AI will be worst at is understanding humans," he said. ... He cited the overhiring sprees tech companies went on -- and then ultimately reversed -- during the COVID-19 pandemic as the primary reason entry-level coding jobs are hard to come by. Beyond that, though, it's a question of grads having the right kind of coding skills.

How Development Teams Are Rethinking the Way They Build Software

While low-code/no-code platforms accelerate development, they can fall short when teams need high levels of customization or are dealing with complex systems. Custom solutions might be more cost-effective for highly specialized applications. Low-code and no-code platforms must provide clear guidance to users within a structured framework to minimize mistakes, and they may offer less flexibility than traditional coding. AI tools can readily generate code, suggest optimizations, or even create entire applications from natural language prompts. However, they work best when integrated into a broader development ecosystem, not as standalone solutions. ... The future of software development appears to be a blended approach, where traditional programming, low-code/no-code platforms, and AI each play a role. The key to success in this dynamic landscape is understanding when to use each method and ensuring that C-level executives, team leaders, and team members are versatile and use technology to enhance, rather than replace, human ingenuity. Let me share my firsthand experience. When I asked my developers a year ago how they thought using AI tools at work would evolve, many said: “I expect that as the tools improve, I’ll shift from mostly writing code to mostly reviewing AI-generated code.” Fast forward a year, and when we posed the same question, a common theme emerged: “We are spending less time writing the mundane stuff.”
