Quote for the day:

"Ideas are easy, implementation is hard." -- Guy Kawasaki

India’s AI Paradox: Why We Need Cloud Sovereignty Before Model Sovereignty

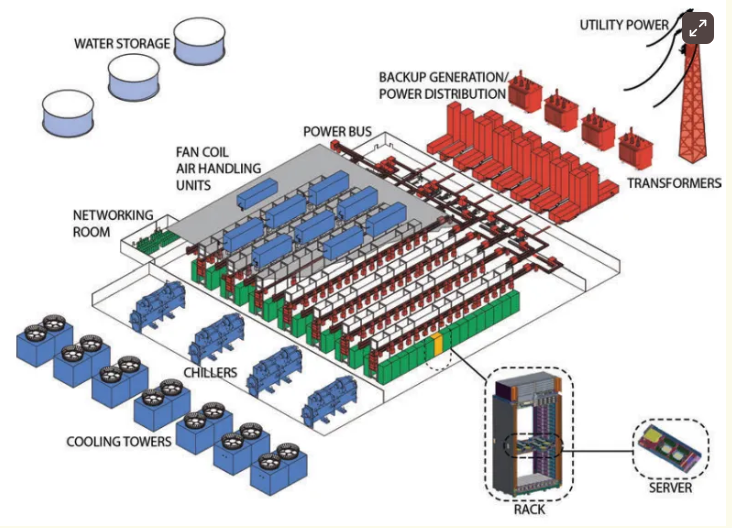

Cloud sovereignty, meaning control over infrastructure, data, and digital operations, has become a new pillar of national security. It can safeguard the country’s national interests, including (but not limited to) industrial data, citizen information, and AI workloads. For India specifically, building sovereign digital infrastructure guarantees continuity and trust. It gives the country the power to enforce its own data laws, manage computing resources for homegrown AI systems, and stay insulated from the tremors of foreign policy decisions or transnational outages. It’s the digital equivalent of producing energy at home: self-reliant, secure, and governed by national priorities. ... Sovereign infrastructure is less a matter of where data sits and more a matter of who controls it and how securely it is managed. As systems grow more connected and AI workloads spread across networks, security needs to be built into every layer and stage of technology, not added as an afterthought. That’s where edge computing and modern cloud-security frameworks come in. ... Neglecting cloud sovereignty carries a real cost. If our AI models continue to depend on infrastructure that lies outside our jurisdiction, changes in foreign regulations could suddenly restrict access to critical training datasets.

Do CISOs need to rethink service provider risk?

Security leaders face mounting pressure from boards to provide assurance about third-party risks, while service provider vetting processes are becoming more onerous, a growing burden for both CISOs and their providers. At the same time, AI is being integrated into more business systems and processes, opening new risks. CISOs may be forced to rethink how they vet partners, keeping the focus on risk reduction while treating partnerships as a shared responsibility. ... When looking to engage a services provider, his vetting process starts with building relationships first and then working towards a formal partnership and delivery of services. He believes dialogue helps establish trust and transparency and underpins the partnership approach. “A lot of that is ironed out in that really undocumented process. You build up those relationships first, and then the transactional piece comes after that.” ... “If your questions stop once the form is complete, you’ve missed the chance to understand how a partner really thinks about security,” Thiele says. “You learn a lot more from how they explain their risk decisions than from a yes/no tick box.” Transparency and collaboration are at the heart of stronger partnerships. “You can’t outsource accountability, but you can become mature in how you manage shared responsibility,” Thiele says. ... With AI, Cruz has started to monitor vendors acquiring ISO 42001 certification for AI governance. “It’s a trend I’m seeing in some of the work that we’re doing,” she says.

The Silent Technical Debt: Why Manual Remediation Is Costing You More Than You Think

A far more challenging and costly form of this debt has silently embedded itself into the daily operations of nearly every software development team, and most leaders don’t even have a line item for it. This liability is remediation debt: the ever-growing cost of manually fixing vulnerabilities in the open source components that form the backbone of modern applications. For years, we’ve accepted this process as a necessary chore. A scanner finds a flaw, an alert is sent, and a developer is pulled from their work to hunt down a patch. ... The complexity doesn’t stop there. The report reveals that 65% of manual remediation attempts for a single critical vulnerability require updating at least five additional “transitive” dependencies (dependencies of dependencies). This is the dreaded “dependency conundrum” that developers lament, where fixing one problem creates a cascade of new compatibility issues. ... It’s time to reframe how we deal with this: the goal is not just to find vulnerabilities faster but to remediate them instantly. The path forward lies in shifting from manual labor to intelligent remediation. This means evolving beyond tools that simply populate dashboards with problems and embracing platforms that solve them at their source. Imagine a system where, once a vulnerability is identified, the platform does not create a ticket but automatically builds, tests, and delivers a fully patched and compatible version of the necessary component directly to the developer.
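
To make the transitive-dependency problem concrete, here is a minimal Python sketch, assuming a hypothetical in-memory dependency graph with made-up package names. It finds the packages that sit between an application and a vulnerable component, which are the ones that may need a version bump when the fix cannot be applied directly. It deliberately ignores version constraints and compatibility testing, which real package managers must resolve, and it does not attempt to reproduce the report’s 65% figure.

```python
from collections import deque

# Hypothetical graph shape: each package maps to the packages it depends on directly.
Graph = dict[str, list[str]]


def reachable(graph: Graph, start: str) -> set[str]:
    """Breadth-first search: every package reachable from `start`
    by following at least one dependency edge."""
    seen: set[str] = set()
    queue = deque(graph.get(start, []))
    while queue:
        pkg = queue.popleft()
        if pkg not in seen:
            seen.add(pkg)
            queue.extend(graph.get(pkg, []))
    return seen


def reverse(graph: Graph) -> Graph:
    """Flip every edge so we can walk from a dependency up to its dependents."""
    rev: Graph = {}
    for pkg, deps in graph.items():
        for dep in deps:
            rev.setdefault(dep, []).append(pkg)
    return rev


def remediation_chain(graph: Graph, app: str, vulnerable: str) -> set[str]:
    """Packages that may need a new version to bring a fix for `vulnerable`
    into `app`: the vulnerable package plus every package between the two."""
    below_app = reachable(graph, app)
    if vulnerable not in below_app:
        return set()  # the app does not use the vulnerable package at all
    above_vulnerable = reachable(reverse(graph), vulnerable)
    return (below_app & above_vulnerable) | {vulnerable}


# Made-up example: my-app never depends on tls-lib directly.
example: Graph = {
    "my-app": ["web-framework", "logging"],
    "web-framework": ["http-client", "template-engine"],
    "http-client": ["tls-lib"],
    "template-engine": ["parser"],
    "logging": ["formatter"],
}

print(sorted(remediation_chain(example, "my-app", "tls-lib")))
# ['http-client', 'tls-lib', 'web-framework']
```

Here only `tls-lib` contains the flaw, but `http-client` and `web-framework` may also need new releases before `my-app` can pick up the fix. That is the cascade the excerpt calls the dependency conundrum.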

AI Isn’t Coming for Data Jobs – It’s Coming for Data Chaos

Data chaos arises when organizations lose control of their information landscape. It’s the confusion born from fragmentation, duplication, and inconsistency, when multiple versions of “truth” compete for authority. Poor data quality and disconnected data governance processes often amplify this chaos. It manifests as conflicting reports, inaccurate dashboards, mismatched customer profiles, and entire departments working from isolated datasets that refuse to align. ... Recent industry analyses reveal an accelerating imbalance in the data economy. While nearly 90% of the world’s data has been generated in just the past two years, data professionals and data stewards represent only about 3% of the enterprise workforce, creating a widening gap between information growth and the human capacity to govern it. ... Data chaos doesn’t just strain systems; it strains people. As enterprises struggle to keep pace with growing data volume and complexity, the very professionals tasked with managing it find themselves overwhelmed by maintenance work. ... When applied strategically, AI can transform the data management lifecycle from ingestion to governance, reducing human toil and freeing engineers to focus on design, quality, and strategy. Paired with an intelligent data catalog, these systems make information assets instantly discoverable and reusable across business domains. AI-driven data classification tools now tag, cluster, and prioritize assets automatically, reducing manual oversight.

Why IT projects still fail

Failure today means an IT project doesn’t deliver expected benefits, according to CIOs, project leaders, researchers, and IT consultants. Failure can also mean a project doesn’t produce returns, runs so late that it is obsolete when completed, or fails to engage users, who then shun it. ... IT leaders, and now business leaders too, get enamored with technologies despite years of admonishments not to. The result is a misalignment between project objectives and business goals, experienced CIOs and veteran project managers say. ... Stettler says a business owner with clear accountability is needed to ensure that business resources are available when required and that process changes and worker adoption happen. He notes that having CIOs, instead of a business owner, try to make those things happen “would be a tail-wagging-the-dog scenario.” ... “Executives need to make more time and engage across all levels of the program. They can’t just let the leaders come talk to them. They need to do spot checks and quality reviews of deliverable updates, and check in with those throughout the program,” Stettler says. “And they have to have the attitude of ‘Bring stuff to me when I can be helpful.’” ... Phillips acknowledges that project teams don’t usually overlook entire divisions, but they sometimes fail to identify and include all the stakeholders they should in the project process. Consequently, they miss key requirements, regulations to consider, and opportunities to capitalize on.

The Human Plus AI Quotient: Inside Ascendion's strategy to make AI an amplifier of human talent

Technical skills evolve: mainframes lasted forty years, client-server about twenty, and digital waves even less. Skills will come and go, so we focus on candidates with a strong willingness to learn and invest in themselves. That’s foundational. What’s changed now is the importance of being open to AI. We don’t require deep AI expertise at the outset, but we do look for people who are ready to embrace it. This approach explains why our workforce adapts to AI so quickly: it’s ingrained in how we hire and develop our people. ... The war for talent has always existed; only the scale and timing change. For us, the quality of work and the opportunities we provide are key to retention. Being an AI-first company is a big differentiator, and that mindset is wired into our DNA. Our employees see a real difference in how we approach projects, always asking how AI can add value. We’ve created an environment that encourages experimentation and learning, and the IP our teams develop, sometimes even around best practices for AI adoption, becomes part of our organisational knowledge base. ... The good news is that for a large cross-section of the workforce, "skilling in AI" is not about mastering mathematics; it's about improving English writing skills to prompt effectively. We often share prompt libraries with clients because the ability to ask the right question and interpret the output is a significant win.

Recruitment Class: What CIOs Want in Potential New Hires

Candidates should be comfortable operating in a very complex, deep digital ecosystem, Avetisyan said. Digital fluency now means much more than knowing how to use whatever tool is currently popular, including AI tools. There needs to be an awareness of the broader implications and responsibilities that come with implementing AI. "It's about integrating AI responsibly and designing for accessibility," Avetisyan said. Both are big challenges that must be tackled and kept continuously top of mind. AI should elevate user experiences. ... Candidates still need to demonstrate technical skills alongside human skills such as problem-solving, communication, and ethical awareness, she said. "You can't just be an exceptional coder and right away be effective in our organization if you don't understand all these other aspects," she said. One more thing: while vibe coding (letting AI shoulder much or most of the work) is a buzzy concept, she said she is not ready to turn her shop of developers into vibe coders. A more grounded approach to teaching AI fluency is, or should be, the educational mission. ... As for programming? A programmer is still a programmer, but the job has evolved to become more strategic, Ruch said. Technical talent will be needed; however, the first few revisions of code will be pre-written by AI based on the specifications it is given, he said.

Do programming certifications still matter?

“Certifications are shifting from a checkbox to a compass. They’re less about proving you memorized syntax and more about proving you can architect systems, instruct AI coding assistants, and solve problems end-to-end,” says Faizel Khan, lead AI engineer at Landing Point, an executive search and recruiting firm. ... Certifications really do two things, Khan adds. “First, they force you to learn by doing,” he says. “If you’re taking AWS Solutions Architect or Terraform, you don’t pass by guessing; you plan, build, and test systems. That practice matters. Second, they act as a public signal. Think of it like a micro-degree. You’re not just saying, ‘I know cloud.’ You’re showing you’ve crossed a bar that thousands of other engineers recognize.” But there are cons, too. “In tech, employers don’t just want credentials, they want proof you can deliver,” says Kevin Miller, CTO at IFS. “Programming certifications can be a valuable indicator of your baseline knowledge and competencies, especially if you’re early in your career or pivoting into tech, but their importance is dwindling.” ... “I’m more interested in a candidate’s attitude and aptitude: what problems they’ve solved, what they’ve built, and how they’ve approached challenges,” Watts says. “Certifications can show commitment and discipline, and they’re especially useful in highly specialized roles. But I’m cautious when someone presents a laundry list of certifications with little evidence of real-world application.”

Guarding the Digital God: The Race to Secure Artificial Intelligence

Securing an AI is fundamentally different from securing a traditional computer network. A hacker doesn’t need to breach a firewall if they can manipulate the AI’s “mind” itself. The attack vectors are subtle, insidious, and entirely new. ... The debate over whether people or AI should lead this effort presents a false choice. The only viable path forward is a deep, symbiotic partnership. We must build a system where the AI is the frontline soldier and the human is the strategic commander. The guardian AI should handle the real-time, high-volume defense: scanning trillions of data points, flagging suspicious queries, and patching low-level vulnerabilities at machine speed. The human experts, in turn, must set the strategy. They define the ethical red lines, design the security architecture, and, most importantly, act as the ultimate authority for critical decisions. If the guardian AI detects a major, system-level attack, it shouldn’t act unilaterally; it should quarantine the threat and alert a human operator who makes the final call. ... The power of artificial intelligence is growing at an exponential rate, but our strategies for securing it are lagging dangerously behind. The threats are no longer theoretical. The solution is not a choice between humans and AI, but a fusion of human strategic oversight and AI-powered real-time defense. For a nation like the United States, developing a comprehensive national strategy to secure its AI infrastructure is not optional.
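
The division of labor described here can be sketched as a small triage rule. This is a minimal Python illustration, not any product’s design: the `Detection` record, the 0 to 10 severity score, the `AUTO_PATCH_MAX_SEVERITY` threshold, the quarantine list, and the `ask_operator` callback are all hypothetical. Low-severity findings are patched automatically, other suspicious activity is flagged, and anything system-level is quarantined and handed to a human, whose answer becomes the final decision.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Callable


class Action(Enum):
    AUTO_PATCH = auto()            # low-level issue: fix at machine speed
    FLAG = auto()                  # suspicious but not system-level: record for review
    QUARANTINE_AND_ALERT = auto()  # major attack: contain, then defer to a human


@dataclass(frozen=True)
class Detection:
    description: str
    severity: int       # hypothetical 0-10 score from the guardian model
    system_level: bool  # True if the threat affects the system as a whole


# Hypothetical threshold: findings at or below this score are auto-patched.
AUTO_PATCH_MAX_SEVERITY = 3


def triage(d: Detection) -> Action:
    """Decide what the guardian AI is allowed to do on its own."""
    if d.system_level:
        return Action.QUARANTINE_AND_ALERT
    if d.severity <= AUTO_PATCH_MAX_SEVERITY:
        return Action.AUTO_PATCH
    return Action.FLAG


def respond(
    d: Detection,
    quarantine: list[Detection],
    ask_operator: Callable[[Detection], str],
) -> str:
    """Apply the triage result; system-level cases end with a human decision."""
    action = triage(d)
    if action is Action.QUARANTINE_AND_ALERT:
        quarantine.append(d)    # containment step (stand-in for real isolation)
        return ask_operator(d)  # the human operator makes the final call
    return action.name


if __name__ == "__main__":
    held: list[Detection] = []

    def operator(d: Detection) -> str:
        return f"operator reviewed: {d.description}"

    print(respond(Detection("outdated parser", 2, False), held, operator))
    print(respond(Detection("odd query pattern", 6, False), held, operator))
    print(respond(Detection("model weights tampered", 9, True), held, operator))
```

Keeping the human decision behind a callback means the automated path can never resolve a system-level incident by itself. It can only contain the threat and wait for the operator’s answer.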

[Figure 1: Answers to 3 Key Questions Determine the MVA Decisions and Tradeoffs]