Effective Strategies for Talking About Security Risks with Business Leaders

As with any difficult conversation, CISOs must pick the right time, place and strategy to discuss cyber risks with the executive team and staff. Instead of waiting for the opportunity to arise, CISOs should proactively engage with individuals at all levels of the organization to influence them and ensure they understand security policies and incident response. These conversations could take the form of monthly or quarterly meetings with senior stakeholders, which maintain a consistent cadence and create space to discuss how the threat landscape is evolving and to review each stakeholder's part of the business through a cybersecurity lens. They could also be casual watercooler chats with staff members, which not only help to educate and inform employees but also build vital internal relationships that can influence online behaviors.

In addition to talking, CISOs must listen to and learn about key stakeholders so they can tailor conversations to their interests and concerns. ... If you're talking to the board, you'll need to know the people around that table. What are their interests, and how can you communicate in a way that resonates with them and gets their attention? Use visualization techniques and find a "cyber ally" on the board who will back you and help reinforce your ideas and the information you share.

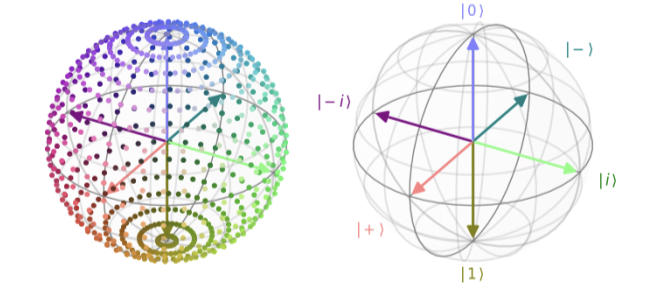

Is Explainable AI Explainable Enough Yet?

“More often than not, the higher the accuracy provided by an AI model, the more complex and less explainable it becomes, which makes developing explainable AI models challenging,” says Godbole. “The premise of these AI systems is that they can work with high-dimensional data and build non-linear relationships that are beyond human capabilities. This allows them to identify patterns at a large scale and provide higher accuracy. However, it becomes difficult to explain this non-linearity and provide simple, intuitive explanations in understandable terms.” Other challenges include providing explanations that are both comprehensive and easy to understand, and the reluctance of businesses to explain their systems fully for fear of divulging intellectual property (IP) and losing their competitive advantage.

“As we make progress towards more sophisticated AI systems, we may face greater challenges in explaining their decision-making processes. For autonomous systems, providing real-time explainability for critical decisions could be technically difficult, even though it will be highly necessary,” says Godbole. When AI is used in sensitive areas, explaining decisions that have significant ethical implications will become increasingly important, but also increasingly challenging.
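
To make the accuracy-versus-explainability tension concrete, here is a minimal sketch of one common post-hoc, model-agnostic explanation technique, permutation feature importance. Godbole does not mention it; it is swapped in here purely for illustration. The idea is to treat the model as a black box, shuffle one input feature at a time, and measure how much the error rises. The `black_box` function and the synthetic data are hypothetical stand-ins for a real non-linear model.

```python
import random
from typing import Callable, List

Model = Callable[[List[float]], float]


def mse(model: Model, X: List[List[float]], y: List[float]) -> float:
    """Mean squared error of the model's predictions against the targets."""
    return sum((model(row) - target) ** 2 for row, target in zip(X, y)) / len(y)


def permutation_importance(model: Model, X: List[List[float]], y: List[float],
                           n_repeats: int = 5, seed: int = 0) -> List[float]:
    """Average increase in error when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [list(row) for row in X]
            for row, value in zip(X_perm, column):
                row[j] = value
            increases.append(mse(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def black_box(row: List[float]) -> float:
    """Hypothetical non-linear model: uses features 0 and 1, ignores feature 2."""
    return row[0] * row[1] + row[0] ** 2


data_rng = random.Random(42)
X = [[data_rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(200)]
y = [black_box(row) for row in X]
scores = permutation_importance(black_box, X, y)
print([round(s, 3) for s in scores])
```

Feature 2 scores exactly zero because the model never reads it, while features 0 and 1 score above zero. Note what the scores leave out: they rank inputs but say nothing about the interaction between features 0 and 1, which is exactly the kind of non-linearity Godbole says is hard to explain in simple terms.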

The challenge of cloud computing forensics

Data replication across multiple locations complicates forensic processes that depend on pinpointing sources for analysis. Consider the challenge of retrieving deleted data from cloud systems: it is not just a technical obstacle but a matter of accountability that IT often fails to address until it’s too late. Multitenancy means resources are shared among multiple users, making it difficult to distinguish and segregate each user's data. This is a systemic problem for cloud security, and it is particularly problematic for cloud platform forensics. The NIST document acknowledges this challenge and recommends implementing access mechanisms and frameworks so companies can maintain data integrity and manage incident response. Because accounting happens on an ongoing basis, the mechanisms to deal with issues are already in place when those issues occur.

The lack of location transparency is a nightmare. Data resides in various physical jurisdictions, each with different laws and cultural considerations. Crimes may occur on a public cloud point of presence in a country that disallows warrants to examine the physical systems, whereas other countries give law enforcement more options. Guess which countries the criminals choose to leverage.
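
The accountability point (recording events on an ongoing basis so integrity can be demonstrated later) can be illustrated with a tamper-evident, hash-chained log: each entry's SHA-256 digest covers the previous entry's digest, so altering a past record breaks verification. This is a minimal sketch under our own assumptions; the NIST document is not being quoted as prescribing this design, and the event fields (`tenant`, `action`, `object`, `region`) are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class EvidenceLog:
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, event: Dict[str, Any]) -> str:
        """Record an event, chaining it to the previous entry's hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = _digest(prev_hash, event)
        self.entries.append({"event": dict(event), "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering or deleting a non-final entry fails."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append({"tenant": "tenant-a", "action": "delete", "object": "reports/q3.csv", "region": "eu-west"})
log.append({"tenant": "tenant-b", "action": "read", "object": "logs/app.log", "region": "us-east"})
print(log.verify())  # True

log.entries[0]["event"]["action"] = "read"  # simulate after-the-fact tampering
print(log.verify())  # False
```

A chain like this only detects tampering. It cannot detect the newest entries being dropped unless the latest hash is periodically stored somewhere the tenant cannot modify, and it does nothing to solve the jurisdictional problem described above.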

Is the rise of genAI about to create an energy crisis?

Though data center power consumption is expected to double by 2028, according to IDC research director Sean Graham, it’s still a small percentage of overall energy consumption: just 18%. “So, it’s not fair to blame energy consumption on AI,” he said. “Now, I don’t mean to say AI isn’t using a lot of energy and data centers aren’t growing at a very fast rate. Data center energy consumption is growing at 20% per year. That’s significant, but it’s still only 2.5% of the global energy demand.” “It’s not like we can blame energy problems exclusively on AI,” Graham said. ...

Beyond the pressure from genAI growth, electricity prices are rising due to supply and demand dynamics, environmental regulations, geopolitical events, and extreme weather events fueled in part by climate change, according to an IDC study published today. IDC believes the higher electricity prices of the last five years are likely to continue, making data centers considerably more expensive to operate. Against that backdrop, electricity suppliers and other utilities have argued that AI creators and hosts should be required to pay higher prices for electricity, as cloud providers did before them, because they’re rapidly consuming more compute cycles, and therefore more energy, than other users.
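
Graham's growth figures can be cross-checked with compound-growth arithmetic. Assuming, as our own reading, that the doubling is measured over the four years from 2024 to 2028, 20% annual growth compounds to roughly 2.07x, and the implied doubling time is about 3.8 years, so the two statements are consistent with each other.

```python
import math


def growth_multiple(annual_rate: float, years: int) -> float:
    """Total growth factor after compounding annual_rate for the given number of years."""
    return (1 + annual_rate) ** years


def doubling_time(annual_rate: float) -> float:
    """Years needed to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


# Assumption: 2024 baseline and 2028 target (4 years); 20% annual growth from the quote.
print(round(growth_multiple(0.20, 4), 2))  # 2.07
print(round(doubling_time(0.20), 1))       # 3.8
```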

20 Years in Open Source: Resilience, Failure, Success

The rise of Big Tech has emphasized one of the most significant truths I’ve learned: the need for digital sovereignty. Over time, I’ve observed how centralized platforms can slowly erode consumers’ control over their data and software. Today, more than ever, I believe that open source is a crucial path to regaining control, whether you’re an individual, a business, or a government. With open source software, you own your infrastructure, and you’re not subject to the whims of a vendor deciding to change prices, terms, or even direction. I’ve learned that part of being resilient in this industry means providing alternatives to centralized solutions. We built CryptPad to offer an encrypted, privacy-respecting alternative to tools like Google Docs. It hasn’t been easy, but it’s a project I believe in because it aligns with my core belief: people should control their data.

One thing I would improve is the way the community communicates the benefits of open source. The conversation all too often centers on “free vs. paid” software. In reality, what matters is the distinction between dependence and freedom. I’ve concluded that we need to do a better job of explaining how individuals can take charge of their data, privacy, and future by using open source.

20 Tech Pros On Top Trends In Software Testing

The shift toward AI-driven testing will revolutionize software quality assurance. AI can intelligently predict potential failures, adapt to changes and optimize testing processes, ensuring that products are not only reliable but also innovative. This approach allows us to focus on creating user experiences that are intuitive and delightful. ... AI-driven test automation is the trend almost every client of ours has been asking for over the past year. Combining advanced self-healing test scripts with visual testing methodologies has proven effective at improving software quality. It also shortens time to market by helping break down complex tasks. ... With many new applications relying heavily on third-party APIs or software libraries, rigorous security auditing and testing of these dependencies is crucial to prevent supply chain attacks against critical services. ... One trend that will become increasingly important is shift-left security testing. As software development accelerates, security risks are growing. Integrating security testing into the early stages of development (shifting left) enables teams to identify vulnerabilities earlier, reduce remediation costs and ensure secure coding practices, ultimately leading to more secure software releases.
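
One way to act on the supply-chain and shift-left points is to run a dependency check early in the pipeline, before code is merged. The sketch below flags pinned versions that fall inside known-vulnerable ranges. The package names and advisory data are hypothetical, and a real pipeline would pull advisories from a vulnerability database and use a full version parser rather than this numeric-only one.

```python
from typing import Dict, List, NamedTuple, Tuple

Version = Tuple[int, ...]


class Advisory(NamedTuple):
    package: str
    introduced: Version  # first affected version
    fixed: Version       # first version containing the fix


def parse_version(text: str) -> Version:
    """Parse a purely numeric dotted version such as '2.3.1'."""
    return tuple(int(part) for part in text.split("."))


def audit(pinned: Dict[str, str], advisories: List[Advisory]) -> List[str]:
    """Return a finding for every pinned dependency inside a vulnerable range."""
    findings = []
    for name, version_text in sorted(pinned.items()):
        version = parse_version(version_text)
        for adv in advisories:
            if adv.package == name and adv.introduced <= version < adv.fixed:
                fixed = ".".join(str(part) for part in adv.fixed)
                findings.append(f"{name}=={version_text} is vulnerable; upgrade to {fixed} or later")
    return findings


# Hypothetical lockfile contents and advisory feed.
pinned = {"example-http": "2.3.1", "example-yaml": "6.0.1"}
advisories = [
    Advisory("example-http", (2, 0, 0), (2, 3, 4)),
    Advisory("example-yaml", (5, 1, 0), (5, 4, 0)),
]

findings = audit(pinned, advisories)
for finding in findings:
    print(finding)

# In CI, passing this to sys.exit() fails the build while the fix is still cheap.
exit_code = 1 if findings else 0
```

Failing the build at this stage is the "shift-left" part: the vulnerable dependency is caught when upgrading it is a one-line change rather than a production incident.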

How to manage shadow IT and reduce your attack surface

To effectively mitigate the risks associated with shadow IT, your organization should adopt a comprehensive approach that encompasses the following strategies:

- Understanding the root causes: Engage with different business units to identify the pain points that drive employees to seek unauthorized solutions. Streamline your IT processes to reduce friction and make it easier for employees to accomplish their tasks within approved channels, minimizing the temptation to bypass security measures.
- Educating employees: Raise awareness across your organization about the risks associated with shadow IT and provide approved alternatives. Foster a culture of collaboration and open communication between IT and business teams, encouraging employees to seek guidance and support when selecting technology solutions.
- Establishing clear policies: Define and communicate guidelines for the appropriate use of personal devices, software, and services. Enforce consequences for policy violations to ensure compliance and accountability.
- Leveraging technology: Implement tools that enable your IT team to continuously discover and monitor all unknown and unmanaged IT assets.
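
The "leveraging technology" step boils down to comparing what is actually in use against what is approved. A minimal sketch, assuming discovery data arrives as (user, domain) pairs, for example extracted from proxy or DNS logs, and that the approved list is a set of exact domain names. Both inputs and every name below are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_unmanaged(observed: Iterable[Tuple[str, str]],
                   approved: Set[str]) -> List[Tuple[str, List[str]]]:
    """Group unapproved domains by the users seen accessing them.

    Results are sorted by number of users (most widespread first), then by
    domain name, as a simple way to prioritize follow-up with business units.
    """
    approved_lower = {domain.lower() for domain in approved}
    users_by_domain: Dict[str, Set[str]] = defaultdict(set)
    for user, domain in observed:
        domain = domain.lower()  # DNS names are case-insensitive
        if domain not in approved_lower:
            users_by_domain[domain].add(user)
    return sorted(
        ((domain, sorted(users)) for domain, users in users_by_domain.items()),
        key=lambda item: (-len(item[1]), item[0]),
    )


observed = [
    ("alice", "docs.approved-suite.example"),
    ("bob", "files.quickshare.example"),
    ("carol", "Files.QuickShare.example"),
    ("dave", "notes.todo-app.example"),
]
approved = {"docs.approved-suite.example"}

for domain, users in find_unmanaged(observed, approved):
    print(domain, users)
```

The sort order ties back to the first strategy: a tool used by many people signals a pain point worth understanding, not just a violation to block.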

How software teams should prepare for the digital twin and AI revolution

By integrating AI to enhance real-time analytics, users can develop a more nuanced understanding of emerging issues, improving situational awareness and allowing them to make better decisions. Using in-memory computing technology, digital twins produce real-time analytics results that users aggregate and query to continuously visualize the dynamics of a complex system and look for emerging issues that need attention. In the near future, generative AI-driven tools will magnify these capabilities by automatically generating queries, detecting anomalies, and then alerting users as needed. AI will create sophisticated data visualizations on dashboards that point to emerging issues, giving managers even better situational awareness and responsiveness. ... Digital twins can use ML techniques to monitor thousands of entry points and internal servers to detect unusual logins, access attempts, and processes. However, integrating this information to detect patterns and build an overall threat assessment may require data aggregation and queries that tie together the elements of a kill chain. Generative AI can help by using these tools to detect unusual behaviors and alerting the personnel who can carry the investigation forward.
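
The kill-chain point can be sketched as two layers: per-server twins that each flag unusual spikes in failed logins, and an aggregation query that ties those flags together across servers. This is a deliberately simple statistical stand-in (a z-score over recent history), not the ML or generative AI techniques the article describes, and the `ServerTwin` class, thresholds and account names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import mean, pstdev
from typing import Dict, List


@dataclass
class ServerTwin:
    """Hypothetical per-server twin tracking failed logins per interval."""
    name: str
    threshold: float = 3.0  # z-score above which an interval is flagged
    min_history: int = 5    # intervals needed before flagging starts
    history: List[int] = field(default_factory=list)

    def observe(self, failed_by_account: Dict[str, int]) -> List[str]:
        """Record one interval; return the accounts involved if it is a spike."""
        total = sum(failed_by_account.values())
        flagged: List[str] = []
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history) or 1.0  # avoid dividing by zero
            if (total - mu) / sigma > self.threshold:
                flagged = sorted(failed_by_account)
        self.history.append(total)
        return flagged


def correlate(alerts: Dict[str, List[str]], min_servers: int = 2) -> List[str]:
    """Aggregation query: accounts flagged on at least min_servers distinct servers."""
    servers_by_account = defaultdict(set)
    for server, accounts in alerts.items():
        for account in accounts:
            servers_by_account[account].add(server)
    return sorted(a for a, servers in servers_by_account.items() if len(servers) >= min_servers)


twins = {name: ServerTwin(name) for name in ("web-01", "db-01", "mail-01")}
baseline = [{"alice": 1}, {"bob": 2}, {"alice": 1}, {"carol": 1}, {"bob": 2}]
for twin in twins.values():
    for interval in baseline:
        twin.observe(interval)

latest = {
    "web-01": {"svc-backup": 40},
    "db-01": {"svc-backup": 25, "dave": 1},
    "mail-01": {"erin": 1},
}
alerts = {name: twins[name].observe(events) for name, events in latest.items()}
print(alerts)
print(correlate(alerts))  # ['svc-backup']
```

Each twin on its own only sees "a spike on this server"; it is the cross-server query that surfaces one account failing logins on several machines, the pattern a human investigator would then pick up.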

The Open Source Software Balancing Act: How to Maximize the Benefits And Minimize the Risks

OSS has democratized access to cutting-edge technologies, fostered a culture of collaboration and empowered businesses to prioritize innovation. By tapping into the vast pool of available open source components, software developers can accelerate product development, minimize time-to-market and drive innovation at scale. ... Paying down technical debt requires two things: consistency and prioritization. First, organizations should opt for fewer, high-quality suppliers with well-maintained open source projects, because these offer greater reliability and stability and reduce the likelihood of introducing bugs or issues into their own codebase that rack up tech debt. In terms of transparency, organizations must have complete visibility into their software infrastructure. This is another area where SBOMs are key. With an SBOM, developers have full visibility into every element of their software, which reduces the risk of using outdated or vulnerable components that contribute to technical debt. There’s no question that open source software offers unparalleled opportunities for innovation, collaboration and growth within the software development ecosystem.
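
To show what SBOM-driven visibility can look like in practice, here is a minimal sketch that reads a CycloneDX-style JSON SBOM (top-level `bomFormat`, `specVersion` and a `components` array whose entries carry `name` and `version`) and lists components that lag behind a newer release. The component names and the `latest_releases` map are hypothetical; in practice that data would come from a package registry or a software composition analysis tool.

```python
import json
from typing import Dict, List, Tuple

SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "example-parser", "version": "1.2.0"},
    {"type": "library", "name": "example-logger", "version": "3.0.0"},
    {"type": "library", "name": "example-crypto", "version": "0.9.8"}
  ]
}
"""


def parse_version(text: str) -> Tuple[int, ...]:
    """Parse a purely numeric dotted version such as '1.2.0'."""
    return tuple(int(part) for part in text.split("."))


def stale_components(sbom: dict, latest_releases: Dict[str, str]) -> List[str]:
    """List components whose version is older than the latest known release."""
    stale = []
    for component in sbom.get("components", []):
        name, version = component["name"], component["version"]
        newest = latest_releases.get(name)
        if newest is None:
            continue  # not in our release data; a real tool should report these for review
        if parse_version(version) < parse_version(newest):
            stale.append(f"{name} {version} -> {newest}")
    return stale


sbom = json.loads(SBOM_JSON)
latest_releases = {"example-parser": "1.4.1", "example-logger": "3.0.0"}
print(stale_components(sbom, latest_releases))  # ['example-parser 1.2.0 -> 1.4.1']
```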

Is AI really going to burn the planet?

Working out exactly how energy-intensive it is to train AI models is even more complex than understanding exactly how big data centers’ greenhouse gas (GHG) sins are. A common “AI is environmentally bad” statistic is that training a large language model like GPT-3 is estimated to use just under 1,300 megawatt-hours (MWh) of electricity, about as much power as 130 US homes consume annually, or the equivalent of watching 1.63 million hours of Netflix. The source for this stat is AI company Hugging Face, which does seem to have used some real science to arrive at these numbers. It also, to quote a May Hugging Face probe into all this, seems to have shown that “multi-purpose, generative architectures are orders of magnitude more [energy] expensive than task-specific systems for a variety of tasks, even when controlling for the number of model parameters.” It’s important to note that what’s being compared here are task-specific AI runs (optimized, smaller models trained for specific generative AI tasks) and multi-purpose models (machine learning models that should be able to process information from different modalities, including images, videos, and text).
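
The comparisons in that statistic can be sanity-checked with simple division. The sketch below only derives the implied rates from the quoted numbers (about 10 MWh per home per year and roughly 0.8 kWh per streamed hour); it does not validate Hugging Face's methodology, and because the training figure is "just under" 1,300 MWh, the results are approximate.

```python
TRAINING_MWH = 1_300                 # "just under" this, per the quoted estimate
EQUIVALENT_HOMES = 130               # US homes' annual consumption
EQUIVALENT_NETFLIX_HOURS = 1_630_000

mwh_per_home_year = TRAINING_MWH / EQUIVALENT_HOMES
kwh_per_netflix_hour = TRAINING_MWH * 1_000 / EQUIVALENT_NETFLIX_HOURS  # 1 MWh = 1,000 kWh

print(f"{mwh_per_home_year:.1f} MWh per home per year")        # 10.0
print(f"{kwh_per_netflix_hour:.2f} kWh per hour of streaming")  # 0.80
```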

Quote for the day:

"Leadership is particularly necessary to ensure ready acceptance of the unfamiliar and that which is contrary to tradition." -- Cyril Falls
