How to Build a Successful AI Strategy for Your Business in 2024

With a solid understanding of AI technology and your organization’s priorities, the next step is to define clear objectives and goals for your AI strategy. Focus on identifying the problems that AI can solve most effectively within your organization. These objectives should be specific, measurable, achievable, relevant, and time-bound (SMART). ... By setting well-defined objectives, you can create a targeted AI strategy that delivers tangible results and aligns with your overall business priorities.

An AI implementation strategy often requires specialized expertise and tools that may not be available in-house. To bridge this gap, identify potential partners and vendors who can provide the necessary support for your AI strategy. Start by researching AI and machine learning companies that have a proven track record of working in your industry. When evaluating potential partners, consider factors such as their technical capabilities, the quality of their tools and platforms, and their ability to scale as your AI needs grow. Look for vendors who offer comprehensive solutions that cover the entire AI lifecycle, from data preparation and model development to deployment and monitoring.

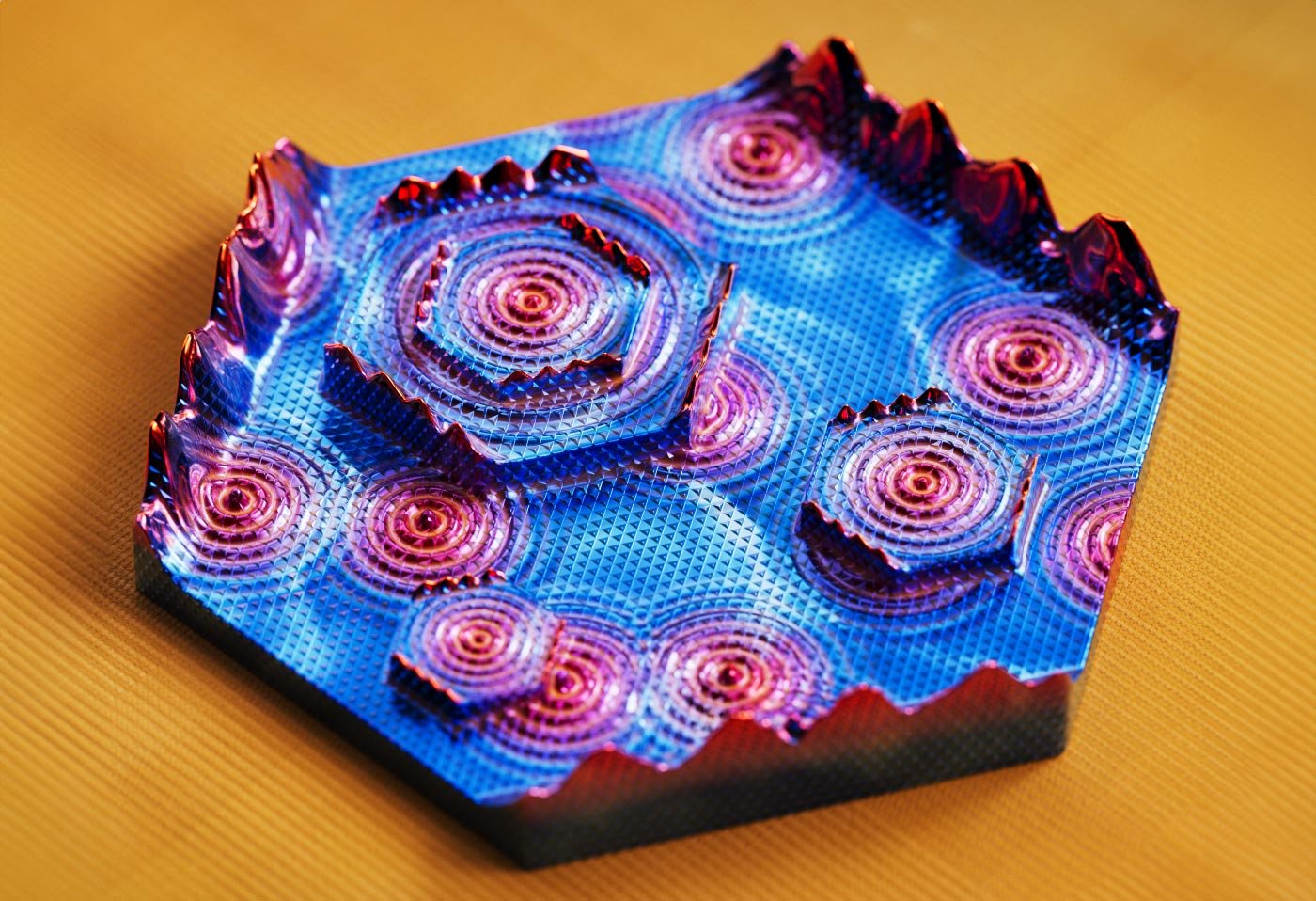

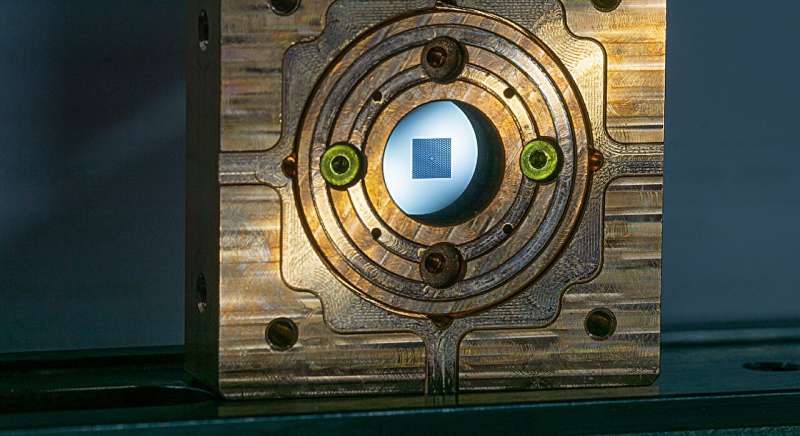

Internet can achieve quantum speed with light saved as sound

When transferring information between two quantum computers over a distance, or among many in a quantum internet, the signal will quickly be drowned out by noise. The amount of noise in a fiber-optic cable increases exponentially with the length of the cable. Eventually, data can no longer be decoded. The classical Internet and other major computer networks solve this noise problem by amplifying signals in small stations along transmission routes. But for quantum computers to apply an analogous method, they must first translate the data into ordinary binary number systems, such as those used by an ordinary computer. This won't do. Doing so would slow the network and make it vulnerable to cyberattacks, as the odds of classical data protection being effective in a quantum computer future are very bad. "Instead, we hope that the quantum drum will be able to assume this task. It has shown great promise as it is incredibly well-suited for receiving and resending signals from a quantum computer. So, the goal is to extend the connection between quantum computers through stations where quantum drums receive and retransmit signals, and in so doing, avoid noise while keeping data in a quantum state," says Kristensen.

Better application networking and security with CAKES

A major challenge in enterprises today is keeping up with the networking needs of modern architectures while also keeping existing technology investments running smoothly. Large organizations have multiple IT teams responsible for these needs, but at times, the information sharing and communication between these teams is less than ideal. Those responsible for connectivity, security, and compliance typically live across networking operations, information security, platform/cloud infrastructure, and/or API management. These teams often make decisions in silos, which causes duplication and integration friction with other parts of the organization. Oftentimes, “integration” between these teams is through ticketing systems. ... Technology alone won’t solve some of the organizational challenges discussed above. More recently, the practices that have formed around platform engineering appear to give us a path forward. Organizations that invest in platform engineering teams to automate and abstract away the complexity around networking, security, and compliance enable their application teams to go faster.

AI set to enhance cybersecurity roles, not replace them

Ready or not, though, AI is coming. That being the case, I’d caution companies, regardless of where they are on their AI journey, to understand that they will encounter challenges, whether from integrating this technology into current processes or ensuring that staff are properly trained in using this revolutionary technology, and that’s to be expected. As a cloud security community, we will all be learning together how we can best use this technology to further cybersecurity. ... First, companies need to treat AI with the same consideration as they would a person in a given position, emphasizing best practices. They will also need to determine the AI’s function: if it merely supplies supporting data in customer chats, then the risk is minimal. But if it integrates and performs operations with access to internal and customer data, it’s imperative that they prioritize strict access control and separate roles. ... We’ve been talking about a skills gap in the security industry for years now and AI will deepen that in the immediate future. We’re at the beginning stages of learning, and understandably, training hasn’t caught up yet.
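The access-control point above can be illustrated with a minimal sketch, assuming a simple role-to-permission table. The role names, permission strings, and the `is_allowed` helper are all hypothetical and invented for this example; they are not taken from the article or from any particular product.

```python
from dataclasses import dataclass

# Hypothetical roles and permissions, invented for illustration only.
ROLE_PERMISSIONS = {
    "chat_support_bot": frozenset({"read:public_docs"}),
    "operations_agent": frozenset({"read:public_docs", "read:customer_data"}),
}


@dataclass(frozen=True)
class Agent:
    """An AI agent treated like a staff member: it has a name and one role."""
    name: str
    role: str


def is_allowed(agent: Agent, permission: str) -> bool:
    """Return True only if the agent's role explicitly grants the permission."""
    # Unknown roles map to an empty permission set, so access is denied by default.
    return permission in ROLE_PERMISSIONS.get(agent.role, frozenset())


chat_bot = Agent(name="faq-helper", role="chat_support_bot")
ops_agent = Agent(name="ticket-resolver", role="operations_agent")

print(is_allowed(chat_bot, "read:customer_data"))   # chat-only role: denied
print(is_allowed(ops_agent, "read:customer_data"))  # operations role: granted
```

Keeping the chat-only role and the operations role separate means a low-risk assistant cannot quietly gain access to customer data; any new permission has to be granted to a role explicitly.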

Why employee recognition doesn't work: The dark side of boosting team morale

Despite the importance of appreciation, many workplaces prioritise performance-based recognition, inadvertently overlooking the profound impact of genuine appreciation. This preference for recognition over appreciation can lead to detrimental outcomes, including conditionality and scarcity. Conditionality in recognition arises from its link to past achievements and performance outcomes. Employees often feel pressured to outperform their peers and surpass their past accomplishments to receive recognition, fostering a hypercompetitive work environment that undermines collaboration and teamwork. Furthermore, the scarcity of recognition exacerbates this issue, as tangible rewards such as bonuses or promotions are limited. In this competitive landscape, employees may feel undervalued, leading to disengagement and disillusionment. To foster an inclusive and supportive workplace culture, organisations must recognise the intrinsic value of appreciation alongside performance-based recognition. Embracing appreciation cultivates a culture of gratitude, empathy, and mutual respect, strengthening interpersonal connections and boosting employee morale.

Improving decision-making in LLMs: Two contemporary approaches

Training LLMs in context-appropriate decision-making demands a delicate touch. Currently, two sophisticated approaches posited by contemporary academic machine learning research suggest alternate ways of enhancing the decision-making process of LLMs to parallel that of humans. The first, AutoGPT, uses a self-reflexive mechanism to plan and validate the output; the second, Tree of Thoughts (ToT), encourages effective decision-making by disrupting traditional, sequential reasoning. AutoGPT represents a cutting-edge approach in AI development, designed to autonomously create, assess and enhance its models to achieve specific objectives. Academics have since improved the AutoGPT system by incorporating an “additional opinions” strategy involving the integration of expert models. This presents a novel integration framework that harnesses expert models, such as analyses from different financial models, and presents them to the LLM during the decision-making process. In a nutshell, the strategy revolves around increasing the model’s information base using relevant information.
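As a rough illustration of the “additional opinions” idea, the sketch below assembles a prompt that places expert-model outputs in front of the question before it is sent to an LLM. This is only an assumption about how such a prompt might be put together, not the method from the research the excerpt refers to; the `build_prompt` function, the model names, and the opinion texts are hypothetical, and no LLM is actually called.

```python
def build_prompt(question: str, expert_opinions: dict[str, str]) -> str:
    """Combine a question with expert-model outputs into one prompt string."""
    lines = ["Additional opinions from expert models:"]
    # One bullet per expert model, in the order the opinions were supplied.
    for source, opinion in expert_opinions.items():
        lines.append(f"- {source}: {opinion}")
    lines.append("")
    lines.append(f"Question: {question}")
    lines.append("Weigh the opinions above, then give a decision with a short rationale.")
    return "\n".join(lines)


# Hypothetical expert outputs, standing in for analyses from financial models.
opinions = {
    "model_a": "projects a modest rise next quarter",
    "model_b": "flags elevated downside risk",
}

prompt = build_prompt("Should the position be increased?", opinions)
print(prompt)
```

The point of the design is that the LLM's information base grows without retraining: the expert outputs travel with the question, and the model is asked to weigh them explicitly.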

Unpacking the Executive Order on Data Privacy: A Deeper Dive for Industry Professionals

For privacy professionals, the order underscores the ongoing challenge of protecting sensitive information against increasingly sophisticated threats. That’s important, and shouldn’t be overlooked. Yet the White House has admitted that this order isn’t a silver bullet for all the nation’s data privacy challenges. That candor is striking. It echoes a sentiment familiar to many of us in the industry: the complexities of protecting personal information in the digital age cannot be fully addressed through singular measures against external threats. Instead, this task requires a long-term, thoughtful, multi-faceted approach – one that also confronts the internal challenges to data privacy posed by Big Tech, domestic data brokers, and foreign governments that exist outside of the designated “countries of concern” category. ... The extensive collection, usage, and sale of personal data by domestic entities (including but not limited to Big Tech companies, data brokers, and third-party vendors) poses significant risks. These practices often lack transparency and accountability, fueling privacy breaches and identity theft and eroding public trust and individual autonomy.

10 tips to keep IP safe

CSOs who have been protecting IP for years recommend doing a risk and cost-benefit analysis. Make a map of your company’s assets and determine what information, if lost, would hurt your company the most. Then consider which of those assets are most at risk of being stolen. Putting those two factors together should help you figure out where to best spend your protective efforts (and money). If information is confidential to your company, put a banner or label on it that says so. If your company data is proprietary, put a note to that effect on every log-in screen. This seems trivial, but if you wind up in court trying to prove someone took information they weren’t authorized to take, your argument won’t stand up if you can’t demonstrate that you made it clear that the information was protected. ... Awareness training can be effective for plugging and preventing IP leaks, but only if it’s targeted to the information that a specific group of employees needs to guard. When you talk in specific terms about something that engineers or scientists have invested a lot of time in, they’re very attentive. As is often the case, humans are the weakest link in the defensive chain.
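The risk and cost-benefit step described above (combine how much a loss would hurt with how likely a theft is) can be sketched as a simple ranking. Multiplying the two factors on a 1 to 5 scale is one common simplification and an assumption here, not something the article prescribes; the asset names and scores are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One entry in a hypothetical map of company assets."""
    name: str
    impact: int      # 1-5: how badly the company is hurt if the asset is lost
    likelihood: int  # 1-5: how likely the asset is to be stolen

    @property
    def risk_score(self) -> int:
        # Assumed scoring rule: impact multiplied by likelihood.
        return self.impact * self.likelihood


def rank_assets(assets: list[Asset]) -> list[Asset]:
    """Return the assets ordered from highest to lowest risk score."""
    return sorted(assets, key=lambda asset: asset.risk_score, reverse=True)


# Made-up example data.
assets = [
    Asset("marketing templates", impact=1, likelihood=2),
    Asset("customer list", impact=3, likelihood=4),
    Asset("product source code", impact=5, likelihood=3),
]

for asset in rank_assets(assets):
    print(f"{asset.name}: {asset.risk_score}")
```

The assets at the top of the ranking are the candidates for the largest share of protective effort and budget.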

Types of Data Integrity

Here are a few data integrity issues and risks many organizations face:

Compromised hardware: Power outages, fire sprinklers, or a clumsy person knocking a computer to the floor are examples of situations that can cause the loss of vital data or its corruption. Security considers compromised hardware to be hardware that has been hacked.

Cyber threats: Cyber security attacks – phishing attacks, malware – present a serious threat to data integrity. Malicious software can corrupt or alter critical data within a database. Additionally, hackers gaining unauthorized access can manipulate or delete data. If changes are made as a result of unauthorized access, it may be a failure in data security. ...

Human error: A significant source of data integrity problems is human error. Mistakes that are made during manual entries can produce inaccurate or inconsistent data that then gets stored in the database.

Data transfer errors: During the transfer of data, data integrity can be compromised. Transfer errors can damage data integrity, especially when moving massive amounts of data during extract, transform, and load processes, or when moving the organization’s data to a different database system.
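One common way to catch the data transfer errors described above is to compare a checksum of the data before and after the move. The sketch below uses SHA-256 from Python's standard `hashlib` module; the helper names and the sample payload are assumptions made for illustration, and a real pipeline would typically hash files or batches rather than a small in-memory value.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


def transfer_is_intact(source: bytes, received: bytes) -> bool:
    """Return True when the received bytes hash to the same digest as the source."""
    return sha256_hex(source) == sha256_hex(received)


# Made-up payload standing in for a batch moved during an ETL job.
original = b"id,amount\n1,100.00\n2,250.50\n"
good_copy = bytes(original)
corrupted = original.replace(b"250.50", b"250.05")  # simulate a transfer error

print(transfer_is_intact(original, good_copy))   # digests match
print(transfer_is_intact(original, corrupted))   # digests differ
```

A matching digest only shows that the bytes arrived unchanged; it does not catch data that was already wrong at the source, such as the manual entry mistakes mentioned under human error.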

Sisense Breach Highlights Rise in Major Supply Chain Attacks

Many of the details of the attack are not yet clear, but the breach may have exposed hundreds of Sisense's prominent customers to a supply chain attack that gave hackers a backdoor into the company's customer networks, a CISA official told Information Security Media Group. Experts said the attack suggests trusted companies are still failing to implement proactive defensive measures to spot supply chain attacks - such as robust access controls, real-time threat intelligence and regular security assessments - at a time when organizations are increasingly reliant on interconnected ecosystems. "These types of software supply chain attacks are only possible through compromised developer credentials and account information from an employee or contractor," said Jim Routh, chief trust officer for the software security company Saviynt. The breach highlights the need for enterprises to improve their identity access management capabilities for cloud-based services and other third parties, he said. Security intelligence platform Censys published insights into the Sisense breach Friday.

Quote for the day:

"Success is the progressive realization of predetermined, worthwhile, personal goals." -- Paul J. Meyer