Generative AI is even more of a mixed bag when it comes to writing secure code. Many hope that, by ingesting best coding practices from public code repositories (possibly augmented by a company’s own policies and frameworks), AI-generated code will be more secure from the very start and avoid the common mistakes that human developers make. ... Generative AI has the potential to help DevSecOps teams find vulnerabilities and security issues that traditional testing tools miss, explain the problems, and suggest fixes. It can also help generate test cases. Some security flaws are still too nuanced for these tools to catch, says Carnegie Mellon’s Moseley. “For those challenging things, you’ll still need people to look for them, you’ll need experts to find them.” However, generative AI can pick up standard errors. ... A bigger question for enterprises will be how far to automate the generative AI functionality, and how much to keep humans in the loop. For example, if the AI is used to detect code vulnerabilities early in the process, “to what extent do I allow code to be automatically corrected by the tool?” Taglienti asks.
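Taglienti's question can be made concrete with a policy like the one in this Rust sketch. It shows one possible answer. The scanning tool may apply its own fix only when three conditions hold: the finding has a suggested fix, its severity is at or below a level the team chooses, and the tool's reported confidence clears a threshold. Everything else is routed to a human reviewer. The types (`Severity`, `Finding`, `Decision`, `AutoFixPolicy`) and the thresholds are hypothetical illustrations. They are not the API of any real product.

```rust
/// Hypothetical severity scale for findings from an AI code-scanning tool.
/// Deriving Ord orders variants by declaration: Low < Medium < High < Critical.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum Severity {
    Low,
    Medium,
    High,
    Critical,
}

/// A vulnerability finding plus the tool's self-reported confidence in its fix.
/// Field names are illustrative assumptions, not a real tool's schema.
#[derive(Debug, Clone)]
pub struct Finding {
    pub rule: String,
    pub severity: Severity,
    pub fix_confidence: f64, // 0.0..=1.0, as reported by the (hypothetical) tool
    pub has_suggested_fix: bool,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Decision {
    /// Apply the tool's fix automatically (it should still be logged for audit).
    AutoApply,
    /// Send the finding and any suggested fix to a human reviewer.
    HumanReview,
}

/// Team-chosen policy: how much correction to delegate to the tool.
pub struct AutoFixPolicy {
    /// Highest severity the tool may fix without a human in the loop.
    pub max_auto_severity: Severity,
    /// Minimum fix confidence required for automatic application.
    pub min_confidence: f64,
}

impl AutoFixPolicy {
    /// Auto-apply only when every condition holds; otherwise default to a human.
    pub fn decide(&self, f: &Finding) -> Decision {
        if f.has_suggested_fix
            && f.severity <= self.max_auto_severity
            && f.fix_confidence >= self.min_confidence
        {
            Decision::AutoApply
        } else {
            Decision::HumanReview
        }
    }
}
```

With `max_auto_severity: Severity::Medium` and `min_confidence: 0.9`, the policy treats findings as follows. A low-severity finding with a 0.95-confidence fix is applied automatically. A critical finding always goes to review, however confident the tool is. Defaulting to `HumanReview` keeps the failure mode conservative.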

White House Advisory Team Backs Cybersecurity Tax Incentives

Technology trade groups and cybersecurity experts have long called for financial incentives to help drive the implementation of new cybersecurity standards, but proposals differ on how best to encourage industries to prioritize cybersecurity investments. A white paper published in 2011 by the U.S. Chamber of Commerce, the Center for Democracy and Technology and other industry groups urged the federal government to focus on cybersecurity incentives over mandates, warning that "a more government-centric set of mandates would be counterproductive to both our economic and national security." In April 2023, the Federal Energy Regulatory Commission approved a rule allowing utility companies to include cybersecurity spending in their calculations for setting rates. FERC acting Chairman Willie Phillips said at the time that financial incentives must accompany federal mandates "to encourage utilities to proactively make additional cybersecurity investments in their systems." While the FERC rule allows utilities to recover cybersecurity expenses through customer rates, the model proposed by the National Security Telecommunications Advisory Committee (NSTAC) suggests providing tax incentives upfront so critical infrastructure operators pay less when they spend money on enhanced cybersecurity standards.

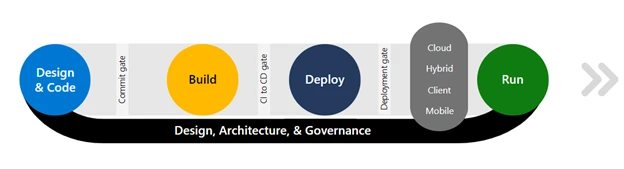

Continuous Delivery: Gold Standard for Software Development

In the context of CD, developers must be able to easily and quickly understand why a product or update has failed. Given that between 50% and 80% of software updates fail, developers need to be able to rapidly identify the exact point of failure and resolve it. This reduction in incident resolution time (or bug fixing) is one of the significant benefits of developers consistently working toward the metric of releasability: when problems arise, they are easy to fix and recovery cycles are quick. To meet ever-shorter development timelines, developers need to find ways to reduce the time they spend on incident response and troubleshooting. To help with this, they need access to real-time insights that allow them to identify, diagnose and resolve incidents as they arise. These insights can give developers an instant, digestible understanding of how changes affect their software development pipelines, even when those changes are not significant enough to cause an incident. These “change events” offer a trail of breadcrumbs through every change made to a product throughout its development cycle, allowing developers to see the direct effects of each update.
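A trail of change events can help pinpoint the point of failure. As a rough illustration, this hypothetical Rust sketch records each change alongside whether the pipeline was healthy after it landed. It then reports the changes that flipped the pipeline from healthy to failing. The `ChangeEvent` fields and the `breaking_changes` function are assumptions for illustration. Real CD and observability tools use their own event schemas.

```rust
/// One "change event": a single change to the product and the pipeline
/// health observed right after it was applied. Field names are hypothetical.
#[derive(Debug, Clone, PartialEq)]
pub struct ChangeEvent {
    pub id: String,          // e.g. a commit hash or deploy ID
    pub description: String, // what changed
    pub healthy_after: bool, // did build, tests and health checks pass afterwards?
}

/// Walks the ordered trail of change events and returns those that moved the
/// pipeline from healthy to unhealthy: the likely points of failure.
pub fn breaking_changes(trail: &[ChangeEvent]) -> Vec<&ChangeEvent> {
    // Assume the trail starts from a healthy baseline.
    let mut previously_healthy = true;
    let mut culprits = Vec::new();
    for event in trail {
        if previously_healthy && !event.healthy_after {
            culprits.push(event);
        }
        previously_healthy = event.healthy_after;
    }
    culprits
}
```

Consider a trail `a1` (healthy), `b2` (failing), `c3` (failing), `d4` (healthy), `e5` (failing). Here `breaking_changes` returns `b2` and `e5`. It skips `c3` because the pipeline was already broken when that change landed.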

Transitioning to memory-safe languages: Challenges and considerations

We encourage the community to consider writing in Rust when starting new projects. We also recommend Rust for critical code paths, such as areas typically abused or compromised or those holding the “crown jewels.” Great places to start are authentication, authorization, cryptography, and anything that takes input from a network or user. While adopting memory safety will not fix everything in security overnight, it’s an essential first step. Even the best programmers make memory safety errors when using languages that aren’t inherently memory-safe. By using memory-safe languages, programmers can focus on producing higher-quality code rather than perilously contending with low-level memory management. However, we must recognize that it’s impossible to rewrite everything overnight. OpenSSF has created a C/C++ Hardening Guide to help programmers make legacy code safer without significantly impacting their existing codebases. Depending on your risk tolerance, this is a less risky path in the short term. Once your rewrite or rebuild is complete, it’s also essential to consider deployment.
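Code that takes input from a network is a good place to start. This sketch illustrates why by parsing a hypothetical length-prefixed frame (a 2-byte big-endian length followed by the payload) in safe Rust. In C, trusting the declared length and copying that many bytes is a classic buffer over-read. In Rust, slice access via `get` returns `None` when the range is out of bounds. A lying or truncated input therefore becomes an error value rather than a memory-safety bug. The wire format and the `parse_frame`/`FrameError` names are assumptions for illustration.

```rust
/// Errors returned instead of reading past the end of the buffer.
#[derive(Debug, PartialEq, Eq)]
pub enum FrameError {
    MissingHeader,
    Truncated { declared: usize, available: usize },
}

/// Parses a frame laid out as a 2-byte big-endian length followed by that
/// many payload bytes (a hypothetical wire format) and returns the payload.
pub fn parse_frame(input: &[u8]) -> Result<&[u8], FrameError> {
    // `get` yields None instead of reading out of bounds.
    let header = input.get(..2).ok_or(FrameError::MissingHeader)?;
    let declared = u16::from_be_bytes([header[0], header[1]]) as usize;
    let body = &input[2..]; // safe: input has at least 2 bytes here
    // The declared length is untrusted; check it against what actually arrived.
    body.get(..declared).ok_or(FrameError::Truncated {
        declared,
        available: body.len(),
    })
}
```

For example, `parse_frame(&[0, 3, b'a', b'b', b'c'])` returns the payload `abc`. By contrast, `parse_frame(&[0, 10, 1, 2])` returns `Err(FrameError::Truncated { declared: 10, available: 2 })` rather than reading eight bytes of adjacent memory.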

Personalised learning for Gen Z: How customised content is reshaping education

As no two students possess the same skills, learning gaps and future goals, a range of personalised learning methods is necessary. This includes adaptive and blended learning, together with student-directed and project-based learning. These approaches help students absorb lessons more quickly and effectively and retain them longer. Conversely, traditional learning is based on physical classroom learning and standard curricula. It’s also time-consuming and cumbersome, with a one-size-fits-all approach that overlooks individual needs. Given the numerous mandatory textbooks and reading materials, it’s expensive compared with more cost-effective e-learning modules. Additionally, technology facilitates the delivery of customised content via short videos and other bite-sized formats better suited to tech-savvy Gen Zs. Accustomed to instant access to information for shopping, travel and more, Gen Zs hold the same expectations for learning. As a result, they like consuming information via videos, podcasts or personalised learning modules that can be accessed later.

Agile Architecture, Lean Architecture, or Both?

Creating an architecture for a software product requires solving a variety of complex problems; each product faces unique challenges that its architecture must overcome through a series of trade-offs. We have described this decision process in other articles, in which we introduced the concept of the Minimum Viable Architecture (MVA) as a reflection of these trade-off decisions. The MVA is the architectural complement to a Minimum Viable Product (MVP). The MVA balances the MVP by making sure that the MVP is technically viable, sustainable, and extensible over time; it is what differentiates the MVP from a throw-away proof of concept. Lean approaches view the core problem of software development as improving the flow of work, but from an architectural perspective, the core problem is creating an MVP and an MVA that are both minimal and viable. One key aspect of an MVA is that it is developed incrementally over a series of releases of a product. The development team uses the empirical data from these releases to confirm or reject hypotheses that they form about the suitability of the MVA.

How generative AI will change low-code development

“Skill sets will evolve to encompass a blend of traditional coding expertise, along with proficiency in utilizing low/no-code platforms, understanding how to integrate AI technologies, and effectively collaborating in teams using these tools,” says Ed Macosky, chief product and technology officer at Boomi. “The combination of low code alongside copilots will allow developers to enhance their skills and focus on supporting business outcomes, rather than spending the bulk of their time learning different coding languages.” Armon Petrossian, CEO and co-founder of Coalesce, adds, “There will be a greater emphasis on analytical thinking, problem-solving, and design thinking with less of a burden on the technical barrier of solving these types of issues.” Today, code generators can produce code suggestions, single lines of code, and small modules. Developers must still evaluate the code generated to adjust interfaces, understand boundary conditions, and evaluate security risks. But what might software development look like as prompting, code generation, and AI assistants in low-code improve? “As programming interfaces become conversational, there’s a convergence between low-code platforms and copilot-type tools,” says Srikumar Ramanathan, chief solutions officer at Mphasis.

Is It Too Late for My Organization to Leverage AI?

The short answer is no, but a pragmatic approach to adopting AI is becoming increasingly valuable. ... The key to efficient AI implementation is caution and planning. Leaders must assess their enterprise’s organizational, operational, and business challenges and use those findings to guide an intelligent AI strategy. Organizationally, successful AI implementation requires interdepartmental collaboration and training. Stakeholders, including leaders and the daily drivers of productivity, should understand the benefits of AI implementation. Otherwise, employee anxieties or misinformation might impede progress. Operational challenges to AI deployment include inefficient manual processes and a lack of standardization. Remember, AI is not a silver bullet for resolving existing tech inefficiencies. Before implementation, leaders must assess their tech stack, ensuring that all relevant software systems can communicate with one another. From a business perspective, unclear AI use cases are a recipe for disaster. AI and machine learning (ML) investments should have specific KPIs. Furthermore, all investments should take a phased approach that prioritizes a solid data foundation before deployment.

Has the CIO title run its course?

“It’s time for the rest of organizations to recognize there is not a single CIO role anymore but layers of CIOs,” he says. The chief of technology needs to be a digital leader “and that’s why the name is so important.” While acknowledging that every company is different, Wenhold says if he were on the outside looking in at a senior executive meeting, “the person sitting there with the CBTO title isn’t talking about keeping the lights on, and the internet connection up, and what technologies we’re using. They’re talking about how is the business absorbing the latest deployment into production.” The person responsible for keeping the lights on should be a director, he adds, and “I don’t see that role at the table.” Although technology’s role has been widely elevated in most companies across all industries, Wenhold believes it will take some time for other organizations to understand what the CBTO role can and should be. “I still believe we have a lot of work to do in the industry. The CIO name is more important to your peers than to the person holding the title,” he maintains. Sule agrees, saying that the CBTO title is effective because it helps to “blur the lines” between technology and business and instills a sense that everyone in Sule’s department is there to serve the business.

Japan Blames North Korea for PyPI Supply Chain Cyberattack

"This attack isn't something that would affect only developers in Japan and nearby regions," Gardner points out. "It's something for which developers everywhere should be on guard." Other experts say non-native English speakers could be more at risk from this latest attack by the Lazarus Group. The attack "may disproportionately impact developers in Asia," due to language barriers and less access to security information, says Taimur Ijlal, a tech expert and information security leader at Netify. "Development teams with limited resources may understandably have less bandwidth for rigorous code reviews and audits," Ijlal says. Jed Macosko, a research director at Academic Influence, says app development communities in East Asia "tend to be more tightly integrated than in other parts of the world due to shared technologies, platforms, and linguistic commonalities." He says attackers may be looking to take advantage of those regional connections and "trusted relationships." Small and startup software firms in Asia typically have more limited security budgets than do their counterparts in the West, Macosko notes.

Quote for the day:

"After growing wildly for years, the field of computing appears to be reaching its infancy." -- John Pierce
