2024 cybersecurity forecast: Regulation, consolidation and mothballing SIEMs

CISOs’ jobs are getting harder. Many are grappling with an onslaught of

security threats, and now the legal and regulatory stakes are higher. The new

SEC cybersecurity disclosure requirements have many CISOs concerned they’ll be

left with the liability when an attack occurs. ... After the Cyber Resilience

Act, policymakers and developers drive adoption of security-by-design. The CRA

wisely avoided breaking the open source software ecosystem, but now the hard

work starts: helping manufacturers adopt modern software development practices

that will enable them to ship secure products and comply with the CRA, and

driving public investment in open source software security to efficiently

raise all boats. ... With the increase in digital business-as-usual,

cybersecurity practitioners are already feeling lost in a deluge of inaccurate

information from a mushrooming number of cybersecurity solutions coupled with a

lack of cybersecurity architecture and design practices, resulting in porous

cyber defenses.

Expert Insight: Adam Seamons on Zero-Trust Architecture

Zero trust goes beyond restricting access by need to know and the principle of

least privilege. It’s about properly verifying access and being 110% certain

that the access is legitimate. That means things like limiting access to

specific criteria, such as by port or protocol, time period, IP address and/or

physical location. ... A zero-trust network is about verification or

double-checking. You want to be verifying not just the person, but also the

device and limiting that access to specific permissions and rights that have

been approved in advance. And you’re also restricting data access,

particularly in situations like the example I just gave. Think of it like the

difference between a key to the front door that gives you access to the whole

house, and needing a key for the front door as well as separate keys for all

the different rooms. ... AI and machine learning have both been used in

detecting anomalies and suspicious patterns for some time, and will only

continue to be used more. I expect SOCs to become increasingly reliant on AI.

Getting more specific, log analysis is a key area for AI to automate.
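
The access criteria Seamons lists above (verifying both person and device, then limiting access by port, time period and IP address) can be sketched as a simple policy check. This is only an illustration under stated assumptions: the names `AccessRequest`, `AccessPolicy` and `is_allowed`, and the example network, port and hours, are hypothetical and do not come from the interview or from any particular zero-trust product.

```python
from dataclasses import dataclass
from datetime import time
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class AccessRequest:
    user_verified: bool    # assumption: the person passed identity checks
    device_verified: bool  # assumption: the device is known and approved
    source_ip: str
    port: int
    at: time               # time of day of the request


@dataclass(frozen=True)
class AccessPolicy:
    allowed_network: str   # CIDR range approved in advance (hypothetical)
    allowed_ports: frozenset
    window_start: time
    window_end: time


def is_allowed(req: AccessRequest, policy: AccessPolicy) -> bool:
    """Grant access only if every pre-approved criterion is met."""
    return (
        req.user_verified
        and req.device_verified
        and ip_address(req.source_ip) in ip_network(policy.allowed_network)
        and req.port in policy.allowed_ports
        and policy.window_start <= req.at <= policy.window_end
    )


# Hypothetical policy: one subnet, HTTPS only, office hours.
policy = AccessPolicy("10.0.0.0/24", frozenset({443}), time(8, 0), time(18, 0))
ok = AccessRequest(True, True, "10.0.0.7", 443, time(9, 30))
bad_device = AccessRequest(True, False, "10.0.0.7", 443, time(9, 30))

print(is_allowed(ok, policy))
print(is_allowed(bad_device, policy))
```

Every condition must hold at once, which mirrors the "separate keys for all the different rooms" analogy: a verified user on an unverified device is still refused.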

6 innovative and effective approaches to upskilling

Beverage maker Torani has been mixing up L&D by flipping the traditional

performance review — which can be “demoralizing” — on its head. It puts the

onus on future rather than past performance and on employee learning

aspirations, rather than manager assessment. ... Devine adds: “With today’s

shift to agile working, some firms believe yearly performance objectives and

appraisals are insufficient and inflexible. They need something more frequent,

nimble, and focused on feedback, skills and future needs. But you still need

managers to assess performance to justify and provide transparency on

promotions and pay decisions.” ... Microsoft is helping workers across its

organization gain skills related to AI — from non-techies to IT professionals

and leaders. Simon Lambert, chief learning officer at Microsoft UK, says: “One

lesson we’ve learned from our AI learning journey is that upskilling means far

more than merely equipping employees with skills. It requires an ecosystem

that fosters adaptability and continuous learning. In the face of

AI-upskilling demand, employees need faster, seamless access to learning

infrastructure.”

2024 Data Center Un-Predictions: Five Unlikely Industry Forecasts

The potential impact of data centers on local communities is an important

issue. At a recent conference in Virginia, we had activists from the community

right alongside data center leaders to discuss the challenges and

opportunities we face. While there were still some disconnects, we met in the

middle on some critical topics around power, community engagement, and

ensuring we create a more sustainable future. ... Leveraging self-driving

technology, robots independently chart and traverse the data center, gathering

real-time sensor data. This lets them immediately juxtapose present patterns

against pre-defined norms, facilitating swift identification of deviations for

human examination. In an ever more interconnected and intricate environment,

this robotic technology grants decision-makers enhanced visibility, rapidity,

and a breadth of intelligence that surpasses what humans or stationary cameras

can provide. This advanced capability is vital for maintaining the efficiency

and security of data centers in our increasingly digital world.
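
The idea of comparing live sensor data against pre-defined norms and flagging deviations for human examination can be sketched in a few lines. This is a minimal illustration, not a description of any vendor's robot software: the sensor names, the acceptable ranges in `NORMS`, and the function `find_deviations` are all hypothetical.

```python
# Hypothetical norms: (low, high) acceptable range per sensor type.
NORMS = {
    "inlet_temp_c": (18.0, 27.0),
    "humidity_pct": (20.0, 80.0),
}


def find_deviations(readings, norms=NORMS):
    """Return the readings that fall outside their pre-defined range."""
    flagged = []
    for reading in readings:
        low, high = norms[reading["sensor"]]
        if not (low <= reading["value"] <= high):
            flagged.append(reading)
    return flagged


# Hypothetical readings gathered along one patrol route.
readings = [
    {"location": "rack-12", "sensor": "inlet_temp_c", "value": 24.5},
    {"location": "rack-13", "sensor": "inlet_temp_c", "value": 31.2},
    {"location": "aisle-2", "sensor": "humidity_pct", "value": 45.0},
]

for item in find_deviations(readings):
    print(f"Review needed at {item['location']}: {item['sensor']} = {item['value']}")
```

Only the out-of-range reading is surfaced, so a person reviews exceptions instead of every data point.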

US DOD’s CMMC 2.0 rules lift burdens on MSPs, manufacturers

The proposed rules also let manufacturers off the hook for complying with NIST

SP 800-171. SP 800-171 is a set of NIST cybersecurity rules to protect

sensitive federal information. “The requirements of the 171 set of cyber standards

are designed for IT networks and information systems,” Metzger says. “They

were never really designed for a manufacturing environment. It’s now said

clearly in the proposed rules that the assessments won’t apply to operational

technology.” “That, to me, should cause manufacturers to breathe a huge sigh

of relief because being required to meet NIST standards that simply don’t fit

a manufacturing or OT environment is a recipe for trouble of many forms,”

Metzger says. “The most important change is what did not change. The document

has essentially the same structure and strategy that was in 1.0. It requires

third-party assessments for a very large number of defense suppliers.” The

proposed version 2.0 of the CMMC rules was published in the Federal Register on

December 26. Interested parties have until February 26 to file comments with

the DOD before the agency finalizes the rules.

Banking Innovation is Paramount Even as Regulatory and Competitive Pressures Mount

Guiding technology-forward regulations can empower banks to harness

innovation, enhancing security, transparency, and customer value. Regulators

should seek thoughtful oversight that encourages innovation while safeguarding

against excessive risks instead of attempting to prevent the recurrence of a

once-in-a-century financial crisis. Banks face a growing challenge to their

market share from alternative lending platforms, which poses an existential

threat, as noted in McKinsey’s 2023 Global Banking Annual Review. Over 70% of

the growth in global financial assets since 2015 has shifted away from

traditional bank lending, finding its way into private markets, institutional

investors and the realm of “shadow banking.” Near-zero interest rates have

enabled private equity firms and non-bank lenders to offer lower-cost loans.

With its digitally savvy consumer base, the fintech sector has further

accelerated this transition, particularly during the pandemic.

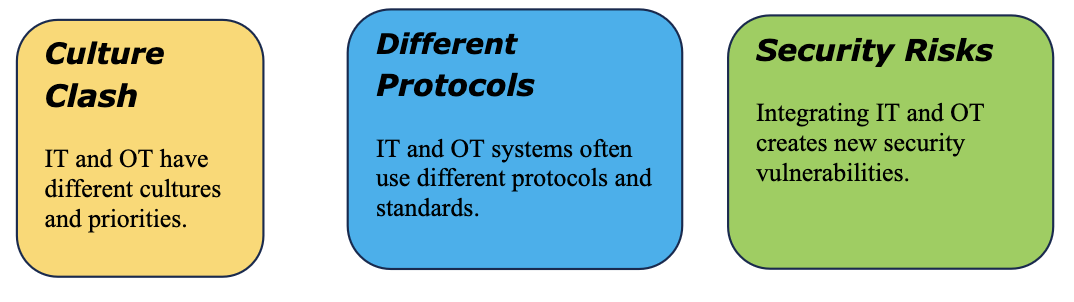

IT and OT cybersecurity: A holistic approach

As OT becomes more interconnected, the need to safeguard OT systems against

cyber threats is paramount. Many cyber threats and vulnerabilities

specifically target OT systems, which emphasizes the potential impact on

industrial operations. Many OT systems still use legacy technologies and

protocols that may have inherent vulnerabilities, as they were not designed

with modern cybersecurity standards in mind. They may also use older or

insecure communication protocols that may not encrypt data, making them

susceptible to eavesdropping and tampering. Concerns about system stability

often lead OT environments to avoid frequent updates and patches. This can

leave systems exposed to known vulnerabilities. OT systems are not immune to

social engineering attacks either. Insufficient training and awareness among

OT personnel can lead to unintentional security breaches, such as clicking on

malicious links. Supply chain

risks also pose a threat, as third-party suppliers and vendors may introduce

vulnerabilities into OT systems if their products or services are not

adequately secured.

Exploring the Future of Information Governance: Key Predictions for 2024

In today’s rapidly evolving digital landscape, information governance has

become a collective responsibility. Looking ahead to 2024, we can anticipate a

significant shift towards closer collaboration between the legal, compliance,

risk management, and IT departments. This collaborative effort aims to ensure

comprehensive data management and robust protection practices across the

entire organization. By adopting a holistic approach and providing

cross-functional training, companies can empower their workforce to navigate

the complexities of information governance with confidence, enabling them to

make informed decisions and mitigate potential risks effectively. Embracing

this collaborative mindset will be crucial for organizations to adapt and

thrive in an increasingly data-driven world. ... Blockchain technology, with

its decentralized and immutable nature, has tremendous potential to

revolutionize information governance across industries. By 2024, as businesses

continue to recognize the benefits, we can expect a significant increase in

the adoption of blockchain for secure and transparent transaction ledgers.

Data Professional Introspective: Demystifying Data Culture

We are discussing data culture from several points of view: what new content

should be added to the DCAM, where would it fall within the current framework

structure, what changes we propose to that structure, what modifications

should be made to existing content, how the new/modified content would be

assessed, and so on. ... One can begin decomposing data culture from a

high-level vision, which summarizes what the organization has accomplished

when it can feel confident in asserting that, “We have a strong data culture.”

One can also compile a collection of activities and behaviors that demonstrate

a developed data culture, and then categorize them and parse them into the

DCAM. Or, one can apply a combination of the two approaches, which is the path

the working group has followed. The working definition posited to date

includes a summary description of a strong data culture: “A strong data

culture promotes data-driven decision-making, data transparency, and the

alignment of data and analytics to business objectives. It prioritizes

strategic data use and encourages sharing and collaboration around data.”

Tackling technical debt in the insurance industry

The impact of technical debt on insurers spans various dimensions. Data

inefficiencies arise, leading to compliance issues and difficulties in

recruiting and retaining talent. Outdated processes hinder optimal

decision-making, impacting both established and newer insurers. Addressing

technical debt requires insurers to foster a culture of change, emphasising

the risks of neglecting this issue and aligning strategies with broader

organisational objectives. Tackling technical debt involves immediate action,

prioritised backlog creation, and adaptive development processes. Insurers are

advised to navigate technical debt through a combination of incremental and

transformational changes. Incremental adjustments and breakthrough

advancements should complement comprehensive restructuring efforts for

sustained and effective resolution. The roadmap to a resilient, innovative

future in insurance hinges on proactive management of technical debt. Insurers

must embark on their journey towards pricing transformation to remain

competitive and future-ready.

Quote for the day:

"In the end, it is important to

remember that we cannot become what we need to be by remaining what we are."

-- Max De Pree
