CISOs Rethink Data Security With Info-Centric Framework

"Data is on a logarithmic curve; for every amount of data that I have next year,

it's probably 2.5 times more than the amount of data I had this year," he says.

"We're data hoarders, for lack of a better term; no one wants to get rid of

people's information who have signed up to websites and forums and everything

else, so we have this enormous data sprawl. That, in turn, leaves behind

security blind spots." Further adding to the challenge is the fact that some

data is of course more sensitive than other information, and some information

doesn't need protecting at all, Rushing points out. And there's dynamism in

terms of defining appropriate security levels as data ages. He uses a product

launch to illustrate his point. "With a product release, we start off with a

situation where no one knows about it, everything's embargoed, and you're

protecting this important intellectual property," he explains. "And the next

thing you know, it's released for public consumption. And it's suddenly not top

secret anymore, in fact, you want the whole world to know about it."

How ‘Data Clean Rooms’ Evolved From Marketing Software To Critical Infrastructure

Data clean rooms as we know them today represent the first phase in leveraging

“clean data.” User privacy is protected, while advertisers retain access to

the necessary information. This model is now being extended and expanded upon

in the enterprise. It is no longer about just protecting personal data.

Companies need to act fast on data-derived insights, and therefore cannot

compromise efficiency and collaborative abilities. They need truly

comprehensive and dynamic data-sharing capabilities that can be quickly

configured with little code and setup. ... As one of the key reasons for data

clean rooms is the expanding IoT, businesses increasingly find themselves

needing to demonstrate the provenance and veracity of their IoT data for

business transactions or regulatory requirements. A data clean room must

provide a single pane of glass for the trust and protection of IoT devices,

the data they transmit and their data operations. It will need

to authenticate IoT devices, protect the data as it travels from the device to

the cloud and back to the device, and provide additional data points for

audits.

ACID Transactions Change the Game for Cassandra Developers

For years, Apache Cassandra has been solving big data challenges such as

horizontal scaling and geolocation for some of the most demanding use cases.

But one area, distributed transactions, has proven particularly challenging

for a variety of reasons. It’s an issue that the Cassandra community has been

hard at work to solve, and the solution is finally here. With the release of

Apache Cassandra version 5.0, which is expected later in 2023, Cassandra will

offer ACID transactions. ACID transactions will be a big help for developers,

who have been calling for more SQL-like functionality in Cassandra. This means

that developers can avoid a bunch of complex code that they used for applying

changes to multiple rows in the past. ... The advantage of ACID transactions

is that multiple operations can be grouped together and essentially treated as

a single operation. For instance, if you’re updating several points of data

that depend on a specific event or action, you don’t want to risk some of

those points being updated while others aren’t. ACID transactions let you

avoid that.
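
The all-or-nothing behavior described above can be sketched in a few lines. This is only an illustration of the general idea, not Cassandra's API or syntax: it uses Python's built-in sqlite3 module, and the `accounts` table, its columns, and the helper names are hypothetical. Two updates are grouped so that either both are applied or neither is.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with a hypothetical 'accounts' table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, points INTEGER)")
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", [(1, 100), (2, 50)])
    conn.commit()
    return conn


def balances(conn):
    """Return {id: points} for every row, for easy inspection."""
    return dict(conn.execute("SELECT id, points FROM accounts ORDER BY id"))


def transfer_points(conn, from_id, to_id, amount):
    """Update two rows as one unit: both changes apply, or neither does."""
    try:
        # Used as a context manager, the connection commits if the block
        # finishes normally and rolls back if an exception escapes it.
        with conn:
            conn.execute(
                "UPDATE accounts SET points = points - ? WHERE id = ?",
                (amount, from_id),
            )
            conn.execute(
                "UPDATE accounts SET points = points + ? WHERE id = ?",
                (amount, to_id),
            )
            row = conn.execute(
                "SELECT points FROM accounts WHERE id = ?", (from_id,)
            ).fetchone()
            if row is None or row[0] < 0:
                # Raising here undoes both updates made above.
                raise ValueError("insufficient points")
        return True
    except ValueError:
        return False
```

Calling `transfer_points(conn, 1, 2, 500)` on the demo database returns `False` and leaves both rows unchanged, which is the guarantee that otherwise has to be hand-coded when no transaction support is available.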

Corporate boards pressure CISOs to step up risk mitigation efforts

The report also found that general misunderstandings in common cyber risk

terminology could be a deterrent in developing effective strategies and

communicating risk to company leadership. Cyberattacks have been increasing

for several years now and resulting data breaches cost businesses an average

of $4.35 million in 2022, according to an IBM report. Given the financial and

reputational consequences of cyberattacks, corporate board rooms are putting

pressure on CISOs to identify and mitigate cyber/IT risk. Yet, despite the new

emphasis on risk management, business leaders still don’t have a firm grasp on

how cyber risk can impact different business initiatives—or that it could be

used as a strategic asset and core business differentiator. To better

understand the current cybersecurity and IT risk challenges companies are

facing, as well as steps executives are taking to combat risk, RiskOptics

fielded a survey of 261 U.S. InfoSec and GRC leaders. Respondents varied in

job level from manager to the C-Suite and worked across various industries.

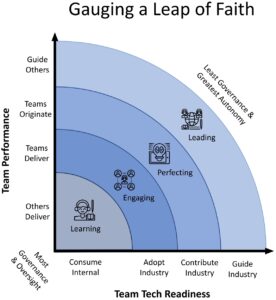

What is the Spotify model in agile?

The Spotify model is just the autonomous scaling of agile, as hinted at in the

paper’s name. It’s based on agile principles and unique features specific to

Spotify’s organizational structure. This framework became wildly popular and

was dubbed the “Spotify model,” with Henrik Kniberg credited as the inventor.

... Every other company wanted to adopt this framework for themselves. Spotify

enjoyed a reputation for being innovative, and people assumed that if this

framework worked so well for Spotify, it must also work great for them.

Companies began to feel as if this framework was perfect, but nothing is

perfect. Spotify has changed its practices and ways of working over time,

adapting its strategies and methodologies to changes in the market, user

preferences, and more. The Spotify model itself was built with the company’s

culture, values, and organizational structure in mind, with the ultimate goal

of promoting cross-collaboration and innovation. As a result, it’s not a

one-size-fits-all framework: the Spotify model was built around a foundation the

company had already laid out.

Embracing zero-trust: a look at the NSA’s recommended IAM best practices for administrators

Knowing that credentials are a key target for malicious actors, utilizing

techniques such as identity federation and single sign-on can mitigate the

potential for identity sprawl, local accounts, and a lack of identity

governance. This may involve extending SSO across internal systems and also

externally to other systems and business partners. SSO also brings the benefit

of reducing the cognitive load and burden on users by allowing them to use a

single set of credentials across systems in the enterprise, rather than

needing to create and remember disparate credentials. Failing to implement

identity federation and SSO inevitably leads to credential sprawl with

disparate local credentials that generally aren’t maintained or governed and

represent ripe targets for bad actors. SSO is generally facilitated by

protocols such as SAML or OpenID Connect (OIDC). These protocols help

exchange authentication and authorization data between entities such as

Identity Providers (IdPs) and service providers.
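
As a rough illustration of that exchange, the sketch below shows the first step of an OIDC authorization code flow from the service provider's side: building the request that sends the user to the identity provider, then reading the code that comes back. The query parameter names (`response_type`, `client_id`, `redirect_uri`, `scope`, `state`, `nonce`) come from the OpenID Connect specification; the endpoint URLs, client ID, and function names are hypothetical, and a real deployment would normally rely on a vetted OIDC library rather than hand-rolled code.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlsplit


def build_authorization_url(authorization_endpoint, client_id, redirect_uri,
                            scope=("openid", "profile", "email")):
    """Return (url, state, nonce) for redirecting a user to the IdP."""
    state = secrets.token_urlsafe(16)  # ties the callback to this request
    nonce = secrets.token_urlsafe(16)  # later compared with the ID token's nonce
    params = {
        "response_type": "code",       # authorization code flow
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(scope),      # "openid" marks this as an OIDC request
        "state": state,
        "nonce": nonce,
    }
    return f"{authorization_endpoint}?{urlencode(params)}", state, nonce


def read_callback(callback_url, expected_state):
    """Check the returned state and extract the authorization code."""
    query = parse_qs(urlsplit(callback_url).query)
    if query.get("state", [None])[0] != expected_state:
        raise ValueError("state mismatch")
    codes = query.get("code")
    if not codes:
        raise ValueError("missing authorization code")
    return codes[0]
```

The service provider would then exchange the returned code at the IdP's token endpoint for an ID token. That step is omitted here because it requires a live identity provider.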

10 habits of people who are always learning new things

They’re the ones who are infinitely curious about the world around them –

those who take things apart to find out how they work, or go on nature walks

and prod everything with a stick, or do science experiments… Reading helps

them stay informed about the world, learn from others’ experiences, and

develop new perspectives. Whether it’s books, articles, blogs, or even social

media, they make a habit of consuming content that feeds their mind and

broadens their horizons. And they don’t just read books because they have to.

No, they WANT to; they read just for pleasure and personal growth, across

various genres and subjects. That’s why they have a well-rounded knowledge

base and are very open-minded about other people’s perspectives! Just because

you’ve gotten goal-setting down to an art doesn’t mean it’s all smooth

sailing. Of course not. You’ll definitely be making mistakes. But mistakes

don’t have to get you down. In fact, mistakes are perfect vehicles for

learning, but only if you have a growth mindset.

How Security Leaders Should Approach a Challenging Budgeting Environment

Organizations need to understand that cybercriminals don’t care about the

scope of the security controls. CIOs and CISOs cannot continue to operate in

the dark without confidence about how well processes work; they need an

understanding of what needs to be protected beyond the classical understanding

of cybersecurity coverage. That means addressing cybersecurity from a business

perspective. CISOs and CIOs can gain complete insight into the security

posture and performance by converging tools like SIEM, SOAR, UEBA and

business-critical security solutions, expanding the visibility beyond the IT

infrastructure and into business-critical applications that contain invaluable

information. A converged security solution can turn unqualified alerts into

real, actionable intelligence by adding contextual information and automating

responses. Another important thing to be mindful of is the pricing model for

security solutions. Many are based on data volumes, which means the pricing is

continuously increasing and unpredictable.

Is Web3 tech in search of a business model?

Changing their business models. I have worked with various startups in the

distributed ledger technology space and what they were doing may have been

revolutionising industries but all they were doing was replacing one

technology with a new one without replacing the business model. The biggest

challenge is how to use this new democratic power with its lower transaction

costs and improved security to create new business models. The question is how

to come up with such a commercial model? How can you monetise the system? In

every other system, you monetise the system by creating a middleman. But the

ultimate benefit of DLT is to do away with all middlemen. My fear is that all

businesses will do is find a new way of creating new intermediaries using this

technology. By definition, a business makes profits by adding value and that

value is created through economies of scale and adding some form of brokerage

in the process. The irony is that the whole purpose of this technology is to

do away with the concept of the transaction, which is what capitalism is based

on!

Defending Against the Evolving Infostealer Malware Threat

Flatley says employee education is very important in this space, as helping

employees understand why following security policies is important will

encourage compliance. “People are more apt to follow the rules if they

understand the consequences. However, no amount of training will reduce this

risk enough,” he says. That means security policies need to be enforced by

technical means that are designed to prevent accidental or intentional

non-compliance. “Even more important, we must understand that no amount of

training or technical defenses will entirely stop this threat,” he says.

Organizations must not only instrument a network to detect malicious activity

and craft formal plans for remediating stolen identity information well in

advance, but they must also practice them well so an attack can be acted upon

quickly. Hazam points to education and training tools such as simulated phishing

attacks, which can help employees recognize and respond to real phishing

emails, and gamified training programs, which can make the training more

engaging and enjoyable for employees.

Quote for the day:

"Coaching isn't an addition to a

leader's job, it's an integral part of it." -- George S. Odiorne