Cyberattackers Double Down on Bypassing MFA

MFA flooding, where an attacker will repeatedly attempt to log in using stolen

credentials to create a deluge of push notifications, aims to take advantage

of users' fatigue with security warnings. "Push notifications are a step up from

SMS, but are susceptible to MFA flooding and MFA fatigue attacks, bombarding the

victim with notifications in the hope they will click 'Allow' on one of them,"

Caulfield says. Another popular tactic — the account reset attack — aims to fool

tech support into giving attackers control of a targeted account, an approach

that led to the successful compromise of the developer Slack channel for

Take-Two Interactive's Rockstar Games, the maker of the Grand Theft Auto

franchise. "An attacker will compromise a user’s credentials, and then pose as a

vendor or IT employee and ask the user for a verification code or to approve an

MFA prompt on their phone," says Jordan LaRose, practice director for

infrastructure security at NCC Group. "Attackers will often use the information

they’ve already compromised as part of the social engineering attack to lull

users into a false sense of security."
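
One common mitigation for MFA flooding is to cap how many push prompts a user can receive in a short period. The sketch below is illustrative only: the class name `PushFloodDetector`, the thresholds, and the integer timestamps are assumptions, not details from the article or from any vendor's product.

```python
from collections import defaultdict, deque


class PushFloodDetector:
    """Flags users who receive too many MFA push prompts in a sliding window.

    Hypothetical helper: the name and default thresholds are assumptions.
    """

    def __init__(self, max_prompts=5, window_seconds=300):
        self.max_prompts = max_prompts
        self.window_seconds = window_seconds
        self._prompts = defaultdict(deque)  # user -> timestamps of recent prompts

    def record_prompt(self, user, timestamp):
        """Record a prompt; return True if further prompts should be suppressed."""
        recent = self._prompts[user]
        recent.append(timestamp)
        # Drop prompts that have fallen out of the sliding window.
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        return len(recent) > self.max_prompts


detector = PushFloodDetector(max_prompts=3, window_seconds=60)
results = [detector.record_prompt("alice", t) for t in (0, 5, 10, 15, 200)]
print(results)  # [False, False, False, True, False]
```

Here the fourth prompt within a minute trips the limit, and the count resets once the earlier prompts age out of the window. A real deployment would likely pair this with number matching or an alert to the security team rather than rely on suppression alone.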

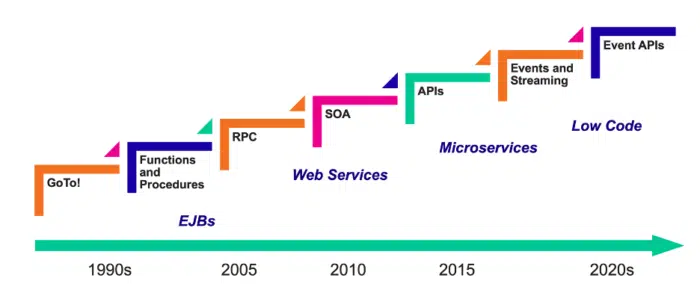

Three Trends That Could Impact Data Management In 2023

Like cybercrime, the digitization of the customer experience is almost as old as

the computer itself, but it really came into its own in the mobile age. What

some call digitization 1.0 was all about “mobile, simplified design and new

kinds of applications.” Digitization 2.0 homed in on customer demand—“apps

anywhere, anytime, on any interface, and with any method of interaction: voice,

social media, chat, texting, wearables, and even when you are sitting in your

car.” What I’m calling digitization 3.0 here is a doubling down on both 1.0 and

2.0 to make data even more usable and provide unprecedented access to it. I

began this article by highlighting the value of data. I think the companies that

can extract even more value from their data while maintaining its security,

resiliency and privacy will be the ones that not only survive today’s economic

uncertainty but thrive during and after it. The key is contextualizing your data

to make it more useful. This starts with the steps I’ve listed in the preceding

two sections as a foundation.

Best and worst data breach responses highlight the do's and don'ts of IR

When it comes to data breaches, is there a sliding scale? In other words, if a

tiny school district gets hit with a ransomware attack, do we give the IT team a

partial pass because they probably lack the resources and skill level of a more

tech-savvy company? On the other hand, if a company whose entire business model

is based on protecting user passwords gets hacked, do we judge them more

harshly? Which brings us to LastPass, which experienced an embarrassing breach

that was first announced in August 2022 as simply a minor incident confined to

the application development environment. By December that breach had spread to

customer data including company names, end-user names, billing addresses, email

addresses, telephone numbers, and IP addresses. LastPass gets high marks for

transparency. The company continued to issue public updates following the

initial August announcement.

‘Digital twin’ tech is twice as great as the metaverse

A “digital twin” is not an inert model. It’s a personalized, individualized,

dynamically evolving digital or virtual model of a physical system. It’s dynamic

in the sense that everything that happens to the physical system also happens to

the digital twin: repairs, upgrades, damage, aging, etc. Companies are already

using “digital twins” for integration, testing, monitoring, simulation,

predictive maintenance on bridges, buildings, wind farms, aircraft and

factories. But these are still very early days in the “digital twin” realm. ...

A digital twin system has three parts: The physical system, the virtual digital

copy of that physical system and a communications channel linking the two.

Increasingly, this communication is the relaying of sensor data from the

physical system. Digital twin technology draws on three major categories. If you

imagine a Venn diagram of “metaverse” technologies in one circle, “IoT” in a

second circle and “AI” in the third, “digital twin” technology occupies the

overlapping center. Digital twins are different from models or simulations in

that they are far more complex and extensive and change with incoming data from

the physical twin.
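
The three-part structure described above (physical system, virtual copy, and a communications channel carrying sensor data) can be sketched in a few lines. Everything here is a simplified assumption for illustration: the class names, the two sensor fields, and the use of an in-process `Queue` as the channel are hypothetical stand-ins for real hardware and networking.

```python
from dataclasses import dataclass, field
from queue import Queue


@dataclass
class PhysicalSystem:
    """Stand-in for real equipment; the sensor fields are assumed."""
    temperature_c: float = 20.0
    hours_in_service: float = 0.0

    def read_sensors(self):
        return {
            "temperature_c": self.temperature_c,
            "hours_in_service": self.hours_in_service,
        }


@dataclass
class DigitalTwin:
    """Virtual copy that evolves as readings arrive."""
    state: dict = field(default_factory=dict)
    history: list = field(default_factory=list)

    def apply(self, reading):
        self.state.update(reading)
        self.history.append(dict(reading))


def sync(physical, channel, twin):
    """Relay the latest sensor reading over the channel and apply it to the twin."""
    channel.put(physical.read_sensors())
    while not channel.empty():
        twin.apply(channel.get())


turbine = PhysicalSystem()
twin = DigitalTwin()
channel = Queue()  # in-process stand-in for the communications link

sync(turbine, channel, twin)
turbine.temperature_c = 35.5      # the physical system changes...
turbine.hours_in_service = 12.0
sync(turbine, channel, twin)      # ...and the twin follows
print(twin.state)
```

The `history` list is what separates this from a static model: the twin accumulates the record of what happened to its physical counterpart, which is the raw material for monitoring and predictive maintenance.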

Backup testing: The why, what, when and how

The aim of all testing is to ensure you can recover data. Those recoveries might

be of individual files, volumes, particular datasets (associated with an

application, for example), or even an entire site, or several. So, testing has to

happen at differing levels of granularity to be effective. That means the

differing levels of file, volume, site, and so on, as above. But it also means

by workload and system type, such as archive, database, application, virtual

machine or discrete systems. At the same time, the backup landscape in an

organisation is subject to constant change, as new applications are brought

online, and as the location of data changes. This is more the case than ever

with the use of the cloud, as applications are developed in increasingly rapid

cycles, and by novel methods of deployment such as containers. ... So, it’s

likely that testing will take place at different levels of the organisation on a

schedule that balances practicality with necessity and importance. Meanwhile,

that testing must consider the constantly changing backup landscape.
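
At the file level, a recovery test can be as simple as restoring to a scratch location and comparing checksums against the source. The sketch below assumes that approach; `verify_restore` is a hypothetical helper, and `shutil.copytree` stands in for whatever backup and restore tooling is actually in use.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_dir, restored_dir):
    """Return relative paths that are missing or differ after a restore."""
    source_dir, restored_dir = Path(source_dir), Path(restored_dir)
    problems = []
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file() or sha256_of(src) != sha256_of(restored):
            problems.append(str(rel))
    return problems


with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "source"
    restored = Path(tmp) / "restored"
    source.mkdir()
    (source / "report.txt").write_text("quarterly numbers")
    (source / "db.dump").write_text("rows")
    shutil.copytree(source, restored)  # stand-in for a real backup and restore
    clean_result = verify_restore(source, restored)
    (restored / "db.dump").write_text("corrupted")  # simulate a bad restore
    damaged_result = verify_restore(source, restored)

print(clean_result, damaged_result)  # [] ['db.dump']
```

This covers only the file level of granularity. Database, application, and virtual machine recoveries need their own checks, such as confirming that a restored database actually starts and answers queries.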

Considerations for Developing Cybersecurity Awareness Training

Regular, ongoing cybersecurity awareness training is important, and the best

time to start is during the new employee onboarding process. This sets the

correct expectations in terms of what to do and what not to do before a new

employee has access to the enterprise’s information assets or data. ...

Enterprises may consider using classroom-based training (physical, virtual or a

mixture of both) or a learning management system (LMS) to automate the delivery

and tracking of cybersecurity awareness training. There are many online LMS

providers, such as Absorb LMS and SAP Litmos, and they provide useful tools for

creating online courses, quizzes and surveys. After online courses are created,

an enterprise can use the LMS to organize and distribute online courses to its

employees as needed. The LMS can also be used to monitor training progress, view

analytics and allow employees to provide feedback in order for the enterprise to

recalibrate its learning program for maximum impact.

AI and data privacy: protecting information in a new era

First of all, business technology leaders should consider whether they need AI

and whether their problems can't be solved by more conventional methods. There

is nothing worse than the "I want ML/AI solutions in my business, but I don't

know what for yet" approach. To introduce AI you need to consider the entire

architecture that will build, train and deploy models and consider how to

collect and process large amounts of data. This requires assembling a good

team, consisting of people such as data engineers, ML engineers and data

scientists. It’s necessary to process large amounts of data and master many

tools, so it is not as simple as writing a web application in a standard

framework. Tech leaders should also be aware that AI comes with risks. They

will need more and more computing resources to build increasingly

sophisticated AI platforms. They will need to stay constantly abreast of news

from the world of AI where everything changes rapidly, and it may turn out

that in six months a much better solution or model for a particular problem

has already been created.

Think carefully before considering cloud repatriation

It’s particularly difficult for smaller companies to repatriate, simply

because, at their scale, the savings aren’t worth the effort. Why buy real

estate and hardware and pay extra salaries only to save a small amount? By

contrast, very large companies have the scale to repatriate. But do they want

to? “Do Visa, American Express or Goldman Sachs want to be in the IT hardware

business?” asks Sample, rhetorically. “Do they want to try to take a modest

gain by moving far outside their competency?” Switching can also be

complicated when the cost of change isn’t considered part of the calculation.

A marginal run rate savings gained from pulling an application back on-prem

may be offset by the cost of change, which includes disrupting the business

and missing out on opportunities to do other things, such as upgrading the

systems that help generate revenue. A major transition may also cause

downtime, sometimes planned and other times unplanned. “A seamless cutover is

rarely possible when you’re moving back to private infrastructure,” says

Sample.

The High Costs of Going Cloud-Native

When it comes to reasons to move to the public cloud, “saving money” has long

since been replaced with “increased agility.” Like the systems vendors they

are in the process of displacing, AWS, Microsoft Azure, and Google Cloud have

figured out how to maximize their customers’ infrastructure spend, with a

little (actually a lot of) help from the unalterable economics of data

gravity. ... Titled “Cloud-Native Development Report: The High Cost of

Ownership,” the white paper tracks the journey of a hypothetical company as it

embarks on a transition to cloud-native computing. ... While many of the

technologies at the core of the cloud-native approach, such as Kubernetes, are

free and open source, there are substantial costs incurred, predominantly

around the infrastructure and the personnel. In particular, the cost of

finding IT practitioners with experience in using and deploying cloud-native

technologies was among the biggest hurdles identified by the report.

Security automation: 3 priorities for CIOs

To begin with, the CIO has choices to make about the testing approaches that

will be deployed. Automation in AppSec can refer to tools and processes,

ranging from automated vulnerability scanning (dynamic analysis) and static

code analysis to software composition analysis and other types of security

testing. The most advanced approaches can take things a step further by

combining multiple forms of testing – perhaps augmenting DAST with interactive

application security testing (IAST) and software composition analysis (SCA) –

into a single scan for a comprehensive analysis of the organization’s security

risk posture in a single frame. ... Meanwhile, in workflow terms, IT leaders

should use customizable solutions to trigger scans at certain points in the

development pipeline or based on a predefined schedule. This will allow CIOs

and their teams to coordinate scans at specific times or in response to

certain events like deploying new code or detecting a security incident.
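
The two trigger styles mentioned above, event-driven and scheduled, can be combined in a single decision function. This is a minimal sketch under assumed names: the event strings, the `should_scan` helper, and the seven-day default interval are all hypothetical and not tied to any particular scanning product.

```python
from datetime import datetime, timedelta

# Assumed event names; a real pipeline would define its own.
SCAN_TRIGGER_EVENTS = {"code_deployed", "security_incident"}


def should_scan(event, last_scan, now, max_interval=timedelta(days=7)):
    """Scan on a triggering event, or when the scheduled interval has elapsed."""
    if event in SCAN_TRIGGER_EVENTS:
        return True
    return now - last_scan >= max_interval


last_scan = datetime(2023, 2, 20, 9, 0)

on_deploy = should_scan("code_deployed", last_scan, datetime(2023, 2, 21, 9, 0))
quiet_day = should_scan("docs_updated", last_scan, datetime(2023, 2, 21, 9, 0))
overdue = should_scan("docs_updated", last_scan, datetime(2023, 2, 28, 9, 0))
print(on_deploy, quiet_day, overdue)  # True False True
```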

Quote for the day:

"If you don't demonstrate leadership

character, your skills and your results will be discounted, if not

dismissed." -- Mark Miller
