Taking Security Strategy to the Next Level: The Cyber Kill Chain vs. MITRE ATT&CK

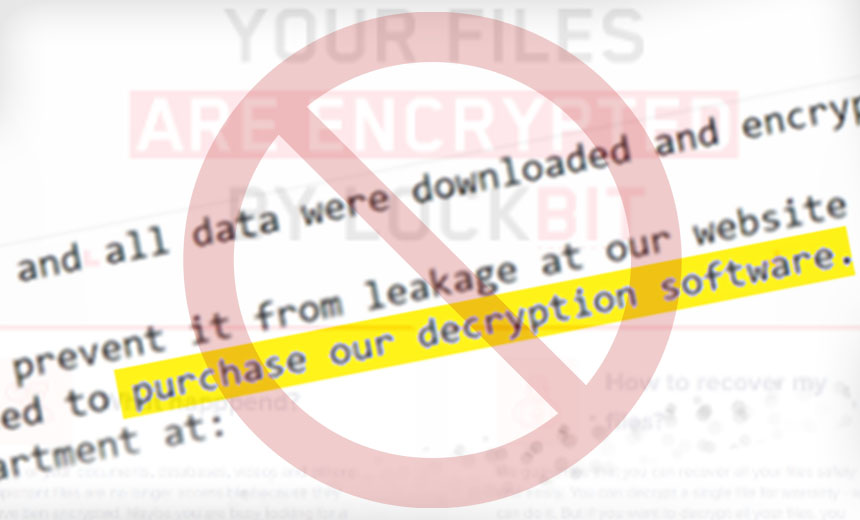

There are two models that can help security professionals harden network resources

and protect against modern-day threats and attacks: the cyber kill chain

(CKC) and the MITRE ATT&CK framework. The CKC, developed by Lockheed

Martin more than a decade ago, provides a high-level view of the sequence of a

cyberattack from initial reconnaissance through weaponization and action. While

it is widely used by security teams, it has its limitations. For example, host

attack behaviors are not included in the model, and attackers may bypass or

combine multiple steps. The newer MITRE ATT&CK framework maps closely to the

CKC but focuses more on cyberresilience to withstand emergent threats. This

open-source project also provides substantial support for tracing host attack

behaviors. ... Present-day attacks utilize encryption over the network, making

it very difficult to detect attack behaviors via the network itself. To overcome

this limitation, enterprises typically deploy host security products alongside

their network security products. Host security products might include

traditional antivirus programs, endpoint detection and response (EDR) solutions

or endpoint protection platforms (EPPs).

Why Cloud Databases Need to Be In Your Tech Stack

Companies need to operate at a constantly increasing scale — more data, more

speed, more customer touchpoints. IDC estimates that there will be 41.6 billion

connected IoT devices, or “things,” generating 79.4 zettabytes (ZB) of data in

2025. The only way to keep up with this moving train is to have a cloud database

that can handle huge amounts of data and can do so with extreme agility and low

latency. There are two types of scaling: horizontal (adding more nodes to a

system) and vertical (adding more resources to a single node). Traditional relational

databases are not elastic; that is, they cannot scale based on the volume

and velocity of data access. They are built more like airplanes. If you want to

add 20 more seats to your flight, you have to get a new plane that is built with

20 more seats. In other words, you can’t extend this plane to accommodate 20

more passengers. This is vertical scaling. Cloud databases are built more like

trains. If you want to add 20 more seats to your popular train route, all you

have to do is add another coach. This is horizontal scaling.
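The plane-versus-train analogy can be sketched in a few lines of Python. This is a minimal illustration, not how any particular cloud database works: the `Cluster` class, its `add_node` and `node_for` methods, and the `scale_up` function are hypothetical names invented for this example, and the seat counts are arbitrary.

```python
import hashlib


def scale_up(seats: int, extra: int) -> int:
    """Vertical scaling: replace the single node with a bigger one (a new plane)."""
    return seats + extra


class Cluster:
    """Toy model of horizontal scaling: capacity grows by adding nodes (coaches)."""

    def __init__(self, node_count: int, seats_per_node: int) -> None:
        self.node_count = node_count
        self.seats_per_node = seats_per_node

    @property
    def capacity(self) -> int:
        return self.node_count * self.seats_per_node

    def add_node(self) -> None:
        # Existing nodes stay in service; one more is attached.
        self.node_count += 1

    def node_for(self, key: str) -> int:
        # Stable hash so a key always maps to the same node for a given node count.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.node_count


train = Cluster(node_count=5, seats_per_node=20)
train.add_node()               # add another coach
plane_seats = scale_up(100, 20)  # buy a bigger plane
```

Both approaches end up with the same capacity here, but only the cluster grew without replacing what was already running. Note that simple modulo placement, as in `node_for`, moves many keys when the node count changes; distributed databases commonly use consistent hashing or similar schemes to limit that reshuffling.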

Report: Organ Transplant Data Security Needs Strengthening

The newest criticism comes from a federal watchdog review of the Health

Resources and Services Administration and the nonprofit United Network for Organ

Sharing. As of January, nearly 107,000 individuals were candidates on the Organ

Procurement and Transplantation Network waitlist. OPTN is designated by the

federal government as a "high-value asset." UNOS, which manages its network at

the administration's behest, lacked system monitoring and only had draft

procedures for access controls when federal auditors conducted their review. The

OPTN "is a very 'just in time' system where the time between an organ becoming

available and getting it into the right patient can be measured in days or even

hours," says Benjamin Denkers, chief innovation officer at consultancy

CynergisTek. "Hackers breaching the system could create any number of

disruptions to the system connecting available organs with patients in need." A

statement from a UNOS spokeswoman shared with Information Security Media Group

notes that auditors concluded that "OPTN security controls 'protect the

confidentiality, integrity, and availability of transplant data.'"

Spinning uncertainty into success

The Upside of Uncertainty delivers helpful takeaways and, perhaps most

important, offers anyone struggling with a murky future the courage to

persevere. The book also contains useful insights into shifting one’s

perspective in tough times, describing entrepreneurial heuristics that can

help shrewd thinkers tap into potential opportunity. For example: pressing on

when uncertainty emerges, even at the risk of failure; reframing failure as an

opportunity for learning and adaptation; exploiting resources and skills at

hand instead of investing too deeply in research before experimenting; and

thinking entrepreneurially by leveraging existing resources in new ways. The authors

cite the example of Pokémon Go, which was created by a multiplayer-game

designer and digital mapping expert who’d helped create what became Google

Maps. He realized that Google Maps’ geopositioning technology could be paired

with Pokémon characters to form an engaging augmented reality game. Similarly,

the founders of Traveling Spoon, a startup that connects food-focused

travelers with local home cooks, saw entrepreneurial potential hiding in plain

sight when a local woman shared a delicious homemade meal with them in

Mexico.

Design For Security Now Essential For Chips, Systems

“There’s a real danger in

security, because of its complications and being really hard to understand, to

run into the equivalent of what in sustainability is called green-washing,”

said Frank Schirrmeister, senior group director, solutions and ecosystem at

Cadence. “This is ‘secure-washing,’ and while there may be government

regulations, it’s all about customers in the commercial world. Semiconductor

companies and system vendors have to serve their end customers, and for them

it’s like selling insurance. You really didn’t know that you needed security

until you ran into a real issue. That’s when they say, ‘If I just would have

had insurance.’ But how to implement it is really an intricate issue, and it’s

hard to understand from technology perspective. I fear it may be similar to a

clean energy ‘Energy Star’ sticker on a washing machine, which may just mean,

‘Yes, I have documented processes.’ That’s why I think there’s a danger of

secure-washing, where the end consumer is lulled into a sense that ‘this thing

is secure,’ without really understanding what’s underneath, who confirmed it,

and what the process was. That’s why standardization is crucial. But it also

needs to be transparent.”

The risks of neglecting data governance

Data governance will make or break your organisation’s reputation. The impact

of the brand degradation that businesses are likely to suffer once their lax

approach to data protection is revealed could be significant. No one wants to

transact with a business that will not protect their data. In fact, data

protection is set to become the next ‘badge of honour’ for businesses. Whilst

sustainability, diversity and fair trade have previously been accolades that

customers look for when choosing which businesses to interact with, being a

data guardian is a growing phenomenon. The reputational impact that a GDPR

fine can have on a business is, therefore, huge and can result in significant

customer loss. With the growth of competition in many markets, it is easy for

customers to find an alternative. Financially, this loss will often amount to

more than the fine itself. Such negligence can also have a negative impact on

your supply chain. As with customers, partners, suppliers, and service

providers will also choose not to work with organisations who fail to comply

with standards such as GDPR.

Choosing the Right Cloud Infrastructure for Your SaaS Start-up

The first consideration is the company’s ability to manage the infrastructure,

including the time required, whether humans are needed for the day-to-day

management, and how resilient the product is to future changes. If the product

is used primarily by enterprises and demands customization, then you may need

to deploy the product multiple times, which could mean more effort and time

from the infra admins. The deployment can be automated, but the automation

process requires the product to be stable. ROI might not be good for an

early-stage product. My recommendation in such cases would be to use managed

services such as PaaS for infrastructure, managed services for the

database/persistence layer, and FaaS (serverless architecture) for compute. ... And the

key to moving quickly from development to release is to spend more time on coding and testing

than on provisioning and deployments. Low-code and no-code platforms are good

to start with. Serverless and FaaS are designed to solve these problems. If

your system involves many components, building your own boxes will consume too

much time and effort. Similarly, setting up Kubernetes will not make it

faster.

Edge infrastructure: 7 key facts CIOs should know about security

There is no blanket security solution that will mitigate every risk – that’s

true at the edge, in the cloud, and in your datacenter or corporate offices.

Your IT stack has multiple layers; even a single application has multiple

layers. Your security posture should, too. Edge computing boosts the case for

a multi-layered approach to security. This whitepaper describes a layered

approach to container and Kubernetes security. While the details may differ in

an edge environment, the core concept here remains relevant: A well-planned

mix (or layers) of processes, policies, and tools – that lean heavily on

automation wherever possible – is vital to securing inherently distributed

systems. ... “You have to ensure that you enforce security controls at the

granularity of the edge location, and that any edge location that is breached

can be isolated away without impacting all the other edge locations,” says

Priya Rajagopal, director of product management at Couchbase. This is similar

in concept to limiting “east-west” traffic and other forms of isolation and

segmentation in container and Kubernetes security. There’s no such thing as

zero risk – things happen.

How to Optimize Your Organization for Innovation

Building a culture that encourages creativity usually requires starting small

and supporting frequent iteration. “Be willing to try ideas and approaches

that may not work,” suggests Christine Livingston, managing director in the

emerging technology practice at business consulting firm Protiviti.

Employee-led technology advisory teams and initiative groups allow staffers to

feel a sense of ownership while finding solutions to complex issues, observes

Susan Tweed, vice president of enterprise technologies at analytics,

artificial intelligence and data management software and services provider

SAS. “People can participate in ways that maximizes their strengths,” she

says. “Some participants may be great at throwing out ideas while others love

the challenge of digging deep to validate the solutions identified as the best

options.” Giving teams the freedom to experiment is essential. “When teams are

offered the space to create, try, fail, and try again, they are given the

opportunity to learn from those experiences and bring that insight into their

next projects,” Hapanowicz says.

Protect the Pipe! Secure CI/CD Pipelines With a Policy-Based Approach

Improved security for production systems has forced attackers to look for

other avenues. The improvements may be due to the increase in cloud and

managed services and general security awareness and availability of tools.

With the adoption of programmable infrastructure and Infrastructure-as-Code

(IaC), build and delivery systems now have access to production systems. This

means a compromise in the build system can be used to access production

systems and, in the case of a software vendor, access to customer

environments. Applications are increasingly composed of hundreds of OSS

and commercial components. This increases the application exposure and

presents several ways to add malicious code to an application. All of these

factors contributed to attackers shifting focus to Continuous Integration and

Continuous Delivery (CI/CD) systems as an easier target to infiltrate multiple

production systems. Therefore, it is essential that organizations give equal

consideration to securing their CI/CD pipelines, just as they do their

production workloads.
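As a rough illustration of what a policy-based check on a pipeline might look like, the sketch below flags two of the risks described above: build steps that hold production credentials, and third-party components that are not pinned to an exact version. Everything here is an assumption made for the example: the `PipelineStep` structure, the `check_step` function, the `PROD_` secret prefix, and the `==` pinning convention are hypothetical and do not come from the article or from any specific CI/CD product.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineStep:
    """Hypothetical description of one CI/CD step."""
    name: str
    secrets: list[str] = field(default_factory=list)
    dependencies: dict[str, str] = field(default_factory=dict)  # package -> version spec


def check_step(step: PipelineStep, deploy_steps: set[str]) -> list[str]:
    """Return a list of policy violations for a single step."""
    violations = []
    # Policy 1 (assumed): only designated deploy steps may hold production secrets.
    for secret in step.secrets:
        if secret.startswith("PROD_") and step.name not in deploy_steps:
            violations.append(f"{step.name}: production secret {secret} outside a deploy step")
    # Policy 2 (assumed): every dependency must be pinned to an exact version.
    for package, spec in step.dependencies.items():
        if not spec.startswith("=="):
            violations.append(f"{step.name}: dependency {package} is not pinned ({spec})")
    return violations


build = PipelineStep(
    name="build",
    secrets=["PROD_DB_PASSWORD"],
    dependencies={"requests": ">=2.0", "flask": "==2.3.2"},
)
deploy = PipelineStep(name="deploy", secrets=["PROD_DB_PASSWORD"])

build_violations = check_step(build, deploy_steps={"deploy"})
deploy_violations = check_step(deploy, deploy_steps={"deploy"})
```

In this sketch the build step produces two violations and the deploy step none. A real policy engine would typically evaluate declarative rules against the pipeline definition itself rather than hand-built objects.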

Quote for the day:

"Superlative leaders are fully

equipped to deliver in destiny; they locate eternally assigned destines." --

Anyaele Sam Chiyson
