Can automated test tools eliminate QA?

The traditional quality assurance process is multi-step and requires at least two types of software testers. The first tester exercises the data edit and processing functions in applications and ensures that all of these processes are working correctly. The second QA tester is more familiar with the business’s needs and how the application should address them. This tester usually understands the application’s technical details as well as the business systems with which the application is going to interact. But there’s more to QA than these two front-line functions. Applications must be integration-tested to ensure that they interact and exchange data correctly with all of the systems and data sources they work with. They must also be moved to application staging areas where they can be regression tested, to ensure that they don’t break any existing software they interface with, and load tested, to ensure that they can handle the maximum number of transactions they were designed to process in production. From an IT standpoint, applications must clear all of these hurdles before they can go live.
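
Automated test tools fit most naturally with the first tester’s work: repeatable checks on data edit and processing functions, plus regression checks that pin down behavior that has already been verified. A minimal sketch using Python’s standard `unittest` module follows; `validate_order` and `order_total` are hypothetical stand-ins for an application’s own edit and processing functions, not part of any real system.

```python
import unittest


def validate_order(record: dict) -> list[str]:
    """Hypothetical data edit check: return a list of validation errors."""
    errors = []
    if not record.get("customer_id"):
        errors.append("missing customer_id")
    qty = record.get("quantity")
    if not isinstance(qty, int) or qty <= 0:
        errors.append("quantity must be a positive integer")
    price = record.get("unit_price")
    if not isinstance(price, (int, float)) or price < 0:
        errors.append("unit_price must be non-negative")
    return errors


def order_total(record: dict) -> float:
    """Hypothetical processing function: compute the order total."""
    return round(record["quantity"] * record["unit_price"], 2)


class DataEditTests(unittest.TestCase):
    """Checks the first tester would otherwise run by hand."""

    def test_valid_record_passes(self):
        record = {"customer_id": "C1", "quantity": 2, "unit_price": 9.5}
        self.assertEqual(validate_order(record), [])

    def test_bad_quantity_is_rejected(self):
        record = {"customer_id": "C1", "quantity": 0, "unit_price": 9.5}
        self.assertIn("quantity must be a positive integer", validate_order(record))


class RegressionTests(unittest.TestCase):
    """Pins previously verified outputs so later changes can't silently alter them."""

    KNOWN_GOOD = [
        ({"customer_id": "C1", "quantity": 2, "unit_price": 9.5}, 19.0),
        ({"customer_id": "C2", "quantity": 3, "unit_price": 0.1}, 0.3),
    ]

    def test_totals_unchanged(self):
        for record, expected in self.KNOWN_GOOD:
            self.assertEqual(order_total(record), expected)


def run_suite() -> unittest.TestResult:
    """Run both test classes programmatically and return the result."""
    loader = unittest.TestLoader()
    suite = unittest.TestSuite()
    for case in (DataEditTests, RegressionTests):
        suite.addTests(loader.loadTestsFromTestCase(case))
    return unittest.TextTestRunner(verbosity=1).run(suite)


if __name__ == "__main__":
    run_suite()
```

A script like this covers only the first kind of check; it does not capture the second tester’s judgment about business fit, nor integration or load testing against real systems.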

The downside of digital transformation: why organisations must allow for those who can’t or won’t move online

Through our current research, we find that the reality of a digitally enabled society is, in fact, far from perfect and frictionless. Our preliminary findings point to the need to better understand the outcomes of digital transformation at a more nuanced, individual level. Reasons vary as to why a significant number of people find accessing and navigating online services difficult, and the cause is often an intersection of several factors related to finance, education, culture, language, trust or well-being. Even when people are given access to digital technology and skills, the complexity of many online requirements and the chaotic life situations some of them experience limit their ability to engage with digital services in a productive and meaningful way. The resulting sense of disenfranchisement and loss of control is regrettable, but it isn’t inevitable. Some organisations are now looking for alternatives to a single-minded focus on transferring services online, while others are considering partnerships with intermediaries who can work with individuals who find engaging with digital services difficult.

Authentic leadership: Building an organization that thrives

Becoming an authentic leader takes a lot of self-reflection and self-awareness. You’ll need to work to understand yourself and others, with empathy and compassion as your driving force. For examples of authentic leadership at large technology and industrial companies, you can look to former Apple CEO Steve Jobs, former GE CEO Jack Welch, former Xerox CEO Anne Mulcahy, and former IBM CEO Sam Palmisano. These leaders are all known for authentic leadership styles that helped them drive business success. To become an authentic leader, you’ll need to embark on a path of self-discovery, establish a strong set of values and principles to guide your decision-making, and be completely honest with yourself about who you are. An authentic leader isn’t afraid to make mistakes or to own up to them when they happen. You’ll need to make sure you’re someone who takes accountability, stays calm under pressure, and can be vulnerable with coworkers and employees. It’s important to know your own strengths and weaknesses as an authentic leader and to identify how you cope with success, failure, and setbacks.

Reporting to build trust: A framework

Whether you’re preparing an integrated annual report or a stand-alone sustainability report, the publication has to be informed by steps one and two. It’s also critical to put the right resources in place, in terms of both time and people, along with the right incentives and the right oversight. Companies can truly be confident in what they report only when it is subject to board oversight and relevant to the company’s strategy, and when the right governance, systems and controls are in place to measure progress towards targets and plans. Many large companies with teams of hundreds working on financial reporting have only a handful of people working on sustainability reporting. Even with the best intentions, less-resourced areas are more likely to miss something that turns out to be critically important. The business world’s financial reporting capabilities have been built over 170 years. When it comes to sustainability reporting, we need to move quickly to build the right capabilities, using what we’ve learned from financial reporting. And if sustainability reporting is to be on par with financial reporting for informing resource allocation decisions, it needs to be just as robust and relevant.

Six reasons successful leaders love questions

Comparing questions to dreams is Straus’s way of saying that questions hold the key to better understanding the subconscious dimensions of the person asking them. It can be extremely difficult to understand why employees think the way they do, and how to help them change their mindset and behavior if required. It stands to reason, then, that questions might also help leaders better understand the culture and habits of their organization. In his 1988 article “Toward a History of the Question,” Dutch philosopher C.E.M. Struyker Boudier writes, “In and by way of his questions the human being can reach out to the divine, and likewise degrade himself to the demonic inferno of evil.” Questioning forces people to the line between good and bad, yes and no, pro and con. Asking questions is closely related to making a choice. We cannot address everything at once, so to ask a question, we must decide what to focus on and how. We can choose an approach that is optimistic or pessimistic, abstract or concrete, individual or collective, broad or narrow, past- or future-oriented, and so on.

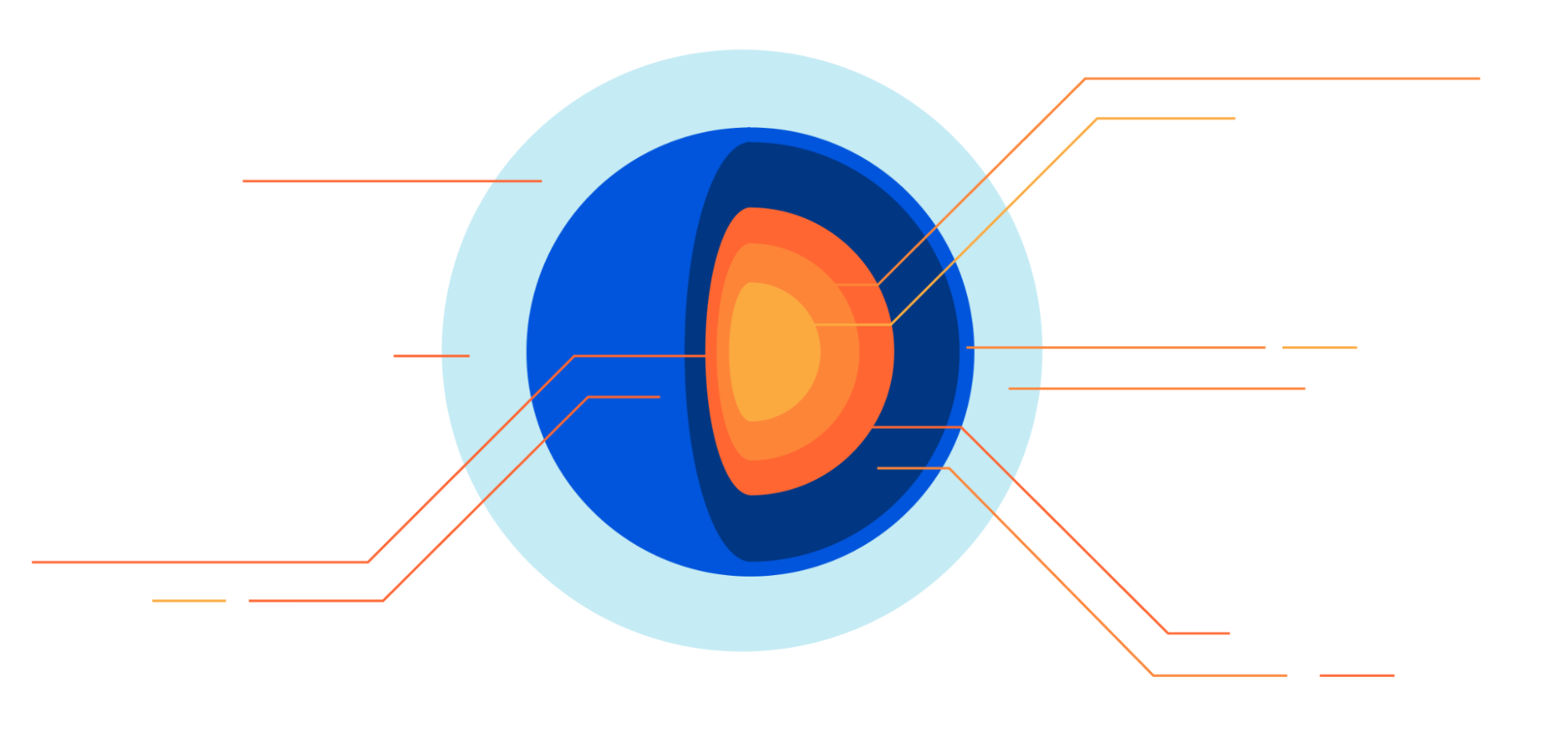

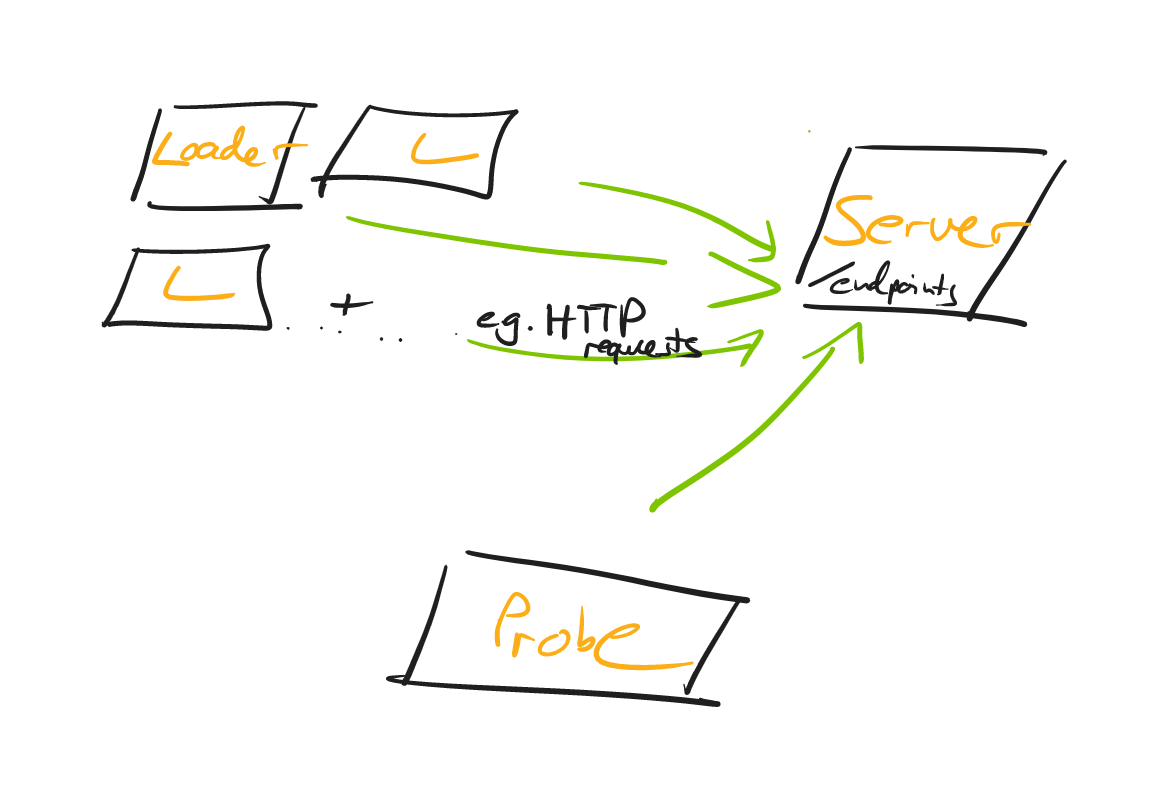

Discovering the Versatility of OpenEBS

OpenEBS provides storage for stateful applications running on Kubernetes, including dynamic local persistent volumes (like the Rancher local path provisioner) and replicated volumes using various "data engines". Much like Prometheus, which can be deployed on a Raspberry Pi to monitor the temperature of the beer or sourdough cultures in your basement but can also be scaled up to monitor hundreds of thousands of servers, OpenEBS can be used for simple projects and quick demos as well as for large clusters with sophisticated storage needs. OpenEBS supports many different "data engines", which can be a bit overwhelming at first, but these data engines are precisely what make OpenEBS so versatile. There are "local PV" engines that typically require little or no configuration and offer good performance, but exist on a single node and become unavailable if that node goes down. And there are replicated engines that offer resilience against node failures. Some of these replicated engines are super easy to set up, but the ones offering the best performance and features take a bit more work.
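
To make the local-versus-replicated choice concrete, here is a small Python sketch that builds a Kubernetes PersistentVolumeClaim and picks a StorageClass depending on whether the data must survive a node failure. It assumes that `openebs-hostpath` is the Local PV Hostpath StorageClass created by a default OpenEBS installation (check `kubectl get storageclass` on your own cluster), and `replicated-example` is a hypothetical placeholder for whatever class you define for one of the replicated engines.

```python
import json

# Assumed StorageClass names: "openebs-hostpath" is the class a default OpenEBS
# install typically creates for Local PV Hostpath; "replicated-example" is a
# hypothetical placeholder for a class backed by a replicated data engine.
LOCAL_PV_CLASS = "openebs-hostpath"
REPLICATED_CLASS = "replicated-example"


def pvc_manifest(name: str, size: str, survive_node_failure: bool) -> dict:
    """Build a PersistentVolumeClaim that targets a local or replicated engine."""
    storage_class = REPLICATED_CLASS if survive_node_failure else LOCAL_PV_CLASS
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteOnce: the volume is mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # A quick demo only needs node-local storage, so pick the local PV class.
    print(json.dumps(pvc_manifest("demo-data", "5Gi", survive_node_failure=False), indent=2))
```

Emitting JSON rather than YAML keeps the sketch free of third-party dependencies; `kubectl apply -f -` accepts either format on standard input.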

Cyber Resiliency: What It Is and How To Build It

Creating a cyber-resilience plan requires buy-in and input from all parts of the organization, including finance, IT, and operations. “It’s important that departments work together to classify information and risk, as well as to determine where to put controls and where responsibilities lie,” Piker says. “Once a plan has been agreed upon, a budget must be carved out to fund the actual implementation of the plan.” It’s important to engage the entire organization. “This is not just a technical issue under the control of a CIO or CISO,” Adkins says. “Your employees and vendors can play a critical role in spotting potential attacks to limit their impact.” Additionally, with the continuing trend toward remote work, employee cyber awareness and training are more important than ever. “This means formal policies, training, exercises simulation, and ongoing analysis of risks,” Adkins says. Adkins advises organizations to use tabletop exercises to test incident response practices and times. “It’s much easier to fix a flaw in your planning and processes when you’re not in the middle of a crisis,” he says.

How kitemarks are kicking off IoT regulation

Interestingly, all those we have seen apply for the scheme have chosen to go for Gold because they want to be seen to be adhering to the highest levels, and the scheme has been attracting some big international consumer brands. Smaller players that previously had difficulty understanding and navigating the red tape involved in the Code of Practice/ETSI have also valued the guidance and human touch of an assessor. The theory is that the product assurance scheme will spur compliance ahead of the PSTI, making the transition that much easier for the IoT industry, and the fact that many have aimed high suggests the approach is working. Manufacturers like the visibility conferred by the badge, which becomes a differentiator in the marketplace as well as helping to ensure future compliance. It’s for these reasons that many are watching the assurance rollout with interest. IoT kitemark schemes vary internationally, from labels that denote compliance with a set of cybersecurity criteria, to a single label attesting that basic security features are provided, to several tiers or even a label that lists cybersecurity information about the IoT device.

4 tips for leading remote IT teams

Traditional enterprises tend to have a “we will train our employees only as much as we have to” mentality. However, this approach makes employees more likely to seek other opportunities where they feel more valued and better prepared. Of course, there is always the risk of employees leaving with their newfound skills, but having undertrained employees can do more damage to your business. Set aside a generous annual budget for training and development, and help map out a personalized training path for each employee. This is critical to employee happiness and long-term business planning. These plans should also demonstrate growth opportunities that benefit each employee, not just the organization. In-person training is great, but don’t underestimate the value of virtual training. While a personal connection with instructors can often provide more knowledge and attention, the convenience of virtual training makes it a popular alternative these days. Encourage your employees to explore training opportunities wherever they’re located.

How Microcontainers Gain Against Large Containers

A microcontainer is a container optimized for efficiency. It still contains all the files needed to give the application the scalability, isolation, and parity that containers provide, but it keeps only an optimized set of files in the image. The important files left in a microcontainer include the shell, the package manager, and the standard C library. A related concept in the container field is "distroless", where all unused files are removed from the image entirely. It is worth emphasizing the distinction between the two: a microcontainer still contains some unused files, because they are required to keep the system complete. A microcontainer works the same way as a regular container and performs all the same functions; the only difference is that developers have trimmed its internal files, making it smaller. Because it contains an optimized set of files, it still includes all the files and dependencies the application needs to run, but in a lighter and smaller format.

Quote for the day:

"The first task of a leader is to keep hope alive." -- Joe Batten