How Platform Ops Teams Should Think About API Strategy

Rules and policies that control how APIs connect, both with third parties and
internally, are a critical foundation of modern apps. At a high level,
connectivity policies dictate the terms of engagement between APIs and their
consumers. At a more granular level, Platform Ops teams need to ensure that APIs
can meet service-level agreements and respond to requests quickly across a
distributed environment. At the same time, connectivity overlaps with security:
API connectivity rules are essential to ensure that data isn’t lost or leaked,
business logic is not abused, and brute-force account takeover attacks cannot
target APIs. This is the domain of the API gateway. Unfortunately, most API
gateways are designed primarily for north-south traffic. East-west traffic
policies and rules are equally critical because in modern cloud native
applications, there’s actually far more east-west traffic among internal APIs
and microservices than north-south traffic to and from external customers.
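
As one illustration of a connectivity rule that blunts brute-force account takeover, a gateway can cap the number of failed logins a client may make within a time window. The sketch below is a minimal example under stated assumptions: the class name `FailedLoginLimiter` and the parameters `max_failures` and `window_seconds` are hypothetical and do not correspond to any particular gateway product.

```python
from collections import defaultdict, deque


class FailedLoginLimiter:
    """Hypothetical sliding-window limiter; not a real gateway API."""

    def __init__(self, max_failures=5, window_seconds=60.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        # client id -> timestamps (in seconds) of recent failed logins
        self._failures = defaultdict(deque)

    def _prune(self, client_id, now):
        # Drop failures that have aged out of the window.
        failures = self._failures[client_id]
        while failures and now - failures[0] >= self.window_seconds:
            failures.popleft()
        return failures

    def record_failure(self, client_id, now):
        self._prune(client_id, now).append(now)

    def is_allowed(self, client_id, now):
        # Allow the request only while the client is under the failure cap.
        return len(self._prune(client_id, now)) < self.max_failures
```

The same check could in principle be applied to east-west calls between internal services as well as to north-south traffic; timestamps are passed in explicitly so the behaviour is easy to test.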

What will it take to stop fraud in the metaverse?

While some fraud in the metaverse can be expected to resemble the scams and
tricks of our ‘real-world’ society, other types of fraud must be quickly
understood if they are to be mitigated by metaverse makers. When Facebook’s
Metaverse first launched, investors rushed to pour billions of dollars into
buying acres of land. The so-called ‘virtual real estate’ sparked a land boom
which saw $501 million in sales in 2021. This year, that figure is expected to
grow to $1 billion. Selling land in the metaverse works like this: pieces of
code are partitioned to create individual ‘plots’ within certain metaverse
platforms. These are then made available to purchase as NFTs on the blockchain.
While we might have laughed when one buyer paid hundreds of thousands of dollars
to be Snoop Dogg’s neighbour in the metaverse, this is no laughing matter when
it comes to security. Money spent in the metaverse is real, and fraudsters are
out to steal it. One of the dangers of the metaverse is that, while the virtual
land and property aren’t real, their monetary value is. On purchase, they become
real assets linked to your account. Therefore, fraud doesn’t look like it used
to.

How IoT data is changing legacy industries – and the world around us

Massive, unstructured IoT data workloads, typically stored at the edge or
on-premises, require infrastructure that not only handles big data inflows
but also directs traffic to ensure that data gets where it needs to be without
disruption or downtime. This is no easy feat when it comes to data sets in the
petabyte and exabyte range, but this is the essential challenge: prioritizing
the real-time activation of data at scale. By building a foundation that
optimizes the capture, migration, and usage of IoT data, these companies can
unlock new business models and revenue streams that fundamentally alter their
effects on the world around us. ... As legacy companies start to embrace their
IoT data, cloud service providers should take notice. Cloud adoption, long
understood to be a priority among businesses looking to better understand
their consumers, will become increasingly central to the transformation of
traditional companies. The cloud and the services delivered around it will
serve as a highway for manufacturers or utilities to move, activate, and
monetize exabytes of data that are critical to businesses across
industries.

The security gaps that can be exposed by cybersecurity asset management

There is a plethora of tools being used to secure assets, including desktops,
laptops, servers, virtual machines, smartphones, and cloud instances. But
despite this, companies can struggle to identify which of their assets are
missing the relevant endpoint protection platform/endpoint detection and
response (EPP/EDR) agent defined by their security policy. They may have the
correct agent but fail to understand why its functionality has been disabled,
or they may be running out-of-date versions of the agent. The importance of
understanding which assets are missing the proper security tool coverage and
which are missing the tools’ functionality cannot be overstated. If a
company invests in security and then suffers a malware attack because it has
failed to deploy the endpoint agent, it is a waste of valuable resources.
Agent health and cyber hygiene depend on knowing which assets are not
protected, and this can be challenging. The admin console of an EPP/EDR can
provide information about which assets have had the agent installed, but it
does not necessarily prove that the agent is performing as it should.
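
The three gaps described above (agent missing, agent disabled, agent out of date) can be found by comparing an asset inventory against what the agent console reports. The following is a minimal sketch, assuming a hypothetical data shape: `inventory` is a collection of asset identifiers, and `agent_reports` maps an identifier to a dictionary with `enabled` and `version` keys. Real EPP/EDR consoles expose this information in their own formats.

```python
def find_coverage_gaps(inventory, agent_reports, minimum_version):
    """Classify inventoried assets by EPP/EDR agent coverage.

    inventory: iterable of asset ids (hypothetical format).
    agent_reports: dict of asset id -> {"enabled": bool, "version": tuple}.
    minimum_version: oldest acceptable agent version, e.g. (7, 0).
    """
    gaps = {"missing_agent": [], "disabled": [], "outdated": []}
    for asset in sorted(inventory):
        report = agent_reports.get(asset)
        if report is None:
            # The asset exists but the console has never seen an agent on it.
            gaps["missing_agent"].append(asset)
            continue
        if not report["enabled"]:
            gaps["disabled"].append(asset)
        if report["version"] < minimum_version:
            gaps["outdated"].append(asset)
    return gaps


inventory = {"laptop-1", "server-1", "vm-1", "phone-1"}
agent_reports = {
    "laptop-1": {"enabled": True, "version": (7, 2)},
    "server-1": {"enabled": False, "version": (7, 2)},
    "vm-1": {"enabled": True, "version": (6, 9)},
}
gaps = find_coverage_gaps(inventory, agent_reports, minimum_version=(7, 0))
```

Note that the comparison only works if the inventory comes from a source independent of the agent console; otherwise unmanaged assets never appear in either list.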

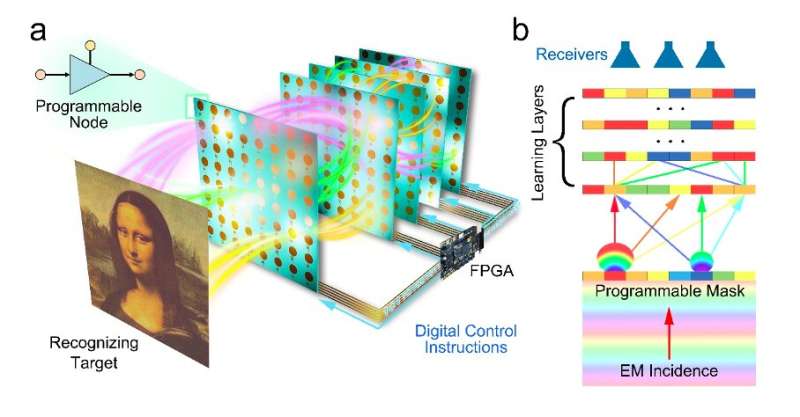

Google AI and UC Berkeley Researchers Introduce A Deep Learning Approach Called ‘PRIME’

To overcome this restriction, PRIME develops a robust prediction model that
isn’t easily tricked by adversarial cases. To architect simulators, this
model is simply optimized using any standard optimizer. More crucially, unlike
previous methods, PRIME can learn what not to construct by utilizing existing
datasets of infeasible accelerators. This is accomplished by supplementing the
learned model’s supervised training with extra loss terms that specifically
penalize the learned model’s value on infeasible accelerator designs and
adversarial cases during training. This method is similar to adversarial
training. One of the main advantages of a data-driven approach is that it
enables learning highly expressive and generalist optimization objective
models that generalize across target applications. Furthermore, these models
have the potential to be effective for new applications for which a designer
has never attempted to optimize accelerators. To train PRIME to generalize to
unseen applications, the trained model was modified to be conditioned on a
context vector that identifies the neural net application to be accelerated.
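
The idea of adding penalty terms to a supervised loss can be illustrated with a toy function. This is not the published PRIME objective: the weights `alpha` and `beta`, the function name, and the sign convention (a higher predicted score means a better design, so high predictions on infeasible or adversarial designs are penalized) are all assumptions made for illustration.

```python
def conservative_loss(model, labeled, infeasible, adversarial,
                      alpha=1.0, beta=1.0):
    """Toy loss: squared error plus penalties on unwanted high predictions.

    model: callable mapping a design to a predicted score (assumed: higher
        is better).
    labeled: list of (design, true_score) pairs.
    infeasible: designs known to be infeasible.
    adversarial: designs found by probing the model for over-optimistic
        predictions.
    """
    def mean(values):
        values = list(values)
        return sum(values) / len(values) if values else 0.0

    # Standard supervised term on designs with known scores.
    supervised = mean((model(x) - y) ** 2 for x, y in labeled)
    # Extra terms push predictions down on designs the optimizer should avoid.
    infeasible_penalty = mean(model(x) for x in infeasible)
    adversarial_penalty = mean(model(x) for x in adversarial)
    return supervised + alpha * infeasible_penalty + beta * adversarial_penalty
```

Minimizing a loss of this shape discourages the model from assigning attractive scores to infeasible or adversarial designs, which is the behaviour the paragraph above describes.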

Use zero trust to fight network technical debt

In a ZT environment, the network not only doesn’t trust a node new to it, but
it also doesn’t trust nodes that are already communicating across it. When a
node is first seen by a ZT network, the network will require that the node go
through some form of authentication and authorization check. Does it have a
valid certificate to prove its identity? Is it allowed to be connected where
it is based on that identity? Is it running valid software versions, defensive
tools, etc.? It must clear that hurdle before being allowed to communicate
across the network. In addition, the ZT network does not assume that a trust
relationship is permanent or context-free: once it is on the network, a node
must be authenticated and authorized for every network operation it attempts.
After all, it may have been compromised between one operation and the next, or
it may have begun acting aberrantly and had its authorizations stripped in the
preceding moments, or the user on that machine may have been fired.
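
The per-operation check described above can be sketched as a function that is called for every operation rather than once at connection time. This is a minimal illustration, assuming hypothetical names throughout (`Node`, `authorize`, `perform`, `revoked_ids`); a real ZT deployment would verify certificates cryptographically and query a policy engine rather than read boolean flags.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical record of what the network currently knows about a node.
    node_id: str
    certificate_valid: bool
    software_compliant: bool
    allowed_operations: set = field(default_factory=set)


def authorize(node, operation, revoked_ids):
    """Re-evaluate identity, posture, and policy for a single operation."""
    if node.node_id in revoked_ids:
        return False  # authorizations stripped, e.g. the user was terminated
    if not node.certificate_valid:
        return False  # identity cannot be proven
    if not node.software_compliant:
        return False  # software versions or defensive tools out of policy
    return operation in node.allowed_operations


def perform(node, operation, revoked_ids):
    # No cached trust: every operation goes through authorize() again.
    if not authorize(node, operation, revoked_ids):
        return "denied"
    return "allowed"
```

Because `authorize` runs on every call, a node that was trusted a moment ago is denied as soon as its identifier is added to `revoked_ids` or its posture changes.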

IT professionals wary of government campaign to limit end-to-end encryption

Many industry experts said they were worried about the possibility of
increased surveillance from governments, police and the technology companies
that run the online platforms. Other concerns were around the protection of
financial data from hackers if end-to-end encryption was undermined. There
were concerns that wider sharing of “secret keys”, or centralised management
of encryption processes, would significantly increase the risk of compromising
the confidentiality they are meant to preserve. BCS’s Mitchell said: “It’s odd
that so much focus has been on a magical backdoor when other investigative
tools aren’t being talked about. Alternatives should be looked at before
limiting the basic security that underpins everyone’s privacy and global free
speech.” Government and intelligence officials are advocating, among other
ways of monitoring encrypted material, technology known as client-side
scanning (CSS) that is capable of analysing text messages on phone handsets
and computers before they are sent by the user.

Hypernet Labs Scales Identity Verification and NFT Minting

A majority of popular NFT projects so far have been focused on profile
pictures and art projects, where early adopters have shown a willingness to
jump through hoops and bear the burden of high transaction fees on the
Ethereum Network. There’s growing enthusiasm for NFTs that serve more
utilitarian purposes, like unlocking bonus content for subscription services
or as a unique token to allow access to experiences and events. With the
release of Hypernet.Mint, Hypernet Labs is taking the same approach toward
simplifying the user experience that it applied to Hypernet.ID. Hypernet.Mint
offers lower-cost deployment by leveraging Layer 2 blockchains like Polygon
and Avalanche that don’t have the same high fee structure as the Ethereum
mainnet. The company also helps dApps create a minting strategy that aligns
with business goals, supporting either mass minting or minting that is based
on user onboarding flows that may acquire additional users over time. “We’re
working on a lot of onboarding flow for new types of users, which comes back
to ease of use for users,” Ravlich said.

How decision intelligence is helping organisations drive value from collected data

While AI can be a somewhat nebulous concept, decision intelligence is more
concrete. That’s because DI is outcome-focused: a decision intelligence
solution must deliver a tangible return on investment before it can be
classified as DI. A model for better stock management that gathers dust on a
data scientist’s computer isn’t DI. A fully productionised model that enables
a warehouse team to navigate the pick face efficiently and decisively, saving
time and capital expense, is decision intelligence. Since DI is
outcome-focused, it requires models to be built with an objective in mind and
so addresses many of the pain points for businesses that are currently
struggling to quantify value from their AI strategy. By working backwards from
an objective, businesses can build needed solutions and unlock value from AI
quicker. ... Global companies, including PepsiCo, KFC and ASOS, have already
emerged as early adopters of DI, using it to increase profitability and
sustainability, reduce capital requirements, and optimise business
operations.

Insights into the Emerging Prevalence of Software Vulnerabilities

Software quality is not always an indicator of secure software. A measure of
secure software is the number of vulnerabilities uncovered during testing and
after production deployment. Software vulnerabilities are a sub-category of
software bugs that threat actors often exploit to gain unauthorized access or
perform unauthorized actions on a computer system. Authorized users also
exploit software vulnerabilities, sometimes with malicious intent, targeting
one or more vulnerabilities known to exist on an unpatched system. These users
can also unintentionally exploit software vulnerabilities by inputting data
that is not validated correctly, subsequently compromising its integrity and
the reliability of those functions that use the data. Vulnerability exploits
target one or more of the three security pillars: Confidentiality, Integrity,
or Availability, commonly referred to as the CIA Triad. Confidentiality
entails protecting data from unauthorized disclosure; Integrity entails
protecting data from unauthorized modification and facilitates data
authenticity.

Quote for the day:

"To be a good leader, you don't have

to know what you're doing; you just have to act like you know what you're

doing." -- Jordan Carl Curtis