A CIO’s Guide To Hybrid Work

CIOs reimagining an organization’s digital strategy need to ensure that their

employees can communicate effectively and have complete access to resources

needed to perform their jobs. This means that employees do not receive just

their laptops and an email account but have full access to a complete tech stack

and set of solutions that empower them to interact with their peers and

customers. AI- and ML-powered solutions help enhance the employee experience by

saving time for people to connect with their teams and helping infuse mental

well-being along with a company’s values and purpose. The best way to understand

whether your employees are well supported to carry out their jobs is by gathering

feedback from them. Send out a simple form with both open and closed questions

on the potential communication gaps, remote work support and access to available

resources. Once you have all the information, analyze the gaps and improvement

opportunities to pick the right tools. Make sure that the tools you choose

integrate with your organization’s tech ecosystem while delivering value.

Whatever Happened to Business Supercomputers?

Supercomputers are primarily used in areas in which sizeable models are

developed to make predictions involving a vast number of measurements, notes

Francisco Webber, CEO at Cortical.io, a firm that specializes in extracting

value from unstructured documents. “The same algorithm is applied over and over

on many observational instances that can be computed in parallel,” says Webber,

“hence the acceleration potential when run on large numbers of CPUs.”

Supercomputer applications, he explains, can range from experiments in the Large

Hadron Collider, which can generate up to a petabyte of data per day, to

meteorology, where complex weather phenomena are broken down to the behavior of

myriads of particles. There's also a growing interest in graphics processing

unit (GPU)- and tensor processing unit (TPU)-based supercomputers. “These

machines may be well suited to certain artificial intelligence and machine

learning problems, such as training algorithms [and] analyzing large volumes of

image data,” Buchholz says.
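
As a rough illustration of the pattern Webber describes, where the same computation is applied to many independent observations, here is a minimal Python sketch. The `predict` function, its coefficients, and the sample observations are hypothetical placeholders, not anything from the article. The thread pool only shows the structure of the work; a genuinely CPU-bound workload would more likely use separate processes, many nodes, or an accelerator.

```python
from concurrent.futures import ThreadPoolExecutor


def predict(observation):
    """Hypothetical per-observation model: the same computation for every input."""
    temperature, pressure = observation
    return 0.5 * temperature + 0.1 * pressure


def run_parallel(observations, workers=4):
    """Apply predict to each observation independently, preserving input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(predict, observations))


# Made-up observations: (temperature, pressure) pairs.
observations = [(20.0, 1000.0), (22.0, 1010.0), (18.0, 990.0)]
results = run_parallel(observations)
```

Because no observation depends on another, the work can be split across as many workers as are available, which is where the acceleration potential comes from.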

The State of Hybrid Workforce Security 2021

The time is right for IT leaders to turn to their teams and gain a clear

understanding of what they actually have in place. While the initial response to

the pandemic was reactionary, now is a moment to assess an organization’s app

and security landscape and what is actually providing access to users no matter

where they are, whether they’re at home, in the branch, or anywhere in between.

Rationalizing the purpose and usage of solutions that are in place today

provides a real opportunity for consolidation—one that did not seriously exist

previously. Many organizations will be able to drive better outcomes around

security posture, reducing risk, and improving total cost of ownership.

Consolidating the number of disparate tools in use to provide secure user access

improves security posture consistency and reduces the number of policies that

have to be administered. Besides reducing needed multi-product training and

management effort, a platform approach drives better economies of scale,

resulting in a lower total cost of ownership. Net-net, consolidation delivers a

far more effective approach for security.

What is Web3, is it the new phase of the Internet and why are Elon Musk and Jack Dorsey against it?

In the Web3 world, search engines, marketplaces and social networks will have no

overriding overlord. So you can control your own data and have a single

personalised account where you could flit from your emails to online shopping

and social media, creating a public record of your activity on the blockchain

system in the process. A blockchain is a secure database that is operated by

users collectively and can be searched by anyone. People are also rewarded with

tokens for participating. The blockchain comes in the form of a shared ledger that uses

cryptography to secure information. This ledger takes the form of a series of

records or “blocks” that are each added onto the previous block in the chain,

hence the name. Each block contains a timestamp, data, and a hash. The hash is a

unique identifier for all the contents of the block, sort of like a digital

fingerprint. ... The idea of a decentralised internet may sound far-fetched but

big tech companies are already betting big on it and even assembling Web3

teams.
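
To make the block structure described above more concrete, here is a minimal Python sketch of a hash-linked ledger. It assumes SHA-256 as the hash function and assumes each block stores the previous block's hash in a `prev_hash` field. The excerpt states neither detail, and all names here are illustrative. Real blockchains add consensus, signatures, and networking on top of this.

```python
import hashlib
import json
import time


def block_hash(timestamp, data, prev_hash):
    """Return a SHA-256 fingerprint of the block's contents."""
    payload = json.dumps(
        {"timestamp": timestamp, "data": data, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def make_block(data, prev_hash, timestamp=None):
    """Build a block that links to the previous block through prev_hash."""
    if timestamp is None:
        timestamp = time.time()
    return {
        "timestamp": timestamp,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(timestamp, data, prev_hash),
    }


def is_valid(chain):
    """Check every fingerprint and every link to the preceding block."""
    for i, block in enumerate(chain):
        expected = block_hash(block["timestamp"], block["data"], block["prev_hash"])
        if block["hash"] != expected:
            return False
        if i > 0 and block["prev_hash"] != chain[i - 1]["hash"]:
            return False
    return True


# Hypothetical two-block chain; the all-zero prev_hash marks the first block.
genesis = make_block("genesis", prev_hash="0" * 64)
second = make_block("alice pays bob 5 tokens", prev_hash=genesis["hash"])
chain = [genesis, second]
```

Changing the data in any block changes its fingerprint, so `is_valid` fails for that block unless its hash, and every later block that links to it, is recomputed. This is the sense in which the hash acts like a digital fingerprint.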

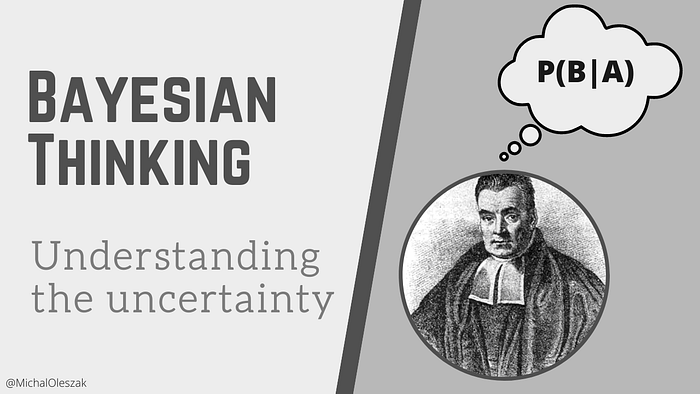

Will A.I. Guarantees Our Humane Futures?

Both private firms and governments, which would be adopting A.I.-driven

technologies, could be attracted to the opportunity of violating the

individual’s privacy and data security for their own selfish reasons. Large

private corporations, especially technology and social media companies such as

the big four of big tech (Google, Amazon, Apple, and

Facebook), are already sitting on massive quantities of user data, which

they’re looking to monetize, and such monetization of data in the name of

customized services and targeted advertisements could have a disastrous impact

on the user’s privacy and data security. The bigger threat will emerge when such

sensitive user data is misused for social engineering to alter the customer's

behavior and choices. ... Today, algorithms are so sophisticated that they can

predict the user's next action based on their private data analysis. It’s very

much possible to make use of such user data to nudge the individual discreetly

to alter their behavior and choices, and this has far-reaching implications for

the economy, for society, and for the security of a democratic

nation.

Protection against the worst consequences of a cyberattack

Businesses need an incident response plan that will clearly outline the steps to

be followed when a data breach occurs. By neglecting to do so, the organization

will become the low-hanging fruit that attackers go after. Even a rudimentary

plan is better than no plan at all, and those without one will suffer a much

higher impact. Teams need to identify and classify data to

understand what levels of protection are needed, a step that is regrettably

missed all the time. For instance, personally identifiable customer information

needs a different level of protection to the photos from the last Christmas

party. Teams also need to maintain cyber hygiene through regular patching, and

since 90% of breaches start with an email, it is very important to have email

protection, multi-factor authentication and endpoint protection to prevent any

lateral movement by cybercriminals. Perhaps my biggest piece of advice is to

have experienced personnel monitoring your environment 24/7, 365 days a year

(including Christmas).

Initial access brokers: How are IABs related to the rise in ransomware attacks?

Initial access brokers sell access to corporate networks to any person wanting

to buy it. Initially, IABs were selling company access to cybercriminals with

various interests: getting a foothold in a company to steal its intellectual

property or corporate secrets (cyberespionage), finding accounting data allowing

financial fraud or even just credit card numbers, adding corporate machines to

some botnets, using the access to send spam, destroying data, etc. There are

many cases for which buying access to a company can be interesting for a

fraudster, but that was before the ransomware era. ... Ransomware groups saw an

opportunity here to suddenly stop spending time on the initial compromise of

companies and to focus on the internal deployment of their ransomware and

sometimes the complete erasing of the companies' backup data. The cost for

access is negligible compared with the ransom that is demanded of the victims.

IAB activities became increasingly popular in the cybercriminal underground

forums and marketplaces.

8 Real Ways CIOs Can Drive Sustainability, Fight Climate Change

The concept of the circular economy has been around for a while, but it’s now

taking off in a big way. NTT’s Lombard says that it’s a key to getting to net

zero. This means establishing business and IT supply chains that focus on

optimizing the lifespan of equipment, moving toward zero-emission closed loop

recycling and curtailing e-waste. For example, there’s a growing second-hand

market for high-end gear, including hyperscale infrastructure. Companies like IT

Renew recertify these systems and place them under warranty. “Everyone wins,”

says Lucas Beran, principal analyst at consulting firm Dell’Oro Group. “The

original user gets two or three years of use; the buyer gets another three or

four years -- all while TCO and the carbon footprint drop.” ... Data centers are

expected to consume about 8% of the world's electricity by 2030. While

refreshing legacy servers, optimizing data, virtualizing workloads,

consolidating virtual machines and green hosting all deliver benefits, these

strategies aren’t enough to tackle climate change. Organizations must

fundamentally rethink data center design and function.

How Safety Became One of The Most Critical Smart City Applications

For cities, it can be challenging to ensure citizen and worker safety when

natural disasters occur. Incidents such as hurricanes, floods, fires and gas

leaks are unpredictable and often impossible to prevent. To put it in

perspective, most people have lived through some disaster, with 87% of consumers

saying they’ve been impacted by one in the last five years (not counting the

COVID pandemic). Safety will only become more critical over the next few decades

as natural disasters are becoming more frequent, intense and costly. Since 1970,

the number of disasters worldwide has more than quadrupled to around 400 a year.

Since 1998, natural disasters worldwide have killed more than 1.3 million people

and left another 4.4 billion injured, homeless, displaced, or in need of

emergency assistance. Smart sensors and advanced analytics can help communities

better predict, prepare for and respond to these emergency situations. For example,

IoT sensors, such as pole tilt, electric distribution line, leak detection and

air quality sensors, can be leveraged to mitigate risk and minimize damage.

Avoiding Technical Bankruptcy: a Whole-Organization Perspective on Technical Debt

It is regrettable that the meaning of the technical debt metaphor has been diluted in this way, but in language as in life in general, pragmatics trump intentions. This is where we are: what counts as "technical debt" is largely just the by-product of normal software development. Of course, no-one wants code problems to accumulate in this way, so the question becomes: why do we seem to incur so much inadvertent technical debt? What is it about the way we do software development that leads to this unwanted result? These questions are important, since if we can go into technical debt, then it follows that we can become technically insolvent and go technically bankrupt. In fact, this is exactly what seems to be happening to many software development efforts. Ward Cunningham notes that "entire engineering organizations can be brought to a stand-still under the debt load of an unconsolidated implementation". That stand-still is technical bankruptcy.

Quote for the day:

“When you take risks you learn that

there will be times when you succeed and there will be times when you fail,

and both are equally important.” -- Ellen DeGeneres
