New Passwordless Verification API Uses SIM Security for Zero Trust Remote Access

On the spectrum between passwords and biometrics lies the possession factor,
most commonly the mobile phone. That's how SMS OTP and authenticator apps came
about, but these come with fraud risk and usability issues, and are no longer
the best solution. The simpler, stronger solution to verification has been with
us all along: using the strong security of the SIM card that is in every mobile
phone. Mobile networks authenticate customers all the time to allow calls and
data. The SIM card uses advanced cryptographic security, and is an established
form of real-time verification that doesn't need any separate apps or hardware
tokens. However, the real magic of SIM-based authentication is that it requires
no user action. It's there already. Now, APIs by tru.ID open up SIM-based
network authentication for developers to build frictionless, yet secure
verification experiences. Any concerns over privacy are alleviated by the fact
that tru.ID does not process personally identifiable information between the
network and the APIs. It's purely a URL-based lookup.

Cognitive AI meet IoT: A Match Made in Heaven

The progressive trends of mobile edge computing and cloudlets are diffusing
edge-based intelligence in connected and more controlled enterprise systems.
However, within the diversity of pervasive cyber-physical ecosystems, the
autonomy of the discrete edge nodes requires gains in operational intelligence
with minimum supervision. The emerging innovation in cognitive computational
intelligence is revealing great potential to introduce contemporary soft
computing-based algorithms, architectural rethinking, and progressive system
design for the next generation of IoT systems. Cognitive IoT systems break down
the strict partition between the silos and interdependencies of software and
hardware subsystems. The edge-native AI component is flexible enough to
recognize changes in the physical environment and dynamically adjust the
analytical outcomes in real time. As a result, human-to-machine and
machine-to-machine interaction becomes more dynamic, interoperable, and
contextual to the time and scope of any operation.

How the pandemic delivered the future of corporate cybersecurity faster

At some point it becomes untenable and inefficient to manage all these
separate solutions. That point gets closer every day as teams have to deal
with the complexities and identity management challenges of remote work.
Siloed solutions also mean IT staff must monitor several different consoles
and may not connect the dots when incidents are flagged on separate platforms.
They also require complex and costly integration projects to get the
functionality needed. And even then, they’ll likely still require manual
oversight. Moving toward all-in-one security solutions can help replicate the
sense of cohesion that once existed in on-premises network security, along with
new efficiencies. All-in-one solutions can share data across the different
components, leading to better and more efficient function. And by adding new
modules instead of products when new tools are needed, you eliminate the
expense and complications of integration. Companies and individuals have
already gotten used to paying for things like data, cloud storage and web
hosting based on how much they use them.

OnePercent ransomware group hits companies via IcedID banking Trojan

The OnePercent group's ransom note directs victims to a website hosted on
the Tor anonymity network where they can see the ransom amount and contact
the attackers via a live chat feature. The note also includes a Bitcoin
address where the ransom must be paid. If victims do not pay or contact the
attackers within one week, the group attempts to contact them via phone
calls and emails sent from ProtonMail addresses. "The actors will
persistently demand to speak with a victim company’s designated negotiator
or otherwise threaten to publish the stolen data," the FBI said. "When a
victim company does not respond, the actors send subsequent threats to
publish the victim company’s stolen data via the same ProtonMail email
address." The extortion has different levels. If the victim does not agree
to pay the ransom quickly, the group threatens to release a portion of the
data publicly and if the ransom is not paid even after this, the attackers
threaten to sell the data to the REvil/Sodinokibi group to be auctioned off.
Aside from the REvil connection, OnePercent might have been tied to other
ransomware-as-a-service (RaaS) operations in the past too.

Why Agile Transformations Fail In The Corporate Environment

One key reason an Agile transformation fails is that all the focus is
concentrated in just one of the three circles above. It is imperative that
we consider these three circles like a Venn diagram and regularly monitor
our operating presence. Ideally, we want to operate in all three circles,
but it is hard to find balance. Suppose we are working in the mindset and
framework circles and trying to build a perfect product with perfect
architecture. Spending too much time making things perfect, we are likely to
miss the market window or run into financial difficulties. Similarly, if we
operate in the mindset and business agility circles, for example, it could
be great for the short term to get a prototype to market quickly, but we
will be drowning in technical debt in the long run. Or, imagine that we
operate in the framework and business agility circles to build a perfect
hotel for our customers; by not considering the mindset circle, we could miss
the fact that they really need a bed-and-breakfast, not a hotel. All three
perspectives are essential, so to maximize efficiency, we need to keep
finding the balance.

How the tech sector can provide opportunities and address skills gaps in young people

After all, as far as technology is concerned, none of us are beyond the need
for further training and development. The McKinsey Global Institute has
recently suggested that as many as 357 million people will need to acquire
new skills in the next decade due to the predicted rise of artificial
intelligence and automation, skills that few, even in tech-adjacent
industries, currently possess. Keeping this kind of projection firmly in
mind helps us to remember that the acquisition of new and essential skills
is an ongoing process for everyone. As such, employers should not discount
those potential candidates who don’t necessarily come from a tech-heavy
background. With robust on-the-job training processes and a supportive,
inclusive approach towards IT talent, young workers who perhaps missed out
on IT fundamentals at school or who chose to focus, for example, on
humanities-based university courses can absolutely receive the same
attention and prospects as those from a tech-heavy background. A recent
government report on aspects of the skills gap has already uncovered an
uplifting trend in this direction, with 57% of employers confident that they
can find resources to train their employees.

A closer look at two newly announced Intel chips

Intel’s upcoming next-generation Xeon is codenamed Sapphire Rapids and
promises a radical new design and gains in performance. One of its key
differentiators is its modular SoC design. The chip has multiple tiles that
appear to the system as a monolithic CPU, and all of the tiles communicate
with each other, so every thread has full access to all resources on all
tiles. In a way it’s similar to the chiplet design AMD uses in its Epyc
processor. Breaking the monolithic chip up into smaller pieces makes it easier
to manufacture. In addition to faster/wider cores and interconnects, Sapphire
Rapids has a new feature called Last Level Cache (LLC) that features up to
100 MB of cache that can be shared across all cores, with up to four memory
controllers and eight memory channels of DDR5 memory, next-gen Optane
Persistent Memory, and/or High Bandwidth Memory (HBM). Sapphire Rapids also
offers Intel Ultra Path Interconnect 2.0 (UPI), a CPU interconnect used for
multi-socket communication. UPI 2.0 features four UPI links per processor with
16 GT/s of throughput and supports up to eight sockets.

How to encourage healthy conflict: 8 tips from CIOs

We unpack ideas and differences, seeking to understand each other’s points of
view and the experiential lens through which the issue(s) are being evaluated,
and then work collaboratively in the spirit of best serving our customers
(external and internal) to reach the best decision and path to resolution. In
the end, and most importantly, we are a team; so, when we work through the
conflict and land on a course of action or decision, we all align, rally, and
go into full-on execution mode as one team, with one agenda. Recognize that
each team member brings a unique set of experiences, ideas, and beliefs to
every conversation and decision. As a leader, you need to be acutely aware of
when and how team members engage in conflict and the behaviors that precede
and follow such discussions. Encourage team members to participate and share
their ideas; candidly and directly elicit their honest and important views on
the matters, even when the topics may be challenging and the conflict intense,
and especially if the team member may be quieter or prone to avoid the heat
of the debate.

The Office Of Strategy In the Age Of Agility

Agile methods such as scrum, kanban and lean development have gone beyond the
realm of product design and development to other organizational functions, such
as customer engagement, employee motivation, and execution amid uncertainty.
From the original Agile Manifesto, we know the following principles: 1)
people over process and tools, 2) working prototypes over excessive
documentation, 3) responding to change over following a plan, and 4) customer
collaboration over rigid contracts. However, in the realm of strategy, agile is
often confused with adhocism and assumed to lead to more chaos than value.
But as Jeff Bezos instructs us, when making strategy, one must focus on the long
term, the things that will remain largely constant over time. In the case of
Amazon, the strategy is three-fold: customer obsession, invention, and being
patient; for customers, what matters is greater speed, wider selection,
and lower cost. With so few strategic priorities, how does the company manage to
remain relevant?

Agile methods such as scrum, kanban and lean development have gone beyond the

realm of product design and development to other organizational functions, such

as customer engagement, employee motivation, and execution amid uncertainty.

From the earliest Agile Manifesto, what we know are the following principles: 1)

people over process and tools, 2) working prototypes over excessive

documentation, 3) respond to change rather than follow a plan, and 4) customer

collaboration over rigid contracts. However, in the realm of strategy, agile is

often confused with adhocism, and that it would lead to more chaos than value.

But as Jeff Bezos instructs us, when making strategy, one must focus on the long

term, the things that will remain largely constant over time. In the case of

Amazon, the strategy is three-fold: customer obsession, invention, and being

patient, and that for customers what matters is greater speed, wider selection,

and lower cost. With so few strategic priorities, how does the company manage to

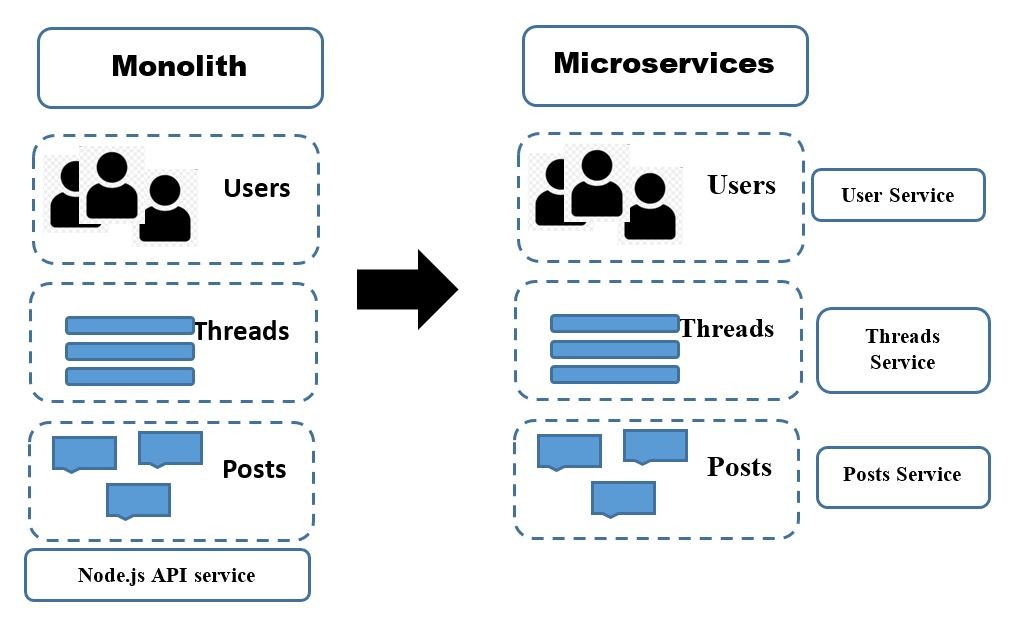

remain relevant? Microservice Architecture and Agile Teams

As services can be worked on in parallel, a team can bring more developers to
bear on a problem without them getting in each other’s way. It can also be
simpler for those developers to understand their part of the system, as they can
focus their concern on just one part of it. Process isolation also makes it
feasible for us to vary the technology choices a team makes, perhaps mixing
different programming languages, programming styles, deployment platforms, or
databases to discover the perfect blend. Microservice architecture gives the
team more concrete boundaries in a system around which ownership lines can be
drawn, allowing the team much more flexibility in how it addresses this
problem. The microservice architecture enables each service to be developed
independently by a team that is concentrated on that service. As a result, it
makes continuous deployment possible for complex applications. The
microservice architecture also enables each service to be scaled individually.
It has been observed that when a team or organization adopts microservice
architecture, the real gain is the built-in agility the organization gets.
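
The point about scaling each service individually can be illustrated with a
small sketch. It is not taken from the article: the Service class, the service
names, the languages, and the capacity figures are all hypothetical, chosen
only to show one service's replica count changing while another's stays put.

```python
from dataclasses import dataclass


@dataclass
class Service:
    """A hypothetical, independently deployable service (illustrative only)."""
    name: str
    language: str              # each team may pick its own stack
    requests_per_replica: int  # assumed capacity of a single replica
    replicas: int = 1

    def scale_for(self, load: int) -> int:
        """Set this service's replica count for a given load in requests/s."""
        # Ceiling division; always keep at least one replica running.
        self.replicas = max(1, -(-load // self.requests_per_replica))
        return self.replicas


catalog = Service("catalog", "Go", requests_per_replica=500)
checkout = Service("checkout", "Kotlin", requests_per_replica=100)

# A spike in checkout traffic scales only the checkout service;
# the catalog service is left untouched.
checkout.scale_for(950)
print(catalog.replicas, checkout.replicas)
```

In a monolith the same spike would force the whole application to be
replicated, which is the contrast the excerpt is drawing.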

Quote for the day:

"When your values are clear to you, making decisions becomes easier." -- Roy E. Disney
