Cybersecurity professionals: Positive reinforcement works wonders with users

Sai Venkataraman, CEO of SecurityAdvisor, in his Help Net Security article "The
power of positive reinforcement in combating cybercriminals," said he wants
management to rethink its approach and use positive reinforcement instead. "It's
important to recognize that cognitive bias is part of the human brain's makeup
and functionality," Venkataraman said in his introduction. "While these
subconscious mental shortcuts make it difficult to change behaviors, it's not
impossible." Cognitive bias is hands down the culprit. Charlotte Ruhl, in her
Simply Psychology article "What Is Cognitive Bias?", defined cognitive bias as:
"A subconscious error in thinking that leads you to misinterpret information
from the world around you and affects the rationality and accuracy of decisions
and judgments." "Biases are unconscious and automatic processes designed to make
decision-making quicker and more efficient. Cognitive biases can be caused by a
number of different things, such as heuristics (mental shortcuts), social
pressures and emotions."

Hackers are using CAPTCHA techniques to scam email users

Researchers found that quantity continues to beat quality in email attacks.
Proofpoint found that the highest number of clicks came from a threat actor
linked to the Emotet botnet. “This total reflects their effectiveness and the
sheer volume of emails they sent in each campaign,” the report notes. The group,
whose infrastructure was knocked out by international law enforcement earlier
this year, has gone virtually dormant since. Cybersecurity researchers also say
that companies shouldn’t underestimate basic cyber hygiene in combatting
ransomware. Hackers are increasingly using email to distribute first-stage
malware that later downloads ransomware, rather than delivering the ransomware
itself by email. In 2020, Proofpoint detected 48 million emails that contained
malware that was used to launch ransomware. Top threats detected by Proofpoint
included names like The Trick, Dridex and Qbot. Concerns over ransomware have
only skyrocketed in 2021 after a series of high-profile attacks against critical
industries in the United States.

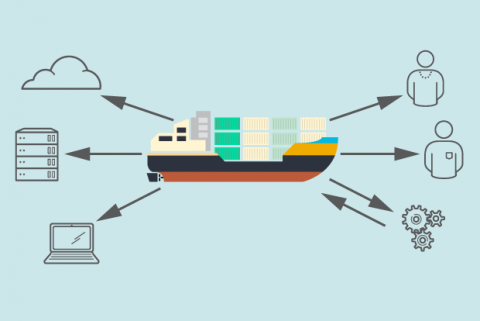

To Protect Consumer Data, Don’t Do Everything on the Cloud

Restricting private data collection and processing to the edge is not without
its downsides. Companies will not have all their consumer data available to go
back and re-run new types of analyses when business objectives change. However,
this is the exact situation we advocate against to protect consumer privacy.
Information and privacy operate in a tradeoff: a unit increase in privacy
requires some loss of information. By prioritizing data utility with purposeful
insights, edge computing reduces the quantity of information from a “data lake”
to the data sufficient to make the same business decision. This emphasis on
finding the most useful data over keeping heaps of raw information increases
consumer privacy. The design choices that support this approach (sufficiency,
aggregation, and alteration) apply to structured data, such as names, emails or
number of units sold, and to unstructured data, such as images, videos, audio,
and text. To illustrate, let us assume the retailer in our wine-tasting example
receives consumer input via video, audio, and text.
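
As a rough illustration of the sufficiency and aggregation ideas above, the following Python sketch summarizes feedback on the edge device and forwards only the summary. The record fields, the `summarize_on_edge` function, and the sample data are all hypothetical and not taken from the article; alteration (for example, masking or perturbing data) is not shown.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw records as they might exist on an in-store (edge) device.
raw_feedback = [
    {"name": "Ana", "email": "ana@example.com", "wine": "Merlot", "rating": 4},
    {"name": "Ben", "email": "ben@example.com", "wine": "Merlot", "rating": 5},
    {"name": "Cy", "email": "cy@example.com", "wine": "Riesling", "rating": 3},
]


def summarize_on_edge(records):
    """Return per-wine response counts and mean ratings, with no identifiers."""
    ratings_by_wine = defaultdict(list)
    for record in records:
        # Sufficiency: read only the two fields the business decision needs.
        ratings_by_wine[record["wine"]].append(record["rating"])
    # Aggregation: one summary row per wine instead of one row per person.
    return {
        wine: {"responses": len(ratings), "mean_rating": mean(ratings)}
        for wine, ratings in ratings_by_wine.items()
    }


# Only this summary would be sent to the cloud; names and emails stay local.
cloud_payload = summarize_on_edge(raw_feedback)
print(cloud_payload)
```

The tradeoff described above is visible here: the retailer can still decide which wine to stock, but cannot later re-run an analysis that needs individual customers.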

Do You Have the Empathy to Make the Move to Architect?

Solution and API architects may focus on different levels of the stack, but they
perform very similar roles. Usually, architect is a more senior but
non-executive role. An architect typically makes high-level design decisions,
enforces technical standards and looks to guide teams with a mix of technical
and people skills. “Being an architect takes social skills built on the
foundation of the technical,” said Keith Casey, an independent contractor, API
consultant and author of “The API Design Book.” “No matter how good at the
socials you are, you need to have the technical. Have you built a system like
this? Have you shipped a system like this? You can read cookbooks all day, until
you’ve put that in the oven, you haven’t cooked. You actually have to succeed
and fail a few times before you can really offer advice to everyone. Social has
to come after the technical foundation.” While a developer likes to dig deep
into the weeds of a particular product or language, an architect is ready to
broaden their understanding of enterprise architecture and how it fits into the
business as a whole.

California's privacy law raises risks of legal action and fines over data collection

The upcoming California Privacy Rights Act (CPRA) is considered a pioneer in
data privacy, and it strengthens the current California Consumer Privacy Act
with stricter rules. Enforcement is also beefed up with the creation of the
California Privacy Protection Agency (CPPA), plus the ability of individual
Californians to file suits against companies for non-compliance. The law was
passed in November 2020 and applies to any company of sufficient size that does
business in California, which includes online sales and does not require a
physical location. California residents can ask a company how their personal
data has been used and for what purpose, and they can request that their
personal data not be sold or demand that it be deleted, including any data that
has been sold to third parties. Each company must also state whether artificial
intelligence was applied to any of a resident's personal data and, if it was,
what the logic behind the AI was. This is essentially asking companies to reveal
how their algorithms rank the data.

How to Explain Complex Technology Issues to Business Leaders

Business leaders generally trust their tech counterparts to successfully address
and resolve all the necessary technical details. What colleagues most want is
assurance that whatever technology IT is proposing delivers benefits that
outweigh capital and operating expenses. "We need to rise above the technology
itself to explain the impact it will have," Kelker said. Jerry Kurtz, executive
vice president of insights and data at IT advisory firm Capgemini North America,
also stressed the importance of focusing on the project's potential business
outcome and value. "Rather than getting into the details of the technology,
challenge, or solution in technical terms, showcase the outcomes the solution
can bring and how they will impact the business as a whole," he explained. "Once
this has been accomplished, it's time to develop a roadmap to reach the agreed
upon target state." Using analogies rooted in shared experiences is a good way
to find common ground with business leaders, advised Mike Bechtel, chief
futurist at business and IT advisory firm Deloitte Consulting.

How universities can facilitate blended learning through smart campus infrastructure

Smart campus infrastructure not only provides a reliable solution to short-term
connectivity issues, but also offers long-term scalability that can continuously
be tweaked, upgraded and expanded to fit the institution’s needs as they shift.
The ideal scenario would be to have low latency on a high-capacity network,
creating breathing room so that any significant increase in usage wouldn’t cause
any issues. Alternative network providers (AltNets) can overprovision to ensure
that this scenario plays out ideally for the university. By providing much more
bandwidth than is needed, bottlenecks can be removed and users can enjoy a
seamless connectivity experience. As broadband demand inevitably grows over
time, optic kit can be upgraded in line with what is required. ... With Wi-Fi 6
deployed across the entire campus, the technology can take universities to new
heights. Reliable, high-speed connections implemented across the university
would enable the student experience to take on a new form through third-party
deployments. Suddenly, smart homes can be utilised effectively across the entire
campus.

Recover from ransomware: Why the cloud is the way to go

Recovery in the cloud can happen before you ever need it. It starts with
automatically and periodically performing an incremental restore of your
computing environment to an IaaS vendor. This means your entire environment,
including backups of both structured and unstructured data, is already restored
before it’s needed. Yes, you will lose some amount of data depending on the
window between the last restore and the ransomware attack, so you will need to
decide up front how often you execute the pre-restore process to minimize the
loss. You also need to agree on what amount of data loss is acceptable, which is
officially referred to as your recovery point objective (RPO). Technically, this
type of recovery doesn’t require the cloud, but using the cloud makes it
financially feasible for most environments. Doing it with a physical data center
requires the costly route of paying for the data center before you need it. With
the cloud you pay only for the storage associated with your pre-restored images.
Cloud-friendly backup and DR products and services can proactively restore your
entire environment to the cloud of your choice: once a day, once an hour, or
continuously.
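
The relationship between the pre-restore schedule and the RPO can be checked with simple arithmetic. The Python sketch below is a simplification that assumes a pre-restore completes instantly; the function names, the 4-hour RPO, and the timestamps are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta


def data_loss_window(last_restore: datetime, attack_time: datetime) -> timedelta:
    """Changes made after the last pre-restore are the ones that would be lost."""
    return attack_time - last_restore


def meets_rpo(restore_interval: timedelta, rpo: timedelta) -> bool:
    """Worst case, the attack lands just before the next pre-restore runs,
    so the maximum loss equals the full interval between pre-restores."""
    return restore_interval <= rpo


rpo = timedelta(hours=4)      # hypothetical agreed RPO
hourly = timedelta(hours=1)   # pre-restore once an hour
daily = timedelta(days=1)     # pre-restore once a day

print(meets_rpo(hourly, rpo))  # hourly pre-restores fit within a 4-hour RPO
print(meets_rpo(daily, rpo))   # daily pre-restores can lose up to 24 hours

# Loss for one specific (made-up) incident: last restore 09:00, attack 09:40.
loss = data_loss_window(datetime(2021, 8, 1, 9, 0), datetime(2021, 8, 1, 9, 40))
print(loss)
```

In practice the time a restore takes to finish would add to the worst-case window, so the interval should sit comfortably below the RPO rather than exactly at it.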

Hybrid work model: 5 advantages

Organizations with the biggest productivity increases during the pandemic have
supported and encouraged “small moments of engagement” among their employees,
according to McKinsey. These small moments are where coaching, idea sharing,
mentoring, and collaborative work happen. This productivity boost stems from
training managers to reimagine processes and rethink how employees can thrive at
work. Autonomy is the key to employee satisfaction: If you provide full autonomy
and decision-making on how, where, and when your team members work, employee
satisfaction will skyrocket. Autonomy is important for on-site workers, too.
Employees who return to the office after more than a year of setting their own
schedules will need to feel that they are trusted to get work done without a
manager standing by. At our company, mutual appreciation and positive
assumptions are guiding principles. When we don’t see each other every day, it’s
easy to make assumptions about other employees; we keep these assumptions
positive, trusting that everyone is doing their best and making responsible
decisions.

A New Approach to Securing Authentication Systems' Core Secrets

With SAML, user management is shifted from the service provider (SP) to an
identity provider (IdP), and authentication and directory are decoupled from the
service. Instead of worrying about dozens of different apps and their
authentication measures, admins configure the IdP to verify all employees'
identities. Trust between the SP and the IdP rests on a key pair: The IdP signs
with the private key, and the SP verifies with the public key. A Golden SAML
attack occurs when the attackers steal a private key from the identity provider
and become a "rogue IdP," Be'ery said. This allows them to generate arbitrary
SAML access tokens offline, within the attackers' environment. Doing this would
let attackers access a system as any user, in any role, while bypassing security
policies and MFA. They could also slip past access monitoring, if access is only
monitored by the identity provider, Be'ery said. The security community saw this
technique in the SolarWinds attack, which also marked the first publicly known
use of Golden SAML in the wild, he noted.
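
To make the sign-and-verify relationship concrete, here is a deliberately toy Python sketch. It uses unpadded textbook RSA with a small key so that it runs on the standard library alone; real SAML deployments sign XML assertions with properly sized keys and certificates, and none of the names below (`idp_sign`, `sp_verify`, the assertion strings) come from the article.

```python
import hashlib

# Toy RSA key built from two known Mersenne primes. It is far too small and
# has no padding, so it must never be used for real signatures; it only
# illustrates who can sign (private key holder) and who can verify.
P = 2**127 - 1
Q = 2**89 - 1
N = P * Q
E = 65537                            # public exponent, known to the SP
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent, held by the IdP


def digest(assertion: str) -> int:
    """Hash the assertion text and reduce it into the toy key's range."""
    raw = hashlib.sha256(assertion.encode()).digest()
    return int.from_bytes(raw, "big") % N


def idp_sign(assertion: str, private_exponent: int) -> int:
    """Sign an assertion; anyone holding the private exponent can do this."""
    return pow(digest(assertion), private_exponent, N)


def sp_verify(assertion: str, signature: int) -> bool:
    """The SP checks the signature using only the public values (E, N)."""
    return pow(signature, E, N) == digest(assertion)


# Normal flow: the IdP signs, the SP verifies.
legit = "user=alice;role=employee"
legit_sig = idp_sign(legit, D)
print(sp_verify(legit, legit_sig))

# Tampering without the key fails: the old signature does not fit new content.
print(sp_verify("user=alice;role=admin", legit_sig))

# Golden SAML idea: with a stolen private key, any assertion verifies.
stolen_key = D
forged = "user=ceo;role=admin"
print(sp_verify(forged, idp_sign(forged, stolen_key)))
```

The last check is the point of the attack described above: the SP cannot tell a forged assertion from a genuine one, because verification only proves that the signer held the private key.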

Quote for the day:

"Great things are not something accidental, but must certainly be willed." --
Vincent van Gogh