The computer will see you now: is your therapy session about to be automated?

AI research has not improved significantly since that review, she argues. “Based

on the available evidence, I’m not optimistic.” Yet she added that a

personalized approach could work better. Rather than assuming a bedrock of

emotional states that are universally recognizable, algorithms could be trained

on a single person over many sessions, including their facial expressions, their

voice and physiological measures like their heart rate, while accounting for the

context of those data. Then you’d have better chances of developing reliable AI

for that person, Barrett says. Even if such AI systems can eventually be made more

effective, ethical issues still have to be addressed. In a newly published

paper, Torous, Depp and others argue that, while AI has the potential to help

identify mental problems more objectively, and it could even empower patients in

their own treatment, first it must address issues like bias. During the training

of some AI programs, when they are fed huge databases of personal information so

they can learn to discern patterns in them, white people, men, higher-income

people, or younger people are often overrepresented. As a result they might

misinterpret unique facial features or a rare dialect.
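
The overrepresentation concern can be made concrete by measuring each group's share of a training set before any model is trained. The following is a minimal sketch in Python, not a method from the paper: the `age_group` field, the toy records, and the 0.5 threshold are all assumptions chosen for illustration.

```python
from collections import Counter


def group_shares(records, key):
    """Return each group's share of the records, keyed by group label."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def overrepresented(shares, threshold):
    """List the groups whose share exceeds the (assumed) threshold."""
    return sorted(group for group, share in shares.items() if share > threshold)


# Hypothetical toy data: ten records skewed toward younger people.
records = (
    [{"age_group": "18-29"}] * 6
    + [{"age_group": "30-59"}] * 3
    + [{"age_group": "60+"}] * 1
)

shares = group_shares(records, "age_group")
flagged = overrepresented(shares, threshold=0.5)
print(shares)
print(flagged)
```

A check like this only reveals imbalance in attributes that were recorded. It says nothing about whether the resulting model treats each group fairly.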

WhatsApp Just Gave 2 Billion Users A Reason To Stay

The specter of regulation continues to hang over Facebook and its Big Tech

rivals, but this has raised a different regulatory question: At what point does

a privately held communication platform become a utility? Social media can be

turned on or off with little consequence. But replacing regulated mobile

networks with a multinational “over the top” that is used by almost everyone is

a different deal. WhatsApp’s biggest victory—the reason it’s now on almost all

our phones—was its displacement of SMS as the world’s most popular, most

ubiquitous, messaging tool. The nearest equivalent is Apple’s iMessage in some

markets, especially the U.S. But iMessage isn’t a separate platform from core,

regulated messaging. And, more to the point, it’s owned by a product giant not a

data-based advertising giant. WhatsApp’s numbers are interesting. While its

penetration in Europe is strong, in the developing world it’s staggering. In

Kenya, South Africa, Nigeria, Argentina, Malaysia, Colombia and Brazil it has

secured more than 90% of total adult internet users. In most countries, WhatsApp

is now the market leader. Think that through when next reading about WhatsApp’s

shift into payments and shopping.

Insurance to Mitigate the Risk of AI Systems Coming into View

It’s not clear that AI software suppliers guarantee the accuracy of their

algorithms, or that insurance companies cover the risks associated with AI

products. Having insurance against AI risk could smooth the path to AI adoption.

Among manufacturers trying out AI, many are stuck in “pilot purgatory”–not yet

successfully scaling digital transformation. “Greater support for businesses

looking to implement new solutions could help to improve the adoption rate,”

Yoskovitch stated. Insurers could help enterprises at these three stages of AI

adoption, Yoskovitch suggests ... AI failure models are an evolving area of

research. “It is not possible to provide prescriptive technological

mitigations,” the authors stated. Cyber insurance comes the closest, but is not

a perfect fit. If bodily harm occurs because of an AI failure, such as if the

image recognition system on an autonomous car fails to perform in snow or frost

conditions, cyber insurance is not likely to cover the damage, although it may

cover the losses from the interruption of business that results, the authors

suggest.

‘Back to human’: Why HR leaders want to focus on people again

Delivering a great employee experience relies on the same principles used in

design thinking for products and services. Like skilled designers, CHROs are

starting with the customer and working backward. Where there is a customer

journey with its associated pain points, so there are career journeys in every

big organization, each with its own identifiable moments of frustration. One

thing HR leaders can do along these lines is to harness the energy and insight

of their colleagues to increase engagement among new hires and current

employees. Cisco, for instance, launched a 24-hour “breakathon” with more than

800 employees that used design-thinking principles to identify the moments that

matter most in the interactions between HR and employees. This session led to a

complete redesign of onboarding: YouBelong@Cisco, a full prototype solution that

targeted common pain points for people starting careers at the company. HR

leaders want to use these technologies to help customize and track the needs of

each individual on the employee journey, whether that means advancing

educational efforts, helping customers and clients to solve problems, supporting

the development of colleagues, or simply being part of a great team.

Plea To ML Researchers: Give Data Curation A Chance

Many experts believe data must be used in their natural form to give an

unvarnished output. While there is no problem with this argument, Rogers said,

it needs more elaboration. “In that case, the “natural” distribution may not

even be what we want: e.g. if the goal is a question answering system, then the

“natural” distribution of questions asked in daily life (with most questions

about time and weather) will not be helpful,” wrote Rogers. She further added

there is still a lot of research work that needs to be done before developers

can study the world as it is. Some developers feel their data is large enough

for their training set to encompass the ‘entire data universe’. Rogers said

collecting all data is impossible as it will pose legal, ethical, and practical

challenges. Meanwhile, many are in favour of developing algorithmic alternatives

to data curation. As per Rogers, this is a good possibility; however, having

such solutions, in the current scenario, could be a complementary approach to

data curation rather than completely replacing it. A few experts believe data

curation is part of the process and should not become a task big enough to

forget the original purpose of developing a model.
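
Rogers's point about the "natural" distribution can be illustrated with a very simple curation step: capping how many examples any one topic contributes, so that weather and time questions do not crowd out everything else. This is a sketch under stated assumptions, not a technique she describes. The function name `cap_per_topic`, the topic labels, and the cap of 2 are hypothetical.

```python
from collections import defaultdict


def cap_per_topic(questions, max_per_topic):
    """Keep at most max_per_topic questions per topic, preserving order."""
    kept = []
    seen = defaultdict(int)
    for topic, text in questions:
        if seen[topic] < max_per_topic:
            kept.append((topic, text))
            seen[topic] += 1
    return kept


# Hypothetical "natural" sample dominated by weather and time questions.
questions = [
    ("weather", "Will it rain today?"),
    ("time", "What time is it?"),
    ("weather", "Is it cold outside?"),
    ("weather", "Do I need an umbrella?"),
    ("history", "Who built the Colosseum?"),
    ("time", "How long until noon?"),
    ("time", "Is it midnight yet?"),
]

curated = cap_per_topic(questions, max_per_topic=2)
for topic, text in curated:
    print(topic, "-", text)
```

Real curation involves far more judgment than a per-topic cap, but the sketch shows why the raw distribution and the useful distribution can differ.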

Ultra-high-density hard drives made with graphene store ten times more data

Graphene enables two-fold reduction in friction and provides better corrosion

and wear resistance than state-of-the-art solutions. In fact, one single graphene layer

reduces corrosion by 2.5 times. Cambridge scientists transferred graphene onto

hard disks made of iron-platinum as the magnetic recording layer, and tested

Heat-Assisted Magnetic Recording (HAMR) – a new technology that enables an

increase in storage density by heating the recording layer to high temperatures.

Current carbon-based overcoats (COCs) do not perform at these high temperatures, but graphene does. Thus,

graphene, coupled with HAMR, can outperform current HDDs, providing an

unprecedented data density, higher than 10 terabytes per square inch.

“Demonstrating that graphene can serve as protective coating for conventional

hard disk drives and that it is able to withstand HAMR conditions is a very

important result. This will further push the development of novel high areal

density hard disk drives,” said Dr Anna Ott from the Cambridge Graphene Centre,

one of the co-authors of this study. A jump in HDDs’ data density by a factor of

ten and a significant reduction in wear rate are critical to achieving more

sustainable and durable magnetic data recording.

Implementing An Effective Intelligent Master Data Management Strategy

Since MDM is not a one-time implementation or cleansing exercise, business

owners must own the data along with the business processes from various

departments and units. The data governance process implemented must identify,

measure, capture, and rectify data quality issues in the source system itself.

In order to keep the strategy running, a formal model to manage said data as a

strategic resource should comprise detailed business rules, data stewardship,

data control, and compliance mechanisms. The governance aspect of data needs to

be treated as part of daily responsibilities rather than a one-off initiative

for it to be effective and supported by stakeholders or senior management. ...

Before diving deep into the MDM implementation process, defining a future

roadmap is crucial in showing how later stages will be accomplished, consistent

with the strategic objectives of an organization. This ensures that your MDM

exercise does not turn into a catastrophic event due to abject failures from

structural flaws that corrupt your entire data system. Further, infuse upgrades,

conduct regular testing on standard communication interfaces, and set benchmarks

to quantify your KPI success, until they are proven to be stable before opening

up the gates to the rest of your data stream.

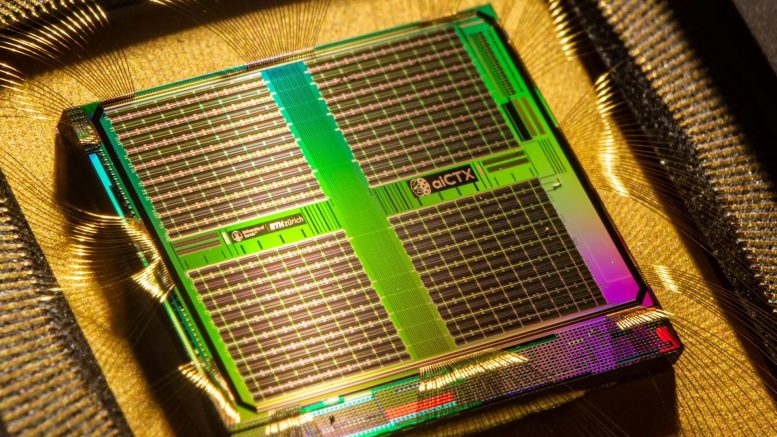

Neuromorphic Chip: Artificial Neurons Recognize Biosignals in Real Time

The researchers first designed an algorithm that detects HFOs by simulating the

brain’s natural neural network: a tiny so-called spiking neural network (SNN).

The second step involved implementing the SNN in a fingernail-sized piece of

hardware that receives neural signals by means of electrodes and which, unlike

conventional computers, is massively energy efficient. This makes calculations

with a very high temporal resolution possible, without relying on the internet

or cloud computing. “Our design allows us to recognize spatiotemporal patterns

in biological signals in real time,” says Giacomo Indiveri, professor at the

Institute for Neuroinformatics of UZH and ETH Zurich. The researchers are now

planning to use their findings to create an electronic system that reliably

recognizes and monitors HFOs in real time. ... However, this is not the only

field where HFO recognition can play an important role. The team’s long-term

target is to develop a device for monitoring epilepsy that could be used outside

of the hospital and that would make it possible to analyze signals from a large

number of electrodes over several weeks or months.
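
For readers unfamiliar with spiking neural networks, the basic building block can be sketched as a single leaky integrate-and-fire neuron: it accumulates input, leaks a little at every step, and emits a spike when a threshold is crossed. The Python below is a textbook-style illustration only. It is not the researchers' HFO detection algorithm or their chip design, and the `leak` and `threshold` values and the input sequence are assumptions.

```python
def lif_spike_times(inputs, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    Returns the indices of the time steps at which the neuron spikes.
    """
    potential = 0.0
    spikes = []
    for t, current in enumerate(inputs):
        # Decay the stored potential, then add the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(t)
            potential = 0.0  # reset after firing
    return spikes


# Hypothetical input: a slow build-up, a pause, then a stronger burst.
signal = [0.3, 0.3, 0.3, 0.3, 0.0, 0.0, 0.6, 0.6]
print(lif_spike_times(signal))
```

Because the neuron only produces output when its input is strong and sustained, networks of such units can stay idle most of the time, which is one reason spiking hardware is described as energy efficient.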

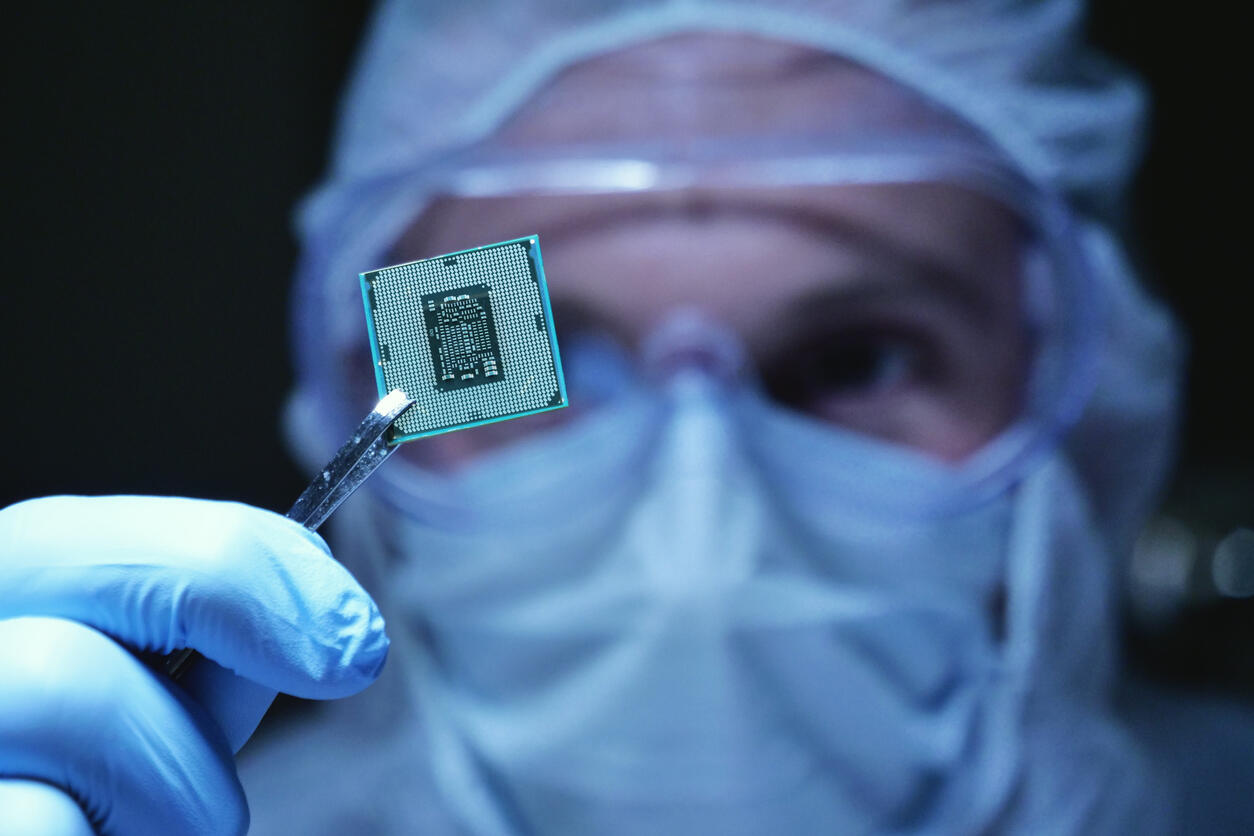

Hardware buyers are scrambling to find chip shortage work-arounds

Because World Insurance runs most of its operations on a private cloud in their

own data center, finding the servers they need to expand their operations is an

ongoing battle. Before the chip shortage, they would primarily buy white label

servers to add capacity. Now, they, like so many others, are sourcing servers

from wherever they can find them. Many manufacturers are in the same boat, said

Jens Gamperl, CEO of Sourcengine, an online marketplace for electronic

components. Gamperl's customers are scrambling to find chips from any

source—regardless of whether or not the supplier and its products have been

vetted. ... To ensure some sort of quality control, manufacturers

are asking Sourcengine to perform those functions. Price gouging also is a big

issue. Parts that cost pennies pre-pandemic are now going for thousands of times

more. "I came across, four weeks or five weeks ago, a situation where a 50 cent

part was offered to us for $41," he said. For large businesses, these increased

expenses shouldn't have a noticeable impact on the bottom line given that other

expenses like travel went to zero, he said.

Hybrid work: How to prepare for the turnover tsunami

Among the multiple factors at play, according to the Prudential Financial

survey, are employee concerns about career advancement. ... Additionally, the

wide and rapid acceptance of remote work has opened up new job opportunities to

work from anywhere. It's a perfect storm for creating some degree of turnover,

says Brian Abrahamson, CIO and the associate laboratory director for

communications and IT at the U.S. Department of Energy's Pacific Northwest

National Lab. "We used to talk about the impacts of fear, uncertainty, and doubt

on people. Add to this the impacts of burnout and isolation and you have a

recipe for workforce chaos," Roberts says. "A question every CIO should be

asking their people managers is, 'Are the recruiters who are trying to poach our

people painting a better picture of a future working with their company than we

are of ours?'" The time to start addressing anticipated turnover is now. "If you

acknowledge that the risk factors affecting the likelihood of increased

attrition in the near term are there, the first recommendation I would make is

simple: Accept and prepare for it," says Selective Insurance CIO John

Bresney.

Quote for the day:

"If you care enough for a result, you

will most certainly attain it." -- William James