A Day in the Life of a DevSecOps Manager

The goal of a DevSecOps team, in my view, is embedding application security into development through enablement, iteration, and continuous feedback – also sometimes called "shifting security left." This requires talking to other folks and making sure you can offer them something that solves your problem while enabling them to solve theirs. No one wants to "stop" producing value to take care of security concerns, which can often be how it feels to interact with security teams. Everyone already has a full roadmap. Why does this security concern need to be addressed now? Through a DevSecOps philosophy, which mostly means taking agile principles from engineering and applying them to security work, I use those aforementioned days of meetings to determine how a particular security concern can be mitigated or eradicated without adding friction to the development pipeline. ... Our DevSecOps team, for example, can write a cryptography library for engineering that uses standard libraries in an appropriate manner, avoiding common implementation mistakes that could lead to data exposure. Sometimes we may mandate a particular approach, but typically we offer a library like this to engineering and sell it as saving them development time.
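
To make the cryptography-library idea above concrete, here is a minimal sketch of the kind of thin wrapper a DevSecOps team might hand to engineering. It is not the author's actual library. The function names, the storage format, and the iteration count are assumptions chosen for illustration. It relies only on Python's standard `hashlib`, `hmac`, and `secrets` modules and hard-codes the choices that are commonly gotten wrong: a random per-password salt, a slow key-derivation function, and a constant-time comparison.

```python
import hashlib
import hmac
import secrets

# Assumed defaults for illustration only; set these from your own security policy.
ITERATIONS = 600_000
SALT_BYTES = 16
SCHEME = "pbkdf2_sha256"


def hash_password(password: str) -> str:
    """Derive a salted PBKDF2 hash and return it in a self-describing string."""
    salt = secrets.token_bytes(SALT_BYTES)  # fresh random salt for every password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return f"{SCHEME}${ITERATIONS}${salt.hex()}${digest.hex()}"


def verify_password(password: str, stored: str) -> bool:
    """Check a password against a stored hash; malformed input returns False."""
    try:
        scheme, iterations, salt_hex, digest_hex = stored.split("$")
        if scheme != SCHEME:
            return False
        expected = bytes.fromhex(digest_hex)
        candidate = hashlib.pbkdf2_hmac(
            "sha256", password.encode("utf-8"), bytes.fromhex(salt_hex), int(iterations)
        )
    except ValueError:
        return False
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, expected)
```

Engineering calls `hash_password` at sign-up and `verify_password` at login, and never has to pick a salt length, an algorithm, or a comparison routine. That is the "saves you development time" pitch in practice.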

Artificial Intelligence is the Key to Economic Recovery

Artificial Intelligence technologies already have tremendous economic potential in the private and business sectors. The value of the global AI market in 2019 is estimated by Gartner and McKinsey at USD 1.9 trillion, and the forecast for 2022 is USD 3.9 trillion. ... There are reasons to believe it will be even more so in the post-Corona era. About two years ago, before the outbreak of the plague, Prime Minister Netanyahu asked me and my colleague Professor Eviatar Matanya to lead a national initiative in the field of intelligent systems that would make Israel one of the top five countries in the world in this technology within five years. ... AI has a much wider spectrum than Cyber technology. Its applications have far-reaching implications in most areas of our lives, including security, medicine, transportation, automation, retail, sales, customer service and virtually every field relevant to modern life. The various learning algorithms, along with the tremendous increase in computing power, are already beginning to penetrate all areas of our lives, and understanding them requires mastery not only of the “natural” technological disciplines – such as computer science, mathematics and engineering – but also of social, legal, business and even philosophical aspects.

The war against the virus also fueling a war against digital fraud

The study also found that, as of March 16, 2021, 36% of consumers said they had been targeted by digital fraud related to COVID-19 in the last three months, a higher share than approximately one year earlier. In April 2020, 29% said they had been targeted by digital fraud related to COVID-19. In the U.S., this percentage increased from 26% to 38% in the same timeframe. Gen Z, those born 1995 to 2002, is currently the most targeted out of any generation at 42%. They are followed by Millennials (37%). Similarities were observed in the U.S. where Gen Z was most targeted at 53% followed by Millennials at 40%. “TransUnion documented a 21% increase in reported phishing attacks among consumers who were globally targeted with COVID-19-related digital fraud just from November 2020 to recently,” said Melissa Gaddis, senior director of customer success, Global Fraud Solutions at TransUnion. “This revelation shows just how essential acquiring personal credentials are for carrying out any type of digital fraud. Consumers must be vigilant and businesses should assume all consumer information is available on the dark web and have alternatives to traditional password verification in place.”

‘Hacktivism’ adds twist to cybersecurity woes

Earlier waves of hacktivism, notably by the amorphous collective known as Anonymous in the early 2010s, largely faded away under law enforcement pressure. But now a new generation of youthful hackers, many angry about how the cybersecurity world operates and upset about the role of tech companies in spreading propaganda, is joining the fray. And some former Anonymous members are returning to the field, including Aubrey Cottle, who helped revive the group’s Twitter presence last year in support of the Black Lives Matter protests. Anonymous followers drew attention for disrupting an app that the Dallas police department was using to field complaints about protesters by flooding it with nonsense traffic. They also wrested control of Twitter hashtags promoted by police supporters. “What’s interesting about the current wave of the Parler archive and Gab hack and leak is that the hacktivism is supporting antiracist politics or antifascism politics,” said Gabriella Coleman, an anthropologist at McGill University, Montreal, who wrote a book on Anonymous.

Sweden’s Fastest Supercomputer for AI Now Online

“Research in machine learning requires enormous quantities of data that must be stored, transported and processed during the training phase. Berzelius is a resource of a completely new order of magnitude in Sweden for this purpose, and it will make it possible for Swedish researchers to compete among the global vanguard in AI,” said Ynnerman. Berzelius will initially be equipped with 60 of the latest and fastest AI systems from Nvidia, with eight graphics processing units and Nvidia Networking in each. Jensen Huang is Nvidia’s CEO and founder. “In every phase of science, there has been an instrument that was essential to its advancement, and today, the most important instrument of science is the supercomputer. With Berzelius, Marcus and the Wallenberg Foundation have created the conditions so that Sweden can be at the forefront of discovery and science. The researchers that will be attracted to this system will enable the nation to transform itself from an industrial technology leader to a global technology leader,” said Huang. The facility has networks from Nvidia, application tools from Atos, and storage capacity from DDN. The machine has been delivered and installed by Atos. Pierre Barnabé is Senior Executive Vice-President and Head of the Big Data and Cybersecurity Division at Atos.

Why data classification should be every organisation’s first step on the path to effective protection

The value of classification was once limited to protection from insider threats. However, with the growth in outsider threats, classification takes on a new importance. It gives information security pros the guidance to allocate resources towards defending the crown jewels against all threats. Internal actors cause both malicious and unintentional data loss. With a classification program in place, the mistyped email address in a message with sensitive data is flagged. Files that are intentionally being leaked are classified as sensitive and get the attention of security solutions, such as Data Loss Prevention (DLP). On the other hand, external threat actors seek data that can be monetised. Understanding which data within your organisation has the greatest value, and the greatest risk of theft, is where classification delivers value. By understanding the greater potential impact of an attack on sensitive data, advanced threat detection tools escalate alarms accordingly to allow more immediate response. Organisations generate data every day. This comes as no surprise. However, what might be surprising is the accelerating volume at which the data is being created.
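
The mistyped-address scenario in this excerpt can be sketched in a few lines. This is an illustrative toy and not how any particular DLP product works. The label names, the `OutgoingMessage` type, and the trusted-domain allow-list are all assumptions made for the example. The point it shows is that once content carries a classification label, the flagging rule itself is simple.

```python
from dataclasses import dataclass
from enum import IntEnum


class Label(IntEnum):
    """Hypothetical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    SENSITIVE = 2


@dataclass(frozen=True)
class OutgoingMessage:
    recipient: str
    label: Label


# Assumption: the organisation keeps an allow-list of its own mail domains.
TRUSTED_DOMAINS = frozenset({"example.com"})


def should_flag(message: OutgoingMessage, trusted_domains=TRUSTED_DOMAINS) -> bool:
    """Return True when sensitive content is addressed outside trusted domains."""
    domain = message.recipient.rsplit("@", 1)[-1].strip().lower()
    return message.label >= Label.SENSITIVE and domain not in trusted_domains
```

A message labelled `SENSITIVE` and addressed to `alice@exmaple.com` (a typo of the trusted domain) is flagged, while the same message to `alice@example.com` passes. Without the label, the rule would have nothing to act on, which is the excerpt's argument for classifying first.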

6 Principles for Hybrid Work Wellbeing

Wellbeing is both an individual and a team sport. Everyone’s individual circumstances are unique—from caring for a sick parent to juggling the demands of remote learning to struggling with racial injustice. Each of us needs to define our boundaries based on what we can and can’t do—and own them. In practice, this means deciding what time you start work, deciding what time you finish work, and sticking to those commitments while communicating them to your team, whether you’re working remotely or in person. Technology can be your friend here. For example, set your status message in Teams to indicate when you’re prioritizing family time. When we all own and respect boundaries, we create a culture of mutual support that promotes everyone’s wellbeing. ... Meeting bloat is one of remote work’s most counterproductive trends, though the reasons for it aren’t hard to understand. Without well-defined ways to indicate progress and participation, showing up to a meeting has become the signal of doing work. It’s the 21st-century version of punching the clock. This helps neither employees nor employers. Organizations can undercut this expectation—and the drain on wellbeing that comes from too many meetings—by fostering a meeting culture centered on preparation and purpose.

Remote working burn-out a factor in security risk

“Lockdown has been a stressful time for everyone, and while employers have admirably supported remote working with technology and connectivity, the human factor must not be overlooked,” said Margaret Cunningham, Forcepoint’s principal research scientist. “Interruptions, distractions and split attention can be physically and emotionally draining and, as such, it’s unsurprising that decision fatigue and motivated reasoning continues to grow.

“Companies and business leaders need to take into account the unique psychological and physical situation of their home workers when it comes to effective IT protection.

“They need to make their employees feel comfortable in their home offices, raise their awareness of IT security and also model positive behaviours. Knowing the rules, both written and implied, and then designing behaviour-centric metrics surrounding the rules can help us mitigate the negative impact of these risky behaviours.”

Cunningham said that although both older and younger employees tended to report they were receiving similar levels of organisational support while working remotely, the emotional experience, and how different generations use technology, was markedly different.

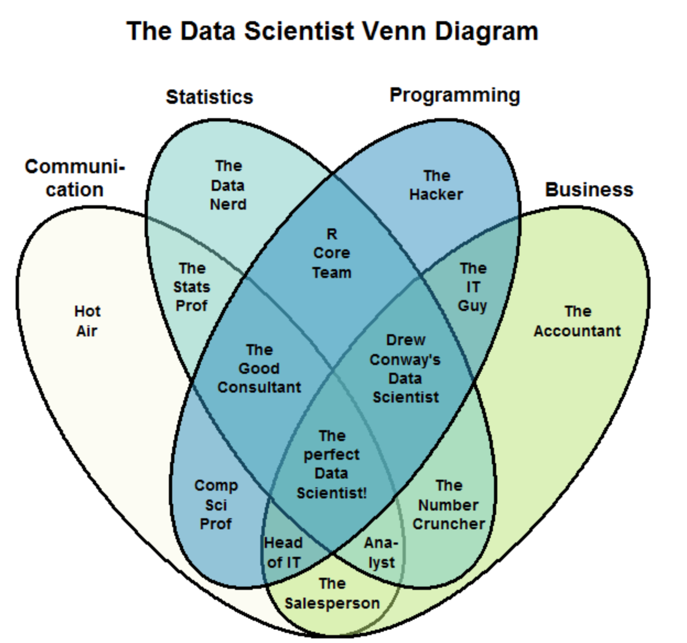

Impact of Big Data on Innovation, Competitive Advantage, Productivity, and Decision Making

Advances in the field of technology have enabled individuals and businesses to collect large amounts of data (structured and unstructured) from various sources like never before. Data from social media, user-generated content, the internet, health care, manufacturing, supply chains, financial institutions, and sensors has grown exponentially. This paper’s objective is to review how big data drives and impacts innovation, competitive advantage, productivity, and decision support. Methodology: A comprehensive literature review on big data was conducted to identify the impact of big data analytics on innovation, competitive advantage, productivity, and decision support. The reviewed literature created the foundation for a model that was developed based on an extensive review of literature as well as case studies and future forecasts by market leaders. Big data is the latest buzzword among businesses. A new model is suggested, identifying big data and its correlation with innovation, competitive advantage, productivity, and decision support. Findings: A review of scholarly literature and existing case studies finds that there is a gap between existing frameworks and the integration of big data into various business and management functions and objectives.

Rethinking data strategies: Shifting the focus from technology to insights

We need to redefine data strategy. Businesses need to move away from collecting data for data’s sake. Instead, we need to focus on data-driven technological innovation that delivers meaningful customer experiences, using targeted data to provide the right insights about customers. Today, businesses are collecting data en masse. But what are the benefits of collecting this data? What insight does it provide about customers or competitors? Most businesses believe they know their customer profile, and acquire more technology and data to meet this perceived customer profile. By rethinking data strategies, however, and exploring the value of the data being collected and how it is being collected, businesses will understand their customers’ wants and needs more effectively. Indeed, knowing your customer is not only about tracking and tracing their behaviour digitally; you first need to define what kind of data insight you want to learn from your customer. Then you can work out how to leverage new data insights amassed through targeted data collection to deliver tailored features back to the customer quickly and easily – engaging customers in a product or service when they need it most.

Quote for the day:

"The leader has to be practical and a realist, yet must talk the language of the visionary and the idealist." -- Eric Hoffer