How Robotic Process Automation Can Become More Intelligent

Artificial Intelligence (AI) and its constituent disciplines, including Machine Learning (ML) and Natural Language Processing (NLP), bring learning and decision-making abilities to an RPA task. Basically, RPA is for doing. Artificial intelligence is for contemplating ‘what should be done’. Artificial intelligence makes RPA intelligent. Together, these advances give rise to Cognitive Automation, which automates many use cases that were inconceivable before. The most recent transformation came when virtualized platforms made it possible to add and remove the resources a process requires, depending on the workload. This allowed organizations to explore opportunities to define their processes based on automated rules. This was the beginning of Robotic Process Automation. RPA goes beyond automating a monotonous process: it removes the need for human intervention. A straightforward application is the rule-based responses you need to provide for certain work processes. Once you code in the rules, they need no further intervention, and the RPA handles everything. Organizations have profited from implementing RPA-based solutions and processes, reducing expenses many times over.
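As a rough illustration of the "code in the rules once" idea, the following minimal Python sketch routes incoming work items through an ordered list of rules and returns the first matching response. The `Rule` class, the ticket fields (`type`, `amount`), and the responses are all hypothetical, chosen only for illustration; they do not come from any particular RPA product.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Rule:
    """A hypothetical rule: a name, a match predicate, and a canned response."""
    name: str
    matches: Callable[[dict], bool]
    response: str


# Rules are evaluated in order, so more specific rules come first.
RULES = [
    Rule(
        "small_refund",
        lambda t: t.get("type") == "refund" and t.get("amount", 0) <= 50,
        "auto-approve refund",
    ),
    Rule(
        "any_refund",
        lambda t: t.get("type") == "refund",
        "route to finance queue",
    ),
    Rule(
        "password_reset",
        lambda t: t.get("type") == "password_reset",
        "send reset link",
    ),
]


def respond(ticket: dict, rules: list = RULES) -> Optional[str]:
    """Return the response of the first matching rule, or None if no rule applies."""
    for rule in rules:
        if rule.matches(ticket):
            return rule.response
    return None  # No rule matched: this item still needs a human.
```

Items that match no rule fall through to `None`, which is one plausible place for an AI component or a human reviewer to step in.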

So You Want to Be a Data Protection Officer?

The GDPR states the Data Protection Officer must be capable of performing their duties independently, and may not be “penalized or dismissed” for performing those duties. (The DPO’s loyalties are to the general public, not the business. The DPO’s salary can be considered a tax for doing business on the internet.) Philip Yannella, a Philadelphia attorney with Ballard Spahr, said: “A Data Protection Officer can’t be fired because of the decisions he or she makes in that role. That spooks some U.S. companies, which are used to employment at will. If a Data Protection Officer is someone within an organization, he or she should be an expert on GDPR and data privacy.” Not having a Data Protection Officer could get quite expensive, resulting in stiff fines on data processors and controllers for noncompliance. Fines are administered by member state supervisory authorities who have received a complaint. Yannella went on to say, “No one yet knows what kind of behavior would trigger a big fine. A lot of companies are waiting to see how this all shakes out and are standing by to see what kinds of companies and activities the EU regulators focus on with early enforcement actions.”

The State of Enterprise Architecture and IT as a Whole

EA is an enterprise-wide, business-driven, holistic practice to help steer companies towards their desired longer-term state, to respond to planned and unplanned business and technology change. Embracing EA Principles is a central part of EA, though rarely adopted. The focus in those early days was reducing complexity by addressing duplication, overlap, and legacy technology. With the line between technology and applications blurring, and application sprawl happening almost everywhere, a focus on rationalizing the application portfolio soon emerged. I would love to say that EA adoption was smooth, but there were many distractions and competing industry trends, everything from ERP to ITIL to innovation. The focus was on delivery and operations, and there was little mindshare for strategic, big-picture, and longer-term thinking. Practitioners were rewarded only for supporting project delivery. Many left the practice. And frankly, a lot of people who didn’t have EA skills were thrust into the role. That further exacerbated adoption challenges and defined the delivery-oriented, technology-focused path EA would follow. It is still dominant today.

Managing and Governing Data in Today’s Cloud-First Financial Services Industry

Artificial intelligence and machine learning technologies have been shown to accelerate the ability of banks, insurance companies, and retail brokerages to successfully combat fraud, manage risk, cross-sell and upsell, and provide tailored services to existing clients. To harness the power and potential of these solutions, financial institutions will look to leverage external data from third-party vendors and partners, along with in-house data, to mine for the best answers and recommendations. Today’s cloud-native and cloud-first solutions offer financial institutions the ability to capture, process, analyze, and leverage the intelligence from this data much faster, more efficiently, and more effectively than trying to do it internally. Improving Customer Experience Through Digital Modernization: Banks and insurance companies have been modernizing and/or replacing legacy core systems, many of which have been around for decades, with cloud-native and cloud-first solutions. These include offerings from organizations like FIS Global, nCino, and my former employer Ellie Mae in the banking industry, and offerings from Guidewire and Duck Creek for cloud-native policy administration, claims, and underwriting solutions in the insurance sector.

Optimizing Privacy Management through Data Governance

Data governance is responsible for ensuring data assets are of sufficient quality, and that access is managed appropriately to reduce the risk of misuse, theft, or loss. Data governance is also responsible for defining guidelines, policies, and standards for data acquisition, architecture, operations, and retention, among other design topics. In the next blog post, we will discuss further the segregation of duties shown in figure 1; however, at this point it is important to note that modern data governance programs need to take a holistic view to guide the organization to bake quality and privacy controls into the design of products and services. Privacy by design is an important concept to understand and a requirement of modern privacy regulations. At the simplest level it means that processes and products that collect and/or process personal information must be architected and managed in a way that provides appropriate protection, so that individuals are not harmed by the processing of their information or by a privacy breach. Malice is not present in all privacy breaches. Organizations have experienced breaches related to how they managed physical records containing personal information, because staff were not trained to properly handle the information.

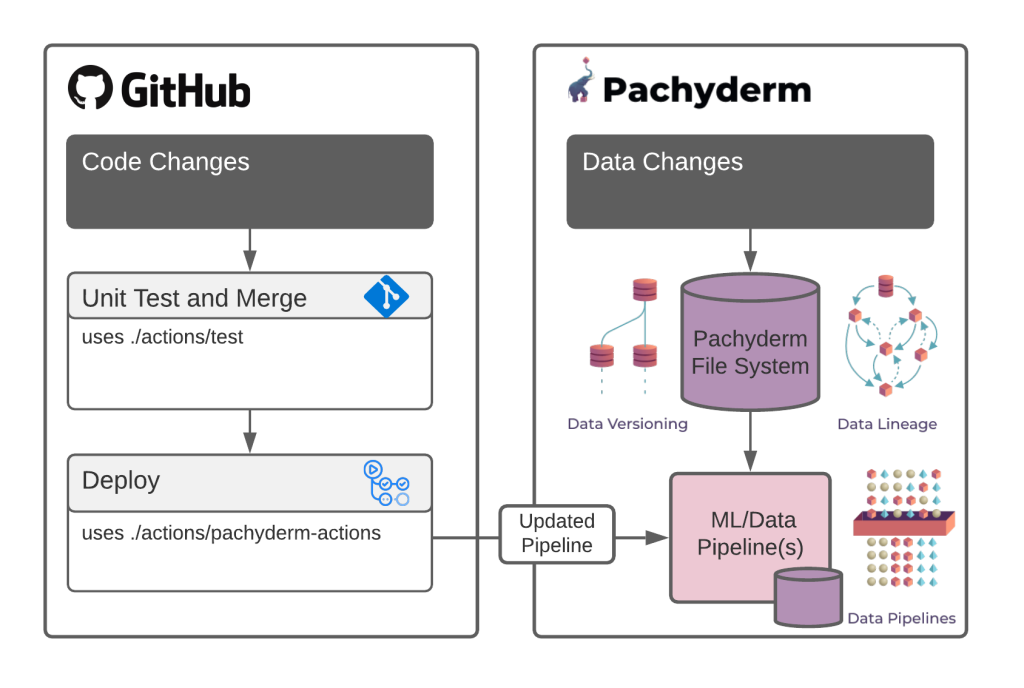

The Definitive Guide to Delivering Value from Data using DataOps

The DataOps solution to the hand-over problem is to allow every stakeholder full access to all process phases and tie their success to the overall success of the entire end-to-end process ... Value delivery is a sprint, not a relay. Treat the data-to-value process as a unified team sprint to the finish line (business value) rather than a relay race where specialists pass the baton to each other in order to get to the goal. It is best to have a unified team spanning multiple areas of expertise responsible for overall value delivery instead of single specialized groups responsible for a single process phase. ... A well architected data infrastructure accelerates delivery times, maximizes output, and empowers individuals. The DevOps movement played an influential role in decoupling and modularizing software infrastructure from single monolithic applications to multiple fail-safe microservices. DataOps aims to bring the same influence to data infrastructure and technology. ... At its core, DataOps aims to promote a culture of trust, empowerment, and efficiency in organizations. A successful DataOps transformation needs strategic buy-in starting from C-suite executives to individual contributors.

How do Organizations Choose a Cloud Service Provider? Is it Like an Arranged Marriage?

While not as critical a decision as marriage, most organizations today face a similar trust-based dilemma: which cloud service provider to trust with their data? There is no debate over the clear value drivers for cloud computing: performance, cost and scalability, to name a few. However, the lack of control and oversight could make organizations hesitant to hand over their most valuable asset, information, to a third party, trusting they have adequate information protection controls in place. With any trust-based decision, external validation can play an important role. Arranged marriages rely on positive feedback and references, mostly attested by the matchmaker. They also rely on supporting evidence such as corroborations of relatives and more tangible factors such as education/career history of the potential bride/groom. In the case of cloud service providers, independent validation such as certifications, attestations or other information protection audits could make or break a deal. The notion of cloud computing may have existed as far back as the 1960s but cloud services took the form we know of today with the launch of services from big players such as Amazon, Google and Microsoft in 2006-2007.

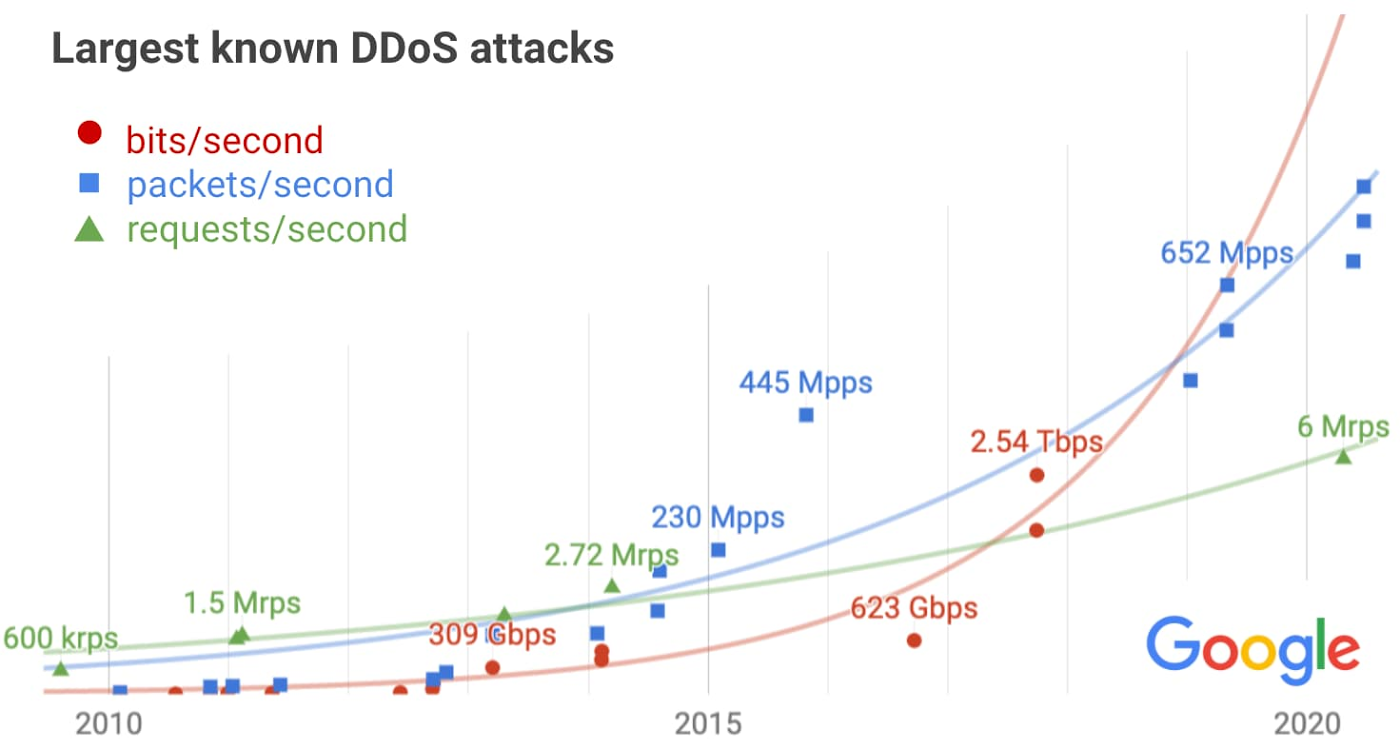

Professor creates cybersecurity camp to inspire girls to choose STEM careers

The way I got into cybersecurity, I would say, was maybe five years ago. But in the field of IT, I always liked to pull things apart, figure out how they work, and problem solve. I was always in the field of IT. I worked as a programmer at IBM for a couple of years, and then I segued into the academy, because I felt I could be more impactful in front of a classroom. In an IBM setting and programming setting, I noticed I was the only woman and woman of color in that field. I said, "OK, I need to do something to change this." I went into the academy and said, "Maybe if I was an instructor, I could then empower more young women to go and pursue this field of study." Then five years, as time went on, the cybersecurity discipline really became very hot. And really, that was very, very intriguing, how hackers were hacking in and sabotaging systems. Again, it was like a puzzle, problem solving: how can we out-think the hacker, and how can we make things safe? That became very, very intriguing to me. Then I wrote this grant, the GenCyber grant. Dr. Li-Chiou Chen, my chair at the time, recommended that I explore a grant for GenCyber, and I submitted it, and I was shocked that I won the grant.

Germany’s blockchain solution hopes to remedy energy sector limitations

If successfully executed, Morris explained that BMIL could serve as the basis for a wide range of DERs supporting both Germany’s wholesale and retail electricity markets: “This will make it easy, efficient and low cost for any DER in Germany to participate in the energy market. Grid operators and utility providers will also gain access to an untapped decarbonized Germany energy system.” However, technical challenges remain. Mamel from DENA noted that BMIL is a project built around the premise of interoperability, one of blockchain’s greatest challenges to date. While DENA is technology agnostic, Mamel explained that DENA aims to test a solution that will be applicable to the German energy sector, which already consists of a decentralized framework with many industry players using different standards. As such, DENA decided to take an interoperability approach to drive Germany’s energy economy, testing two blockchain development environments in BMIL. Both Ethereum and Substrate, the blockchain-building framework for Polkadot, will be applied, along with different concepts regarding decentralized identity protocols.

How to Overcome the Challenges of Using a Data Vault

Within the data vault approach, there are certain layers of data. These range from the source systems where data originates, to a staging area where data arrives from the source system, modeled according to the original structure, to the core data warehouse, which contains the raw vault, a layer that allows tracing back to the original source system data, and the business vault, a semantic layer where business rules are implemented. Finally, there are data marts, which are structured based on the requirements of the business. For example, there could be a finance data mart or a marketing data mart, holding the relevant data for analysis purposes. Out of these layers, the staging area and the raw vault are best suited to automation. The data vault modeling technique brings ultimate flexibility by separating the business keys, which uniquely identify each business entity and do not change often, from their attributes. This results, as mentioned earlier, in many more data objects being in the model, but also provides a data model that can be highly responsive to changes, such as the integration of new data sources and business rules.
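The separation of business keys from attributes can be sketched in a few lines of Python. This is a simplified illustration, not a full data vault implementation: it borrows the standard data vault terms "hub" (business keys) and "satellite" (attribute history), while the `RawVault` class, the MD5-based `hash_key` helper, and the sample sources are assumptions made for the example.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


def hash_key(business_key: str) -> str:
    """Derive a stable surrogate key from a normalized business key (assumed scheme)."""
    normalized = business_key.strip().upper()
    return hashlib.md5(normalized.encode("utf-8")).hexdigest()


@dataclass
class RawVault:
    # Hub: hash key -> business key. Stable, rarely changes.
    hubs: dict = field(default_factory=dict)
    # Satellites: (hash key, source system) -> list of attribute versions.
    satellites: dict = field(default_factory=dict)

    def load(self, business_key: str, source: str, attributes: dict,
             load_ts: Optional[datetime] = None) -> str:
        """Register the business key once and append attributes only when they change."""
        hk = hash_key(business_key)
        self.hubs.setdefault(hk, business_key)
        history = self.satellites.setdefault((hk, source), [])
        if not history or history[-1]["attributes"] != attributes:
            history.append({
                "load_ts": load_ts or datetime.now(timezone.utc),
                "source": source,  # kept so each row traces back to its origin
                "attributes": dict(attributes),
            })
        return hk


vault = RawVault()
hk = vault.load("cust-001", "crm", {"name": "Ada"})
# A new source system adds its own satellite without touching the hub.
vault.load("CUST-001", "billing", {"plan": "gold"})
```

Adding the hypothetical `billing` source creates one more satellite but leaves the hub untouched, which is the responsiveness to new data sources described above, at the cost of more objects in the model.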

Quote for the day:

"The closer you get to excellence in your life, the more friends you'll lose. People love average and despise greatness." -- Tony Gaskins