Data literacy skills key to cost savings, revenue growth

"The bottom line is that bad data is costly, because decision-makers,

managers, data scientists and others who have to work with data have to

compensate for that bad data," she said. "That's time-consuming, but the real

cost of that bad data is that it's an obstacle in their journey to become

insights-driven." To prevent those losses -- and to help people make

data-driven decisions that have the potential to spur revenue growth --

organizations should equip employees with data literacy skills. Employees

need an education in data. Data-driven companies simply grow faster, Belissent

said, noting that Forrester has studied hundreds of companies. And

organizations do want to be data-driven, she continued, adding that 88% of

those surveyed by Forrester want to improve the use of data insights in their

decision-making. But if their data is low quality, or if the data isn't there

at all, it serves as a significant impediment to growth. And in fact,

according to Forrester's research, fewer than half of all decisions are made

based on quantitative analysis. Organizations, therefore, need to implement

training programs to give employees the data literacy skills -- the ability to

evaluate, work with, communicate and apply data -- to do their jobs.

Real Time APIs in the Context of Apache Kafka

One of the challenges that we have always faced in building applications, and

systems as a whole, is how to exchange information between them efficiently

whilst retaining the flexibility to modify the interfaces without undue impact

elsewhere. The more specific and streamlined an interface, the greater the likelihood

that it is so bespoke that changing it would require a complete rewrite. The inverse

also holds; generic integration patterns may be adaptable and widely supported,

but at the cost of performance. Events offer

a Goldilocks-style approach in which real-time APIs can be used as the

foundation for applications that is flexible yet performant; loosely coupled

yet efficient. Events can be considered as the building blocks of most

other data structures. Generally speaking, they record the fact that something

has happened and the point in time at which it occurred. An event can capture

this information at various levels of detail: from a simple notification to a

rich event describing the full state of what has happened. From events, we can

aggregate up to create state—the kind of state that we know and love from its

place in RDBMS and NoSQL stores.
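
The idea of aggregating events into state can be illustrated with a minimal Python sketch. It does not use Kafka or any Kafka client; the `Event` fields, the "deposited"/"withdrew" event kinds, and the sample timestamps are all hypothetical, chosen only to show a fold over time-ordered facts.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    """A fact: something happened to `key` at `occurred_at`."""
    key: str              # hypothetical entity id, e.g. an account holder
    kind: str             # hypothetical event type: "deposited" or "withdrew"
    amount: int
    occurred_at: datetime


def aggregate(events):
    """Fold events, in time order, into the current state per key."""
    state = {}
    for event in sorted(events, key=lambda e: e.occurred_at):
        delta = event.amount if event.kind == "deposited" else -event.amount
        state[event.key] = state.get(event.key, 0) + delta
    return state


# Hypothetical sample data: three events, two keys.
events = [
    Event("alice", "deposited", 100, datetime(2020, 10, 1, 9, 0, tzinfo=timezone.utc)),
    Event("alice", "withdrew", 30, datetime(2020, 10, 1, 9, 5, tzinfo=timezone.utc)),
    Event("bob", "deposited", 50, datetime(2020, 10, 1, 9, 7, tzinfo=timezone.utc)),
]
balances = aggregate(events)
```

Here `balances` plays the role of the table a database would hold, while the list of events remains the underlying record from which that table can be rebuilt at any time.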

Emotional AI — can chatbots convey empathy?

Maya Angelou once said — “I’ve learned that people will forget what you said,

people will forget what you did, but people will never forget how you made

them feel.” So, since emotions are our most human quality, what if we could

teach artificial intelligence (AI) to understand our feelings? In recent

years, AI and machine learning algorithms have held the world spellbound with

the rapid pace of development and integration in various industries and

verticals. The goal of AI research has shifted over the years: to compute

what humans could not, to beat us in specific tasks, and most recently to

create an algorithm that can show how it’s working. To put how rapidly AI

is growing in context, a Pew Research Center study reports that by 2025, AI

and robotics will permeate most segments of daily life, while an

Oxford University study projects that within the next 25 years, developed

nations will experience job loss rates of up to 47%. AI is displacing the

roles of both white and blue-collar workers, from travel agents to bank

tellers, gas station attendants to factory workers. This has tremendous

implications for industries such as home maintenance, transport and logistics,

healthcare, and most significantly, customer service.

The brain of the SIEM and SOAR

What the nerves need is a brain that can receive and interpret their signals.

An XDR engine, powered by Bayesian reasoning, is a machine-powered brain that

can investigate any output from the SIEM or SOAR at speed and scale. This

replaces the traditional Boolean logic (that is, searching for things that IT

teams know to be somewhat suspicious) with a much richer way to reason about

the data. This additional layer of understanding will work out of the box with

the products an organization already has in place to provide key correlation

and context. For instance, imagine that a malicious act occurs. That malicious

act is going to be observed by multiple types of sensors. All of that

information needs to be put together, along with the context of the internal

systems, the external systems and all of the other things that integrate at

that point. This gives the system the information needed to know the who,

what, when, where, why and how of the event. This is what the system’s brain

does. It boils all of the data down to: “I see someone bad doing something

bad. I have discovered them. And now I am going to manage them out.” What the

XDR brain is going to give the IT security team is more accurate, consistent

results, fewer false positives and faster investigation times.
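
To make the contrast with Boolean rules concrete, here is a minimal sketch of Bayesian evidence combination in Python. It is not how any particular XDR product works: the prior, the likelihood ratios, and the sensor names are hypothetical, and the sketch assumes the observations are conditionally independent (a naive Bayes simplification).

```python
import math


def posterior(prior, likelihood_ratios):
    """Combine independent observations with Bayes' rule in log-odds form.

    prior: P(malicious) before any sensor data is considered.
    likelihood_ratios: for each observation,
        P(observation | malicious) / P(observation | benign).
    """
    log_odds = math.log(prior / (1.0 - prior))
    for ratio in likelihood_ratios:
        log_odds += math.log(ratio)
    return 1.0 / (1.0 + math.exp(-log_odds))


# Hypothetical numbers: malicious activity is rare, and three sensors
# (endpoint, network, identity) each report something mildly unusual.
prior = 0.001
observations = [20.0, 15.0, 30.0]

single = posterior(prior, observations[:1])   # one sensor alone
combined = posterior(prior, observations)     # all three correlated
```

With these assumed inputs, one signal alone leaves the probability of malice at roughly 2%, while the three signals together push it to about 90%. A Boolean rule on any single sensor would either miss the event or fire constantly; weighing the evidence jointly is what reduces false positives.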

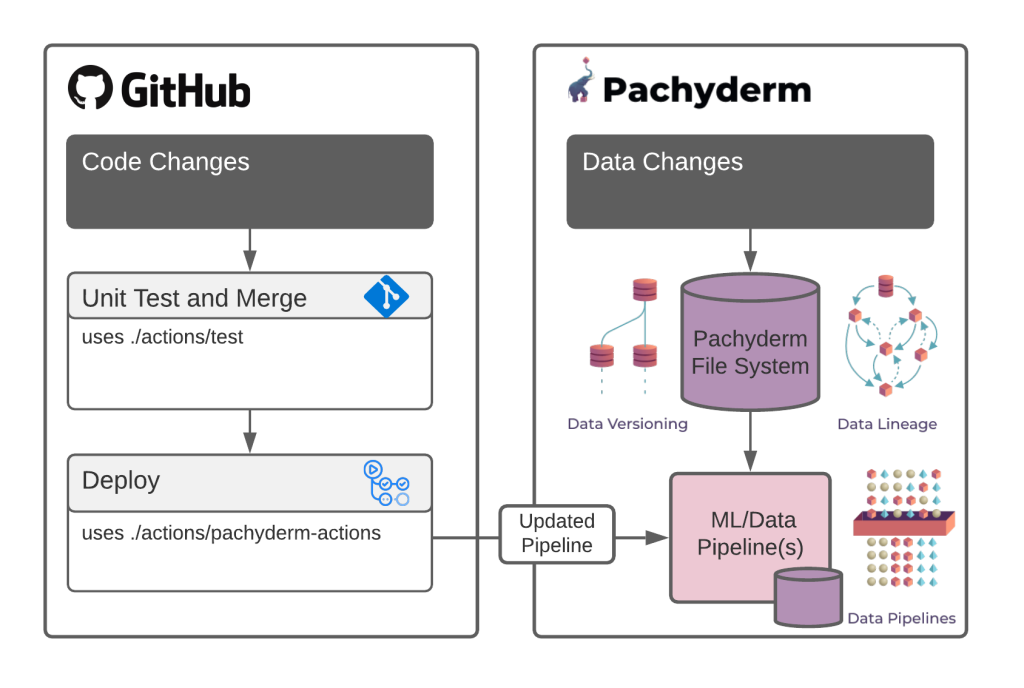

Pachyderm and the power of GitHub Actions: MLOps meets DevOps

The kinds of problems we face in machine learning are fundamentally different

than the ones we face in traditional software coding. Functional issues, like

race conditions, infinite loops, and buffer overflows, don’t come into play

with machine learning models. Instead, errors come from edge cases, lack of

data coverage, adversarial assault on the logic of a model, or overfitting.

Edge cases are the reason so many organizations are racing to build AI Red

Teams to diagnose problems before things go horribly wrong. It’s simply not

enough to port your CI/CD and infrastructure code to machine learning

workflows and call it done. Handling this new generation of machine learning

operations (MLOps) problems requires a brand new set of tools that focus on

the gap between code-focused operations and MLOps. The key difference is data.

We need to version our data and datasets in tandem with the code. That means

we need tools that specifically focus on data versioning, model training,

production monitoring, and many others unique to the challenges of machine

learning at scale. Luckily, we have a strong tool for MLOps that does seamless

data version control: Pachyderm.
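
Versioning data in tandem with code can be sketched in a few lines of Python. This is an illustration of the idea only, not Pachyderm's API or storage model: the function names, the in-memory `files` mapping standing in for real files, and the commit id are all hypothetical.

```python
import hashlib


def dataset_fingerprint(files):
    """Hash file names and contents into one stable dataset id.

    files: mapping of relative path -> bytes (a stand-in for files on disk).
    """
    digest = hashlib.sha256()
    for path in sorted(files):  # sort so ordering never changes the id
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")
        digest.update(hashlib.sha256(files[path]).digest())
    return digest.hexdigest()


def run_id(code_revision, files):
    """Tie a training run to both the code revision and the exact data."""
    return f"{code_revision}+{dataset_fingerprint(files)[:12]}"


# Hypothetical datasets: v2 adds one row to train.csv.
data_v1 = {"train.csv": b"x,y\n1,2\n", "labels.csv": b"id,label\n1,cat\n"}
data_v2 = {**data_v1, "train.csv": b"x,y\n1,2\n3,4\n"}

same_code = "abc1234"  # hypothetical Git commit id
```

Even with identical code, `run_id(same_code, data_v1)` and `run_id(same_code, data_v2)` differ, which is the property a code-only CI/CD pipeline lacks: a model can change without a single line of code changing.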

Microsoft: Learn JavaScript Node.js with this new free course

The Node.js course teaches beginners what they need to know to build things

like web servers, microservices, command-line apps, web interfaces, drivers

for database access, desktop apps using Electron, IoT client and server

libraries for single-board computers like Raspberry Pi, machine-learning

models and more. Yohan Lasorsa, a senior Microsoft cloud developer

advocate and main host of the Node.js series, recommends students complete the

JavaScript video series before starting the Node.js series. To accompany the

video tutorials, Microsoft has also published an extensive interactive Node.js

course consisting of five modules. The modules include an introduction to

Node.js that explains what it is, how it works, and when it could be useful.

The second module explains how to use dependencies obtained from the NPM

registry, while the third takes students through debugging Node.js apps with

the built-in debugger and the debugger available in Microsoft's Visual Studio

Code (VS Code) editor. The fourth and fifth modules teach students how to work

with files and directories in Node.js apps and how to build a web API with

Node.js and the Express.js framework for adding things like authentication.

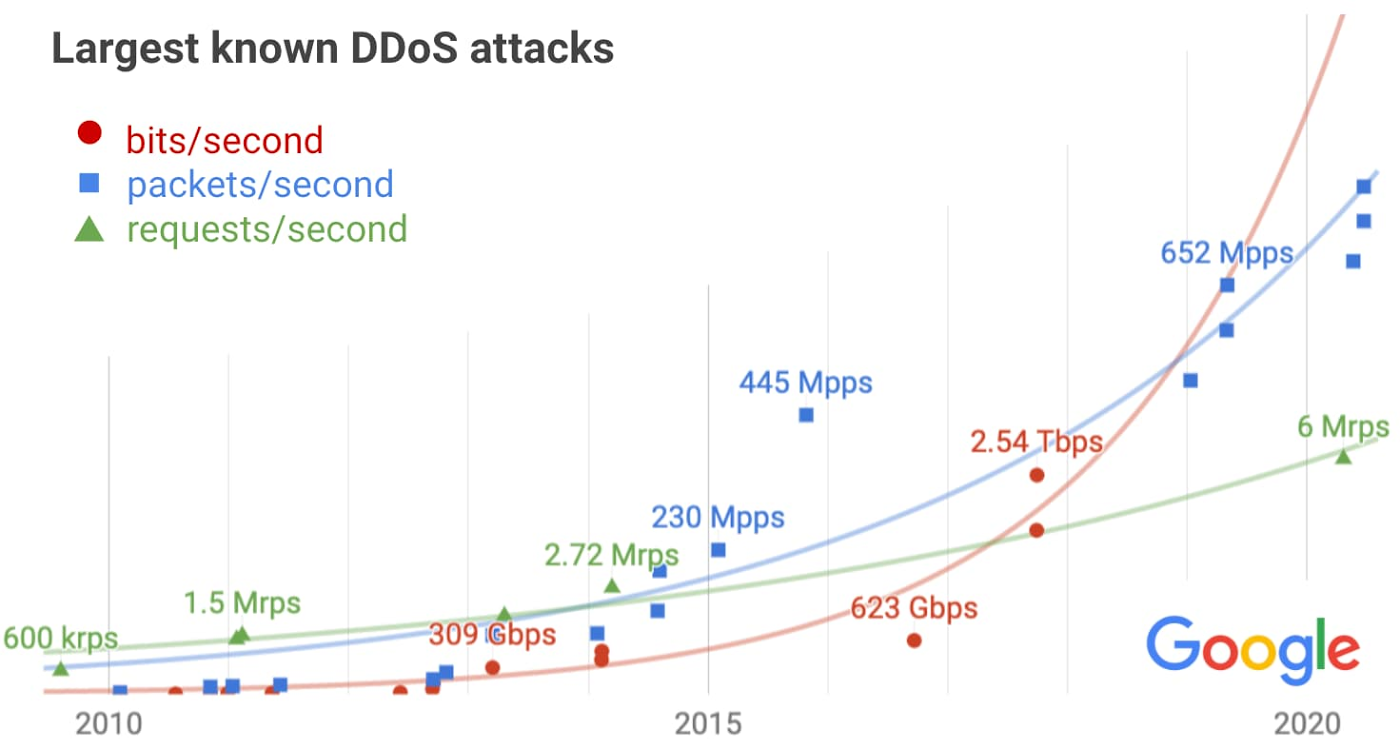

Exponential growth in DDoS attack volumes

We recognize the scale of potential DDoS attacks can be daunting. Fortunately,

by deploying Google Cloud Armor integrated into our Cloud Load Balancing

service—which can scale to absorb massive DDoS attacks—you can protect

services deployed in Google Cloud, other clouds, or on-premises from attacks.

We recently announced Cloud Armor Managed Protection, which enables users to

further simplify their deployments, manage costs, and reduce overall DDoS and

application security risk. Having sufficient capacity to absorb the largest

attacks is just one part of a comprehensive DDoS mitigation strategy. In

addition to providing scalability, our load balancer terminates network

connections on our global edge, only sending well-formed requests on to

backend infrastructure. As a result it can automatically filter many types of

volumetric attacks. For example, UDP amplification attacks, SYN floods, and

some application-layer attacks will be silently dropped. The next line of

defense is the Cloud Armor WAF, which provides built-in rules for common

attacks, plus the ability to deploy custom rules to drop abusive application

layer requests using a broad set of HTTP semantics.

Best Practices for Managing Remote IT Teams

Many DBAs and developers have been working remotely for months now, but as IT

budgets grow tighter, they’ll need to do more with less. Ensuring DBAs have

the ability to monitor the database from anywhere will be a core part of a

continued successful remote working strategy. There are many reasons for

database professionals to embrace remote monitoring, whether it’s migrating to

the cloud, adapting to new challenges, keeping an eye on multiple instances in

many environments or gaining fine-grained access to monitoring data. ... Cloud

adoption is up significantly this year as development teams turn to it,

particularly for greenfield projects. But with all of that data migration,

database professionals are struggling with being able to monitor cloud-based

servers alongside on-premises servers, and having a distributed team doesn’t

make it easier. Adopting remote monitoring tools can simplify monitoring of

the cloud—once you’re monitoring a remote database server it doesn’t matter

where the server is. It’s impossible to say what might happen next month or

even next year, but as companies grapple with these cloud challenges, advanced

remote monitoring tools can help monitor disparate, hybrid environments from

one screen.

Hearing The World Through Machine Learning

With ML, companies can apply cutting-edge technology to transform an age-old

problem. Startups are leveraging deep learning and advanced signal processing

at a granularity not previously possible to improve hearing quality.

Some incumbent hearing aid companies have recently touted their ability to add

“AI” features such as Alexa integrations and step counters. Unfortunately,

these features don’t seem to improve actual hearing quality or take advantage

of true ML capabilities beyond generating marketing buzz. ... In my

conversation with Andre Esteva, the Head of Medical AI at Salesforce, he noted

that “traditional approaches have been limited by extensive manual efforts to

acquire data, hand-craft it into a usable format, prepare rudimentary

algorithms and deploy them to devices. In contrast, ML has a natural flywheel

effect in which devices collect data at scale, ML training protocols

automatically process the data, update themselves and redeploy. The effect is

a significant reduction in product feedback cycles and an increase in the

range of capabilities available. The beauty of this approach is that the

underlying intelligence improves over time as the neural nets go through

iterative training.”

Q&A on the Book Leading with Uncommon Sense

It is a three-step practice that includes pausing, introspecting, and acting.

It requires leaders to continually cycle through the three steps: pause,

introspect and act. At the core of the practice is the need to slow down.

Leaders can pause both in the moment when reacting to a difficult situation or

in a planned, proactive way to prepare for challenges and to harvest

learnings. When introspecting, leaders look inward and examine their own

thoughts or feelings, carefully investigating what is happening with their

thinking. Introspecting allows leaders to pay attention to four areas:

recognizing what is outside of our awareness, learning from our emotions,

tracking the impact of social identities, and embracing uncertainty. After

investigating these four areas and gathering useful information, leaders are

in a better position to take action. Finally, we argue that by pausing and

introspecting, leaders are in a better position to take action. In addition, we

know that leaders cannot allow themselves to be paralyzed by the complexities

of any given moment and that they must have the courage to make decisions and

take action in the very face of that complexity.

Quote for the day:

"Just because you can't have what you want NOW doesn't mean never. Be patient, persistent and resourceful." -- Tim Fargo
