When ‘quick wins’ in data science add up to a long fail

The nature of the quick win is that it does not require any significant overhaul of business processes. That’s what makes it quick. But a consequence of this is that the quick win will not result in a different way of doing business. People will be doing the same things they’ve always done, but perhaps a little better. For example, suppose Bob has been operating a successful chain of lemonade stands. Bob opens a stand, sells some lemonade, and eventually picks the next location to open. Now suppose that Bob hires a data scientist named Alice. For their quick win project, Alice decides to use data science models to identify the best locations for opening lemonade stands. Alice does a great job, Bob uses her results to choose new locations, and the business sees a healthy boost in profit. What could possibly be the problem? Notice that nothing in the day-to-day operations of the lemonade stands has changed as a result of Alice’s work. Although she’s demonstrated some of the value of data science, an employee of the lemonade stand business wouldn’t necessarily notice any changes. It’s not as if she’s optimized their supply chain, or modified how they interact with customers, or customized the lemonade recipe for specific neighborhoods.

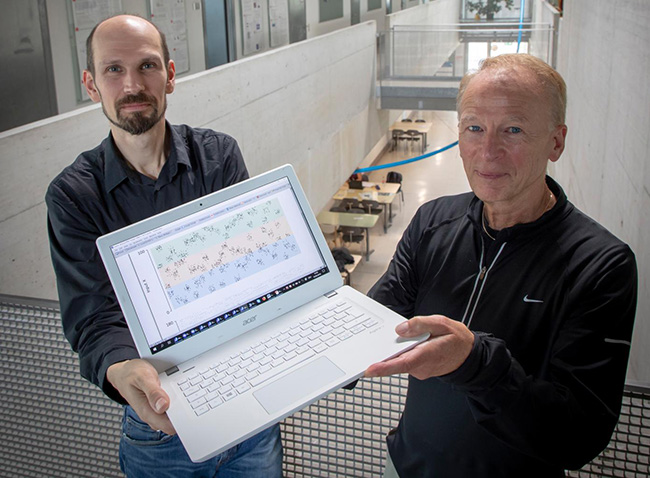

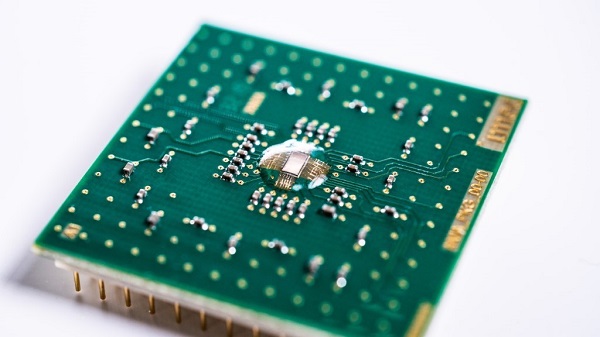

How New Hardware Can Drastically Reduce the Power Consumption of Artificial Intelligence

Currently, AI calculations are mainly performed on graphics processors (GPUs). These processors are not specially designed for this kind of calculation, but their architecture turned out to be well suited for it. Due to the wide availability of GPUs, neural networks took off. In recent years, processors have also been developed to specifically accelerate AI calculations (such as Google’s Tensor Processing Units – TPUs). These processors can perform more calculations per second than GPUs, while consuming the same amount of energy. Other systems, on the other hand, use FPGAs, which consume less energy but also calculate much less quickly. If you compare the ratio between calculation speed and energy consumption, the ASIC, a competitor of the FPGA, scores best. Figure 1 compares the speed and energy consumption of different components. The ratio between both, the energy performance, is expressed in TOPS/W (tera operations per second per watt, or the number of trillion calculations you can perform per joule of energy). However, in order to drastically increase energy efficiency from 1 TOPS/W to 10 000 TOPS/W, completely new technology is needed.
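To make the TOPS/W metric concrete, here is a minimal sketch of the arithmetic behind it. The function names and the example chip figures (50 tera-operations per second at 25 W) are hypothetical and chosen only for illustration; they do not describe any real device. Only the 1 TOPS/W and 10 000 TOPS/W endpoints come from the text above.

```python
TERA = 1e12  # one tera-operation = 10^12 operations


def tops_per_watt(ops_per_second: float, power_watts: float) -> float:
    """Energy performance: throughput in tera-ops per second divided by power in watts."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return (ops_per_second / TERA) / power_watts


def joules_per_operation(tops_w: float) -> float:
    """Energy spent on a single operation at a given TOPS/W figure.

    One watt is one joule per second, so TOPS/W equals tera-operations per joule.
    """
    if tops_w <= 0:
        raise ValueError("tops_w must be positive")
    return 1.0 / (tops_w * TERA)


if __name__ == "__main__":
    # Hypothetical accelerator, illustrative numbers only.
    print("example chip:", tops_per_watt(ops_per_second=50e12, power_watts=25.0), "TOPS/W")

    # The two endpoints quoted in the article.
    for efficiency in (1.0, 10_000.0):
        print(efficiency, "TOPS/W ->", joules_per_operation(efficiency), "J per operation")
```

Read this way, 1 TOPS/W corresponds to about one picojoule per operation, and 10 000 TOPS/W to about 0.1 femtojoule per operation, which gives a sense of the size of the gap the article says new technology would have to close.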

Maintaining Business Continuity With Proper IT Infrastructure And Security Tools A Challenge For IT Pros

Business continuity plans are integral to companies’ ability to withstand an unanticipated crisis. Although 86% of companies had a business continuity plan in place prior to COVID-19, 12% of respondents have minimal or no confidence at all in their organization’s plan to withstand an unanticipated crisis; only 35% of respondents feel very confident in their plan, according to the LogicMonitor study. IT decision makers also expressed overall reservations about their IT infrastructure’s resilience in the face of a crisis. Globally, only 36% of IT decision makers feel that their infrastructure is very prepared to withstand a crisis. And while a majority of respondents (53%) are at least somewhat prepared to take on an unexpected IT emergency, 11% feel they are minimally prepared or believe their infrastructure will collapse under pressure. 84% of global IT leaders are responsible for ensuring their customers’ digital experience, but nearly two-thirds (61%) do not have high confidence in their ability to do so, according to LogicMonitor’s study. The study further revealed that more than half (54%) of IT leaders experienced initial IT disruptions or outages with their existing software, productivity, or collaboration tools as a result of shifting to remote work in the first half of 2020.

Why blockchain-powered companies should target a niche audience

The main problem lies in the fact that blockchain technology has a vast array of potential applications. So it’s all too easy to have an overly broad value proposition. But this creates a lack of clarity and precision, which can, in turn, drive customers away. A generic ‘go-to’ marketing strategy for a tech company often fails to take into account how the adoption of new technology works. This is especially the case with blockchain-powered companies because they usually involve complex partnerships with financial institutions. When marketing a technology company, the focus on technology potential often distracts from a solid Minimum Viable Product (MVP), and so results in a generic go-to-market strategy. It’s a case of what I call the ‘CTO-led startup’, a scenario where the founder may have incredibly deep technological skill and knowledge, but forgets that they need to wear two hats: that of a tech builder, and that of a visionary CEO. Because blockchain is an ‘enabling technology’, the CEO of a blockchain-dependent business can potentially have a near-unlimited vision for the company. So it can feel counterintuitive to zero in on a singular laser focus when building the brand, because it superficially seems like a reductive strategy when compared to the broader vision.

Why Data Science Isn't an Exact Science

In fact, there are several reasons why data science isn't an exact science, some of which are described below. "When we're doing data science effectively, we're using statistics to model the real world, and it's not clear that the statistical models we develop accurately describe what's going on in the real world," said Ben Moseley, associate professor of operations research at Carnegie Mellon University's Tepper School of Business. "We might define some probability distribution, but it isn't even clear the world acts according to some probability distribution." ... If you lack some of the data you need, then the results will be inaccurate because the data doesn't accurately represent what you're trying to measure. You may be able to get the data from an external source but bear in mind that third-party data may also suffer from quality problems. A current example is COVID-19 data, which is recorded and reported differently by different sources. "If you don't give me good data, it doesn't matter how much of that data you give me. I'm never going to extract what you want out of it," said Moseley.

Artificial Intelligence Loses Some Of Its Edginess, But Is Poised To Take Off

“It appears that AI’s early adopter phase is ending; the market is now moving into the ‘early majority’ chapter of this maturing set of technologies,” write Beena Ammanath, David Jarvis and Susanne Hupfer, all with Deloitte, in their most recent analysis of the enterprise AI space. “Early-mover advantage may fade soon. As adoption becomes ubiquitous, AI-powered organizations may have to work harder to maintain an edge over their industry peers.” ... “This could mean that companies are using AI for IT-related applications such as analyzing IT infrastructure for anomalies, automating repetitive maintenance tasks, or guiding the work of technical support teams,” Ammanath and her co-authors note. Tellingly, business functions such as marketing, human resources, legal, and procurement ranked at the bottom of the list of AI-driven functions. An area that needs work is finding or preparing individuals to work with AI systems. Fewer than half of executives (45%) say they have “a high level of skill around integrating AI technology into their existing IT environments,” the survey shows.

DevOps engineers: Common misconceptions about the role

Rather than planning to evolve the role of DevOps engineer, identify those people within the IT team: those who’ve been in development, architecture, system engineering, or operations for a few years but who also have the soft skills needed to both pitch ideas and deliver on them. DevOps engineers should focus on problem-solving skills and on their ability to increase efficiency, save time, and automate manual processes – and above all, to care about those who use their deliverables. Workplace disruption has happened. Communication has proven to be sometimes challenging in our virtual world. Projects stalled by this disruption must be restarted. There is already a skills and gender gap within IT. The DevOps engineer must become one of what the DevOps Institute calls “the Humans of DevOps.” These are engineers who have people skills along with process and technology skills. Learning among team members as well as within the enterprise is paramount, and there has never been a better time to do it. Consider whether a DevOps engineer has the soft skills to facilitate the learning of team members and to continuously transform the team according to the needs of the business.

The Hacker Battle for Home Routers

Trend Micro says that four years after the Mirai botnet, the landscape is more competitive than ever. "Ordinary internet users have no idea that this war is happening inside their own homes and how it is affecting them, which makes this issue all the more concerning," according to a new Trend Micro report, which was co-authored by Stephen Hilt, Fernando Mercês, Mayra Rosario and David Sancho. Botnet code running on a device can diminish bandwidth. It could also mean connectivity problems. If security solutions flag a device as being part of a botnet, certain services may be inaccessible. At worst, if a router is being used as a proxy for crime, the owner of the device could be blamed. With many workers still working from home during the pandemic, there's also a worry about how such infections could potentially affect enterprises as well. Throughout 2019 and into this year, Trend Micro says, its telemetry detected a rising number of brute-force attempts to infect routers, which involve trying various combinations of login credentials. The company suspects the attempts came from other routers.

The 'magic' of open source: better, faster, cheaper -- and trustworthy

Although open-source software has been available for decades, governments at all levels are seeing the benefits of embracing it to better deliver services to the public, a new report states. Those benefits include improving efficiency, lowering costs, improving trust, increasing transparency and reducing vendor lock-in, according to “Building and Reusing Open Source Tools for Government,” released this month by think tank New America. What’s more, open source allows for collaboration so that government entities with common problems don’t have to reinvent the wheel to solve them. For instance, the United Kingdom’s Government Digital Service’s Notify communications management platform is available as open source, and the government of Canada adapted it last year to fit its own needs, such as modifying it to support multiple languages, the report states. In California, the Government Operations Agency tasked a team to rethink how residents access information online. One of the 20-odd prototypes developed was used by another team to stand up an unemployment insurance application within Covid19.ca.gov, a website created to provide pandemic information, said Angelica Quirarte

COVID-19 has disrupted cybersecurity, too – here's how businesses can decrease their risk

To keep enterprises running, businesses must secure remote access and collaboration services, step up anti-phishing efforts and strengthen business continuity. Businesses need to establish a culture of robust cyber hygiene, by providing resources to the workforce and managing access and monitoring activity on critical assets. ... Not all organisations understand their security posture and the effectiveness of security controls. As a result, they don’t make the right decisions or prioritise the correct actions, which leaves the enterprise open to attack and compromise. Securing end users, data and brand is the next priority. As the number of cybersecurity threats has increased, chief security officers and their teams are also benefiting from an increase in prioritisation. Budget rebalancing will be inevitable as other projects are put on hold to safeguard organisations and invest more in security. Cybersecurity strategists should now think longer term, about the security of their processes and architectures. They should prioritise, adopt and accelerate the execution of critical projects like Zero Trust, Software Defined Security, Secure Access Service Edge (SASE) and Identity and Access Management (IAM) as well as automation to improve the security of remote users, devices and data.

Quote for the day: