Diligent Engine: A Modern Cross-Platform Low-Level Graphics Library

The next-generation APIs, Direct3D12 by Microsoft and Vulkan by Khronos, are relatively new and have only started getting widespread adoption and support from hardware vendors, while Direct3D11 and OpenGL are still considered industry standard. New APIs can provide substantial performance and functional improvements, but may not be supported by older platforms. An application targeting a wide range of platforms has to support Direct3D11 and OpenGL. New APIs will not give any advantage when used with old paradigms. It is totally possible to add Direct3D12 support to an existing renderer by implementing the Direct3D11 interface through Direct3D12, but this will give zero benefits. Instead, new approaches and rendering architectures that leverage the flexibility provided by the next-generation APIs are expected to be developed. There exist at least four APIs (Direct3D11, Direct3D12, OpenGL/GLES, Vulkan, plus Apple's Metal for iOS and OS X platforms) that a cross-platform 3D application may need to support. Writing separate code paths for all APIs is clearly not an option for any real-world application, and the need for a cross-platform graphics abstraction layer is evident.

"The whole goal of IAM is to make it a whole lot simpler for the user, rather than having to log on and configure access on thousands of different applications," Johnson said. "And the person on the user- or employee-enablement side of the house is really thinking about, 'How can I implement all this permissioning in a way that makes the users' lives easier, not harder?'" Organizations can run into trouble with IAM, however, when the right hand doesn't know what the left is doing, Johnson added. While the CISO and cybersecurity team might operate under false assurance that person X does not have access to resource Y, for example, someone in employee enablement might have in fact granted that access -- unaware of the security implications at play. "Then, when something bad occurs, the board might say, 'How could this happen?'" Johnson said.

Microsoft finds Russia-backed attacks that exploit IoT devices

Devices compromised in this way acted as back doors to secured networks, allowing the attackers to freely scan those networks for further vulnerabilities, access additional systems, and gain more and more information. The attackers were also seen investigating administrative groups on compromised networks, in an attempt to gain still more access, as well as analyzing local subnet traffic for additional data. STRONTIUM, which has also been referred to as Fancy Bear, Pawn Storm, Sofacy and APT28, is thought to be behind a host of malicious cyber-activity undertaken on behalf of the Russian government, including the 2016 hack of the Democratic National Committee, attacks on the World Anti-Doping Agency, the targeting of journalists investigating the shoot-down of Malaysia Airlines Flight 17 over Ukraine, sending death threats to the wives of U.S. military personnel under a false flag and much more. According to an indictment released in July 2018 by the office of Special Counsel Robert Mueller, the architects of the STRONTIUM attacks are a group of Russian military officers, all of whom are wanted by the FBI in connection with those crimes.

Privacy Attacks on Machine Learning Models

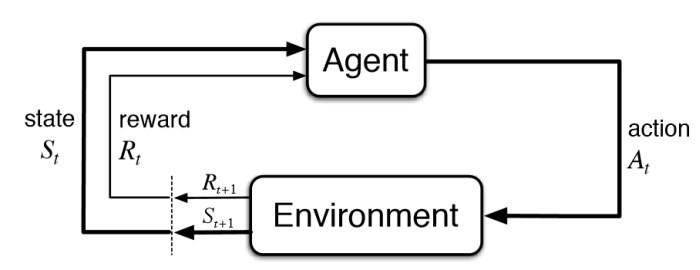

This type of attack is called a Membership Inference Attack (MIA), and it was created by Professor Reza Shokri, who has been working on several privacy attacks over the past four years. In his paper Membership Inference Attacks against Machine Learning Models, which won a prestigious privacy award, he outlines the attack method. First, adequate training data must be collected, either from the model itself via sequential queries of possible inputs or from public or private datasets that the attacker has access to. Then, the attacker builds several shadow models, which should mimic the target model (i.e., take similar inputs and produce similar outputs). These shadow models should be tuned for high precision and recall on samples of the training data that was collected. Note: the attack aims to have different training and testing splits for each shadow model, so you must have enough data to perform this step.
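A rough sketch of the shadow-model step may help make the data requirement concrete. It gives each shadow model its own train/test split and then labels every shadow-model output as "member" (1) or "non-member" (0). Everything here is illustrative: `make_shadow_splits`, `build_attack_dataset`, and the toy memorizing model are hypothetical names, not code from the paper, and a real attack would use a proper learner in place of the toy one.

```python
import random


def make_shadow_splits(records, num_shadow_models, seed=0):
    """Give each shadow model its own disjoint train ("in") / test ("out") split."""
    rng = random.Random(seed)
    splits = []
    for _ in range(num_shadow_models):
        shuffled = records[:]
        rng.shuffle(shuffled)
        half = len(shuffled) // 2
        splits.append((shuffled[:half], shuffled[half:]))
    return splits


def build_attack_dataset(splits, train_fn, predict_fn):
    """Pair each shadow-model output with a membership label (1 = in, 0 = out)."""
    attack_rows = []
    for train_set, test_set in splits:
        model = train_fn(train_set)
        for x, _label in train_set:
            attack_rows.append((predict_fn(model, x), 1))
        for x, _label in test_set:
            attack_rows.append((predict_fn(model, x), 0))
    return attack_rows


# Toy stand-in for a shadow model (an assumption for illustration only):
# it "memorizes" its training inputs and is more confident on them.
def train_memorizer(train_set):
    return {x: label for x, label in train_set}


def predict_confidence(model, x):
    return 1.0 if x in model else 0.5


records = [(i, i % 2) for i in range(20)]
splits = make_shadow_splits(records, num_shadow_models=3)
attack_rows = build_attack_dataset(splits, train_memorizer, predict_confidence)
```

The toy model exaggerates the confidence gap between seen and unseen records, which is the signal a membership classifier trained on `attack_rows` would try to pick up.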

Unsupervised learning explained

Think about how human children learn. As a parent or teacher you don’t need to show young children every breed of dog and cat there is to teach them to recognize dogs and cats. They can learn from a few examples, without a lot of explanation, and generalize on their own. Oh, they might mistakenly call a Chihuahua “Kitty” the first time they see one, but you can correct that relatively quickly. Children intuitively lump groups of things they see into classes. One goal of unsupervised learning is essentially to allow computers to develop the same ability. As Alex Graves and Kelly Clancy of DeepMind put it in their blog post, “Unsupervised learning: the curious pupil,” ... Mixture models assume that the sub-populations of the observations correspond to some probability distribution, commonly Gaussian distributions for numeric observations or categorical distributions for non-numeric data. Each sub-population may have its own distribution parameters, for example mean and variance for Gaussian distributions. Expectation maximization (EM) is one of the most popular techniques used to determine the parameters of a mixture with a given number of components.
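To make the EM idea concrete, here is a minimal sketch for a two-component, one-dimensional Gaussian mixture in plain Python. The function names (`gaussian_pdf`, `fit_gmm_1d`), the initialization at the data's minimum and maximum, and the synthetic data are all assumptions chosen for illustration; production libraries handle initialization and numerical stability more carefully.

```python
import math
import random


def gaussian_pdf(x, mean, var):
    """Density of a 1D Gaussian with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def fit_gmm_1d(data, iterations=50):
    """Fit a two-component 1D Gaussian mixture with expectation maximization."""
    n = len(data)
    overall_mean = sum(data) / n
    overall_var = sum((x - overall_mean) ** 2 for x in data) / n
    # Simple initialization (an assumption): means at the data extremes.
    means = [min(data), max(data)]
    variances = [overall_var, overall_var]
    weights = [0.5, 0.5]

    for _ in range(iterations):
        # E-step: responsibility of each component for each observation.
        resp = []
        for x in data:
            p = [weights[k] * gaussian_pdf(x, means[k], variances[k]) for k in range(2)]
            total = sum(p)
            resp.append([pk / total for pk in p])

        # M-step: re-estimate weight, mean, and variance of each component.
        for k in range(2):
            nk = sum(r[k] for r in resp)
            weights[k] = nk / n
            means[k] = sum(r[k] * x for r, x in zip(resp, data)) / nk
            var_k = sum(r[k] * (x - means[k]) ** 2 for r, x in zip(resp, data)) / nk
            variances[k] = max(var_k, 1e-6)  # guard against a collapsed component

    return weights, means, variances


# Synthetic data: two well-separated sub-populations (means 0 and 10).
rng = random.Random(42)
data = [rng.gauss(0, 1) for _ in range(200)] + [rng.gauss(10, 1) for _ in range(200)]
weights, means, variances = fit_gmm_1d(data)
```

With sub-populations this well separated, the estimated means should land close to 0 and 10 and the weights close to one half each; overlapping clusters would make the result far more sensitive to the starting point.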

Mobile-Only Bank Monzo Warns 480,000 Customers to Reset PINs

Monzo reports that PINs are supposed to be encrypted and stored in the bank's internal systems with limited access, but because a bug allowed the PINs to be stored in plaintext, more employees could have accessed them. The software bug has since been fixed, the company reports. "If we've contacted you to tell you that you've been affected, you should head to a cash machine to change your PIN to a new number as a precaution," according to the company's blog. So far, Monzo's investigation hasn't turned up any cases of fraud stemming from the unsecured PINs, and no one from outside the bank apparently accessed the data, according to the bank's statement. A spokesperson for the company did not immediately reply to Information Security Media Group's request for comment. The Guardian reports, however, that this security vulnerability has persisted for at least the last six months, and that the incident has been referred to the U.K. Information Commissioner's Office, which is Britain's watchdog agency for consumer privacy issues.

CIOs In Banking And Financial Firms Still Grappling With Cybersecurity

When it comes to cybersecurity awareness and practices, CIOs in the banking and financial services industry are much further along the maturity curve than their peers. Despite their awareness and concerns about online threats, a new study found that banking organizations are struggling to manage cybersecurity risks, with many CIOs acknowledging that they are still not doing enough to protect their systems, networks and data. The Synopsys report, based on a survey of CIOs and IT security practitioners from global financial services organizations conducted by Ponemon Institute, found that more than half of these firms have experienced theft of sensitive customer data or system failure and downtime because of insecure software or technology. Besides, the study shows, banking and financial firms’ CIOs are struggling to manage cybersecurity risk in their supply chain and are failing to assess their software for security vulnerabilities before release. “While the financial services industry is relatively mature in terms of its software security posture, organizations are grappling with a rapidly evolving technology landscape and facing increasingly sophisticated adversaries,” says Drew Kilbourne

Building Maintainable Software Systems

To keep the code clean and maintainable, one can use clean architecture principles. There’s a whole book on that by Robert Martin and the acronym SOLID to go with it, but here I’m going to simplify it as separating what the system does from how it does it, with the “what” not dependent on the “how.” What the system does at its core is the domain and the use cases that surround it. How the system does it relates to its infrastructure, presentation and configuration. ... A key point in determining which architecture, code organization, language, framework, etc. to use is the ability to justify your decisions. If you can’t justify the decision(s), then you are taking a chance that it will just work out. A better approach might be to first justify the decision to yourself, so that you can later justify it before others. A good way is to record those decisions, for example, by using Architecture Decision Records. Writing down your decision(s) helps you identify if it really makes sense, but it also helps those coming after you understand why the system is in its current state.
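One way to picture the "what" versus "how" split is a use case that depends only on an abstract interface, with the storage detail plugged in from outside. The sketch below is a made-up example, not taken from the article: `OrderRepository`, `PlaceOrder`, and `InMemoryOrderRepository` are hypothetical names.

```python
from abc import ABC, abstractmethod


# The "what": domain and use case. Nothing here knows about databases or HTTP.
class OrderRepository(ABC):
    """Port that the use case depends on; infrastructure implements it."""

    @abstractmethod
    def save(self, order_id: str, total: float) -> None:
        ...

    @abstractmethod
    def get_total(self, order_id: str) -> float:
        ...


class PlaceOrder:
    """Use case: validate an order, compute its total, and store it."""

    def __init__(self, repository: OrderRepository):
        self._repository = repository

    def execute(self, order_id: str, prices: list) -> float:
        if not prices:
            raise ValueError("an order needs at least one item")
        total = sum(prices)
        self._repository.save(order_id, total)
        return total


# The "how": one possible infrastructure detail, swappable without
# touching the use case above.
class InMemoryOrderRepository(OrderRepository):
    def __init__(self):
        self._totals = {}

    def save(self, order_id: str, total: float) -> None:
        self._totals[order_id] = total

    def get_total(self, order_id: str) -> float:
        return self._totals[order_id]


repository = InMemoryOrderRepository()
order_total = PlaceOrder(repository).execute("A1", [2.5, 7.5])
```

Swapping `InMemoryOrderRepository` for a database-backed class would not require any change to `PlaceOrder`, which is the dependency direction the paragraph describes.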

Best mobile device security policy for loss or theft

The first step to develop a reasonable response procedure for a stolen or lost work phone is to acknowledge what's at stake. Today's business smartphones and sometimes even tablets store a huge amount of information and access, so IT must address lost or stolen devices as serious threats. ... Another way IT can reduce the damage of a lost work phone is to ensure that users are on board with a mobile device security policy and established best practices. Users must know the exact steps to follow once the loss or theft occurs, such as how to report a lost device and how to help locate it. IT professionals may have listed or documented these steps in a manual, but they must communicate the process to users as well. Finally, IT must evaluate existing controls and processes for lost mobile devices. IT professionals can run tests for these policies on a one-off basis every year via a survey or in a one-on-one meeting

Facial recognition… coming to a supermarket near you

As with all algorithmic assessment, there is reasonable concern about bias. No algorithm is better than its dataset, and – simply put – there are more pictures of white people on the internet than there are of black people. “We have less data on dark-skinned people,” says Pantic. “Large databases of Caucasian people, not so large on Chinese and Indian, desperately bad on people of African descent.” Davis says there is an additional problem, that darker skin reflects less light, providing less information for the algorithms to work with. For these two reasons algorithms are more likely to correctly identify white people than black people. “That’s problematic for stop and search,” says Davis. Silkie Carlo, the director of the not-for-profit civil liberties organisation Big Brother Watch, describes one situation where a 14-year-old black schoolboy was “swooped by four officers, put up against a wall, fingerprinted, phone taken, before police realised the face recognition had got the wrong guy”. That said, the Facewatch facial-recognition system is, at least on white men under the highly controlled conditions of their office, unnervingly good. Nick Fisher, Facewatch’s CEO, showed me a demo version; he walked through a door and a wall-mounted camera in front of him took a photo of his face

Quote for the day:

"Leaders make decisions that create the future they desire." -- Mike Murdock
