A strategist’s guide to upskilling

Upskilling is not the same as reskilling, a term associated with short-term efforts undertaken for specific groups (for example, retraining steelworkers in air-conditioning repair or locksmithing). Reskilling doesn’t help much if there are too few well-paying jobs available for the retrained employees. An upskilling effort, by contrast, is a comprehensive initiative to convert applicable knowledge into productive results — not just to have people meet classroom requirements, but to have them move into new jobs and excel at them. It involves identifying the skills that will be most valuable in the future, the businesses that will need them, the people who need work and could plausibly gain those skills, and the training and technology-enabled learning that could help them — and then putting all these elements together. To someone accustomed to current forms of workforce training, in which resources are constrained and companies generally operate independently of one another, an upskilling initiative might seem massive and unaffordable.

Are CIOs truly prepared for the next economic downturn?

Moving responsibilities can take time and is disruptive to the overall operation of the IT organization. Multiply that by many individuals and one can quickly see how disruptive organizational changes can be to an organization's culture. Organizational morale drops, and added energy must be spent stabilizing the remaining organization to ensure consistent operations. To prepare for the next decline, organizations must consider a more flexible organizational model that accommodates cross-functional knowledge while breaking down the silos. Focus on the functions and parts of the organization that would remain should a dip occur. These should include the functions most directly tied to the intellectual property and critical operations of the company. Use contingent labor or outsourcing agreements to augment the other areas. Contingent labor makes it possible to scale up or down as demand changes. The second aspect is to avoid long-term commitments. Look out for hard commitments that would limit the flexibility to change contracts. Examples include contingent labor, outsourcing, licensing and spending thresholds. Negotiate contract options that provide flexibility should an economic decline hit. Vendors will be reluctant to agree to this language in their contracts. Consider a compromise where commitments are made but have out-clauses should negative economic conditions prevail.

What is the CCPA and why should you care?

With every new law, regulation or standard, there are the details that one must comply with, in addition to the repercussions of those details. That alone could fill a few articles. One area to consider is whether your insurance policies will protect you against CCPA-related issues. CCPA has a major effect in that area, and you need to get your insurance department involved on several fronts, including professional liability/E&O, directors and officers policies, cyber-insurance, and employment practices liability, among other areas. As part of your CCPA readiness assessment, ensure that all of the areas CCPA can affect are identified and brought into compliance. Like the state, CCPA is huge. Read the details and it’s easy to see that CCPA requires firms to make major infrastructure changes. CCPA mandates a significant number of new processes around data collection. It requires significant reengineering and rearchitecting of how personal data is handled. And like the mountain of the same name in California, CCPA is mammoth. If you think you are in scope for CCPA, take a few days to read everything you can on the topic. The more educated you are about the act, the better you can deal with it.

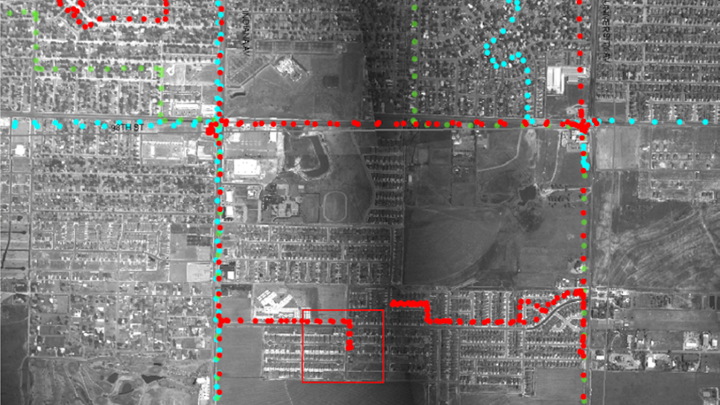

The Military-Style Surveillance Technology Being Tested in American Cities

When it comes to law enforcement, police are likewise free to use aerial surveillance without a warrant or special permission. Under current privacy law, these operations are just as legal as policing practices whereby an officer spots unlawful activity while walking or driving through a neighborhood. Say an officer sees marijuana plants through the open window of a house. Because the officer is in a public space—a road or sidewalk—he or she doesn’t need permission to see the illicit plants, or a warrant to photograph the scene. The only caveat to police aerial-surveillance activities is that they must employ publicly accessible technology, a term that has been defined, somewhat vaguely, in a small number of court cases. In two cases from the 1980s stemming from investigations in which police used cameras aboard helicopters to spot marijuana plants, the Supreme Court ruled that the law-enforcement agencies had not violated the Fourth Amendment, because both helicopters and commercial cameras are generally publicly available.

Humans consume and process information through reading, watching, and participating in discussions. In a similar manner, AI can be used to train computers in the “language of security” using techniques such as large-scale natural language processing (NLP). This greatly helps in harvesting cybersecurity information so that security analysts can work faster and more efficiently. AI and analytics enable a security orchestration platform to automatically block threats, correct problems, respond to attacks and automate the handling of low-level alerts based on prior examples or similar historical threats. But it doesn’t stop there: in addition to responding faster, AI can be used as a trusted advisor, capable of offering best-practice recommendations. For example, AI can be used to take automatic action when a risky user is detected, by verifying the user, suspending the user, or both. It can help reduce the time the access certification process takes by providing guidance on risk, taking automatic action on low-risk certifications and allowing security personnel to focus on high-risk access certifications.

A dismal industry: The unsustainable burden of cybersecurity

The way to improve security is to make company boards accountable, and they will pressure executives to take the right steps -- in a similar way that the Sarbanes-Oxley legislation made directors accountable for company financial reports. However, a lot of companies had trouble finding board members following Sarbanes-Oxley. This could happen again if board members are made accountable for cybersecurity breaches, which seems like an impossible task given the media coverage of larger, more disturbing attacks. Fear, uncertainty, and disaster is a traditional marketing tactic in the IT industry, and cybersecurity companies are happy to focus on the dire need for more spending on their wares and their services. The scare tactics have been effective, with cybersecurity budgets rising by around 15% annually, says Rothrock. But this takes money away from other IT projects -- projects that could improve revenues. It's an ever-larger black hole of money and human resources that cannot be invested in productivity.

Today's AI 'Revolution' Is More Of An Evolution

The brittleness of today’s systems means companies must also devote considerable resources towards understanding the situations under which they may fail and constructing the necessary cushioning to minimize the impact of such failures on the applications themselves. This can take the form of hand-coded rulesets for the most mission-critical decisions or combining deep learning and classical models, with special handling of cases in which the two diverge beyond a certain threshold. Despite these limitations, deep learning is finding no shortage of applications in the enterprise, automating many tasks that had historically been strongly resistant to codification due to their noisy data, complex patterns or multimedia source data. Yet these applications are typically located outside of the limelight. In contrast to the splashy research demonstrations playing video games or teaching robots how to learn to walk, production deployments today tend to be far more mundane and located in less visible places, from image filtering to chat bots to routing systems. Each deployment displaces human workers who once filled those jobs or reduces the need to hire new workers, but its introduction is typically little publicized and little noticed outside those it immediately affects.
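The "special handling" of diverging models mentioned above could look roughly like the following. This is only a minimal sketch, not something taken from the article: the function name `guarded_score`, the 0.2 threshold, and the three stand-in scorers are all hypothetical, chosen purely for illustration.

```python
from typing import Callable, Sequence, Tuple

Scorer = Callable[[Sequence[float]], float]


def guarded_score(
    features: Sequence[float],
    deep_model: Scorer,
    classical_model: Scorer,
    fallback_rule: Scorer,
    max_divergence: float = 0.2,  # hypothetical threshold, tuned per application
) -> Tuple[float, str]:
    """Return (score, source), using the deep model only when it roughly
    agrees with the classical model, and a hand-coded rule otherwise."""
    deep = deep_model(features)
    classical = classical_model(features)
    if abs(deep - classical) > max_divergence:
        # The two models disagree too much: defer to the hand-coded rule.
        return fallback_rule(features), "fallback"
    return deep, "deep"


# Stand-in scorers (assumptions for the example, not real models).
def toy_deep(x: Sequence[float]) -> float:
    return 0.9 if x[0] > 10 else 0.2


def toy_classical(x: Sequence[float]) -> float:
    return min(x[0] / 20, 1.0)


def toy_rule(x: Sequence[float]) -> float:
    return 0.0  # conservative default


print(guarded_score([4.0], toy_deep, toy_classical, toy_rule))
print(guarded_score([11.0], toy_deep, toy_classical, toy_rule))
```

In the first call the two stand-in models agree, so the deep model's score is used. In the second they differ by more than the threshold, so the conservative rule takes over.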

Whistleblower vindicated in Cisco cybersecurity case

The exploit Glenn, 42, discovered would have given an attacker full administrative access to the software that managed video feeds, letting them be monitored from a single location, the lawsuit says. It could also potentially allow unauthorized access to sensitive connected systems. That meant an intruder might have taken control of or bypassed physical security systems such as locks and fire alarms, which are regularly connected to camera systems. "An unauthorized user could effectively shut down an entire airport by taking control of all security cameras and turning them off," the suit says. Airports affected included Los Angeles International and Chicago's Midway, it says. "You could penetrate the entire system. And you could do that without any trace. And have complete backdoor access to the system whenever you wanted," said Michael Ronickher, an attorney representing Glenn with the firm Constantine Cannon LLP.

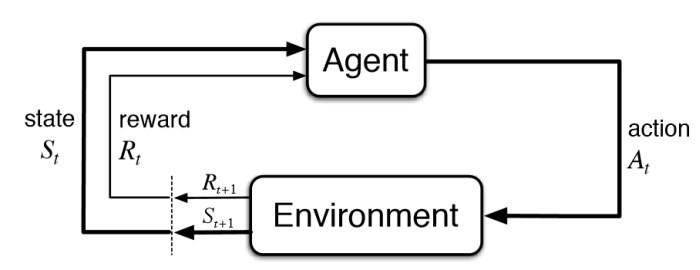

Trading Strategies Using Deep Reinforcement Learning

Reinforcement learning (RL) is about taking suitable action to maximize reward in a particular situation. Various software and machines employ it to find the best possible behavior or path to take in a specific situation. Reinforcement learning differs from supervised learning: in supervised learning, the training data comes with an answer key, so the model is trained on the correct answers, whereas in reinforcement learning there is no answer key, and the agent itself decides what to do to perform the given task. In the absence of a training dataset, it is bound to learn from its experience. RL refers to goal-oriented algorithms, that is, algorithms that seek to achieve a complex objective or to maximize the reward through a sequence of steps, such as obtaining the highest score in an Atari game. The elements that make up this approach are states, a reward function, actions, and an environment with which the agent interacts. Deep Reinforcement Learning is essentially the combination of deep neural networks and reinforcement learning. In this case, we speak of a special type called Q-Learning.
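To make the elements listed above (states, actions, a reward function, an environment) concrete, here is a minimal sketch of tabular Q-learning on a toy five-cell corridor. It is not the article's trading strategy and it is not deep RL: a deep variant would replace the table `q` with a neural network. The environment, the hyperparameters, and every name in the code are assumptions made up for illustration.

```python
import random

N_STATES = 5          # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)    # move left or move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1  # learning rate, discount, exploration


def step(state, action):
    """Hypothetical environment: reward 1.0 only on reaching the last cell."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit, occasionally explore.
            if rng.random() < EPSILON:
                a = rng.randrange(len(ACTIONS))
            else:
                best = max(q[state])
                a = rng.choice([i for i, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward
            # reward + discounted best value of the next state.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Best action for each non-terminal state according to the table."""
    return [ACTIONS[max(range(len(ACTIONS)), key=lambda i: row[i])] for row in q[:-1]]


q_table = train()
print(greedy_policy(q_table))
```

There is no answer key anywhere in this loop: the agent only sees the reward returned by `step` and learns from experience that moving right is the better action in every cell.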

How IoT is revolutionizing facilities data management

It is important to note, however, that data gathered by IoT can accumulate quickly, which can be a double-edged sword. The point of IoT is to be able to analyze all this accumulated data and generate meaningful insights from it. That’s what puts the “smart” in smart technologies. But at the unfathomable levels of data that IoT devices are expected to generate, this is easier said than done. This is both the challenge and the opportunity for facilities managers who are dealing more and more with IoT-enabled smart buildings and equipment within their operations. When IoT is used to collect facilities-related data (such as equipment outputs, electrical consumption, or asset function), large volumes and varieties of information are sent rapidly to central, Internet-based hubs. Without the proper infrastructure in place, it’s easy for these datasets to become siloed and difficult to utilize. Therefore, rethinking both how your data is stored and how it’s analyzed is a central requirement if you plan to implement IoT as part of your facilities management analytics strategy.

Quote for the day:

"Leadership is about carrying on when everyone else has given up" -- Gordon Tredgold
