There is still a long way to go before PR achieves artificial intelligence nirvana, Graham explains. Talking about Alexa and Google Home devices and autonomous cars, she continues: “These are employing machine learning to improve performance by analyzing and incorporating the data that they receive, but they aren’t exactly flawless and they employ many thousands of data scientists (along with millions of everyday users) to inform and refine the technology.” In today’s competitive market, communicators must continue investing in AI. However, Rausch advises organizations to stay safe by remaining operational without depending on the promises of artificial intelligence, and by taking advantage of the ways technology has already proven it can empower the PR process. Computers can act decisively within seconds when they find evidence that something is happening. That doesn’t mean we can fully trust automated insights. “Hesitation is a very human thing to do. Computers don’t hesitate…they are absolutely literal,” explains Rausch.

Beyond Limits: Rethinking the next generation of AI

Beyond Limits evolved out of work with NASA's Jet Propulsion Laboratory (JPL) on remote rovers used to explore places like the moon and Mars. Due to the communications lag in space, real-time control is virtually impossible. Any AI solution must not only be fully autonomous, it must also be able to train and, ideally, correct itself. When there is a problem it can’t correct, the bandwidth limitations on communication make full reprogramming problematic…but point patches are certainly possible. This resulted in an AI platform uniquely able to be updated and modified and, to a certain and initially limited extent, able to both teach itself and make corrections while disconnected. This unusual requirement has likely made the resulting AI nearly ideal for areas where the AI must often act independently of oversight – and/or where problems can escalate very rapidly – and where it must be able to deal with a diversity of both known and unknown issues.

Transforming Organisations, Changing The Nature Of Work

In the Fourth Industrial Revolution, the biggest challenge for every business will be ‘speed to capability’. In other words, how quickly can a company retool itself, both in terms of technology and skills, in order to perceive, analyse, understand, and respond to continuously changing customer behaviour and expectations? Cloud technologies can provide retooling agility, but that is not enough. Companies will need to reorganise work in order to obtain human agility as well. They need to be able to access and deploy a wide range of skills quickly and on demand. This means that we must forget the concept of a ‘job’. This concept is a relic of the First Industrial Revolution, where stability was critical for business success and people were deployed in stable organisational units. ... Human workers will be defined by their skills and not by job titles. In such a world, leadership needs to change radically too. Instead of filling a supervisory role that ensures processes are dutifully followed by all, the new leaders should be more like orchestra conductors: bringing together diverse talent and technology into a coherent whole that can deliver an excellent performance whatever score you put in front of them.

Experts Discuss Data Science and Machine Learning Best Practices

Surviving and thriving with data science and machine learning means not only having the right platforms, tools and skills, but identifying use cases and implementing processes that can deliver repeatable, scalable business value. The challenges are numerous, from selecting data sets and data platforms, to architecting and optimizing data pipelines, and model training and deployment. In response, new solutions have emerged to deliver key capabilities in areas including visualization, self-service and real-time analytics. Along with the rise of DataOps, greater collaboration and automation have been identified as key success factors. DBTA recently held a webinar with Bethann Noble, director of product marketing, machine learning, Cloudera; Gaurav Deshpande, VP of marketing, TigerGraph; and Will Davis, senior director of product marketing, Trifacta, who discussed new technologies and strategies for expanding data science and machine learning capabilities.

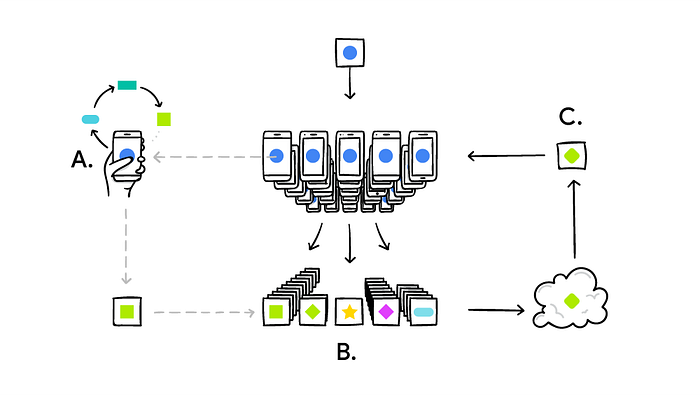

Visa says payment industry can move away from using passwords

For security-minded individuals, mobile device manufacturers have addressed concerns about stolen biometric information by storing and encrypting biometric templates — algorithmic representations instead of actual biometric attributes — locally on consumer-owned devices instead of the cloud. This ensures an individual is always in possession of their personal biometric data with the option to delete the data at any time. In addition, authentication accuracy is bolstered by liveness detection used by biometric scanners and software that can identify if a fingerprint is copied or a facial scan is of a mask. It’s been roughly six years since fingerprint sensors were integrated into consumer smartphones and in this short amount of time, consumers have grown increasingly comfortable with the approach. The need for quick and easy authentication will only increase with the growth of digital products and services, and remembering unique passwords for every internet-connected device or app is untenable.

How to Effectively Lead Remote IT teams

The best measure of team collaboration is how many times team members interact. If you are not in the same office, make sure you design special meetings for interaction. Encourage people to interact even when online. For example, tell George to call Stefan, because he has something interesting to share! Or ask your remote team to go for lunch together and give them a topic to talk about. It is very inspiring if the topic is not about work, but rather something of higher value, such as how we can stop poverty or why we ended up with the situation in Syria. The last point concerns live interaction and having shared fun experiences. If you doubt the investment of getting people in the same place, I am personally investing in inviting our partners to join us during our team building events. Each year we celebrate our company anniversary at the seaside – this year it will be our 13th and we will celebrate at one of the best Black Sea resorts – if you are one of our partners just reach Burgas on 13 July – the rest is on us!

Five Things to Understand About Digital Transformation

Today, leaders are talking about 3D printing, Internet of Things (IoT), robotics and similar advancements in digital technology, which can drastically impact organizations and industries. Many leaders, however, are missing a few key points, resulting in a failure to leverage the power of digital in their business, and becoming irrelevant instead. Digitalization is not just a technology trend. It is an overarching business transformation driven by a shift in the organizational mindset. Digitalization is not characterized by creating mobile apps and having a social media presence. It is an entirely new approach to business. Digitalization is not the same as digitization: digitization is the process of converting the physical and analogue into something that’s virtual and digital, whereas digitalization is leveraging technology to create an exceptional customer experience, become agile and unlock new value. We are in the Era of the Digital BLUR. Organizations leveraging the power of digital are playing by very different rules and are attacking the incumbents in practically every industry. How?

Scrum Team: What Is Your Inner Compass?

If you can create a vision for your Scrum team, and you find them all aligned in the right direction, your work has a reasonably good chance of success. Have you ever been part of a Scrum team that struggled to make any progress? Team members had plenty of talent, the required resources, and opportunities, but they just couldn't progress enough to create impact. If that scenario sounds familiar to you, there's a strong possibility that you will find this article valuable. Great vision precedes success, and a compelling vision provides the right direction to the team. If you are unaware of the team's vision, you can't act with conviction. If you haven't inspected the vision in light of your purpose, you can't even be sure that the team you are on is the appropriate one for you. If the team members have an agenda of working against each other, the team's spirit and drive get lost. Conversely, a team that embraces a vision is more focused, energized, and committed. It knows the reason for its existence. So, how do you inspect a team vision? How do you know whether it is worthy and compelling enough to drive people?

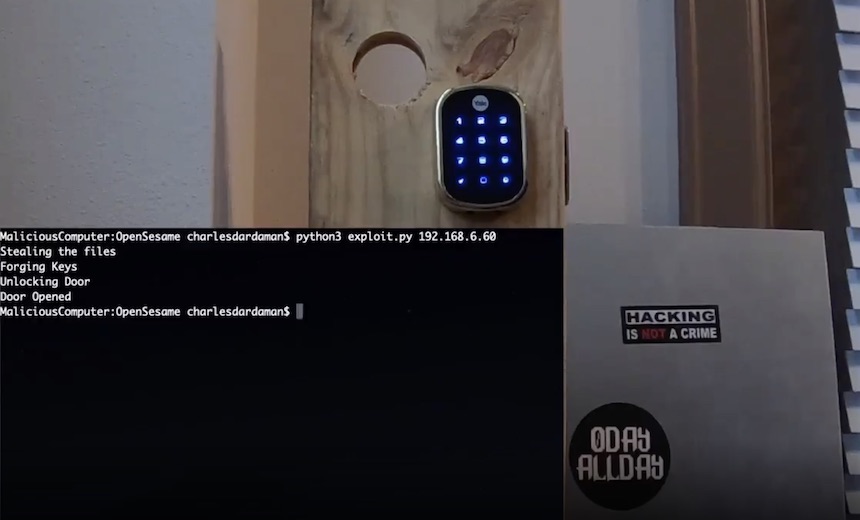

Automated Peril: Researchers Hack 'Smart Home' Hubs

They managed to retrieve a hardcoded SSH private key (CVE-2019-9560) in the controllers. By removing and then imaging an SD card from the controller, they were able to extract the private key, which was needed for root access. The private key was stored in a password-protected folder called /etc/dropbear and named dropbear_rsa_host_key. But they were still able to extract it despite it being password-protected. That SSH key isn't unique, either, so it could be reused across other controllers. In a short video, they show how it is possible to use the vulnerabilities to unlock a Yale lock linked to the controller. The researchers also discovered a local API authentication problem. They found a SHA1 password hash, and because the controller uses the pass-the-hash method rather than requiring the credentials to be input, they were able to construct a working authentication request. After that, they say it would be possible to send an authenticated request to unlock a lock.
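
As a rough illustration of why the pass-the-hash weakness described above matters, the sketch below models a hypothetical controller that stores a SHA-1 hash of the password and accepts that hash itself as the credential. The class and method names are invented for illustration and are not the vendor's API; the excerpt does not describe the real request format. The point is only that anyone who reads the stored hash can authenticate without ever learning the password.

```python
import hashlib
import hmac


def sha1_hex(password: str) -> str:
    """Return the hex-encoded SHA-1 digest of a password."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest()


class HashAcceptingController:
    """Hypothetical controller: the stored hash doubles as the login credential."""

    def __init__(self, password: str) -> None:
        # Only the hash is kept, which looks safe at first glance.
        self._stored_hash = sha1_hex(password)

    def stored_hash_on_disk(self) -> str:
        # Stands in for an attacker reading the hash from an imaged SD card.
        return self._stored_hash

    def authenticate(self, presented_hash: str) -> bool:
        # The client sends the hash, not the password, so the hash is
        # "password-equivalent": possessing it is enough to log in.
        return hmac.compare_digest(self._stored_hash, presented_hash)


# Assumed scenario: the owner sets a password the attacker never learns.
controller = HashAcceptingController("owner-secret-password")

# The attacker extracts the hash from storage and replays it directly.
stolen_hash = controller.stored_hash_on_disk()
attacker_authenticated = controller.authenticate(stolen_hash)
print("Attacker authenticated with stolen hash:", attacker_authenticated)
```

In a design like this, hashing the password protects nothing once storage is readable. Requiring the actual credentials to be entered and verified on the device, as the researchers' description implies, would mean a leaked hash alone is not enough to build a valid request.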

What CIOs and CTOs Can Learn From Smart Cities

"One of the hard things is determining the problems you may not know about. One of those things is one-way streets," said Sherwood. "We've been working with NTT to count the number of drivers, and based on historic data and analytics we can start predicting when we might have a wrong-way driver. We're able to count the number of vehicles to determine whether we need to invest more in signage, change the road layout, [or otherwise] solve the problem." Las Vegas is also using video analytics to improve traffic flow through dynamic signal timing. It's also counting pedestrian traffic and monitoring environmental factors, all for the purpose of better decision-making. ... "We have a variety of projects we're working on that focus on six key areas: public safety, education for workforce development and to help our population prepare for the future, economic development, health and wellness, social aspirations to try to close the digital divide, and mobility, which focuses on how we move people around the city more efficiently."

Quote for the day:

"Leaders are people who believe so passionately that they can seduce other people into sharing their dream." -- Warren G. Bennis