Researchers say 6G will stream human brain-caliber AI to wireless devices

The most relatable use case would enable wireless devices to remotely transfer, in real time, quantities of computational data comparable to what a human brain processes. As the researchers explain it, “terahertz frequencies will likely be the first wireless spectrum that can provide the real time computations needed for wireless remoting of human cognition.” Put another way, a wireless drone with limited on-board computing could be remotely guided by a server-sized AI as capable as a top human pilot, or a building could be assembled by machinery directed by computers far from the construction site. Some of that might sound familiar, as similar remote control concepts are already in the works for 5G, but with human operators. The key difference with 6G is that the computational heavy lifting would be done by human-class artificial intelligence, pushing vast amounts of observational and response data back and forth. By 2036, the researchers note, Moore’s law suggests that a computer with human brain-class computational power will be purchasable by end users for $1,000, the cost of a premium smartphone today; 6G would enable earlier access to this class of computer from anywhere.

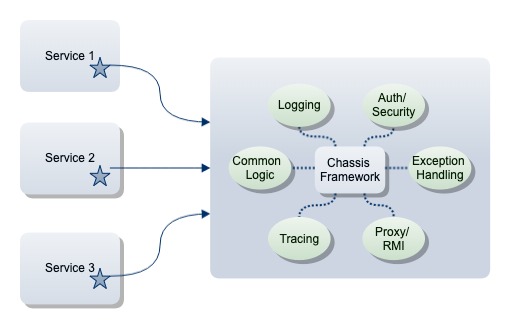

Cybersecurity from the Inside Out

Fundamentally, cybersecurity isn't about threats and vulnerabilities. It's about business risk. The interesting thing about business risk is that it sits at the core of the organization: it is the risk that results from company operations, whether that risk is legal, regulatory, competitive, or operational. This is why the outside-in approach to cybersecurity has been less than successful. Risk lives at the core of the organization, but cybersecurity strategy and spending have been dictated by factors outside the organization, with little, if any, business risk context. As a result, we see organizations devoting too many resources to defending against threats that aren't major business risks, and too few to those that are. To break this cycle of outside-in futility, security organizations need to change their approach so that it aligns with other enterprise risk management functions. That means turning outside-in on its head and taking an inside-out approach to cybersecurity. Inside-out security is not based on the external threat landscape; it's based on an enterprise risk model that defines and prioritizes the relative business risk presented by an organization's digital operations and initiatives.

Post-Hadoop Data and Analytics Head to the Cloud

Gartner analyst Adam Ronthal said that while there are some native Hadoop options available in public clouds like AWS, they may not be the best solution for many applications. "There's a fair bit of complexity that goes into managing a Hadoop cluster," he told InformationWeek. Non-Hadoop-based cloud solutions may look simpler to organizations that are evaluating data and analytics solutions. But that doesn't mean there's no place for Hadoop in the future. Ronthal said that Hadoop is experiencing a "market correction" rather than an existential crisis. There are use cases that Hadoop is really good at, he said. But a few years back, Hadoop was the rock star technology touted as the solution to every problem. "The promises out there 3, 4, or 5 years ago were that Hadoop was going to change the world and redefine how we did data management," he said. "That statement overpromised and underdelivered. What we are really seeing now is recognition of workloads that Hadoop is really good at, like the data science exploration workloads."

Artificial intelligence could revolutionize medical care. But don’t trust it to read your x-ray just yet

The algorithms learn as scientists feed them hundreds or thousands of images (of mammograms, for example), training the technology to recognize patterns faster and more accurately than a human could. “If I’m doing an MRI of a moving heart, I can have the computer predict where the heart’s going to be in the next fraction of a second and get a better picture instead of a blurry one,” says Krishna Kandarpa, a cardiovascular and interventional radiologist at the National Institute of Biomedical Imaging and Bioengineering in Bethesda, Maryland. Or AI might analyze computerized tomography head scans of suspected strokes, label those more likely to harbor a brain bleed, and put them on top of the pile for the radiologist to examine. An algorithm could help spot breast tumors in mammograms that a radiologist’s eyes might miss. But Eric Oermann, a neurosurgeon at Mount Sinai Hospital in New York City, has explored one downside of the algorithms: The signals they recognize can have less to do with disease than with other patient characteristics, the brand of MRI machine, or even how a scanner is angled.
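The triage idea in this paragraph amounts to reordering a radiologist's worklist. Below is a minimal sketch under stated assumptions. `Scan`, `bleed_probability` and `prioritize_worklist` are hypothetical names. The probabilities are assumed to come from some already-trained model, and the 0.5 cutoff is arbitrary. Scans at or above the cutoff move to the top of the pile, most likely bleed first, and every other scan keeps its arrival order.

```python
from typing import List, NamedTuple


class Scan(NamedTuple):
    scan_id: str
    bleed_probability: float  # hypothetical model output in [0, 1]


def prioritize_worklist(scans: List[Scan], threshold: float = 0.5) -> List[str]:
    """Return scan IDs with likely bleeds first, then the rest in arrival order."""
    # Flagged scans go to the top, most confident first. sorted() is stable,
    # so flagged scans with equal scores keep their arrival order.
    flagged = sorted(
        (s for s in scans if s.bleed_probability >= threshold),
        key=lambda s: s.bleed_probability,
        reverse=True,
    )
    # Unflagged scans are not dropped; they keep their original position order.
    rest = [s for s in scans if s.bleed_probability < threshold]
    return [s.scan_id for s in flagged + rest]
```

Nothing leaves the pile: the radiologist still reads every scan, only sooner for the flagged ones. Oermann's caveat applies directly. If the score partly tracks scanner brand or angle rather than the bleed itself, this ordering inherits that bias.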

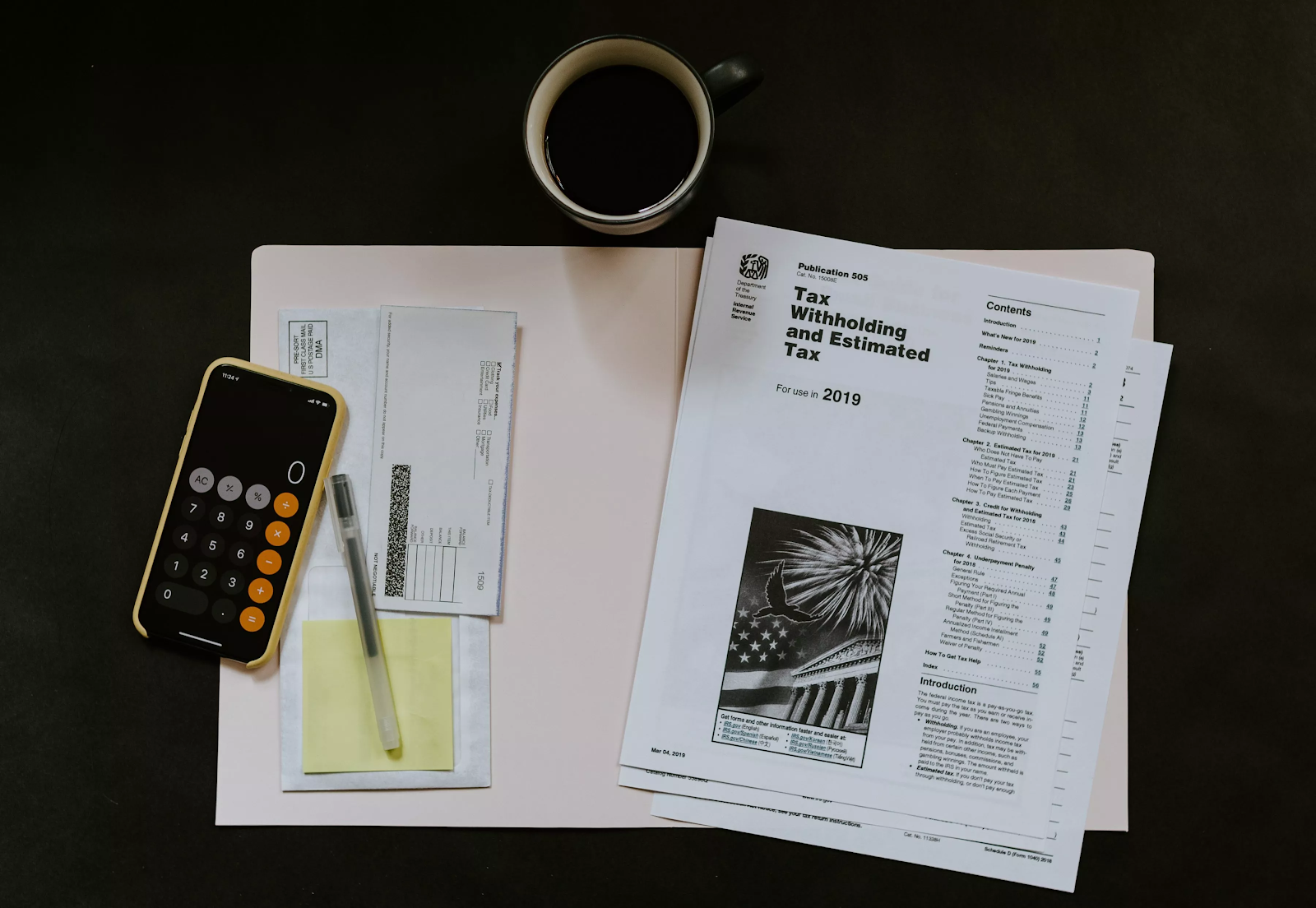

Cybersecurity Risk Assessment – Made Easy

A cybersecurity risk assessment is critical because cyber risks are part and parcel of any technology-oriented business. Factors such as lax cybersecurity policies and technology solutions with vulnerabilities expose an organization to security risks, and failing to manage those risks gives cybercriminals opportunities to launch massive cyberattacks. Fortunately, a cybersecurity risk assessment allows a business to detect existing risks. It also facilitates risk analysis and evaluation to identify the vulnerabilities with higher damage potential, so the business can identify suitable controls for addressing them. ... Cybersecurity risk assessments have many other benefits, all aimed at bolstering organizational security and hardening a company's defenses. Most importantly, they are how a company identifies the most suitable security controls for an optimal cybersecurity approach.
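One common way to carry out the risk analysis and evaluation step is to score each risk as likelihood × impact on a small ordinal scale and then rank the results. This sketch assumes 1 to 5 scales and a treatment threshold of 12. `Risk`, `prioritize`, the threshold and the example risks are all hypothetical; the article does not prescribe a particular scoring method.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) .. 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) .. 5 (severe)

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1..5 scales.
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks: List[Risk], treat_threshold: int = 12) -> List[Tuple[str, int, bool]]:
    """Rank risks by score (highest first) and flag those that need controls."""
    ranked = sorted(risks, key=lambda r: r.score, reverse=True)
    return [(r.name, r.score, r.score >= treat_threshold) for r in ranked]
```

The flagged entries at the top of the ranking are where control selection would start. The lower-scoring risks are still recorded, so they can be reviewed or explicitly accepted.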

Cybersecurity Accountability Spread Thin in the C-Suite

"CEOs are no longer looking at cyber-risk as a separate topic. More and more they have it embedded into their overall change programs and are beginning to make strategic decisions with cyber-risk in mind," says Tony Buffomante, global co-leader of cybersecurity services at KPMG. "It is no longer viewed as a standalone solution." That sounds good on the surface, but other recently released statistics offer a sobering counterbalance. A global survey of C-suite executives released last week by Nominet indicates these top executives have some serious gaps in knowledge about cybersecurity, with around 71% admitting they don't know enough about the main threats their organizations face. This corroborates a survey of CISOs conducted earlier this year by the firm, which indicates that the security knowledge and expertise of boards and C-level executives is still dangerously low. Approximately 70% of security executives agree that at least one cybersecurity specialist should sit on the board so that it can exercise appropriate due diligence on these issues.

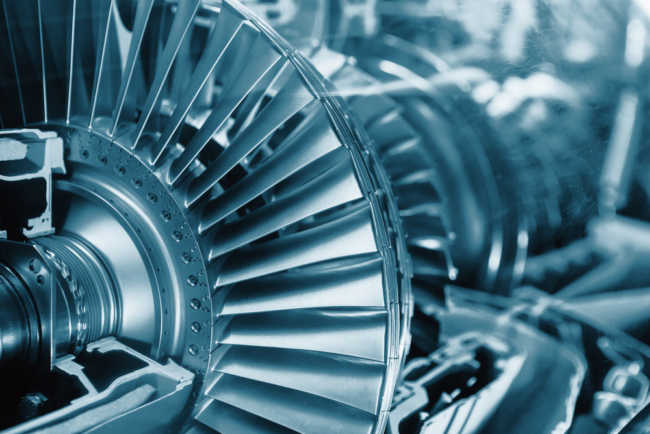

Industrial IoT as Practical Digital Transformation

To navigate this journey in the face of both uncertainty and hype, their company leaders chose a measured approach of “practical” digital transformation. To begin, they adopted IoT through an iterative process of incremental value testing. Notably, they selected goals for increasing internal effectiveness rather than fixating on new customer offerings. As a result, usage data from equipment inside customer facilities now makes the services team more cost-effective and reduces truck rolls. Furthermore, understanding how their machines are operated in the field enables product teams to proactively identify problem areas and continuously improve their equipment offerings. Both use cases are internal rather than directly customer-facing. Yet it’s their customers who ultimately benefit from the higher operational productivity these ever-smarter systems enable. Moving forward, machine utilization numbers will better prepare sales teams to guide customers toward the systems that best match their true capacity needs, as well as inform warranty management. Connected systems create opportunities for exceeding customer expectations at every turn.

Why the Cloud Data Diaspora Forces Businesses to Rethink Their Analytics Strategies

The single biggest thing is that it allows you to scale and manage workloads at a much finer grain of detail through auto-scaling capabilities provided by orchestration environments such as Kubernetes. More importantly, it allows you to manage your costs. One of the biggest advantages of a microservice-based architecture is that you can scale up and scale down at a much finer grain. For most on-premises, server-based, monolithic architectures, customers have to buy infrastructure for peak workload levels. We can scale those workloads up and down -- basically on the fly -- and give customers a lot more control over their infrastructure budget. It allows them to meet the needs of their customers when they need it. ... A lot of Internet of Things (IoT) implementations are geared toward collecting data at the sensor, transferring it to a central location to be processed, and then analyzing it all there. What we want to do is push the analytics problem out to the edge so that the analytic data feeds can be processed at the edge.
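The fine-grained auto-scaling described here is what the Kubernetes Horizontal Pod Autoscaler provides. Its documented core rule is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). Scaling is skipped when that ratio is within a tolerance of 1.0, which is 0.1 by default. The sketch below reimplements only that rule plus min/max bounds, as an illustration. The real controller also applies stabilization windows and other checks, and the function name and default bounds here are assumptions.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified Horizontal Pod Autoscaler rule (no stabilization windows)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count alone.
        desired = current_replicas
    else:
        # Scale proportionally, rounding up so the per-replica load
        # is projected to land at or below the target.
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))
```

For example, 4 replicas averaging 90% CPU against a 60% target scale to 6, and the same 4 replicas at 20% scale down to 2. A monolith bought for peak load would keep its peak capacity running either way.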

Brexit, GDPR and the flow of data: there could be one winner, and that’s the cyber criminal

Huseyin advocates technology. It may not come as a shock to learn that he advocates a product from nsKnox. He refers to Cooperative Cyber Security, which allows data to be shared across organisations and networks in a way that is completely cryptographic and shredded. “If you can take information with identifiers and put it into a form which is actually meaningless and shred it cryptographically and then distribute it to the partners of the data consortium who want to be able to access that information, you’re now pushing data around the world potentially without ever exposing the actual underlying information. So for example, we could take your name and we can shred it and we can distribute it to let’s say two banks in Europe and two banks in the UK. Each of those banks holds a piece of information and collectively that information makes up your name, but individually those pieces of information are just bits of encrypted binary data. So totally meaningless.” So those are two potential solutions: get close to the regulator and apply appropriate technology.
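The article does not describe how nsKnox actually shreds data. XOR-based n-of-n secret splitting is a simple, well-known technique that matches the behaviour Huseyin describes: every share on its own is uniformly random bytes, and only all the shares together rebuild the original. The sketch below is that swapped-in technique, not the vendor's method. The function names and the "one share per bank" framing are hypothetical.

```python
import secrets
from functools import reduce
from typing import List


def _xor(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_secret(secret: bytes, n: int) -> List[bytes]:
    """Split `secret` into n shares; all n are needed to rebuild it."""
    if n < 2:
        raise ValueError("need at least two shares")
    # n - 1 shares are pure random noise from a cryptographically secure source.
    random_shares = [secrets.token_bytes(len(secret)) for _ in range(n - 1)]
    # The last share is the secret XOR-ed with every random share.
    last_share = reduce(_xor, random_shares, secret)
    return random_shares + [last_share]


def combine_shares(shares: List[bytes]) -> bytes:
    """XOR all shares together; the random parts cancel, leaving the secret."""
    return reduce(_xor, shares)
```

For the two-banks-in-Europe, two-in-the-UK example, `split_secret("Jane Doe".encode("utf-8"), 4)` yields one share per bank. Any three shares together are still just random bytes. Unlike a threshold scheme such as Shamir's secret sharing, losing a single share here makes the name unrecoverable.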

How to Use Open Source Prometheus to Monitor Applications at Scale

Using Prometheus, we looked to monitor “generic” application metrics, including the throughput (TPS) and response times of the Kafka load generator (Kafka producer), Kafka consumer, and Cassandra client (which detects anomalies). Additionally, we wanted to monitor some application-specific metrics, including the number of rows returned for each Cassandra read and the number of anomalies detected. We also needed to monitor hardware metrics such as CPU for each of the AWS EC2 instances the application runs on, and to centralize monitoring by adding Kafka and Cassandra metrics there as well. To accomplish this, we began by creating a simple test pipeline with three methods (producer, consumer, and detector). We then used a counter metric named “prometheusTest_requests_total” to measure how many times each stage of the pipeline executes successfully, with a label called “stage” to tell the different stage counts apart (using “total” for the total pipeline count). We then used a second counter named “prometheusTest_anomalies_total” to count detected anomalies.
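The two counters described above can be sketched with the standard library alone. `LabeledCounter` below is a hypothetical stand-in that renders Prometheus's text exposition format. In a real service you would more likely use an official client library. For example, the Python client's `prometheus_client.Counter` takes a name, help text and label names, and is incremented with `.labels(stage="producer").inc()`. The metric names and the `stage`/`total` labels come from the text. The pipeline stages here are placeholders, and the 100.0 anomaly threshold is an assumption.

```python
from typing import Dict, Optional


class LabeledCounter:
    """Minimal stand-in for a Prometheus counter with at most one label."""

    def __init__(self, name: str, help_text: str, label: Optional[str] = None) -> None:
        self.name = name
        self.help_text = help_text
        self.label = label
        # One running total per label value; an unlabeled counter uses the key None.
        self.values: Dict[Optional[str], float] = {}
        if label is None:
            self.values[None] = 0.0

    def inc(self, label_value: Optional[str] = None, amount: float = 1.0) -> None:
        if amount < 0:
            raise ValueError("counters can only go up")
        if (label_value is None) != (self.label is None):
            raise ValueError("label value must match the counter's label definition")
        self.values[label_value] = self.values.get(label_value, 0.0) + amount

    def expose(self) -> str:
        """Render the counter in the Prometheus text exposition format."""
        lines = [f"# HELP {self.name} {self.help_text}", f"# TYPE {self.name} counter"]
        for label_value, count in self.values.items():
            if self.label is None:
                lines.append(f"{self.name} {count:g}")
            else:
                # Label values are not escaped here; real clients escape quotes etc.
                lines.append(f'{self.name}{{{self.label}="{label_value}"}} {count:g}')
        return "\n".join(lines)


REQUESTS = LabeledCounter(
    "prometheusTest_requests_total",
    "Successful executions per pipeline stage.",
    label="stage",
)
ANOMALIES = LabeledCounter("prometheusTest_anomalies_total", "Anomalies detected.")


def run_pipeline(reading: float, anomaly_threshold: float = 100.0) -> None:
    """Hypothetical producer -> consumer -> detector pipeline, counting each stage."""
    REQUESTS.inc("producer")   # placeholder for producing a Kafka record
    REQUESTS.inc("consumer")   # placeholder for consuming it
    if reading > anomaly_threshold:
        ANOMALIES.inc()        # placeholder for the detector flagging an anomaly
    REQUESTS.inc("detector")
    REQUESTS.inc("total")      # the whole pipeline ran successfully
```

Suppose `run_pipeline` is called on three readings, one of which exceeds the threshold. `REQUESTS.expose()` then includes `prometheusTest_requests_total{stage="total"} 3`, and `ANOMALIES.expose()` ends with `prometheusTest_anomalies_total 1`.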

Quote for the day:

"Good things come to people who wait, but better things come to those who go out and get them." -- Anonymous