A second 737 Max crash raises questions about airplane automation

Instead of improving safety, innovations can allow airlines “to run greater risks in search of increased performance.” A high-ranking Boeing official told the Wall Street Journal that “the company had decided against disclosing more details to cockpit crews due to concerns about inundating average pilots with too much information—and significantly more technical data—than they needed or could digest.” But what good is a safety system that’s too intricate for highly trained professional airline pilots to understand? Each new automatic device, Perrow wrote, might solve some problems only to introduce new, more subtle ones. Make the system too complicated, he said, and it’s inevitable that regulators will lose track of which pilots had been told what, and that some pilots will get confused about which procedures to follow. It didn’t, he said, make much sense to blame pilots in cases like this. Pilot error, he said, “is a convenient catch-all.” But it’s the complexity of the system that’s really to blame.

Observability in Testing with ElasTest

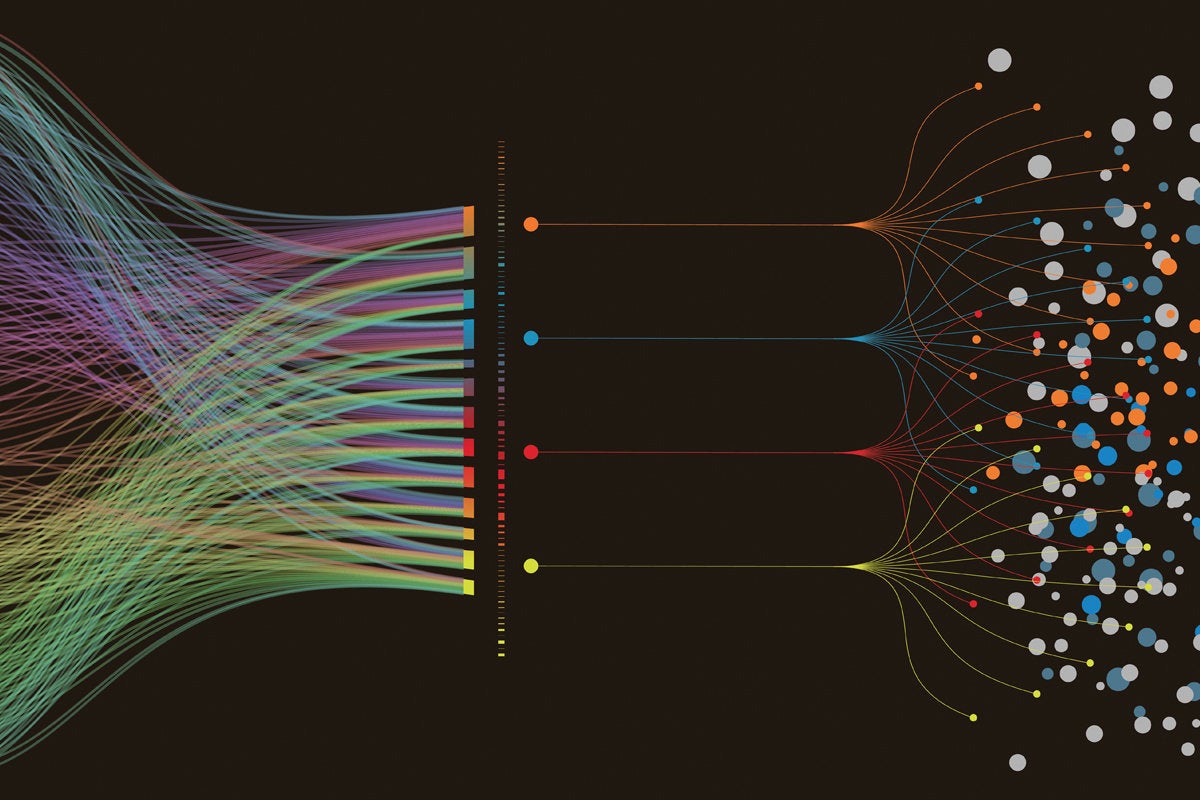

In a distributed system, finding the root cause of a bug is definitely not easy. When I face this problem, I’d like to be able to compare a successful execution with a failed one, side by side. This is difficult due to the nature of logs, which can vary between executions. A tool for this should be able to identify the common patterns and discard the irrelevant pieces of information that might vary between two different executions. The same comparison feature should be available for any other kind of metric. If I can compare the memory consumption or request latency of two consecutive executions, I might be able to understand why the second one failed. In general, we need more specific tools for this task. In a testing environment, we have more control over what information to store, but we need to make the tools aware of the testing process. This way, we can gather the necessary information during test runs and provide the appropriate abstractions to understand why a specific test failed.
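The side-by-side comparison described above can be sketched in a few lines. This is a minimal illustration, not how ElasTest works: the `VOLATILE_PATTERNS` list, the placeholder names, and the `compare_runs` helper are all assumptions made for the example. The idea is to mask the fields that normally change between runs (timestamps, ids, counters) and then diff what is left.

```python
import difflib
import re

# Hypothetical patterns for fields that usually differ between two runs.
# Order matters: timestamps and UUIDs are masked before bare numbers.
VOLATILE_PATTERNS = [
    (re.compile(r"\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}(?:[.,]\d+)?Z?"), "<TS>"),
    (re.compile(r"\b[0-9a-fA-F]{8}(?:-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}\b"), "<UUID>"),
    (re.compile(r"\b\d+\b"), "<N>"),
]


def normalize(line):
    """Replace run-specific values with placeholders so lines become comparable."""
    for pattern, placeholder in VOLATILE_PATTERNS:
        line = pattern.sub(placeholder, line)
    return line.strip()


def compare_runs(passed_log, failed_log):
    """Return the normalized lines that appear only in one of the two runs."""
    passed = [normalize(line) for line in passed_log.splitlines()]
    failed = [normalize(line) for line in failed_log.splitlines()]
    matcher = difflib.SequenceMatcher(a=passed, b=failed, autojunk=False)
    only_passed, only_failed = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            only_passed.extend(passed[i1:i2])
            only_failed.extend(failed[j1:j2])
    return only_passed, only_failed


if __name__ == "__main__":
    ok = ("2019-03-14 10:00:01 INFO request 17 started\n"
          "2019-03-14 10:00:02 INFO request 17 done in 120 ms")
    bad = ("2019-03-15 11:30:45 INFO request 42 started\n"
           "2019-03-15 11:30:49 ERROR request 42 timed out after 4000 ms")
    for side, lines in zip(("passed", "failed"), compare_runs(ok, bad)):
        for line in lines:
            print(f"{side}: {line}")
```

The same approach extends to metrics such as latency or memory, provided the two series are aligned by test step rather than by wall-clock time.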

The Effect of The Data Revolution in Enterprise Software Development

The advent of Big Data marks the rebirth of enterprise software. In the traditional model, it was a common practice for the entire enterprise to adapt around the software they used. In a recent survey, 80% of executives who used traditional software responded that the software negatively affected their company’s growth and that it wasn’t flexible enough to adapt to their changing needs. Meanwhile, Big Data made possible custom software development that works for the enterprise and moves with it. Focusing on short learning curves and intuitive interfaces, modern, data-driven software is empowering, not challenging. Enterprise software development now fuels innovation and boosts workplace productivity, preparing businesses for the digital age. Every business faces unique challenges and now, thanks to Big Data, dedicated enterprise software can address these challenges and modernize workflows. When bottlenecks are eliminated, all departments can collaborate seamlessly, utilize resources to the maximum, and stay agile across all project stages.

Security is a constant battle: Be the Secure Code Warrior in your organisation

The skills you learn using Secure Code Warrior cover over 150 types of vulnerabilities, including the OWASP Top 10 – this is very important to note. The Open Web Application Security Project is a global organisation that has done tremendous work over the years in formalising and promoting best practice in application security. Amongst other things, it provides the OWASP standard, a baseline of impartial, practical and cost-effective security best practice that can be used to establish a level of confidence in application security within an organisation, and to demonstrate a commitment to security to external parties (like customers and regulators). Alignment to the OWASP standard matters because the standard is non-vendor specific, industry recognised and widely respected, and because following it is more than learning a single tool. The Secure Code Warrior platform teaches real-world skills, in the coding language of your choice, that are applicable to every industry on every platform, be that Enterprise, Cloud, Mobile or IoT.

How WebAssembly will change the way you build web apps

WebAssembly isn't a finished product, and there is plenty of room for improvement in its support, features, and performance. "WebAssembly is young, what has landed in the browser right now is certainly not a fully mature product," says Williams, giving the example of garbage collection not being implemented in WASM as yet. "If you've started working with WebAssembly now, you're immediately going to go 'Why is my WASM so big and why is it not as fast as I want it to be?' It's because it's a new technology, but that being said it is designed to be faster than JavaScript and it is often faster, and, simply by function of it being an instruction set, your programs are going to be significantly smaller." Williams is bullish on the prospects for WebAssembly, and with many people looking to the future of the web as it celebrates its 30th birthday, she has high hopes for how WebAssembly might transform the platform.

Container management tools must overcome limitations

"A lot of different pieces are coming together and driving new opportunities, as well as creating new challenges [with container management]," said Stephen Elliot, program vice president at IDC. Much like virtualization, containers move application development one layer away from the computing infrastructure, so developers spend more time enhancing applications and less time configuring system resources. This approach fits with today's continuous development mantra, which stresses speed. Consequently, containers are becoming quite popular. In fact, the application containers market will create $4.3 billion in revenue in 2022, according to 451 Research's November 2018 Market Monitor study on application containers. When companies deploy containers, many find they require new container management tools to use them at scale. "Monitoring container platforms means managing numerous moving parts, from a security, performance, compliance and availability perspective," said Torsten Volk

Culture & Methods – the State of Practice in 2019

It is only with cultural change that the promise of the new ways of working will be achieved. Many organizations are embarking on “Digital Transformation”, and it is often the same organization that has undergone two or three “Agile Transformations” in the past without seeing the promised benefits. We believe that this is because the adoption is often implemented to “pay lip-service” to the idea, and is shallow rather than truly transformational. According to the State of Agile report, agile software development has become a late majority approach; almost all software is now built using iterative and incremental approaches, mainly using some derivation of Scrum or Kanban. The strong technical practices from eXtreme Programming are still the exception rather than the norm in early and late majority firms. As an industry, we may know how to build software better, but there isn’t the appetite to truly empower the teams and make the organisational changes needed to actually achieve the outcomes that Innovators have shown are possible.

Millions hit by major Android-based malware campaigns

After installation, the malware then connects with the command and control server to receive orders. These may range from opening a browser with a given URL to removing the app icon from the launcher. The app's three-pronged capabilities include showing ads, opening phishing pages, and exposing users to other applications. The attackers are also able to install a remote application from a designated server, allowing them to further infect users with malware at their discretion. "With the capabilities of showing out-of-scope ads, exposing the user to other applications, and opening a URL in a browser," the researchers said, "'SimBad' acts now as an Adware, but already has the infrastructure to evolve into a much larger threat." CheckPoint Research also outlined 'Operation Sheep' in a second report yesterday. This involves a group of Android apps harvesting contact information from users' phones on a mass scale without their consent. This malware has similarly been loaded in an SDK built for data analytics, and has been seen in up to 12 different Android apps to date.

Standardising The Data Scientist

With the data scientist categorised as an endangered species, it is difficult not only for organisations to recruit people into these roles, but also to ensure that they have the skills and expertise required for the job in hand. For James de Raeve of The Open Group, this can be attributed to a lack of standardisation in the profession. “There needs to be a common understanding around the skills and experiences of professional data scientists,” he says. “This way, there is guidance to help individuals grow their professional competence, while organisations gain support in building career models that meet the requirements of the business now and in the future.” In an effort to remedy this, The Open Group and IBM have come together to create a new certification programme for data science. Through the programme, individual data scientists can certify their skills via the process of peer review, thereby building trust in their profession. Similarly, at the business level, organisations can not only ensure that job opportunities are filled appropriately, but also develop individuals through their own, accredited programme.

Power BI Security

Power BI uses two primary repositories for storing and managing data: data that is uploaded by users is typically sent to Azure BLOB storage, and all metadata as well as artifacts for the system itself are stored in Azure SQL Database. The dotted line in the Back-End cluster image, above, marks the boundary between the only two components that are accessible by users (left of the dotted line) and the roles that are accessible only by the system. When an authenticated user connects to the Power BI Service, the connection and any request by the client are accepted and managed by the Gateway Role (eventually to be handled by Azure API Management), which then interacts on the user’s behalf with the rest of the Power BI Service. For example, when a client attempts to view a dashboard, the Gateway Role accepts that request, then separately sends a request to the Presentation Role to retrieve the data needed by the browser to render the dashboard.
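The request flow in that example can be pictured with a small sketch. This is purely illustrative and is not Power BI code: the class names mirror the roles named above, but `Request`, `view_dashboard`, `render_data`, and the in-memory dashboard store are hypothetical names chosen for the example. It shows only the pattern: the client talks to a gateway, and the gateway calls an internal role on the user's behalf.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    """Hypothetical client request; the fields are assumptions for this sketch."""
    user: str
    authenticated: bool
    dashboard_id: str


class PresentationRole:
    """Internal role: in this sketch only the gateway holds a reference to it."""

    def __init__(self, dashboards):
        self._dashboards = dashboards

    def render_data(self, dashboard_id):
        # Return the data the browser would need to render the dashboard.
        return self._dashboards[dashboard_id]


class GatewayRole:
    """User-facing role: accepts requests and forwards them on the user's behalf."""

    def __init__(self, presentation):
        self._presentation = presentation

    def view_dashboard(self, request):
        if not request.authenticated:
            raise PermissionError("user is not authenticated")
        # A separate, internal request to the Presentation Role.
        return self._presentation.render_data(request.dashboard_id)


if __name__ == "__main__":
    gateway = GatewayRole(PresentationRole({"sales": {"tiles": ["revenue"]}}))
    print(gateway.view_dashboard(Request("alice", True, "sales")))
```

Keeping the internal role behind the gateway means authentication is checked in one place before any request reaches the rest of the service.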

Quote for the day:

"Enthusiasm spells the difference between mediocrity and accomplishment." -- Norman Vincent Peale