The Neuroscience of Intelligence: An Interview with Richard Haier

Neuroscience approaches have already made intelligence research more mainstream and ready for inclusion in policy discussions. For example, the single most important factor that predicts school success, by far, is the student’s intelligence. Socioeconomic status, family resources, school and teacher quality all pale in comparison. The data showing this is overwhelming. Yet the word “intelligence” is virtually absent from discussions about education policy in the United States and many other countries. Even if intelligence is mostly influenced by genes, all that means for education is that each student comes to school with a different set of strengths for learning. Teachers all know this, and the common goal is to maximize each student’s potential. Attempts to create policies to do this without paying attention to what we know about intelligence have failed for decades, especially with respect to closing achievement gaps.

Why Your Data Could Be At Risk Without Decentralized Computing

According to industry experts, it will take decades for CPUs to be properly redesigned to resolve these issues and for the existing chips to be replaced. What should the world do to protect itself in the meantime? The answer is decentralization. This is a form of “trustless” computing that assumes from the start that no single machine can be relied upon, instead spreading information out across many different computers or “nodes.” In this framework, even though each individual entity has the potential to be compromised, the decentralized collective will always perform the work safely and correctly. Bitcoin, Ethereum, and blockchain technology in general offer notable examples of decentralized computing. Decentralization achieves two goals. First, no single machine is making all the decisions, so no single machine can unilaterally make bad decisions that affect individual users.
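The idea that no single node decides the outcome can be illustrated with a simple majority vote. This is only a minimal sketch under stated assumptions: the function name `majority_result` and the sample node answers are hypothetical, and real systems such as Bitcoin or Ethereum use far more involved consensus protocols than counting votes.

```python
from collections import Counter

def majority_result(results):
    """Return the value reported by a strict majority of nodes, or None.

    `results` holds one answer per node. A single compromised node
    cannot change the outcome as long as most nodes agree.
    """
    if not results:
        raise ValueError("at least one node result is required")
    value, count = Counter(results).most_common(1)[0]
    # Require more than half of the nodes to agree before trusting the value.
    if count * 2 <= len(results):
        return None
    return value

# Hypothetical example: three nodes run the same computation, one is compromised.
node_answers = [42, 42, 7]
print(majority_result(node_answers))
```

If no value is reported by more than half of the nodes, the sketch returns `None` rather than guessing, which mirrors the assumption that agreement, not any individual machine, is what gets trusted.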

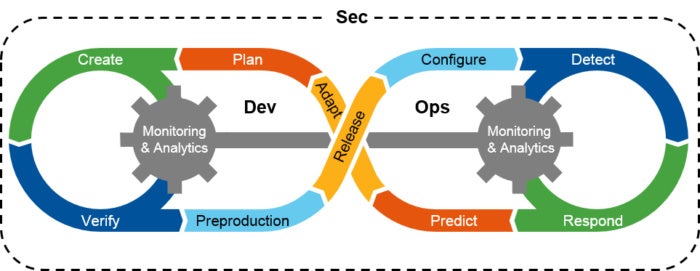

5 Ways SD-WAN Equips Enterprises to Improve Network Security

While the headlines have been alarming, overall industry trends are mixed. According to a recent report by the Ponemon Institute, the average cost of a data breach dropped by about 10 percent to $3.62 million in 2017. This is most likely tied to a reduction in the cost per record stolen, which declined from $158 in 2016 to $141 in 2017. However, the average size of data breaches rose 1.8 percent to more than 24,000 records. Clearly, this is not the time for enterprises to neglect network security. With the rapid expansion of the cloud, followed by what is likely to be an equally rapid move to the Internet of Things, wide-area infrastructure is in need of more flexible and robust protection. One of the most significant enhancements in this field is the advent of the software-defined wide-area network (SD-WAN). By abstracting regional connectivity on top of underlying hardware, enterprises can experience a number of benefits over traditional hardware-centric architectures.

6 things that prevent Blockchain from ruling the world

Generally speaking, the internet is fairly efficient when it comes to the transmission of data. The user requests information, and the server transmits back the piece of data requested with only a small amount of additional data required to get it there. The blockchain, however, needs multiple copies distributed across many nodes, both to preserve it and to prevent hacking. It therefore requires a large amount of storage – for example, Bitcoin’s blockchain was nearly 150GB in size as of last month, and it’s getting bigger all the time. Furthermore, transmitting so much data for the blockchain each time also consumes additional electricity, making the blockchain quite inefficient. At a time when efforts are being made to compress video further to decrease the data required for a download, blockchain’s bulkiness makes little sense.

Financial savings just the beginning for CIOs who understand code quality

It is not just about cutting costs, but improving development productivity and code quality. In the past year, NCOI has fed code into the Cast system four times, but is moving to a contract to enable it to do so monthly to keep up with more regular software updates. “This is so we can refresh our portal every month,” said van Eeden. Ironically, since using the Cast system NCOI has been using more developers because it is doing more development. “For our core ERP application, we have doubled software development productivity,” said van Eeden. “My output doubled, and the quality in the sense of downtime and the number of bugs also improved dramatically.” Van Eeden said he knows there have been no software outages since the company has been using the software intelligence platform, whereas previously it “didn’t even look at the robustness of systems”.

The role of trust in security: Building relationships with management and employees

In reality, security processes must constantly evolve based on discussions between the chief security officer, management, and employees in every business unit, accounting for emerging risks, new technologies, and recently uncovered vulnerabilities. Chief security officers need to first and foremost ensure that a solid understanding exists between the security team and the business units. No one can understand the nuances of a business unit’s capabilities, processes, assets, and services as well as the unit itself does, so it is tremendously important for a chief security officer to meet with each unit and develop a comprehensive security plan that is aligned at the corporate level. Only by gaining a more complete understanding of the unique needs of a business unit can a chief security officer develop safeguards that reduce risks.

Demystifying DynamoDB Streams

Building something even as simple as master-slave replication requires understanding several primitives. The first and foremost is ordering. Imagine two transactions that are meant to be applied sequentially to a database: the first writes a new entry and the second deletes it, so that ultimately no data persists. If ordering is not guaranteed, the delete could be processed first (with no effect) and the write applied afterward, leaving data incorrectly persisted in the database. The second core primitive is duplication: each transaction should appear exactly once within the log. Failure to enforce ordering or prevent duplication within a log can result in the master and slave becoming inconsistent. ... There are multiple strategies for checkpointing, each of which is a trade-off between specificity and throughput.
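The ordering and duplication primitives can be sketched in a few lines. This is an illustrative sketch, not the DynamoDB Streams API: the `LogEntry` and `Replica` classes, the `seq` field, and the `next_seq` checkpoint are all hypothetical names, and the sketch assumes every log entry carries a gap-free sequence number starting at 1.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class LogEntry:
    seq: int                     # assumed gap-free sequence number, starting at 1
    op: str                      # "put" or "delete"
    key: str
    value: Optional[str] = None

@dataclass
class Replica:
    data: dict = field(default_factory=dict)
    next_seq: int = 1                            # checkpoint: next entry to apply
    pending: dict = field(default_factory=dict)  # entries that arrived early

    def receive(self, entry: LogEntry) -> None:
        # Duplication: ignore entries already applied or already buffered.
        if entry.seq < self.next_seq or entry.seq in self.pending:
            return
        self.pending[entry.seq] = entry
        # Ordering: apply entries strictly in sequence, holding back any gaps.
        while self.next_seq in self.pending:
            self._apply(self.pending.pop(self.next_seq))
            self.next_seq += 1

    def _apply(self, entry: LogEntry) -> None:
        if entry.op == "put":
            self.data[entry.key] = entry.value
        elif entry.op == "delete":
            self.data.pop(entry.key, None)

# The delete arrives before the write, and the write is delivered twice.
replica = Replica()
replica.receive(LogEntry(seq=2, op="delete", key="user:1"))
replica.receive(LogEntry(seq=1, op="put", key="user:1", value="alice"))
replica.receive(LogEntry(seq=1, op="put", key="user:1", value="alice"))
print(replica.data)
```

Because the replica holds the early delete until the write has been applied, and drops the repeated write, it ends up with no entry for `user:1`, which matches the outcome the sequential transactions were supposed to produce.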

How AI Would Have Caught the Forever 21 Breach

As a first step, we must recognize that the days of the desktop/server model are over. In the case of Forever 21, the POS devices served as ground zero — not a laptop, a server, or even a corporate printer. In the age of the Internet of Things, we increasingly rely on "nontraditional" devices to optimize efficiency and boost productivity. But what constitutes a nontraditional device, and how do we look for it? Is it a device without a monitor? A device without a keyboard? Today a nontraditional device could be anything from heating and cooling systems to Internet-connected coffee machines to a rogue Raspberry Pi hidden underneath the floorboards. Protecting registered corporate devices is not enough — criminals will look for the weakest link. As our businesses grow in digital complexity, we have to monitor the entire infrastructure, including the physical network, virtual and cloud environments, and nontraditional IT, to ensure we can spot irregularities as they emerge.

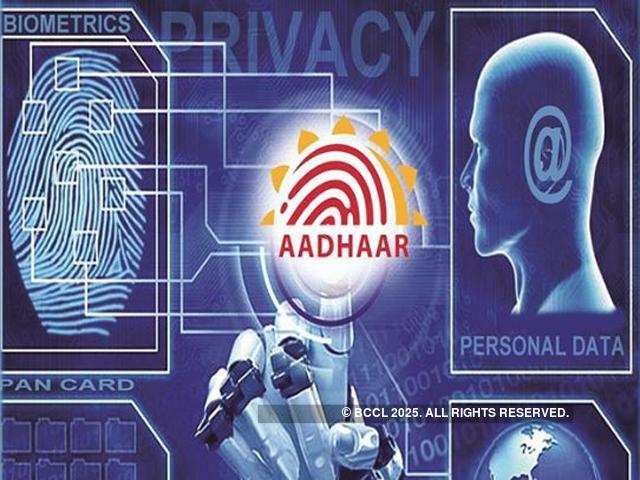

What is identity management? IAM definition, uses, and solutions

Compromised user credentials often serve as an entry point into an organization’s network and its information assets. Enterprises use identity management to safeguard their information assets against the rising threats of ransomware, criminal hacking, phishing and other malware attacks. Global ransomware damage costs alone are expected to exceed $5 billion this year, up 15 percent from 2016, Cybersecurity Ventures predicted. In many organizations, users sometimes have more access privileges than necessary. A robust IAM system can add an important layer of protection by ensuring a consistent application of user access rules and policies across an organization. Identity and access management systems can enhance business productivity. The systems’ central management capabilities can reduce the complexity and cost of safeguarding user credentials and access.
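Applying access rules consistently can be pictured as comparing what a user has been granted against what their role allows. The following is a minimal sketch under assumptions: the role names, permission strings, and the `excess_privileges` helper are hypothetical and do not correspond to any particular IAM product.

```python
# Hypothetical central policy: the permissions each role is allowed to hold.
ROLE_PERMISSIONS = {
    "analyst": {"read_reports"},
    "engineer": {"read_reports", "deploy_code"},
}

def excess_privileges(role, granted):
    """Return the permissions a user holds beyond what the role allows."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    return set(granted) - allowed

# An analyst who was also granted deployment rights holds more than necessary.
print(excess_privileges("analyst", {"read_reports", "deploy_code"}))
```

Keeping the policy in one place is the point of the sketch: every user is checked against the same rules, so excess access shows up as a simple set difference.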

Mental Models & Security: Thinking Like a Hacker

Although we cannot predict the future with great certainty, we often subconsciously make decisions based on probabilities. For example, when crossing the road, we believe there's a low risk of being hit by a car. The risk exists, but if you've looked for traffic, you are confident that you can cross. The Bayesian method says that one should consider all prior relevant probabilities and then incrementally update them as newer information arrives. This method is especially productive given the fundamentally nondeterministic world we experience: we must use both prior odds and new information to arrive at our best decisions. While there may not be a simple answer to what it means to "think like a hacker," the use of mental models to build frameworks of thought can help avoid the pitfalls associated with approaching every problem from the same angle.
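The incremental updating described above can be written as a short function that applies Bayes' rule once per new piece of evidence. This is a sketch with made-up numbers: the prior and the likelihoods for the "suspicious login" scenario are assumptions chosen for illustration, not measured values.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return P(hypothesis | evidence) using Bayes' rule.

    prior:               P(hypothesis) before seeing the evidence
    p_evidence_if_true:  P(evidence | hypothesis is true)
    p_evidence_if_false: P(evidence | hypothesis is false)
    """
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    if denominator == 0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return numerator / denominator

# Hypothetical scenario: how likely is it that a login is malicious?
belief = 0.01                              # assumed prior: 1% of logins are malicious
belief = bayes_update(belief, 0.60, 0.05)  # evidence 1: login from an unusual location
belief = bayes_update(belief, 0.50, 0.10)  # evidence 2: login at an unusual hour
print(f"Updated belief that the login is malicious: {belief:.3f}")
```

Each call uses the previous posterior as the new prior, so the belief shifts gradually as evidence accumulates rather than being recomputed from scratch.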

Quote for the day:

"It is easy to lead from the front when there are no obstacles before you, the true colors of a leader are exposed when placed under fire." -- Mark W. Boyer

![wireless network - internet of things [iot]](https://images.idgesg.net/images/article/2017/10/wireless_network_internet_of_things_iot_thinkstock_853701554-100739367-large.jpg)