India needs IoT security standards

The bigger issue is that most of the sectors using digital technologies or

integrating emerging technologies do not have a digital risk element defined

by the sectoral regulators to date. A national cyber strategy

highlighting the key risks to these sectors is still awaiting cabinet nod.

Hence, fighting ransomware, advanced persistent threats and malware is

becoming tough for the industry, which doesn’t have a framework to rely upon

to test or audit their systems. Earlier this year, the European body, ETSI,

released a consumer IoT security standard. The standard specifies high-level

security and data protection provisions for consumer IoT devices, which

include IoT gateways, base stations and hubs, smart cameras, TVs, smart

washing machines, wearables, health trackers, home automation systems,

connected gateways, refrigerators, door lock and window sensors. This standard

provides a minimum baseline for securing devices and sets provisions for

consumer IoT. It lays the foundation for setting strong password controls for

IoT devices by stating all consumer IoT device passwords must be unique. In

India, and across the world, we see consumer IoT devices getting sold with

universal default usernames and passwords.
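
As a rough illustration of the "unique password per device" provision, here is a minimal Python sketch of how a manufacturer might provision credentials at the factory. The function names, the serial numbers and the 16-character length are assumptions made for this example; they are not taken from the ETSI standard.

```python
import secrets
import string

# Characters used for generated passwords (an assumption for this sketch).
ALPHABET = string.ascii_letters + string.digits


def generate_device_password(length: int = 16) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def provision_devices(serial_numbers):
    """Give every device its own password so no credential is shared."""
    credentials = {}
    used = set()
    for serial in serial_numbers:
        password = generate_device_password()
        # Regenerate in the very unlikely event of a collision.
        while password in used:
            password = generate_device_password()
        used.add(password)
        credentials[serial] = password
    return credentials


# Hypothetical serial numbers, for illustration only.
credentials = provision_devices(["CAM-001", "CAM-002", "HUB-001"])
```

The point of the sketch is only that each unit leaves the factory with a different secret, instead of a universal default such as "admin/admin".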

How To Build Your Own Chatbot Using Deep Learning

Before jumping into the coding section, first, we need to understand some

design concepts. Since we are going to develop a deep learning based model, we

need data to train our model. But we are not going to gather or download any

large dataset since this is a simple chatbot. We can just create our own

dataset in order to train the model. To create this dataset, we need to

understand which intents we are going to train on. An “intent” is the

intention of the user interacting with a chatbot or the intention behind each

message that the chatbot receives from a particular user. Depending on the

domain for which you are developing a chatbot solution, these intents may vary from

one chatbot solution to another. Therefore it is important to understand the

right intents for your chatbot with relevance to the domain that you are going

to work with. Why do we need to define these intents? That’s a very

important point to understand. In order to answer questions, search a

domain knowledge base and perform various other tasks to continue

conversations with the user, your chatbot really needs to understand what the

users say or what they intend to do. That’s why your chatbot needs to

understand the intents behind user messages (to identify the user’s intention).
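
To make the idea of a hand-built intent dataset concrete, here is a minimal Python sketch. The intent tags, example patterns and responses are invented for illustration, and the layout (a tag, sample user messages, canned replies) is one common convention and an assumption here, not necessarily the structure the original article uses.

```python
# A tiny hand-written dataset: each intent has a tag, example user
# messages ("patterns") and possible replies ("responses").
# All values below are made up for this sketch.
INTENTS = [
    {
        "tag": "greeting",
        "patterns": ["Hi", "Hello", "Is anyone there?"],
        "responses": ["Hello!", "Hi there, how can I help?"],
    },
    {
        "tag": "goodbye",
        "patterns": ["Bye", "See you later"],
        "responses": ["Goodbye!", "Talk to you soon."],
    },
    {
        "tag": "thanks",
        "patterns": ["Thanks", "Thank you, that helped"],
        "responses": ["Happy to help!"],
    },
]


def build_training_pairs(intents):
    """Flatten the intents into parallel lists of sentences and labels."""
    sentences, labels = [], []
    for intent in intents:
        for pattern in intent["patterns"]:
            sentences.append(pattern)
            labels.append(intent["tag"])
    return sentences, labels


sentences, labels = build_training_pairs(INTENTS)
```

The `sentences` list is what a classifier would be trained on, and `labels` holds the intent each sentence should be mapped to. Once the model predicts a tag for a new message, the chatbot can pick one of that intent's responses.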

Finnish government rolls out digital projects to support SMEs

Finland’s plan aims to use the EDIH-model to strengthen the digital

capabilities of companies across the country. The central strategy is based

on helping Finnish SMEs to easily access, exploit and profit from

the more extensive use of business-impacting technologies such as artificial

intelligence (AI), robotics and high-performance computing. The design

construction of the Finnish NDIH plan means it can link directly into the

EU’s EDIH knowledge base and network. The prospective Finnish hubs will have

the built-in ability to accelerate the digital transformation not just in

Finland but also on a wider EU level. Earlier this year, the Finnish

government rolled out an open survey scheme to measure interest from the

country’s technology sector in these network projects. The survey was

designed to help determine which Finnish tech actors may be qualified to

join the hubs. The results from the information survey will amplify the

Finnish government ministry’s ability to develop a national framework.

Moreover, it will allow it to organise and complete an application round for

Finland’s candidates to the European-wide hub by year-end 2020.

Data Privacy is a Brand Reputation Issue, Not a Compliance Issue

First, establish a privacy policy that is legal and ethical. Then, communicate

the privacy and data utilization policy clearly without all the obfuscating

legalese. Demonstrate that consumer data will be collected only with honest and

clear consent, never coercive or deceptive consent. Next, create personal data pods for

consumers on their own devices, so they can download and control their data

easily in a usable, structured format instantly. Ask consumers if the brand

can use non-invasive Edge AI tools to provide them with learnings and key

insights into themselves that improve their lives without violating their

desires or values. Use those insights to generate predictions and

recommendations that enhance the consumer’s life, that serve their best

interests, and are delivered with emotionally intelligent humanity.

Continuously seek, and embrace, candid consumer feedback on the actual value

being created. Then, as real value is generated for the consumer using their

available data, and the consumer’s trust increases, open up an honest and

fiduciary dialogue about what additional data is needed in order to create

even more personalized value. As more and more data is accumulated from the

more trusting consumers, such as location, browsing, and other real-time data,

reach out to the hesitant consumers and share use cases.

What Are The Fastest Growing Cybersecurity Skills In 2021?

Cybersecurity professionals with cloud security skills can gain a $15,025

salary premium by capitalizing on strong market demand for their skills in

2021. DevOps and Application Development Security professionals can

expect to earn a $12,266 salary premium based on their unique, in-demand

skills. 413,687 job postings for Health Information Security professionals

were posted between October 2019 and September 2020, leading all skill areas in

demand. Cybersecurity's fastest-growing skill areas reflect the high

priority organizations place on building secure digital infrastructures that

can scale. Application Development Security and Cloud Security are far and

away the fastest-growing skill areas in cybersecurity, with projected

5-year growth of 164% and 115%, respectively. This underscores the shift from

retroactive security strategies to proactive security strategies. According to

the U.S. Bureau of Labor Statistics' Information Security Analyst's Outlook,

cybersecurity jobs are among the fastest-growing career areas nationally. The

BLS predicts cybersecurity jobs will grow 31% through 2029, over seven times

faster than the national average job growth of 4%.

Q&A on the Book Accelerating Software Quality

There are multiple challenges that can be divided across test automation

creation and maintenance, test reporting and analysis, test management,

testing trends, and debugging. Traditional tools are not efficient enough to

provide practitioners with reliable, robust, and maintainable test scripts.

Test automation scripts keep breaking when developers make code changes to

the apps, or when elements in the app aren’t properly recognized by the test

automation framework. Ongoing maintenance of scripts is also a challenge that

causes lots of false negatives and noise that drills into the CI pipeline. As

test execution scales, large test data accumulates and needs to be sliced and

diced to find the most relevant issues. Here, traditional tools are limited in

filtering big test data and providing data-driven smart decisions, trends,

root cause of failures, and more. Lastly, the time it takes to create a new

script that is code based, and debug it, is way too long to fit into today’s

aggressive timelines. Hence, AI and ML are in a great position to close this

gap by automatically generating test code and maintaining it through

self-healing methods.

How three simple steps will help you save on your cloud spending

The first crucial step is to get hold of the data in a format that will help

you understand how money is being spent. This will allow you to put guard

rails up to protect against overspending. To do this, you’ll need to utilise

tools that break down the usage data. Otherwise, you will just receive a bill

that looks like a long shopping list – that is almost impossible to

decipher. AWS does offer tools that can help here, but you can also add

third-party solutions like CloudCheckr to collate this information and play it

back to you, with actionable metrics. This will also alert you to any

underused resources that could be turned off. You will also want to ensure you

implement a consistent tagging strategy across all of your cloud estate. This

will allow you to break spending down to the individual customer, department,

team and even developer. It will also help you understand where you’re getting

a good return on investment and where you might need to be more efficient.

It’s essential that you go native in public cloud infrastructures – otherwise

you will miss key features that allow you to automate and orchestrate. For

example, you should be taking advantage of features that will automate

day-to-day management tasks, such as software updates and back-ups.
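
The tagging strategy described above can be sketched in a few lines of Python. This is a simplified illustration that assumes billing line items have already been exported as dictionaries carrying a cost and a set of tags; the field names, resources and figures are invented and do not reflect any provider's actual billing format.

```python
from collections import defaultdict


def spend_by_tag(line_items, tag_key):
    """Total the cost per value of one tag, e.g. per team or department."""
    totals = defaultdict(float)
    for item in line_items:
        # Resources missing the tag are grouped so they stay visible.
        owner = item.get("tags", {}).get(tag_key, "untagged")
        totals[owner] += item["cost"]
    return dict(totals)


# Invented sample data for this sketch.
line_items = [
    {"resource": "vm-1", "cost": 120.0,
     "tags": {"team": "payments", "department": "engineering"}},
    {"resource": "vm-2", "cost": 80.0,
     "tags": {"team": "search", "department": "engineering"}},
    {"resource": "bucket-1", "cost": 15.5, "tags": {"team": "payments"}},
    {"resource": "vm-3", "cost": 40.0, "tags": {}},
]

by_team = spend_by_tag(line_items, "team")
by_department = spend_by_tag(line_items, "department")
```

Grouping untagged resources under their own label is a deliberate choice in this sketch: a large "untagged" total is often the first sign that the tagging strategy is not being applied consistently.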

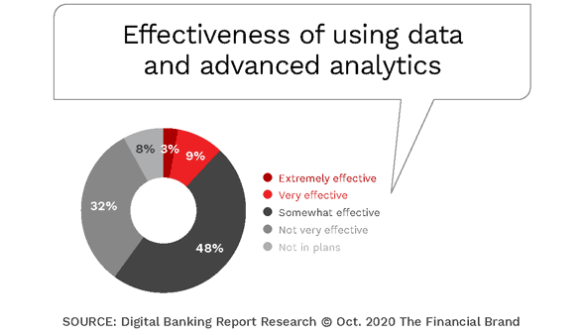

Banking Must Reduce AI Talent Gap for Digital Transformation Success

Using outside talent to improve productivity and results with data and AI

technology is definitely a valid path in the short run, especially as most

banks and credit unions play catch-up in the race to leverage data insights

across the organization. But, as mentioned, the ability to deploy the insights

to specific product and service needs requires the experience of those who

have known the business for years. Without the involvement of the users of the

data and AI results, technology is deployed in a vacuum. Marketing managers

need to understand the targeting and personalization methodology the models

create. Product managers must understand the changes to processes and

procedures that are recommended by AI technologies to ensure all of the

required steps are in place for compliance purposes. And, risk managers need

to feel comfortable that the assumptions made by models continue to reflect

the cybersecurity requirements of the organization. Upgrading the

skills of the consumers of data and AI solutions is usually done by training

existing employees of the organization. This is usually a much more efficient

and less disruptive process than trying to teach technology people the

internal intricacies of an organization.

Tackling multicloud deployments with intelligent cloud management

Looking at what the public cloud providers offer in terms of a control plane

for managing workloads, McQuire says Azure focuses on hybrid to edge and

on-premise workload management. “Google Cloud has been a latecomer in the

enterprise and going full board with cloud management,” he adds. For the

moment, says McQuire, the focus is on orchestration and control, security and

governance. He says this is a reflection of where IT organisations are in

terms of how they are using multiple public clouds. “There is a need to

understand the economic impact of moving workloads around,” he says. “Not only

do you have a need to understand the performance of different IT environments,

whether to deploy on-premise, in a private cloud or use one of the three

public clouds, there is also a requirement to understand the economics

associated with those decisions.” It is now not uncommon for IT

decision-makers to standardise on one public cloud for specialist workloads

such as artificial intelligence (AI), and use another for infrastructure as a

service (IaaS). McQuire adds: “Two years ago, companies started running

machine learning workloads with a single cloud provider. ..."

How Service Mesh Enables a Zero-Trust Network

To safeguard user data, organizations are adopting a zero-trust security model.

“Zero trust security means that we’re not trusting anybody,” said Palladino. “We

don’t trust our own services. We don’t trust our own team members.” Placing too

much trust in users, services and teams could cause a catastrophic failure. And,

“there is no bigger risk than thinking you are secure, while in reality, you are

not,” he said. ... Implementing these permissions is where a service mesh comes

in. The application teams often build security, yet it’s generally bad practice

to build your own cybersecurity. Security for microservices requires high

expertise, and standardizing connectivity between various microservices can

easily result in fragmented security implementations. Instead of building your

own security infrastructure, Palladino recommended utilizing service mesh. By

using service mesh as a control plane for microservices, platform architects can

define specific rules and attributes to generate an identity on a per-service

basis. Service mesh also removes the burden of networking from developers,

enabling them to focus more on their core logic. “Application teams become

consumers of connectivity, as opposed to the makers to this connectivity,”

Palladino noted.
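
The per-service rules mentioned above can be pictured with a small conceptual Python sketch of a default-deny traffic policy. It does not use any real service mesh API; the class names and service names are hypothetical, and in an actual mesh the service identities would typically be established with mutual TLS certificates instead of plain strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Permission for one service identity to call another."""
    source: str
    destination: str


class TrafficPolicy:
    """Zero-trust style policy: anything not explicitly allowed is denied."""

    def __init__(self, rules):
        self._allowed = {(rule.source, rule.destination) for rule in rules}

    def is_allowed(self, source: str, destination: str) -> bool:
        return (source, destination) in self._allowed


# Hypothetical services, for illustration only.
policy = TrafficPolicy([
    Rule(source="frontend", destination="orders"),
    Rule(source="orders", destination="payments"),
])

frontend_to_orders = policy.is_allowed("frontend", "orders")
frontend_to_payments = policy.is_allowed("frontend", "payments")
```

In this sketch the frontend may call the orders service, but it cannot reach payments directly because no rule grants that path. Keeping such rules in the control plane, and out of application code, is what lets application teams act as consumers of connectivity.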

Quote for the day:

"You can't use up creativity. The more you use, the more you have." -- Maya Angelou
